Improve (5) - Troubleshooting - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Fix underfitting first by verifying the model can reach target training-set performance before addressing generalization gaps.

Briefing

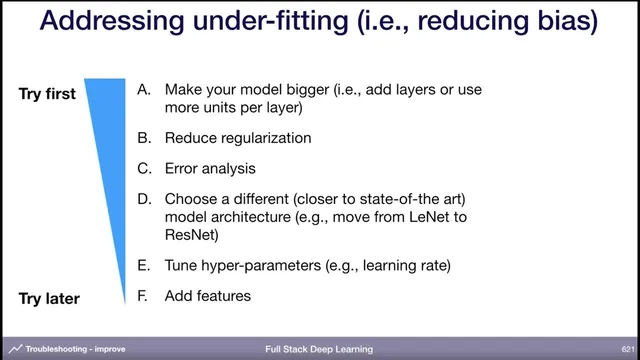

Model improvement starts with a simple priority order: fix underfitting first, then tackle overfitting, and only after both training and validation performance look acceptable should attention shift to distribution shift. The key practical rule is to make sure the model can reach the target training-set performance before worrying about generalization. If training error is far above the goal—an example given is 20% training error when the target is 1%—the fastest path is usually to increase model capacity by widening layers or adding depth. Other underfitting remedies include reducing regularization and swapping in a more modern architecture closer to state of the art, followed by tuning hyperparameters and adding features only when necessary (since deep nets are expected to learn useful representations themselves).

Once training error is in line with expectations, a large gap between training and validation error signals overfitting. The most effective remedy, when feasible, is adding more training data. When data collection isn’t possible, the toolbox expands: normalization layers such as batch normalization or layer normalization can act as regularizers; data augmentation (like flipping and rotating images) often improves robustness; and classic regularization methods such as dropout or L2 weight decay remain viable. The guidance also cautions against over-relying on early stopping as the main anti-overfitting strategy—early stopping can save compute when validation loss plateaus, but more principled approaches (data, augmentation, regularization, architecture changes) typically yield more “juice.”

A worked example ties these ideas together. With a tiny dataset of 10,000 examples, validation error can stay high even if training error improves. Increasing the dataset size (to something like 250,000) can reduce validation error, even if training error rises. From there, adding weight decay and data augmentation can bring both training and validation errors into a desirable range. At that point, the process shifts to a broad hyperparameter optimization sweep to fine-tune performance.

After the model is performing well on both training and validation, the next problem becomes distribution shift: the model may fail on systematic cases it rarely sees in training. The recommended approach is error analysis on the validation/test set, then categorizing mistakes to identify which gaps matter most. A concrete example uses pedestrian detection errors: some failures come from pedestrians being hard to see, some from windshield reflections, and some only from nighttime scenes. The prioritization logic is straightforward—estimate each error type’s contribution to total error and weigh the difficulty of intervention. Nighttime errors may be a small fraction of training mistakes but a large driver of test failures, so the priority becomes collecting more nighttime data; if that’s impossible, synthetic darkening or nighttime simulation, or domain adaptation, can help.

Domain adaptation is framed as a way to transfer from a source distribution with labels to a target distribution with limited or no labels, often using unlabeled target data. Supervised domain adaptation can involve fine-tuning a pretrained model or mixing in labeled target data; unsupervised domain adaptation uses methods such as correlation alignment, domain confusion, or cycle-based techniques. Finally, there’s a meta-step: periodically rebalance validation and test splits if validation performance looks suspiciously better than the held-out test set—an issue that can arise after extensive hyperparameter tuning on the same validation set. The session closes with practical Q&A: fixed random seeds help reproducibility (especially in reinforcement learning), uncertainty and “hard examples” can guide labeling, and the right objective is best verified by tracking the metric that actually matters in deployment.

Cornell Notes

Improvement follows a bias-variance-style priority: first eliminate underfitting by ensuring the model can reach the target training performance; then address overfitting when training is good but validation lags; finally handle distribution shift once both training and validation are in a good range. Underfitting is often fixed by increasing capacity (more layers or wider layers), reducing regularization, adopting a stronger architecture (e.g., ResNet), and tuning hyperparameters. Overfitting is best reduced with more training data; alternatives include normalization (batch/layer norm), data augmentation, and regularization like dropout or L2 weight decay. Distribution shift is tackled through systematic error analysis—categorize failures (e.g., hard-to-see pedestrians, reflections, nighttime) and prioritize interventions based on contribution and feasibility. Domain adaptation helps when target labels are scarce, using labeled source data plus unlabeled or limited labeled target data.

How should a team decide what to fix first when a model’s performance is poor?

What are the main levers for underfitting, and why is model capacity the first choice?

When training error is low but validation error is high, what’s the recommended overfitting playbook?

How does the error-analysis workflow prioritize which data to collect or synthesize?

What is domain adaptation, and when does it make sense?

Why might validation and test splits need rebalancing, and how is that detected?

Review Questions

- If training error is far above the target, what specific diagnostic step prevents wasting time on overfitting fixes?

- In the pedestrian-error example, what evidence justifies prioritizing nighttime data over reflections or hard-to-see pedestrians?

- What signals suggest that validation performance has become unreliable due to repeated tuning, and what corrective action is recommended?

Key Points

- 1

Fix underfitting first by verifying the model can reach target training-set performance before addressing generalization gaps.

- 2

Increase capacity (wider layers or deeper networks) and reduce regularization when training error is high relative to the goal.

- 3

Treat a large training–validation gap as overfitting and prioritize more training data; use normalization, data augmentation, dropout, or L2 weight decay when data is limited.

- 4

Use systematic error analysis to categorize failures, estimate each category’s contribution, and prioritize interventions based on both impact and feasibility.

- 5

Handle distribution shift after training/validation are aligned by collecting targeted data, synthesizing data, or applying domain adaptation when target labels are scarce.

- 6

Periodically check whether validation error is unrealistically better than held-out test error after extensive tuning, and reshuffle/resample splits if needed.