Investigating Model Based RL for Continuous Control | Alex Botev | 2018 Summer Intern Open House

Based on OpenAI's video on YouTube.

A dynamics model can look excellent for one-step prediction yet still produce unusable rollouts because small errors grow over time.

Briefing

In model-based reinforcement learning for continuous control, a learned dynamics model can look nearly perfect at one-step prediction yet still fail badly when it is rolled out over longer horizons. That gap, where tiny single-step errors compound into large long-horizon drift, helps explain why many model-based methods lag behind model-free approaches in practice, even when the dynamics model appears accurate.

Alexander Botev frames the core tradeoff around what “learning a model” really buys. In model-free RL, agents learn a policy and/or value function from interaction data, relying on reward signals and bootstrapping. In model-based RL, agents also learn an internal dynamics model that predicts how the environment evolves from observations and actions. The ideal case is powerful: with a perfect model, tasks could be solved by simulation without further environment interaction. In reality, learning the model introduces costs and failure modes—especially when planning requires unrolling predictions far into the future.

Botev illustrates the compounding-error problem using a simple torque-controlled ball environment. A one-step dynamics model predicts the next state with extremely small error, so its predictions look almost indistinguishable from the real system. But when the model is unrolled over multiple steps, with each prediction fed back in as the next input and no correction from the real environment, trajectories diverge substantially from reality. Policies trained on these long-horizon imagined rollouts behave suboptimally and can even fail outright in the real environment.
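
The gap between one-step accuracy and rollout accuracy can be reproduced with a toy example. The sketch below is not the environment from the talk: the point-mass dynamics, the friction values, and the names `true_step`, `model_step`, and `compare` are all assumptions chosen for illustration. The "learned" model is simply the true dynamics with a slightly wrong friction coefficient.

```python
# Hypothetical toy system: a torque-driven point mass with friction.
# None of these names or constants come from the talk.

def true_step(state, torque, dt=0.05, friction=0.10):
    """Advance the real system by one Euler step."""
    pos, vel = state
    vel = vel + dt * (torque - friction * vel)
    pos = pos + dt * vel
    return (pos, vel)


def model_step(state, torque, dt=0.05, friction=0.12):
    """Stand-in for a learned model: same structure, slightly wrong friction."""
    return true_step(state, torque, dt, friction)


def error(a, b):
    """Largest absolute difference between two states."""
    return max(abs(x - y) for x, y in zip(a, b))


def compare(horizon, torque=1.0):
    """Return (worst one-step error, final open-loop rollout error)."""
    real = (0.0, 1.0)
    imagined = (0.0, 1.0)
    worst_one_step = 0.0
    rollout_error = 0.0
    for _ in range(horizon):
        next_real = true_step(real, torque)
        # One-step prediction: the model is always fed the true state.
        worst_one_step = max(worst_one_step,
                             error(model_step(real, torque), next_real))
        # Open-loop rollout: the model is fed its own previous prediction.
        imagined = model_step(imagined, torque)
        real = next_real
        rollout_error = error(imagined, real)
    return worst_one_step, rollout_error


if __name__ == "__main__":
    one_step, rollout = compare(horizon=200)
    print(f"worst one-step error: {one_step:.4f}")
    print(f"rollout error after 200 steps: {rollout:.4f}")
```

In this toy setting the one-step error stays small at every step, while the open-loop error after 200 steps is orders of magnitude larger, because each prediction inherits the error of the one before it.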

Several practical difficulties follow. Not all environment details matter for a given task, so model capacity can be wasted on irrelevant factors. More importantly, long-horizon rollouts amplify errors, and uncertainty estimation for flexible neural dynamics models remains difficult. Finally, sample efficiency is not guaranteed: learning an accurate dynamics model may require so much data that it cancels out any gains from planning, leaving model-free methods competitive or better in many settings.

To address these issues, Botev’s internship research focuses on “value expansion” for actor-critic architectures. After learning a dynamics model, the method uses it to unroll trajectories starting from offline data and generate multi-step on-policy value targets. Instead of relying only on standard bootstrapped targets, which can be unstable, value expansion produces training targets over longer horizons. Because the imagined actions come from the current policy, this reduces the need for corrections such as importance sampling.
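
A minimal sketch of how such a multi-step target might be computed is shown below. It assumes the usual form of an H-step target: discounted imagined rewards followed by a bootstrapped critic value at the final imagined state. The function name `value_expansion_target` and the callables `policy`, `model`, `reward_fn`, and `q_fn` are hypothetical placeholders, not the interfaces used in the work described here.

```python
def value_expansion_target(state, policy, model, reward_fn, q_fn,
                           horizon, gamma=0.99):
    """H-step value target from an imagined on-policy rollout.

    state     -- a starting state, e.g. sampled from stored data
    policy    -- callable: state -> action (the current actor)
    model     -- callable: (state, action) -> next state (learned dynamics)
    reward_fn -- callable: (state, action) -> reward (assumed known or learned)
    q_fn      -- callable: (state, action) -> value (the critic)
    """
    target = 0.0
    discount = 1.0
    for _ in range(horizon):
        action = policy(state)          # on-policy action in imagination
        target += discount * reward_fn(state, action)
        state = model(state, action)    # feed the prediction forward
        discount *= gamma
    # Bootstrap from the critic at the last imagined state.
    return target + discount * q_fn(state, policy(state))


if __name__ == "__main__":
    # Toy scalar problem, purely for illustration.
    target = value_expansion_target(
        state=1.0,
        policy=lambda s: -0.5 * s,
        model=lambda s, a: s + a,
        reward_fn=lambda s, a: -(s ** 2),
        q_fn=lambda s, a: 0.0,
        horizon=5,
    )
    print(target)
```

With `horizon=0` the function reduces to the critic's own estimate, which makes the contribution of each extra imagined step easy to inspect.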

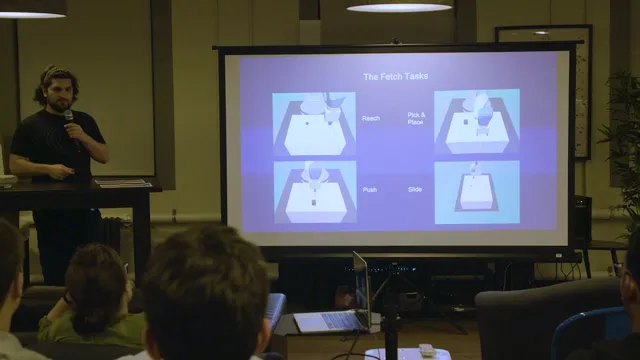

Results come from standard Fetch robotics tasks in simulation: reaching, pick-and-place, pushing, and a harder sliding-table scenario. Against a carefully tuned deterministic policy gradient baseline (with replay and double Q-learning), value expansion with learned dynamics delivers up to five times better sample efficiency in some cases, though performance can be fragile to hyperparameters. Across experiments, three patterns stood out: a single dynamics model was insufficient; dynamics models had to be trained with multi-step losses, which improve consistency when predictions are fed back into the model; and the benefits of value expansion appeared only when expanding beyond one or two steps.
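
To make the idea of a multi-step loss concrete, the sketch below scores a model on an open-loop rollout against a recorded trajectory, rather than on single transitions. It is an illustrative assumption about what such a loss could look like, not the loss used in the experiments; `multi_step_loss` and its arguments are hypothetical names, and the gradient step that would minimize the loss is omitted.

```python
def multi_step_loss(model, states, actions, horizon):
    """Mean squared error of an open-loop rollout against recorded states.

    model   -- callable: (state, action) -> predicted next state (a tuple)
    states  -- recorded states s_0 .. s_H as tuples (at least horizon + 1)
    actions -- recorded actions a_0 .. a_{H-1}
    horizon -- number of steps to unroll; horizon=1 is the usual one-step loss
    """
    pred = states[0]
    total = 0.0
    for t in range(horizon):
        # The model sees its own previous prediction, not the recorded state,
        # so errors that would compound at rollout time are penalized.
        pred = model(pred, actions[t])
        total += sum((p - s) ** 2 for p, s in zip(pred, states[t + 1]))
    return total / horizon


if __name__ == "__main__":
    # Recorded trajectory of a system that stays at 1.0 under zero action.
    states = [(1.0,), (1.0,), (1.0,)]
    actions = [0.0, 0.0]
    # A slightly biased model: it inflates the state by 10% each step.
    biased = lambda s, a: (1.1 * s[0] + a,)
    print(multi_step_loss(biased, states, actions, horizon=1))
    print(multi_step_loss(biased, states, actions, horizon=2))
```

In the toy example the same model bias produces a larger per-step loss at `horizon=2` than at `horizon=1`, which is the signal a one-step loss never sees.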

A notable detail is the role of pessimism. When combining multi-horizon targets, taking a minimum across horizons—rather than averaging—improved performance in harder environments, echoing the idea behind reducing overestimation bias in double Q-learning. Overall, the work suggests model-based RL can help continuous control, but only when dynamics learning is robust to rollout errors and value targets are constructed in a way that stabilizes training over meaningful horizons.
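
The difference between averaging and taking a minimum can be sketched as follows. The code computes one target per horizon from a single imagined rollout and then combines them; which horizons are included, and how exactly the combination was done in the experiments, is not stated in the source, so the choices here (horizons 1 through `max_horizon`, and the names `multi_horizon_targets` and `combine`) are assumptions.

```python
def multi_horizon_targets(state, policy, model, reward_fn, q_fn,
                          max_horizon, gamma=0.99):
    """Return value targets for horizons 1..max_horizon from one rollout."""
    targets = []
    ret = 0.0
    discount = 1.0
    for _ in range(max_horizon):
        action = policy(state)
        ret += discount * reward_fn(state, action)
        state = model(state, action)
        discount *= gamma
        # Target for this horizon: imagined return plus bootstrapped value.
        targets.append(ret + discount * q_fn(state, policy(state)))
    return targets


def combine(targets, pessimistic=True):
    """Combine per-horizon targets by minimum (pessimistic) or by mean."""
    if pessimistic:
        return min(targets)
    return sum(targets) / len(targets)


if __name__ == "__main__":
    targets = multi_horizon_targets(
        state=1.0,
        policy=lambda s: -0.5 * s,
        model=lambda s, a: s + a,
        reward_fn=lambda s, a: -(s ** 2),
        q_fn=lambda s, a: 4.0,   # deliberately over-optimistic critic
        max_horizon=2,
        gamma=0.5,
    )
    print(targets, combine(targets), combine(targets, pessimistic=False))
```

With a deliberately over-optimistic critic, the short-horizon target is inflated by the bootstrapped value; the minimum discards it, while the mean lets it pull the combined target upward.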

Cornell Notes

Continuous-control model-based RL can be misleading: a dynamics model may predict the next state almost perfectly, yet long-horizon rollouts can drift far from reality due to compounding error. Botev’s research targets this by using “value expansion” in actor-critic methods. After learning a dynamics model, the approach unrolls it from offline data to create multi-step on-policy value targets, improving stability and sample efficiency compared with a tuned deterministic policy gradient baseline. Experiments on Fetch tasks show up to about five times better sample efficiency in some cases, but only when dynamics models are trained with multi-step losses and value expansion uses more than one or two steps. Pessimistically combining multi-horizon targets (taking a minimum) helps on harder tasks.

Why can a dynamics model that is nearly perfect at one-step prediction still fail in model-based RL?

What is the main purpose of “value expansion” in actor-critic RL?

What training changes were necessary for dynamics models to make value expansion work?

How did value expansion perform on the Fetch robotics tasks, and what limited its reliability?

Why did pessimism—taking a minimum across horizons—help?

Review Questions

- What specific mechanism turns a low one-step dynamics error into poor long-horizon control performance?

- How does value expansion modify the target construction compared with standard bootstrapped actor-critic training?

- Which two experimental design choices were repeatedly necessary for gains: one related to dynamics-model training and one related to the number of expansion steps?

Key Points

1. A dynamics model can look excellent for one-step prediction yet still produce unusable rollouts because small errors grow over time.

2. Model-based RL’s promise depends on robust long-horizon behavior, not just accurate next-state prediction.

3. Value expansion improves actor-critic training by using a learned dynamics model to generate multi-step on-policy value targets from offline data.

4. Dynamics models must be trained with multi-step losses (feeding predictions back into the model) to prevent rollout inconsistency from erasing sample-efficiency gains.

5. Value expansion benefits require expanding beyond one or two steps; short expansions often fail to improve over baselines.

6. Pessimistically aggregating multi-horizon targets (taking a minimum) can reduce overestimation bias and help on harder tasks.

7. Even with improvements, performance can be hyperparameter-sensitive and may not always improve asymptotic performance across all environments.