Is Stable Diffusion 2.0 Worth the Upgrade?

Based on MattVidPro's video on YouTube.

Stable Diffusion 2.0 is positioned as an open-source upgrade with notable changes in upscaling, depth-guided image-to-image, and text-guided inpainting.

Briefing

Stable Diffusion 2.0 is landing with a mix of backlash and counter-evidence: critics claim it’s worse than Stable Diffusion 1.5 and can’t reliably generate famous people or characters, but hands-on comparisons suggest the model’s overall image quality and creative flexibility are improved—especially when the NSFW filter is disabled.

On the release side, Stability AI positions Stable Diffusion 2.0 as a meaningful upgrade rather than a minor tweak. The model is described as open source and built around a robust text-to-image setup that uses OpenCLIP and the LAION-5B dataset (filtered to remove adult content via an NSFW filter). The release also highlights practical generation upgrades: 4× resolution upscaling from 128×128 to 512×512, with the potential to go higher still, to 2048×2048 or beyond when the upscaler is combined with the text-to-image models. A new depth-guided diffusion feature (“depth to image”) extends image-to-image workflows by estimating depth in an input image and applying styles or characters while preserving spatial structure. It is demonstrated with themed transformations where foreground elements stay sharper than background ones. Inpainting is also updated: a text-guided inpainting model fine-tuned on Stable Diffusion 2.0 makes it easier to swap parts of an image.
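The resolution figures follow from simple arithmetic. Here is a minimal sketch, assuming the upscaler applies a fixed 4× factor per pass (consistent with the 128×128 to 512×512 example). The function name `upscaled_size` is hypothetical and purely illustrative:

```python
def upscaled_size(size, factor=4, passes=1):
    """Return (width, height) after applying a fixed upscale factor `passes` times.

    Assumption: each pass multiplies both dimensions by `factor`.
    """
    if factor < 1 or passes < 0:
        raise ValueError("factor must be >= 1 and passes must be >= 0")
    width, height = size
    scale = factor ** passes
    return width * scale, height * scale


print(upscaled_size((128, 128)))  # (512, 512)
print(upscaled_size((512, 512)))  # (2048, 2048)
```

A single pass on a 512×512 generation therefore lands at 2048×2048, which is why an upscaler paired with the base text-to-image model can reach much higher resolutions than either does alone.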

The controversy centers on whether Stable Diffusion 2.0 can generate well-known public figures and recognizable characters as effectively as earlier versions. The transcript’s testing points to a key lever: the NSFW filter. A free Google Colab notebook linked in the discussion is described as having the NSFW filter removed, and the results shown include multiple generations of Barack Obama and Elon Musk. Hands still show the usual diffusion-model problems, sometimes producing “troll hands” or malformed fingers, but the model appears capable of producing recognizable faces. The same fix is suggested for users struggling with famous-person prompts: disabling the NSFW filter may restore the ability to generate them.

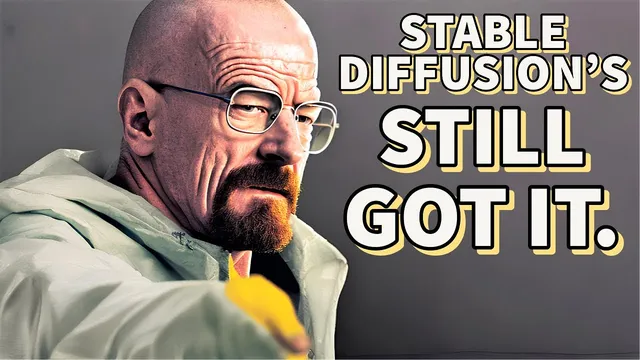

Comparisons against other popular systems reinforce the nuance. For a “3D render lemon” prompt, Stable Diffusion 2.0 is described as closer to a photographic look than earlier versions but still less coherent than Midjourney V4, which produces a more consistent character and action. For a haunted sarcophagus prompt, Stable Diffusion 2.0 delivers a vibrant, glowing result, but Midjourney V4 remains more polished. A more pointed test involves Breaking Bad’s Walter White: the transcript claims Stable Diffusion 2.0 can generate a close-to-photographic Walter White in lab attire, sometimes with minor wonkiness (including occasional double-composition artifacts), while Midjourney V4’s output is described as more artistic and less strictly photographic.

Overall, the upgrade case rests on three pillars: improved upscaling, new depth-guided image-to-image control, and better text handling and inpainting—while acknowledging that hands remain a weak spot across major models. The transcript’s bottom line is that Stable Diffusion 2.0 is “better than 1.5” in general, with some edge cases where 1.5 may still win, and that the NSFW filter setting likely explains much of the “can’t generate famous people” complaint.

Cornell Notes

Stable Diffusion 2.0 is presented as a substantial upgrade over 1.5, with improvements aimed at higher-quality outputs and more controllable editing. The release emphasizes upscaling (128×128 to 512×512), a new depth-to-image feature that preserves spatial structure when transforming an input image, and updated text-guided inpainting for easier part replacement. The main controversy—claims that famous people and characters can’t be generated—gets a counterpoint: results shown in the transcript suggest disabling the NSFW filter can restore the ability to generate recognizable public figures. Even with better coherence and text handling, hands remain inconsistent, aligning with broader limitations seen across other image models.
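For readers who want to try these features, one common route is the Hugging Face `diffusers` library. Neither the library nor the model IDs below appear in the transcript. They are assumptions based on the checkpoints Stability AI published on the Hugging Face Hub, and the names `FEATURES` and `load_pipeline` are hypothetical. A minimal sketch of how the features might map to pipelines:

```python
import importlib

# Assumed mapping from feature to (diffusers pipeline class name, Hub model ID).
# None of these identifiers come from the transcript.
FEATURES = {
    "text-to-image": ("StableDiffusionPipeline", "stabilityai/stable-diffusion-2"),
    "upscale": ("StableDiffusionUpscalePipeline", "stabilityai/stable-diffusion-x4-upscaler"),
    "depth-to-image": ("StableDiffusionDepth2ImgPipeline", "stabilityai/stable-diffusion-2-depth"),
    "inpaint": ("StableDiffusionInpaintPipeline", "stabilityai/stable-diffusion-2-inpainting"),
}


def load_pipeline(feature):
    """Load the pipeline for `feature`; weights are downloaded on first use."""
    if feature not in FEATURES:
        raise KeyError(f"unknown feature: {feature!r}")
    class_name, model_id = FEATURES[feature]
    # Imported lazily so the mapping can be inspected without diffusers installed.
    diffusers = importlib.import_module("diffusers")
    return getattr(diffusers, class_name).from_pretrained(model_id)
```

Calling `load_pipeline("depth-to-image")` requires `diffusers`, `torch`, and a multi-gigabyte download, so the sketch only defines the mapping and does not run a generation.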

What concrete upgrades does Stable Diffusion 2.0 add beyond the earlier model?

Why do some people claim Stable Diffusion 2.0 is worse than 1.5 for famous people?

What evidence is offered that famous people can still be generated in Stable Diffusion 2.0?

How does Stable Diffusion 2.0 compare to Midjourney V4 and DALL·E 2 in the transcript’s examples?

What limitation persists even when Stable Diffusion 2.0 improves other areas?

How do resolution and native size affect output consistency?

Review Questions

- Which three Stable Diffusion 2.0 features are emphasized as the biggest practical improvements, and what kind of user workflow does each one target?

- How does the NSFW filter factor into the transcript’s explanation of famous-person generation failures or successes?

- What recurring artifact is highlighted as still unsolved across multiple models, and how is it demonstrated in the examples?

Key Points

1. Stable Diffusion 2.0 is positioned as an open-source upgrade with notable changes in upscaling, depth-guided image-to-image, and text-guided inpainting.

2. Upscaling is described as moving from 128×128 to 512×512, improving detail recovery in faces and other fine features.

3. Depth-to-image adds spatial control by using depth detection from an input image to apply styles or characters while keeping foreground/background structure consistent.

4. Text-guided inpainting is fine-tuned on Stable Diffusion 2.0 to make part replacement easier and cleaner.

5. Claims that famous people can’t be generated are countered by results where the NSFW filter is disabled, suggesting that the filter, not the model’s core capability, is the bottleneck.

6. Hands remain unreliable, with malformed fingers appearing even in otherwise recognizable famous-person generations.

7. Output consistency can depend on resolution choice; native 768×768 is described as more reliable than non-native 512×512 for at least one prompt.
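The resolution point can be made concrete with a small pre-flight check. This sketch assumes the 2.0 release includes one checkpoint trained at 768×768 and a base checkpoint trained at 512×512; the Hub IDs shown are assumptions, not taken from the transcript. It also assumes width and height must be multiples of 8, as the `diffusers` Stable Diffusion pipelines require. `check_resolution` is a hypothetical helper:

```python
# Assumed native training resolutions per checkpoint (not from the transcript).
NATIVE_SIZE = {
    "stabilityai/stable-diffusion-2": 768,
    "stabilityai/stable-diffusion-2-base": 512,
}


def check_resolution(model_id, width, height):
    """Return a list of warnings for the requested size; an empty list means native."""
    warnings = []
    if width % 8 or height % 8:
        warnings.append("width and height should be multiples of 8")
    native = NATIVE_SIZE.get(model_id)
    if native is None:
        warnings.append(f"native size unknown for {model_id}")
    elif (width, height) != (native, native):
        warnings.append(
            f"{width}x{height} is not the native {native}x{native}; "
            "results may be less consistent"
        )
    return warnings


print(check_resolution("stabilityai/stable-diffusion-2", 768, 768))  # []
```

A non-empty result does not mean the generation will fail, only that the request departs from the size the checkpoint was trained at.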