KAN: Kolmogorov–Arnold Networks Paper Explained

Based on AI Researcher's video on YouTube.

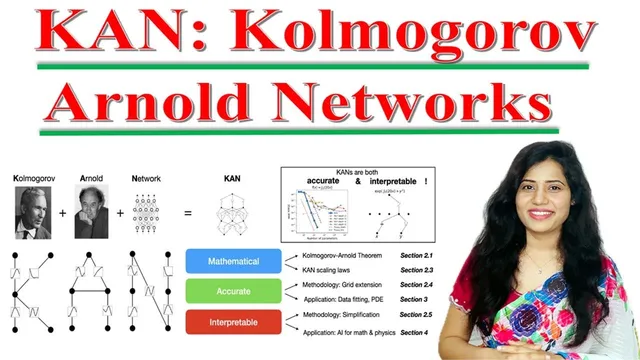

KAN is positioned as a function-representation network inspired by the Kolmogorov–Arnold representation theorem, aiming for accuracy with improved interpretability.

Briefing

Kolmogorov–Arnold Networks (KAN) are presented as a multi-layer alternative to the standard multi-layer perceptron (MLP): they represent functions with learnable activation functions, while aiming to be more parameter-efficient and easier to interpret. The central claim is that KAN can achieve comparable or better accuracy using fewer parameters, while also producing symbolic, human-readable expressions for the learned relationships. That is an advantage for tasks where interpretability and continual learning matter.

The walkthrough begins by contrasting KAN with the familiar MLP setup: an MLP stacks input, hidden, and output layers, where each node applies a fixed activation function and edges carry learnable weights. It also references the universal approximation theorem, which underpins why MLPs can approximate any continuous function given enough hidden units. From there, KAN is positioned as a different construction. Instead of relying on fixed activations at every node, KAN uses a representation rooted in the Kolmogorov–Arnold representation theorem: any continuous function of several variables can be written as a finite composition of continuous single-variable functions and addition. In network terms, this becomes a structured decomposition of a complex function into layered operations.
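For reference, the theorem is usually stated as follows for a continuous function $f$ on $[0,1]^n$. The symbols $\Phi_q$ (outer functions) and $\phi_{q,p}$ (inner functions) are standard notation, not something taken from the video:

$$
f(x_1, \dots, x_n) = \sum_{q=1}^{2n+1} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)
$$

Read as a network, this is a two-layer structure: each input $x_p$ passes through single-variable functions $\phi_{q,p}$, the results are summed, and each sum passes through a single-variable function $\Phi_q$. KAN can be seen as relaxing the fixed width and depth of this form.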

A key architectural detail highlighted is that KAN places learnable activation functions on its edges, parameterized as B-splines, while the network’s layered form processes inputs sequentially through multiple transformations. The transcript emphasizes a specific mechanism: a single B-spline activation is a weighted sum of B-spline basis functions, and the coefficients of that sum control its shape. Increasing the number of nodes and basis functions lets the model fit more detailed patterns, which supports higher accuracy on complex functions.
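The weighted-sum idea can be sketched in a few lines of plain Python. This is a minimal illustration, not the reference implementation: the helper names (`make_knots`, `bspline_basis`, `spline_activation`), the input range `[-1, 1]`, and the coefficient values are all assumptions made for the example, and the residual base function that KAN implementations typically add to the spline is omitted.

```python
def make_knots(grid_size, degree, lo=-1.0, hi=1.0):
    """Uniform knots on [lo, hi], extended by `degree` extra knots on each side."""
    h = (hi - lo) / grid_size
    return [lo + (j - degree) * h for j in range(grid_size + 2 * degree + 1)]


def bspline_basis(i, degree, t, knots):
    """i-th B-spline basis function of the given degree at t (Cox-de Boor recursion)."""
    if degree == 0:
        return 1.0 if knots[i] <= t < knots[i + 1] else 0.0
    left_width = knots[i + degree] - knots[i]
    right_width = knots[i + degree + 1] - knots[i + 1]
    left = 0.0
    if left_width > 0:
        left = (t - knots[i]) / left_width * bspline_basis(i, degree - 1, t, knots)
    right = 0.0
    if right_width > 0:
        right = ((knots[i + degree + 1] - t) / right_width
                 * bspline_basis(i + 1, degree - 1, t, knots))
    return left + right


def spline_activation(t, coeffs, knots, degree):
    """Activation value: weighted sum of B-spline basis functions."""
    return sum(c * bspline_basis(i, degree, t, knots) for i, c in enumerate(coeffs))


grid_size, degree = 5, 3
knots = make_knots(grid_size, degree)
num_basis = grid_size + degree  # one coefficient per basis function

# Hypothetical coefficients; in a trained KAN these would be learned.
coeffs = [0.0, 0.5, -1.0, 2.0, 0.3, -0.7, 1.2, 0.0]
print(spline_activation(0.25, coeffs, knots, degree))
```

Changing a single entry of `coeffs` reshapes the curve only near the corresponding basis function, and a larger `grid_size` adds more basis functions, which is what gives the activation more room to follow fine detail.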

Performance comparisons are then framed around several empirical themes. First, increasing KAN “grid size” (ranging from 3 up to 1,000,000) is described as improving the ability to model complex mathematical functions, with error dropping sharply at larger grid resolutions. Second, a symbolic regression pipeline is outlined as a multi-step process: train with sparsification so the network focuses on relevant features, prune less significant connections, set nodes to represent specific operations (like sine, squaring, and exponentials), fine-tune the coefficients in the symbolic expression, output a symbolic formula, and finally normalize numeric values for readability. The result is a compact mathematical expression rather than only a black-box predictor.
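The "set nodes to represent specific operations" step can be illustrated with a toy matcher. The sketch below is an assumption-laden simplification, not the pipeline from the video: it takes samples of one learned activation, scores each candidate operation by how well `c * g(x) + d` can explain the samples (the R² of the best affine fit), and picks the winner. The names `CANDIDATES`, `r_squared`, and `snap_to_symbol`, and the sample curve, are hypothetical.

```python
import math

# Candidate symbolic operations (a deliberately tiny, illustrative library).
CANDIDATES = {
    "sin": math.sin,
    "square": lambda x: x * x,
    "exp": math.exp,
}


def r_squared(us, ys):
    """R^2 of the best affine fit ys ~ c * us + d (the squared Pearson correlation)."""
    n = len(us)
    mean_u, mean_y = sum(us) / n, sum(ys) / n
    cov = sum((u - mean_u) * (y - mean_y) for u, y in zip(us, ys))
    var_u = sum((u - mean_u) ** 2 for u in us)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_u == 0 or var_y == 0:
        return 0.0
    return cov * cov / (var_u * var_y)


def snap_to_symbol(xs, ys):
    """Return (name, score) of the candidate that best explains the sampled activation."""
    scores = {name: r_squared([g(x) for x in xs], ys) for name, g in CANDIDATES.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical learned activation sampled on [-2, 2]; here it is a scaled, shifted square.
xs = [-2.0 + 4.0 * i / 200 for i in range(201)]
learned = [1.7 * x * x - 0.4 for x in xs]
print(snap_to_symbol(xs, learned))
```

Once an operation is chosen, the remaining work in the pipeline (fine-tuning coefficients and rounding them for readability) operates on the scale and offset that this affine fit implies.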

The transcript also contrasts continual learning behavior. In a toy scenario where data arrives in sequence around multiple Gaussian clusters, KAN is described as adapting to new data without erasing earlier patterns, while MLP-style training is associated with catastrophic forgetting. Finally, interpretability is illustrated through arithmetic-like compositional behavior—multiplication and division operations can emerge as explicit structured computations inside the network.
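One common explanation for this behavior is that spline basis functions are local: a training sample only updates the coefficients near it. The sketch below illustrates that argument under simplifying assumptions (a single activation built from degree-1 "hat" splines, plain SGD, two Gaussian bumps shown one after the other). It is not the experiment from the video, and all names and values are made up for the example.

```python
import math


def hat_weights(x, knots):
    """Indices and weights of the two hat (degree-1 spline) functions around x."""
    h = knots[1] - knots[0]
    j = min(int((x - knots[0]) / h), len(knots) - 2)  # index of the knot left of x
    w_right = (x - knots[j]) / h
    return [(j, 1.0 - w_right), (j + 1, w_right)]


def predict(x, coeffs, knots):
    return sum(w * coeffs[i] for i, w in hat_weights(x, knots))


def sgd_phase(data, coeffs, knots, lr=0.5, epochs=200):
    """Plain SGD on squared error; each sample only updates its two nearby coefficients."""
    for _ in range(epochs):
        for x, y in data:
            err = predict(x, coeffs, knots) - y
            for i, w in hat_weights(x, knots):
                coeffs[i] -= lr * err * w


def bump(x, center, width=0.1):
    """Gaussian peak used as the regression target."""
    return math.exp(-((x - center) ** 2) / (2 * width ** 2))


knots = [-1.0 + 0.125 * j for j in range(17)]  # 16 grid cells on [-1, 1]
coeffs = [0.0] * len(knots)

# Phase 1 sees only the left peak, phase 2 only the right peak.
left_xs = [-1.0 + i / 32 for i in range(29)]    # -1.0 ... -0.125
right_xs = [0.125 + i / 32 for i in range(29)]  # 0.125 ... 1.0
phase1 = [(x, bump(x, -0.5)) for x in left_xs]
phase2 = [(x, bump(x, 0.5)) for x in right_xs]

sgd_phase(phase1, coeffs, knots)
before = [predict(x, coeffs, knots) for x in left_xs]
sgd_phase(phase2, coeffs, knots)
after = [predict(x, coeffs, knots) for x in left_xs]

print(before == after)  # phase 2 never touches the coefficients used on the left
```

In an MLP the same second phase would update weights that are shared across the whole input range, which is the mechanism usually blamed for catastrophic forgetting.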

A decision framework closes the discussion: choose KAN when compositional structure, complicated functions, continual learning, interpretability, high-dimensional data, or small model size are priorities. Choose MLP when fast training and simpler optimization are more important, since KAN may require more setup despite its efficiency in parameters and its symbolic outputs.

Cornell Notes

Kolmogorov–Arnold Networks (KAN) are presented as an alternative to multi-layer perceptrons that can represent complex functions through structured compositions inspired by the Kolmogorov–Arnold representation theorem. The approach aims for mathematical accuracy and interpretability while using parameters more efficiently than MLPs. KAN’s B-spline activations are weighted sums of basis functions, and increasing grid size and basis complexity improves fit, often with sharp error reductions. A symbolic regression workflow (train/sparsify → prune → set operation nodes → fine-tune coefficients → output symbolic formula → normalize) turns learned relationships into explicit expressions. In continual learning tests, KAN is described as retaining earlier knowledge better than MLPs, which are linked to catastrophic forgetting.
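The effect of grid size on fit quality can be seen in a simplified setting. The sketch below is only an illustration under stated assumptions: it uses a single degree-1 spline whose coefficients are set by interpolating the target at the grid points (no training, no multi-layer KAN), and the target function and grid sizes are chosen arbitrarily for the example.

```python
import math


def linear_spline(x, knots, values):
    """Evaluate the piecewise-linear (degree-1 spline) interpolant at x."""
    h = knots[1] - knots[0]
    j = min(int((x - knots[0]) / h), len(knots) - 2)
    w = (x - knots[j]) / h
    return (1.0 - w) * values[j] + w * values[j + 1]


def max_error(target, grid_size, lo=-1.0, hi=1.0, samples=2001):
    """Largest absolute gap between the target and its spline on a dense sample."""
    knots = [lo + (hi - lo) * j / grid_size for j in range(grid_size + 1)]
    values = [target(k) for k in knots]
    xs = [lo + (hi - lo) * i / (samples - 1) for i in range(samples)]
    return max(abs(target(x) - linear_spline(x, knots, values)) for x in xs)


def target(x):
    """Arbitrary smooth test function for the illustration."""
    return math.exp(math.sin(math.pi * x))


for g in (3, 10, 50, 200):
    print(g, max_error(target, g))
```

Each refinement of the grid gives the spline more coefficients to place, so the worst-case gap to the target shrinks as `grid_size` grows.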

What distinguishes KAN from a standard multi-layer perceptron in how it represents functions?

How does KAN’s basis-plane activation decomposition work, and why does increasing complexity help?

What does the symbolic regression pipeline for KAN look like step by step?

What evidence is given for KAN’s parameter efficiency compared with MLPs?

How does KAN behave in continual learning compared with MLP, and what problem does that address?

When should someone choose KAN over MLP according to the decision framework?

Review Questions

- How does the Kolmogorov–Arnold representation theorem motivate KAN’s network structure, and how is that reflected in the layered decomposition described?

- Which steps in the KAN symbolic regression workflow are responsible for turning learned computations into an explicit formula rather than only a prediction?

- What continual learning failure mode is attributed to MLPs, and what behavior is attributed to KAN in the described Gaussian-cluster scenario?

Key Points

1. KAN is positioned as a function-representation network inspired by the Kolmogorov–Arnold representation theorem, aiming for accuracy with improved interpretability.

2. Unlike a typical MLP’s fixed activations with learnable edge weights, KAN uses learnable B-spline activations whose coefficients shape the learned nonlinearities.

3. Increasing grid size and basis complexity (nodes/basis functions) is described as improving KAN’s ability to fit complex functions, often with sharp error drops.

4. A symbolic regression workflow for KAN translates training results into explicit symbolic formulas through sparsification, pruning, operation-node configuration, coefficient fine-tuning, and normalization.

5. In continual learning tests with sequential Gaussian clusters, KAN is described as retaining earlier knowledge while MLPs are linked to catastrophic forgetting.

6. A practical selection guide recommends KAN for compositional structure, complicated functions, continual learning, interpretability, high-dimensional data, and small model size; it recommends MLP when fast training is critical.