Learn to Spell: Prompt Engineering (LLM Bootcamp)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Prompt engineering works by conditioning a language model’s next-token probabilities through the exact text you provide, narrowing which continuations become likely.

Briefing

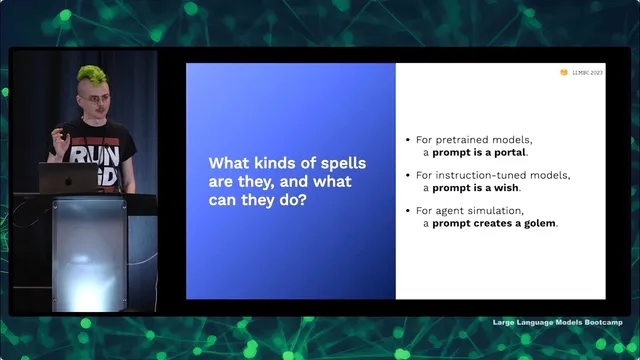

Prompt engineering is the practical art of choosing the exact text you feed a language model so it behaves the way you need—often replacing what used to require training, fine-tuning, or other model-building work. The core insight is that prompts act like “magic spells,” not because models contain wizards, but because they reshape the probability landscape of what text could come next. A language model is fundamentally an autoregressive statistical model: it tokenizes input, then assigns probabilities to every possible next token, repeating that process to generate an entire document. Adding a prompt conditions that generation—reweighting which “alternate universes” (possible continuations) become more likely—so the model effectively narrows from a huge space of documents to the one that matches the user’s intent.
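The conditioning idea above can be sketched with a toy model. This is a minimal illustration, not how a real language model is built: the `NEXT_TOKEN_PROBS` table and the `continuations` helper are hypothetical, and the toy only looks at the previous token, whereas a real model conditions on the whole context.

```python
# Hypothetical toy next-token table: previous token -> distribution over
# the next token. "<end>" marks the end of a document.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "spell": 0.5},
    "a": {"cat": 0.7, "spell": 0.3},
    "cat": {"sleeps": 0.9, "<end>": 0.1},
    "spell": {"works": 0.8, "<end>": 0.2},
    "sleeps": {"<end>": 1.0},
    "works": {"<end>": 1.0},
}


def continuations(prefix):
    """Return {document: probability} for every way to finish `prefix`."""
    previous = prefix[-1] if prefix else "<start>"
    results = {}
    for token, p in NEXT_TOKEN_PROBS[previous].items():
        if token == "<end>":
            # The document stops here with probability p.
            doc = " ".join(prefix)
            results[doc] = results.get(doc, 0.0) + p
        else:
            # Multiply by the probability of each longer continuation.
            for doc, q in continuations(prefix + [token]).items():
                results[doc] = results.get(doc, 0.0) + p * q
    return results


unprompted = continuations([])               # every document the toy can write
prompted = continuations(["the", "spell"])   # only documents that fit the prompt

for name, dist in [("unprompted", unprompted), ("prompted", prompted)]:
    print(name)
    for doc, prob in sorted(dist.items(), key=lambda item: -item[1]):
        print(f"  {prob:.3f}  {doc}")
```

In this toy table, the document "the spell works" has probability 0.6 × 0.5 × 0.8 = 0.24 with no prompt, and 0.8 once the prompt "the spell" is given. The prompt adds nothing to the model; it only rules out the continuations that do not start with it and renormalizes the rest.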

That “alternate universe” framing matters because it clarifies both what prompting can do and what it can’t. Prompts can steer toward nearby, already-written patterns, such as a Reddit-style answer to a question that exists somewhere in the training distribution, but they can’t reliably jump to universes whose facts were never written down. The talk uses examples to show the danger of overreaching: asking for a cancer cure “from the early 21st century” won’t magically produce a correct molecular mechanism if the model’s learned text doesn’t support that specific claim. Instead, prompting is closer to searching across nearby documents: it can recombine ideas that exist in the data, but it can’t conjure precise, real-world results on demand.

For instruction-tuned models (including chat-style systems), prompting is framed as “wishes” that can succeed—sometimes dramatically—when the request is phrased correctly. A key example comes from work on reducing social bias: a pre-trained model may default to stereotypes (e.g., choosing “grandfather” in an Uber booking scenario involving age and phone comfort). But adding a clear instruction—asking for an unbiased answer that avoids stereotypes—can produce large improvements on bias benchmarks. The flip side is that wishes require precision: vague or poorly structured instructions can lead to failure modes, including the model following the wrong interpretation of the request.

To make prompting work reliably, the talk lays out an emerging playbook built from practical constraints. First, prompts should use formatted, structured text—especially code fences—because models predict formatted patterns well and are less likely to drift. Second, decomposition is a recurring technique: break tasks into smaller steps, or use “self-ask” style follow-up questions so the model decides what sub-questions to generate at query time. Chain-of-thought prompting is treated as a way to elicit reasoning traces by showing examples where explanations precede answers; it often improves multi-step reasoning and question answering, though it increases latency and token cost.
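Two of the playbook items above, structured formatting and chain-of-thought examples, can be combined in a small prompt builder. This is a sketch under stated assumptions: the `build_prompt` function, the `Q:` / `Reasoning:` / `A:` labels, and the sample passage are all hypothetical choices for illustration, not a format prescribed by the talk.

```python
FENCE = "`" * 3  # a code fence, built this way so it can sit inside a code block


def build_prompt(instruction, examples, passage, question):
    """Assemble a prompt with a fenced passage and worked examples.

    Each example shows the reasoning BEFORE the answer, which is the
    chain-of-thought pattern: the model is nudged to continue in kind.
    """
    parts = [instruction.strip(), ""]
    for example in examples:
        parts += [
            f"Q: {example['question']}",
            f"Reasoning: {example['reasoning']}",
            f"A: {example['answer']}",
            "",
        ]
    # Fence the passage so its boundaries are unambiguous to the model.
    parts += ["Passage:", FENCE + "text", passage.strip(), FENCE, ""]
    # End on "Reasoning:" so the next tokens are the reasoning trace.
    parts += [f"Q: {question}", "Reasoning:"]
    return "\n".join(parts)


prompt = build_prompt(
    instruction="Answer the question using only the passage.",
    examples=[
        {
            "question": "Alice has 3 apples and gets 4 more. How many now?",
            "reasoning": "She starts with 3 and receives 4, and 3 + 4 = 7.",
            "answer": "7",
        }
    ],
    passage="The shop sold 12 mugs on Monday and 9 mugs on Tuesday.",
    question="How many mugs were sold in total?",
)
print(prompt)
```

Ending the prompt on `Reasoning:` is the design choice that matters here: the most likely continuation is an explanation followed by an answer, at the cost of the extra tokens and latency the explanation takes.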

Finally, the talk warns against common misconceptions. “Few-shot learning” via prompts is not a dependable substitute for training; models may ignore label permutations or struggle with character-level operations because they operate on tokens, not raw characters. Tokenization quirks can break seemingly simple string tasks, though spacing letters can sometimes change tokenization behavior. Across all of it, the practical message is that prompt engineering is mostly a bag of tricks—effective, but sensitive to fiddly details—and that the best approach often combines multiple techniques while managing tradeoffs in compute, latency, and reliability.
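The tokenization point above can be illustrated with a toy tokenizer. Everything here is an assumption for illustration: the `VOCAB` set is made up, and greedy longest-match is a simplification of the learned subword schemes that real models use.

```python
# Hypothetical vocabulary. Real tokenizers learn tens of thousands of
# subword pieces from data; this one is hand-written for the example.
VOCAB = {"hel", "lo", "wor", "ld", " ", "h", "e", "l", "o", "w", "r", "d"}
LONGEST_PIECE = max(len(piece) for piece in VOCAB)


def tokenize(text):
    """Split text greedily into the longest vocabulary pieces available."""
    tokens = []
    i = 0
    while i < len(text):
        for size in range(min(LONGEST_PIECE, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in VOCAB:
                tokens.append(piece)
                i += size
                break
        else:
            # No vocabulary piece matched: keep the character as its own token.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("hello"))      # the model sees two opaque pieces
print(tokenize("h e l l o"))  # spacing exposes each letter as its own token
```

With this toy vocabulary, "hello" becomes two pieces, so a task like reversing the word asks the model to reason about letters it never sees individually. Spacing the letters out forces one token per character, which is why that trick can sometimes help.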

Cornell Notes

Prompt engineering is presented as a way to control language models by conditioning their next-token probabilities through carefully chosen input text. The talk frames prompts as “magic spells”: they don’t add hidden intelligence, but they reweight which continuations (imagined “alternate universes”) become likely. Instruction-tuned models can behave like “wish-granters,” improving outcomes such as bias reduction when requests are explicit and structured, but they can fail when instructions are vague or depend on negation. A practical playbook emphasizes formatted prompts (like code fences), task decomposition (including self-ask and chain-of-thought), and post-generation checking or ensembling. The overall takeaway is that prompting is powerful yet constrained by tokenization, distributional limits, and cost tradeoffs like latency and token usage.

How can a prompt change what a language model generates if the model is just predicting the next token?

Why does the “alternate universe” metaphor come with a warning?

What’s the difference between pre-trained models and instruction-tuned models in how prompts function?

Why do negations and character-level tricks often fail in prompting?

What are the main techniques in the emerging prompting playbook, and what do they cost?

How does ensembling improve answers, and why does randomness matter?

Review Questions

- Which parts of prompting are best understood as conditioning a probability distribution, and how does that differ from “learning a new task on the fly”?

- Give one example of a prompting technique that improves reasoning quality and one example of a technique that mainly changes cost (latency/compute). Explain why.

- Why might a model fail at a character-level transformation even when it can summarize or write creatively?

Key Points

1. Prompt engineering works by conditioning a language model’s next-token probabilities through the exact text you provide, narrowing which continuations become likely.

2. Language models are autoregressive statistical text models; prompts don’t add hidden intelligence, they reweight the space of possible documents.

3. Instruction-tuned models can reduce issues like social bias when instructions are explicit and structured, but vague or poorly phrased requests can still fail.

4. Formatted structure (especially code fences) makes model outputs more predictable and reduces drift.

5. Task decomposition (via self-ask or chain-of-thought) often improves multi-step performance but increases token usage and latency.

6. Negations and character-level operations are fragile because models follow token-level patterns and can mishandle “not” phrasing or tokenization quirks.

7. Quality can improve through ensembling and self-correction, but these methods trade off against compute and response time.
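The ensembling idea in the last key point can be sketched as a majority vote over several sampled answers. This is a minimal sketch, assuming a hypothetical `fake_model_call` that stands in for a real model call with nonzero sampling temperature; its 60/40 answer split is invented for the example.

```python
import random
from collections import Counter


def majority_vote(answers):
    """Return the most common answer and the share of votes it received."""
    counts = Counter(answer.strip().lower() for answer in answers)
    winner, votes = counts.most_common(1)[0]
    return winner, votes / len(answers)


def sample_answers(ask, n):
    """Call `ask` n times. Each call costs one full generation."""
    return [ask() for _ in range(n)]


# Hypothetical stand-in for a sampled model call: it returns the right
# answer more often than the wrong one, but not every time.
rng = random.Random(0)


def fake_model_call():
    return rng.choices(["42", "41"], weights=[0.6, 0.4], k=1)[0]


answers = sample_answers(fake_model_call, n=15)
winner, share = majority_vote(answers)
print(answers)
print(f"winner={winner} share={share:.2f}")

# Without randomness every sample is identical, so voting adds nothing.
print(majority_vote(["41"] * 5))
```

The vote only helps when the samples differ, which is why randomness matters: with deterministic decoding, the same possibly wrong answer comes back every time. The cost is linear in `n`, since each vote is another full generation.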