Learning Dexterity

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

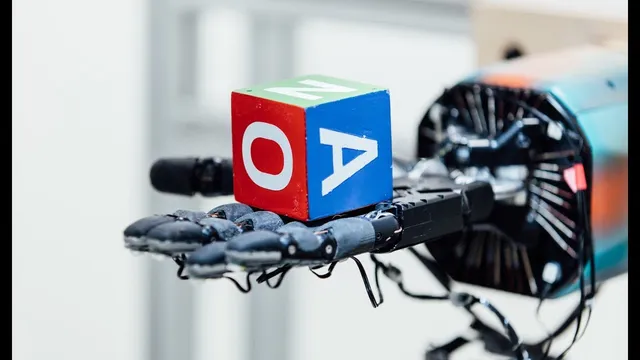

A robot hand learns to rotate a block into arbitrary orientations by using reinforcement learning with simulation and real-world transfer.

Briefing

Teaching robots to handle everyday objects without hand-coding every movement is getting a practical boost from a training approach built around robustness. A robot hand learns to rotate a block into any requested orientation and then receives new goals, one after another. It uses reinforcement learning to discover control strategies that work across many variations of the world. The key is that the policy isn’t trained in a single, fixed environment; instead, the system is exposed to shifting physics and visuals so the learned manipulation transfers to real hardware.

The method relies on reinforcement learning paired with simulation and a technique called domain randomization. During training, the robot encounters countless versions of the task where rules change slightly each time. Some changes are cosmetic—like the cube’s color and the background—but the training goes further by randomizing physical and dynamic factors that strongly affect dexterous motion: how fast the hand can move, the block’s weight, and the friction between the block and the hand. By learning from this spread of conditions, the controller develops a manipulation policy that remains effective even when real-world properties differ from the simulated ones.
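As a rough illustration of the randomization described above, the sketch below draws a fresh set of environment parameters for each training episode. It is a minimal sketch, not the project's actual code: the names `EnvParams` and `sample_env_params`, and every numeric range, are assumptions chosen only for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvParams:
    """One randomized version of the task (hypothetical structure)."""
    cube_color: tuple          # cosmetic: RGB values in [0, 1]
    background_color: tuple    # cosmetic: RGB values in [0, 1]
    hand_speed_scale: float    # physical: multiplier on how fast the hand can move
    block_mass_kg: float       # physical: weight of the block
    friction: float            # physical: hand-block friction multiplier


def sample_env_params(rng: random.Random) -> EnvParams:
    """Draw one environment variant. All ranges are illustrative assumptions."""
    def rgb():
        return (rng.random(), rng.random(), rng.random())

    return EnvParams(
        cube_color=rgb(),
        background_color=rgb(),
        hand_speed_scale=rng.uniform(0.5, 1.5),
        block_mass_kg=rng.uniform(0.03, 0.3),
        friction=rng.uniform(0.5, 1.5),
    )


# Each training episode would run in a differently parameterized world.
rng = random.Random(0)
episodes = [sample_env_params(rng) for _ in range(1000)]
```

Because the policy never sees the same combination of appearance and physics twice, it cannot rely on any one setting being exact, which is the intuition behind the transfer to real hardware.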

To make that scale possible, the project uses a cloud training system named Rapid. It runs training across thousands of machines to simulate the many environment variants needed for robust learning. The workflow is iterative: rollout workers gather experience from diverse simulated worlds, send that data to an optimizer that updates the model parameters controlling the robot, and then distribute the updated parameters back to the rollout workers for another cycle of data collection and improvement.
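The collect, update, and redistribute cycle can be sketched in a single process as follows. This is a toy stand-in under stated assumptions, not Rapid itself: the "policy" is one number `theta`, the update is plain gradient descent rather than whatever reinforcement learning algorithm the real system uses, and the classes `RolloutWorker` and `Optimizer` are hypothetical names. Only the shape of the loop mirrors the description.

```python
import random


class RolloutWorker:
    """Collects experience from randomized simulated worlds (toy version)."""

    def __init__(self, seed):
        self.rng = random.Random(seed)
        self.theta = 0.0  # local copy of the policy parameter

    def set_params(self, theta):
        self.theta = theta

    def collect(self, n_steps):
        experience = []
        for _ in range(n_steps):
            # Each step uses a freshly randomized friction (assumed range).
            friction = self.rng.uniform(0.5, 1.5)
            # Toy objective: the policy output theta * friction should equal 1.
            error = self.theta * friction - 1.0
            experience.append((friction, error))
        return experience


class Optimizer:
    """Updates the shared parameter from the pooled experience."""

    def __init__(self, lr):
        self.theta = 0.0
        self.lr = lr

    def update(self, batch):
        # Gradient of the mean squared error with respect to theta.
        grad = sum(2.0 * err * f for f, err in batch) / len(batch)
        self.theta -= self.lr * grad
        return self.theta


def train(n_workers=8, n_iters=200, steps_per_worker=16):
    workers = [RolloutWorker(seed=i) for i in range(n_workers)]
    opt = Optimizer(lr=0.1)
    for _ in range(n_iters):
        for w in workers:
            w.set_params(opt.theta)            # distribute current parameters
        batch = [s for w in workers for s in w.collect(steps_per_worker)]
        opt.update(batch)                      # optimizer improves the parameters
    return opt.theta


final_theta = train()
```

In the system described above, the workers would run on thousands of machines and exchange data over a network; here they are ordinary objects called in sequence so the data flow is easy to follow.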

Generalization is a central theme. The same learning framework isn’t limited to rotating one kind of object; it can manipulate other shapes as well, suggesting the learned dexterity is not tied to a single geometry. That contrasts sharply with traditional robotics programming, where a controller might be written as a meticulous set of conditional instructions—mapping specific hand positions to specific finger motions. Here, the system learns those behaviors directly from experience, without additional human-authored rules for each new object configuration.

The practical implication is straightforward: a robot that can reliably rotate blocks under varied physical conditions is a stepping stone toward broader, more complex manipulation tasks. With continued scaling of this approach, the goal is to move beyond today’s hand-programmed robots toward systems that can learn new skills with less bespoke engineering—potentially expanding what robots can do in real-world settings.

Cornell Notes

A human-like robot hand learns dexterous manipulation by training in simulation with reinforcement learning and domain randomization. Instead of optimizing for one fixed environment, training repeatedly changes both appearance and physics (cube and background colors, hand speed limits, block weight, and hand-block friction) so the learned policy stays robust when real conditions differ. A cloud system called Rapid runs this large-scale training across thousands of machines, cycling between rollout workers that collect experience and an optimizer that updates model parameters. The resulting controller generalizes beyond a single object: it can rotate blocks into arbitrary orientations and also manipulate other shapes without extra human-written control logic. This matters because it reduces the need for meticulous, hand-coded controllers and supports learning new tasks more efficiently.

Why does domain randomization matter for transferring dexterous manipulation from simulation to the real world?

What specific factors are randomized during training beyond visual appearance?

How does the Rapid training system fit into the learning loop?

What does “generalization” look like in this dexterity setup?

How does the learned approach differ from traditional hand-programmed robot controllers?

Review Questions

- How do randomized changes to friction and block weight influence what the robot learns compared with training on a single fixed block?

- Describe the role of rollout workers and the optimizer in Rapid’s training cycle. What information moves between them?

- What evidence in the description suggests the learned controller can handle more than one object shape?

Key Points

1. A robot hand learns to rotate a block into arbitrary orientations by using reinforcement learning with simulation and real-world transfer.

2. Domain randomization changes not only visuals (cube and background colors) but also physical parameters like hand speed limits, block weight, and friction.

3. Training across many randomized environments encourages the controller to develop robust manipulation strategies rather than brittle, environment-specific behaviors.

4. Rapid scales simulation-based training by running iterative cycles across thousands of cloud machines.

5. The training loop alternates between rollout workers that collect experience and an optimizer that updates model parameters, which are then redistributed for further learning.

6. The learned manipulation policy generalizes beyond one object type, enabling tasks with other shapes without additional human-written control rules.

7. The approach aims to reduce reliance on meticulous, hand-coded controllers that map specific hand positions to specific finger motions.