Lecture 05: Deployment (FSDL 2022)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

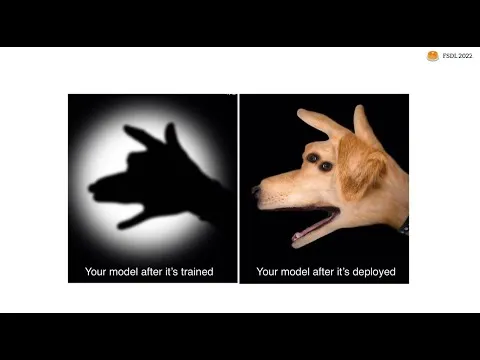


Briefing

Model deployment is where machine learning stops being a lab exercise and starts proving it can solve real user problems—often revealing flaws that offline evaluation misses. The core message is to deploy early and deploy often: start with a minimum viable model in a prototype, get feedback from real inputs, and iterate. That feedback loop matters because many subtle failure modes only show up once users interact with the system and the model’s latency, correctness, and edge cases are tested under real conditions.

The lecture lays out a practical path from prototype to production, emphasizing simplicity first and adding complexity only when scaling demands it. For early prototypes, tools like Gradio (acquired by Hugging Face) and Streamlit let developers wrap a model with a basic UI quickly. A key best practice is to put the prototype behind a shareable web URL rather than running it only on a laptop, so collaborators can test it and latency trade-offs become visible. At this stage, the goal isn’t perfection; it’s a working demo that can be built in hours (or about a day for a first pass) and improved based on user feedback.

Once prototypes outgrow their initial setup, the lecture explains why “model-in-the-web-server” patterns fail: limited UI flexibility, weak scaling for concurrent requests, difficulty redeploying when models change frequently, poor fit for large model inference, and mismatches between typical web hardware and ML workloads (often no GPU). The fix is to separate the model from the UI and choose an appropriate serving pattern.

Two major patterns are contrasted. Batch prediction runs the model offline on scheduled data, writes results to a database, and serves users from that store. It’s easy to implement with existing DAG-style workflow tools (Dagster, Airflow, Prefect) and scales well via databases, but it can’t handle huge or unbounded input spaces and can deliver stale results if features change faster than the batch cadence.
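A minimal sketch of the batch pattern follows. It assumes a hypothetical `toy_model` scoring function, a bounded set of integer user IDs, and SQLite as the prediction store. In practice a scheduler such as Dagster, Airflow, or Prefect would trigger the job. The sketch also shows the two limits named above: inputs that were never scored have no answer, and results older than the refresh window are treated as stale.

```python
import sqlite3
import time


def toy_model(user_id: int) -> float:
    # Hypothetical stand-in for a trained model: any deterministic scoring function.
    return (user_id * 37 % 100) / 100.0


def run_batch_job(conn: sqlite3.Connection, user_ids: list) -> None:
    """Score every known input offline and upsert the results into a predictions table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS predictions ("
        "user_id INTEGER PRIMARY KEY, score REAL, computed_at REAL)"
    )
    now = time.time()
    rows = [(uid, toy_model(uid), now) for uid in user_ids]
    conn.executemany(
        "INSERT OR REPLACE INTO predictions (user_id, score, computed_at) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()


def serve_prediction(conn: sqlite3.Connection, user_id: int, max_age_s: float = 24 * 3600):
    """Look up a precomputed score; return None if it was never computed or is stale."""
    row = conn.execute(
        "SELECT score, computed_at FROM predictions WHERE user_id = ?", (user_id,)
    ).fetchone()
    if row is None:
        return None  # input outside the scored set: batch prediction has no answer
    score, computed_at = row
    if time.time() - computed_at > max_age_s:
        return None  # older than the refresh window allows
    return score
```

Serving is a cheap key lookup, which is why this pattern scales well. The cost is that every answer is only as fresh as the last job run.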

Online model serving runs inference on demand in a dedicated model service. This improves scalability and flexibility (models can be hosted on the right hardware and updated independently) but adds network latency and operational complexity. The lecture then tours the building blocks of a model service: REST APIs (usually JSON over HTTP, although there is no true industry standard for the request schema), dependency management (pinning library versions or using containers), and performance optimization.
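The sketch below shows what a separate model service can look like. It uses only Python's standard-library `http.server` so that it stays self-contained. Because there is no standard request schema, the `/predict` endpoint, the `{"text": ...}` body, and the keyword-rule `predict` function are all hypothetical choices. A production service would more likely use a dedicated web framework or serving tool.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

MODEL_VERSION = "v1"  # hypothetical version tag returned with every prediction


def predict(text: str) -> dict:
    # Hypothetical stand-in model: a keyword rule instead of a trained classifier.
    label = "positive" if "good" in text.lower() else "negative"
    return {"label": label, "model_version": MODEL_VERSION}


class PredictHandler(BaseHTTPRequestHandler):
    """Serves POST /predict with a JSON body of the (assumed) form {"text": "..."}."""

    def do_POST(self):
        if self.path != "/predict":
            self._send_json(404, {"error": "not found"})
            return
        try:
            length = int(self.headers.get("Content-Length", 0))
            payload = json.loads(self.rfile.read(length))
            text = payload["text"]
            if not isinstance(text, str):
                raise TypeError("'text' must be a string")
        except (ValueError, KeyError, TypeError) as exc:
            # ValueError covers malformed JSON, bad encodings, and a bad Content-Length.
            self._send_json(400, {"error": f"bad request: {exc}"})
            return
        self._send_json(200, predict(text))

    def _send_json(self, status: int, body: dict) -> None:
        data = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def log_message(self, format, *args):
        pass  # keep the sketch quiet; a real service would log requests


def make_server(host: str = "127.0.0.1", port: int = 8000) -> ThreadingHTTPServer:
    # ThreadingHTTPServer handles each request in its own thread.
    return ThreadingHTTPServer((host, port), PredictHandler)
```

To try it, call `make_server().serve_forever()` and POST `{"text": "a good model"}` to `http://127.0.0.1:8000/predict`. The UI then only needs to make an HTTP call, so the model can be redeployed or moved to different hardware without touching the front end.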

Performance guidance starts with whether to use GPUs at all, and stresses that training on GPUs doesn't automatically mean inference needs them. On CPUs, concurrency and thread tuning can deliver high throughput. Caching, quantization, and model distillation can cut cost and latency on either kind of hardware. Batching matters most on GPUs, whose throughput depends on processing many inputs in parallel. The lecture also highlights horizontal scaling via load balancing, using container orchestration (Kubernetes) or serverless approaches (e.g., AWS Lambda), each with trade-offs around cold starts, package size limits, and GPU availability.

Finally, the lecture covers rollouts (gradual traffic shifting, instant rollback, splitting traffic between versions for A/B tests, and shadowing live traffic to a new version so it can be validated before it serves users) and managed options such as AWS SageMaker, plus an advanced path: edge deployment. Edge prediction can cut latency and improve privacy by keeping data on-device, but it adds complexity around model size, hardware constraints, model update strategies, debugging difficulty, and tool maturity. The closing guidance ties everything together: deploy early, keep it simple, separate the model from the UI when scaling breaks, use managed services when possible, and move to the edge only when latency, privacy, or connectivity requirements truly demand it.
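The rollout mechanics can be illustrated with a small router. This is a sketch under assumptions: the `Router` class, its `canary_fraction` and `shadow` fields, and hash-based bucketing are illustrative choices, not any particular platform's API. Raising `canary_fraction` shifts traffic gradually. `rollback()` sends everything back to the stable version at once. Shadow mode scores requests with the candidate but only logs its output.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Router:
    """Route requests between a stable and a candidate model version (hypothetical design)."""

    stable: Callable[[str], str]
    candidate: Callable[[str], str]
    canary_fraction: float = 0.0  # share of traffic (0.0 to 1.0) served by the candidate
    shadow: bool = False  # if True, the candidate also scores stable-routed requests
    shadow_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def _bucket(self, request_id: str) -> float:
        # Deterministic hash, so the same user always lands on the same version.
        # Using 48 bits keeps the division exact, so buckets stay in [0, 1).
        digest = hashlib.sha256(request_id.encode("utf-8")).digest()
        return int.from_bytes(digest[:6], "big") / 2**48

    def handle(self, request_id: str, x: str) -> str:
        if self._bucket(request_id) < self.canary_fraction:
            return self.candidate(x)
        result = self.stable(x)
        if self.shadow:
            # The candidate's output is recorded for comparison, never returned to the user.
            self.shadow_log.append((x, result, self.candidate(x)))
        return result

    def rollback(self) -> None:
        # Instant rollback: all traffic returns to the stable version.
        self.canary_fraction = 0.0
```

In a real system the shadow call would run asynchronously so that it cannot add latency to the user-facing response.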

Cornell Notes

Deployment tests whether offline metrics translate into real-world performance. The lecture pushes a "deploy early, deploy often" workflow: build a prototype with Gradio or Streamlit, share it via a web URL, collect user feedback, and iterate. When prototypes hit their limits, separate the model from the UI, either with batch prediction (offline jobs writing results to a database) or with online model services (on-demand inference behind REST APIs). Production serving then depends on dependency management (often via containers), performance tactics (CPU concurrency, batching, caching, quantization, distillation), and safe rollouts (gradual traffic shifts, rollback, shadow testing). For extreme latency or privacy needs, move inference to the edge using device-specific frameworks, but expect added complexity in updates, debugging, and hardware constraints.

Why does deployment often uncover problems that offline evaluation misses?

When should a team choose batch prediction instead of online serving?

What are the main drawbacks of embedding the model directly inside a web server/UI?

How do dependency management and containers reduce deployment risk?

Which performance levers matter most, and why might CPU inference be enough?

What makes edge deployment fundamentally different from web deployment?

Review Questions

- What decision criteria determine whether batch prediction or online model serving is the better fit for a product?

- Describe two ways dependency drift can break model correctness in production and how containers mitigate that risk.

- List three performance optimization techniques mentioned for faster inference and explain one key trade-off for each.

Key Points

1. Deploy early and deploy often to validate real user usefulness, since offline evaluation can miss failures that appear only under production inputs and latency constraints.

2. Prototype with Gradio or Streamlit, but share via a web URL to gather feedback and expose latency trade-offs before committing to a production architecture.

3. Avoid running large or frequently changing models inside the same web server/UI code path; separate the model from the UI when scaling, redeploy frequency, or hardware fit becomes a problem.

4. Use batch prediction when inputs are bounded and scheduled refresh is acceptable; use online model services when users need customized, on-demand predictions.

5. Treat dependency management as part of the model: pin versions or package inference with containers to prevent preprocessing/library mismatches from changing outputs.

6. Optimize inference with the right mix of concurrency (CPU), caching, batching (GPU), and model compression (quantization, distillation) rather than defaulting to GPUs.

7. For production safety, implement rollouts with gradual traffic shifting, instant rollback, and shadow testing to validate new model versions before full exposure.
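Key point 5 can be made concrete with a startup guard that refuses to serve when installed library versions differ from the ones the model was validated with. The sketch below assumes hypothetical pins in `PINNED_VERSIONS` and uses `importlib.metadata` from the standard library. A container image or lock file remains the primary way to freeze the environment. This check only catches drift when the service starts.

```python
from importlib import metadata

# Hypothetical pins: the exact versions the model was trained and validated with.
PINNED_VERSIONS = {"numpy": "1.26.4", "scikit-learn": "1.4.2"}


def find_dependency_drift(pins):
    """Return {package: (expected, installed)} for each pin that is missing or mismatched."""
    drift = {}
    for name, expected in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None  # package absent from this environment
        if installed != expected:
            drift[name] = (expected, installed)
    return drift


def assert_no_drift(pins=PINNED_VERSIONS):
    """Fail fast at service startup instead of silently serving with different libraries."""
    drift = find_dependency_drift(pins)
    if drift:
        raise RuntimeError(f"dependency drift detected: {drift}")
```

Calling `assert_no_drift()` before the server starts turns a silent change in outputs into a visible deployment failure.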