Lecture 06: Continual Learning (FSDL 2022)

Based on The Full Stack's video on YouTube.

Briefing

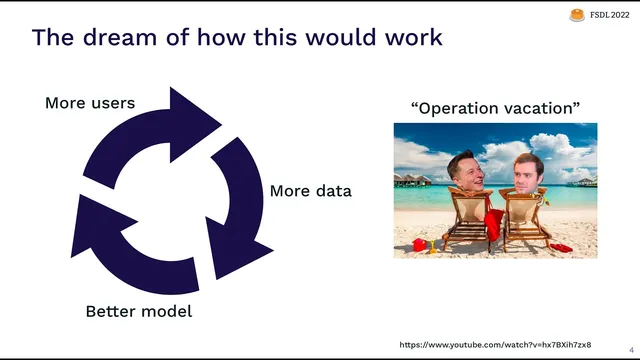

Continual learning in production is less about “retraining whenever something feels off” and more about running a structured retraining strategy that can adapt to a continuous stream of real-world data. The core problem is that production models rarely have reliable, end-to-end measurement: teams often rely on spot checks until users complain or business metrics dip, at which point fixes become ad hoc (SQL queries, notebooks, emergency retraining, and another round of limited validation). The lecture argues that this is why continual learning remains one of the least mature parts of the production ML lifecycle, and it proposes a clearer way to design it: treat retraining as an outer loop with explicit rules for logging, data curation, retraining triggers, dataset formation, offline testing, and online testing.

At the center is the idea of a “retraining strategy,” a bundle of decisions that governs every stage of the loop. Logging determines what data from an infinite stream gets stored for later analysis. Curation decides which unlabeled (or weakly labeled) production data gets prioritized for labeling and potential retraining, producing a finite reservoir of candidate training points. Retraining triggers specify when the system should retrain. Dataset formation selects which subset of the reservoir feeds a particular training job. Offline testing defines what “good enough” means for stakeholders, typically via a sign-off report comparing the candidate model to the previous one across key metrics and slices. Online testing then validates that the deployment succeeded, often via shadow mode, A/B tests, or gradual rollout, with rollback if needed.
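One way to make the "bundle of decisions" concrete is to write each stage as a pluggable rule. The sketch below is only an illustration: the class name `RetrainingStrategy`, its field names, the signal key `days_since_last_train`, the record key `age_days`, and the 1,000-item labeling cap are all assumptions made for this example, not something the lecture prescribes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Record = Dict[str, object]  # hypothetical shape of one logged production example


@dataclass
class RetrainingStrategy:
    """Hypothetical container with one rule per stage of the retraining loop."""

    should_log: Callable[[Record], bool]                  # logging
    curate: Callable[[List[Record]], List[Record]]        # curation for labeling
    should_retrain: Callable[[Dict[str, float]], bool]    # retraining trigger
    form_dataset: Callable[[List[Record]], List[Record]]  # dataset formation
    passes_offline: Callable[[Dict[str, float], Dict[str, float]], bool]  # offline test
    # Online testing is represented here as an ordered list of rollout stages.
    rollout_stages: List[str] = field(
        default_factory=lambda: ["shadow", "ab_test", "gradual_rollout"]
    )


# A baseline instance: log everything, label the first 1,000 items,
# retrain weekly on the last 30 days, and require no drop in accuracy.
baseline = RetrainingStrategy(
    should_log=lambda record: True,
    curate=lambda records: records[:1000],
    should_retrain=lambda signals: signals.get("days_since_last_train", 0) >= 7,
    form_dataset=lambda reservoir: [r for r in reservoir if r.get("age_days", 0) <= 30],
    passes_offline=lambda candidate, previous: candidate["accuracy"] >= previous["accuracy"],
)
```

Keeping the rules as separate fields means a single stage (say, the trigger) can be swapped out without touching the others, which matches the idea of tuning the strategy rather than the model.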

Once the first model ships, the lecture reframes the ML engineer’s job: not constant model retraining, but “babysitting” and improving the retraining strategy itself using monitoring and observability. That means tuning the rules that decide what to log, what to sample, what to test, and when to retrain—based on signals that indicate whether the system is improving over time despite changes in the world.

A baseline strategy is periodic retraining: log everything (or as much as feasible), sample uniformly for labeling up to capacity, retrain on a fixed cadence (e.g., weekly using the last month of data), and validate via offline accuracy thresholds plus manual spot checks. But periodic retraining can fail when data volume exceeds logging/labeling capacity (especially with long-tail edge cases or expensive human-in-the-loop labeling), when retraining costs are high, or when the cost of bad predictions makes frequent retraining too risky.
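The trigger and labeling rules of the periodic baseline can be sketched in a few lines. This is a minimal illustration using only the standard library; the function names `due_for_retraining` and `sample_for_labeling`, the seven-day default, and the `seed` parameter are assumptions introduced here.

```python
import random
from datetime import datetime, timedelta
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def due_for_retraining(
    last_trained: datetime,
    now: datetime,
    cadence: timedelta = timedelta(days=7),
) -> bool:
    """Fixed-cadence trigger: retrain once `cadence` has elapsed."""
    return now - last_trained >= cadence


def sample_for_labeling(
    logged: Sequence[T],
    capacity: int,
    seed: Optional[int] = None,
) -> List[T]:
    """Uniformly sample logged items for labeling, up to labeling capacity."""
    if len(logged) <= capacity:
        return list(logged)  # everything fits within the labeling budget
    rng = random.Random(seed)
    return rng.sample(list(logged), capacity)
```

Uniform sampling is the weak point the paragraph above describes: when rare edge cases matter, a uniform draw under a tight `capacity` will mostly miss them.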

To iterate beyond the baseline, the lecture emphasizes monitoring signals ranked by usefulness: user outcome/feedback first, then model performance metrics, then proxy metrics (domain-specific indicators correlated with failure), followed by data quality and distribution drift. Distribution drift is important for debugging but ranked lower because models can be robust to shifts in input distributions; drift alerts don’t necessarily indicate degraded performance. The monitoring mindset borrows from observability: measure “known unknowns” with key metrics, and retain raw context so that “unknown unknowns” can be investigated in deep dives.
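As an illustration of the lowest-ranked signal, drift on a single numeric feature (or a one-dimensional projection) can be measured by comparing empirical distributions. The sketch below computes the two-sample Kolmogorov-Smirnov statistic in pure Python; the function name `ks_statistic` is an assumption, and the choice of this statistic is ours rather than something the lecture specifies.

```python
from bisect import bisect_right
from typing import Sequence


def ks_statistic(reference: Sequence[float], production: Sequence[float]) -> float:
    """Largest absolute gap between the empirical CDFs of two samples.

    Returns a value in [0, 1]: 0 means identical empirical distributions,
    1 means the samples do not overlap at all.
    """
    if len(reference) == 0 or len(production) == 0:
        raise ValueError("both samples must be non-empty")
    ref = sorted(reference)
    prod = sorted(production)
    gap = 0.0
    # The maximum gap between two step functions occurs at an observed value.
    for x in set(ref) | set(prod):
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_prod = bisect_right(prod, x) / len(prod)
        gap = max(gap, abs(cdf_ref - cdf_prod))
    return gap
```

A large statistic is best read as a cue to check the higher-ranked signals (user feedback, model metrics), not as proof that the model has degraded. If SciPy is available, `scipy.stats.ks_2samp` computes the same statistic along with a p-value.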

Finally, the lecture connects monitoring to data curation and retraining: the same tools (projections, uncertainty, cohort analysis, and feedback loops) help decide what data to label and what to test. It closes with a concrete workflow: an alert (e.g., user feedback worsens), subgroup investigation (e.g., new users), error analysis (e.g., emojis), strategy updates (new cohorts, new projections, new test cases), retraining, and a new model sign-off. This turns continual learning into a repeatable improvement loop rather than a cycle of surprises.
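The subgroup-investigation step of that workflow can be sketched as a simple cohort breakdown of a feedback signal. Everything named here is hypothetical: the `(cohort, is_negative)` event shape, the function names, and the 0.1 margin are assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def negative_rate_by_cohort(events: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of negative-feedback events within each cohort."""
    totals: Dict[str, int] = defaultdict(int)
    negatives: Dict[str, int] = defaultdict(int)
    for cohort, is_negative in events:
        totals[cohort] += 1
        if is_negative:
            negatives[cohort] += 1
    return {cohort: negatives[cohort] / totals[cohort] for cohort in totals}


def cohorts_to_investigate(
    rates: Dict[str, float],
    overall_rate: float,
    margin: float = 0.1,
) -> List[str]:
    """Cohorts whose negative rate exceeds the overall rate by more than `margin`."""
    return sorted(c for c, rate in rates.items() if rate > overall_rate + margin)


# Usage: new users give negative feedback far more often than returning users.
events = (
    [("new_users", True)] * 3 + [("new_users", False)]
    + [("returning", True)] + [("returning", False)] * 3
)
rates = negative_rate_by_cohort(events)
overall = sum(is_negative for _, is_negative in events) / len(events)
flagged = cohorts_to_investigate(rates, overall)
```

A flagged cohort is a natural candidate for both error analysis and prioritized labeling, which is the sense in which monitoring and curation share tools.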

Cornell Notes

Continual learning is framed as an outer loop around a production model: log production data, curate it into a labeled reservoir, decide when to retrain, form datasets for each training run, validate candidates offline, and confirm success online before rollout. The lecture’s key move is defining a “retraining strategy” as explicit rules for each stage, then using monitoring and observability to tune those rules over time. User feedback is treated as the most valuable signal, with model metrics and proxy metrics following when direct outcomes aren’t available. Distribution drift is useful for debugging but not sufficient on its own because models can remain accurate even when input distributions shift. The practical recommendation is to start simple (periodic retraining) and add automation and smarter sampling only after measurement and evaluation become reliable.
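The offline validation step can be illustrated with a small sign-off report that compares a candidate model to the previous one slice by slice. This is a sketch under stated assumptions: the names `SliceResult`, `sign_off_report`, and `approved`, the per-slice accuracy dictionaries, and the 0.01 regression tolerance are all hypothetical.

```python
from typing import Dict, List, NamedTuple


class SliceResult(NamedTuple):
    slice_name: str
    previous: float
    candidate: float
    passed: bool


def sign_off_report(
    previous: Dict[str, float],
    candidate: Dict[str, float],
    max_regression: float = 0.01,
) -> List[SliceResult]:
    """Compare per-slice metrics; a slice passes unless it regresses too far."""
    report = []
    for name in sorted(previous):
        if name not in candidate:
            raise KeyError(f"candidate is missing metric for slice {name!r}")
        prev, cand = previous[name], candidate[name]
        report.append(SliceResult(name, prev, cand, cand >= prev - max_regression))
    return report


def approved(report: List[SliceResult]) -> bool:
    """The candidate is signed off only if every slice passed."""
    return all(result.passed for result in report)


# Usage: the candidate improves overall but regresses on the new-user slice.
previous_metrics = {"overall": 0.90, "new_users": 0.80}
candidate_metrics = {"overall": 0.92, "new_users": 0.70}
report = sign_off_report(previous_metrics, candidate_metrics)
```

Checking slices separately is the point of the design: an aggregate metric can improve while an important cohort gets worse.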

What does a “retraining strategy” include, and why is it central to continual learning?

Why is user feedback ranked above offline accuracy for monitoring?

When does periodic retraining work well, and what are its main failure modes?

Why is distribution drift monitoring ranked lower than other signals?

How do projections help with monitoring and drift detection for high-dimensional data?

What are the main options for selecting data during retraining (dataset formation)?

Review Questions

- Which components of a retraining strategy determine what data is stored, what data is labeled, when retraining happens, and how “good enough” is decided?

- Give an example of a proxy metric for a specific ML task and explain what failure it would catch.

- Why might distribution drift alerts fail to predict user-facing degradation, and what signal would be more reliable?

Key Points

1. Continual learning should be implemented as a structured retraining strategy with explicit rules for logging, curation, triggers, dataset formation, offline testing, and online testing.

2. User feedback/outcome signals are the most valuable monitoring metrics because they reflect product impact and avoid loss mismatch.

3. Periodic retraining is a reasonable starting point, but it breaks down with long-tail/rare events, high retraining costs, or high risk from bad predictions.

4. Monitoring should follow an observability mindset: retain raw context for deep debugging, measure known unknowns with key metrics, and support unknown unknowns with flexible analysis.

5. Distribution drift is useful for debugging but not sufficient as a primary intervention signal because models can remain accurate despite input distribution changes.

6. Data curation and monitoring are two sides of the same coin: cohort analysis, projections, uncertainty, and feedback loops can drive both what to label and what to investigate.

7. Dataset formation choices (all-data, sliding windows, sampling/online batch selection, or continual fine-tuning) trade off recency, coverage, cost, and forgetting risk.
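The dataset formation options in the last key point can be sketched as small selection functions over the labeled reservoir. The `Example` type, its `day` field, and the function names are assumptions made for this illustration; real reservoirs would carry proper timestamps and labels.

```python
import random
from typing import List, NamedTuple, Optional


class Example(NamedTuple):
    day: int       # hypothetical timestamp: days since logging started
    payload: str   # stand-in for features and label


def all_data(reservoir: List[Example]) -> List[Example]:
    """Train on everything: maximum coverage, highest cost."""
    return list(reservoir)


def sliding_window(reservoir: List[Example], today: int, window_days: int) -> List[Example]:
    """Train on the most recent `window_days` days only: favors recency."""
    return [ex for ex in reservoir if today - window_days < ex.day <= today]


def uniform_sample(reservoir: List[Example], k: int, seed: Optional[int] = None) -> List[Example]:
    """Train on a random subset of at most k examples: caps cost, keeps some old data."""
    rng = random.Random(seed)
    return rng.sample(reservoir, min(k, len(reservoir)))


def new_since(reservoir: List[Example], last_trained_day: int) -> List[Example]:
    """Data for continual fine-tuning: only examples logged after the last run."""
    return [ex for ex in reservoir if ex.day > last_trained_day]


# Usage on a ten-day reservoir with one example per day.
reservoir = [Example(day, f"x{day}") for day in range(10)]
recent = sliding_window(reservoir, today=9, window_days=3)
fresh = new_since(reservoir, last_trained_day=7)
```

The narrower the selection, the cheaper the training run, and the greater the risk of forgetting what older data taught the model.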