Lecture 11B: Monitoring ML Models (Full Stack Deep Learning - Spring 2021)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Deployed ML models often degrade due to changes in p(x), changes in p(y|x), and sampling artifacts like long-tail undercoverage.

Briefing

Monitoring deployed machine learning models is about catching silent performance decay—often driven by changes in data, user behavior, or sampling artifacts—before it turns into revenue loss. After deployment, model quality rarely stays fixed: input distributions shift (data drift), the relationship between inputs and outcomes changes (model or concept drift), and the way training data was sampled can leave blind spots (domain shift/long-tail gaps). The practical takeaway is that “ship and forget” fails because ML systems degrade through subtle, hard-to-detect changes rather than loud crashes.

The lecture frames drift through three probability-level failure modes. First, p(x) can change: upstream pipelines may redefine features or introduce bugs (e.g., a feature suddenly becoming all -1), malicious users can feed adversarial inputs, and new regions or user demographics can alter the input mix. Second, p(y|x) can change, often because user behavior adapts to the model, especially in recommenders, where clicks reshape future preferences. Third, sampling artifacts can matter: long-tail events that occur rarely but are costly when wrong may be underrepresented in the training data, and biased sampling can systematically exclude critical cases.
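To make the first two failure modes concrete, here is a toy simulation. Everything in it is synthetic: the threshold labeling rule, the Gaussian inputs, and the function names are illustrative assumptions, not taken from the lecture. A fixed model is scored on data where only p(x) moves and on data where only p(y|x) moves.

```python
import random
from statistics import mean


def true_label(x: float, threshold: float) -> int:
    # The labeling rule plays the role of p(y|x); moving the threshold is concept drift.
    return 1 if x > threshold else 0


def model(x: float) -> int:
    # A fixed "trained" model that learned the rule while the threshold was 0.0.
    return 1 if x > 0.0 else 0


def sample(n: int, x_mean: float, threshold: float, seed: int):
    # Inputs x ~ N(x_mean, 1) play the role of p(x); labels follow the current rule.
    rng = random.Random(seed)
    xs = [rng.gauss(x_mean, 1.0) for _ in range(n)]
    ys = [true_label(x, threshold) for x in xs]
    return xs, ys


def accuracy(xs, ys) -> float:
    return mean(1.0 if model(x) == y else 0.0 for x, y in zip(xs, ys))


scenarios = {
    "no drift": sample(10_000, x_mean=0.0, threshold=0.0, seed=0),
    "p(x) shift": sample(10_000, x_mean=2.0, threshold=0.0, seed=1),
    "p(y|x) shift": sample(10_000, x_mean=0.0, threshold=1.0, seed=2),
}

for name, (xs, ys) in scenarios.items():
    # Input statistics need no labels; accuracy needs ground truth.
    print(f"{name:<13} input mean={mean(xs):+.2f}  accuracy={accuracy(xs, ys):.3f}")
```

In this toy the model happens to match the original labeling rule everywhere, so the p(x) shift is visible in the input statistics but harmless, while the p(y|x) shift leaves the inputs unchanged and still drops accuracy to roughly two thirds. Real models rarely extrapolate this well, so a p(x) shift often does hurt; the point is only that the two failure modes surface in different signals.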

Drift isn’t just theoretical; it shows up in production. During the pandemic, widespread distribution shifts caused many models to drift, leading to unexpected behavior. In one e-commerce-style case, a retraining pipeline bug repeatedly served the same recommendations, driving churn and costing millions over a month or two before detection. These examples motivate a structured monitoring plan.

The lecture lays out what to monitor using four signal types, ordered by how directly they reflect model performance and how hard they are to obtain. The most informative is ground-truth model performance, but labels are often delayed or expensive. Business metrics (click-through rate, engagement) are easier to measure but can be confounded by many factors unrelated to accuracy. Input and prediction distributions help detect drift without labels, though measuring them well takes care. System health metrics (GPU utilization, latency) are straightforward but only catch coarse failures like crashes or memory leaks.
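Part of why ground-truth performance is hard to obtain is that labels arrive late and only for some predictions. A minimal sketch of that join, assuming a hypothetical prediction log keyed by `request_id` and a hypothetical label store that maps each id to `(label, arrival_ts)`; the field names and the `min_age` cutoff are illustrative, not part of any particular tool:

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    request_id: str
    ts: float  # hypothetical field: prediction time, in hours
    pred: int


def delayed_accuracy(predictions, labels, now, min_age):
    """Accuracy on predictions old enough that their labels could have arrived.

    `labels` maps request_id -> (label, arrival_ts); this layout is hypothetical.
    Returns (accuracy or None, coverage), where coverage is the labeled fraction.
    """
    eligible = [p for p in predictions if now - p.ts >= min_age]
    joined = [
        (p, labels[p.request_id][0])
        for p in eligible
        if p.request_id in labels and labels[p.request_id][1] <= now
    ]
    coverage = len(joined) / len(eligible) if eligible else 0.0
    accuracy = sum(p.pred == y for p, y in joined) / len(joined) if joined else None
    return accuracy, coverage


preds = [
    Prediction("a", ts=0, pred=1),
    Prediction("b", ts=1, pred=0),
    Prediction("c", ts=2, pred=1),
    Prediction("d", ts=20, pred=1),  # too recent to expect a label yet
]
labels = {"a": (1, 5), "b": (1, 20), "c": (1, 50)}  # "c" is labeled after `now`

acc, cov = delayed_accuracy(preds, labels, now=24, min_age=12)
print(f"accuracy={acc}, coverage={cov:.2f}")
```

Reporting `coverage` next to accuracy makes it visible when the metric rests on only a small, possibly unrepresentative, fraction of traffic.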

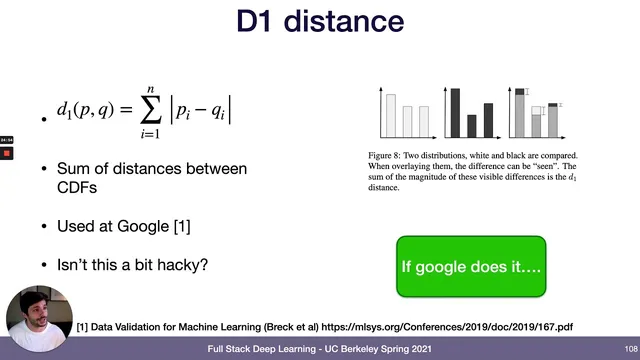

To measure change, monitoring systems compare a “reference” distribution to a “current” window. A pragmatic default is to use training/evaluation data as the reference. Windowing can be fixed (e.g., last day) or sliding (e.g., last hour); in the special case where the window holds a single data point, drift detection becomes outlier detection. Among distance measures, rule-based data quality checks (ranges, missingness, ordering constraints) are recommended as a strong first layer because they catch many issues and are easier to operationalize. Of the statistical distances, the KS statistic is favored for interpretability, while KL divergence is discouraged because it is sensitive to the tails, asymmetric, and prone to numerical pitfalls.
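A minimal sketch of both layers in plain Python: rule-based checks (range, missingness, ordering) and the two-sample KS statistic, computed over sliding windows of a production stream against a fixed reference. The field names (`age`, `signup_ts`, `last_login_ts`), the window sizes, and the synthetic stream with an upstream bug that writes -1 are all hypothetical.

```python
import random


def rule_checks(batch):
    """Rule-based data quality checks on hypothetical fields; returns (row, message) pairs."""
    violations = []
    for k, row in enumerate(batch):
        age = row.get("age")
        if age is None:
            violations.append((k, "age is missing"))
        elif not 0 <= age <= 120:
            violations.append((k, f"age out of range: {age}"))
        signup, login = row.get("signup_ts"), row.get("last_login_ts")
        if signup is not None and login is not None and login < signup:
            violations.append((k, "last_login_ts precedes signup_ts"))
    return violations


def ks_statistic(reference, current):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    i = j = 0
    d = 0.0
    while i < len(ref) and j < len(cur):
        x = min(ref[i], cur[j])
        # Advance both samples past x so ties are handled together.
        while i < len(ref) and ref[i] <= x:
            i += 1
        while j < len(cur) and cur[j] <= x:
            j += 1
        d = max(d, abs(i / len(ref) - j / len(cur)))
    return d


def sliding_windows(stream, size, step):
    # Yield (start index, window) pairs over the production stream.
    for start in range(0, len(stream) - size + 1, step):
        yield start, stream[start:start + size]


rng = random.Random(0)
# Reference: feature values from training/evaluation data (synthetic here).
reference_ages = [rng.uniform(18, 80) for _ in range(2_000)]
# Production stream: healthy traffic, then an upstream bug that writes -1.
stream = [{"age": rng.uniform(18, 80)} for _ in range(1_000)]
stream += [{"age": -1} for _ in range(500)]

results = []
for start, window in sliding_windows(stream, size=500, step=250):
    n_bad = len(rule_checks(window))
    ages = [row["age"] for row in window if row.get("age") is not None]
    ks = ks_statistic(reference_ages, ages)
    results.append((start, n_bad, ks))
    print(f"window@{start:>4}: rule violations={n_bad:>3}  KS={ks:.3f}")
```

Both the range rule and the KS statistic flag the bug, but the rule also says exactly what is wrong, which is part of why rules make a good first layer. In practice `scipy.stats.ks_2samp` computes the same statistic along with a p-value. With a window of a single record the KS statistic stops being meaningful; that limiting case is the outlier-detection problem.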

Deciding whether a detected change is “bad” remains less settled. Statistical tests can produce tiny p-values with large datasets even for negligible shifts, so teams often rely on thresholds, anomaly detection, and manual rule-setting. Tooling is emerging across three categories: system monitoring (e.g., CloudWatch), data quality frameworks (e.g., Great Expectations), and ML monitoring platforms (e.g., Arize, Arthur, Fiddler).
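The large-sample p-value problem is easy to reproduce. This sketch draws samples from two Gaussians whose means differ by a negligible 0.05 standard deviations and reports the KS statistic together with its asymptotic p-value (the standard Kolmogorov series approximation, not an exact test) as the sample size grows. The `DRIFT_THRESHOLD` effect-size cutoff is a hypothetical value chosen for illustration.

```python
import math
import random


def ks_statistic(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d


def ks_pvalue(d, n, m):
    """Asymptotic two-sided p-value from the Kolmogorov distribution series."""
    lam = math.sqrt(n * m / (n + m)) * d
    if lam < 0.2:
        return 1.0  # the series equals 1 to many decimals here and converges slowly
    s = sum((-1) ** (k - 1) * math.exp(-2.0 * k * k * lam * lam) for k in range(1, 101))
    return min(1.0, max(0.0, 2.0 * s))


DRIFT_THRESHOLD = 0.1  # hypothetical effect-size cutoff on the KS statistic
SHIFT = 0.05           # mean shift in standard deviations: negligible in practice

rng = random.Random(0)
results = []
for n in (1_000, 10_000, 100_000):
    reference = [rng.gauss(0.0, 1.0) for _ in range(n)]
    current = [rng.gauss(SHIFT, 1.0) for _ in range(n)]
    d = ks_statistic(reference, current)
    p = ks_pvalue(d, n, n)
    results.append((n, d, p))
    print(f"n={n:>7}  KS={d:.4f}  p={p:.2e}  alert={d > DRIFT_THRESHOLD}")
```

As n grows, the KS statistic settles near the true (tiny) distance of about 0.02 while the p-value collapses toward zero, so an alert keyed on p-values would eventually fire on any shift at all. Thresholding the statistic itself does not have that property, although choosing the threshold is still a judgment call.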

Finally, monitoring should be integrated into the broader ML lifecycle, not bolted on. The lecture argues for an “evaluation store” that ties monitoring to offline evaluation and training—helping close the data flywheel by guiding what to label, how to oversample low-performing regions, and when retraining is worth the compute and data cost. The field still lacks mature research on linking drift scores to actual performance impact, leaving major open problems for reliable ML in the real world.
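One way an evaluation store could feed the data flywheel is by scoring logged predictions per slice and steering labeling toward the weakest slices. A minimal sketch, assuming hypothetical records with `region`, `pred`, and `label` fields and a simple error-proportional sampling policy; this is one possible policy, not the lecture's prescription.

```python
import random
from collections import defaultdict


def slice_accuracy(records, key):
    """Accuracy per slice (e.g. per region) from logged predictions joined with labels."""
    hits, totals = defaultdict(int), defaultdict(int)
    for r in records:
        totals[r[key]] += 1
        hits[r[key]] += int(r["pred"] == r["label"])
    return {s: hits[s] / totals[s] for s in totals}


def labeling_weights(acc_by_slice, floor=0.05):
    """Hypothetical policy: weight each slice by its error rate, with a small floor."""
    raw = {s: max(1.0 - acc, floor) for s, acc in acc_by_slice.items()}
    total = sum(raw.values())
    return {s: w / total for s, w in raw.items()}


def pick_for_labeling(unlabeled, key, weights, k, seed=0):
    """Draw k examples (with replacement), each with probability proportional to its slice weight."""
    rng = random.Random(seed)
    return rng.choices(unlabeled, weights=[weights.get(x[key], 0.0) for x in unlabeled], k=k)


logged = (
    [{"region": "US", "pred": 1, "label": 1}] * 90
    + [{"region": "US", "pred": 1, "label": 0}] * 10
    + [{"region": "BR", "pred": 1, "label": 1}] * 30
    + [{"region": "BR", "pred": 0, "label": 1}] * 20
)
acc = slice_accuracy(logged, "region")
weights = labeling_weights(acc)
unlabeled = [{"region": "US"}] * 50 + [{"region": "BR"}] * 50
picked = pick_for_labeling(unlabeled, "region", weights, k=1_000)
print(acc, weights, sum(x["region"] == "BR" for x in picked))
```

Here the weaker `BR` slice receives 80% of the sampling weight, so it is oversampled for labeling; whether the resulting data justifies retraining remains a separate compute-and-cost decision.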

Cornell Notes

Deployed ML models commonly degrade because the world changes after training. The lecture breaks drift into three probability-level failures: p(x) changes (data drift), p(y|x) changes (model/concept drift), and sampling artifacts leave gaps such as long-tail undercoverage. Monitoring should prioritize signals that best approximate performance—ground-truth metrics when labels exist, business metrics when they don’t, and input/prediction distribution checks as a drift proxy—while system metrics mainly catch infrastructure failures. Change detection typically compares a reference window (often training/eval data) to a current window using rule-based checks and selected distance metrics like the KS statistic (while avoiding KL divergence for shift detection). The long-term goal is tighter integration between monitoring and training so the system can guide labeling, oversampling, and retraining decisions to close the data flywheel.
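The KL pitfalls show up even on a five-bin histogram. In this sketch (synthetic histograms; the smoothing epsilons are arbitrary choices), 1% of current traffic lands in a bin that was empty in the reference:

```python
import math


def kl_divergence(p, q):
    """KL(P || Q) for discrete distributions given as equal-length probability lists."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue  # 0 * log(0 / q) is taken to be 0
        if qi == 0:
            return math.inf  # P puts mass where Q has none
        total += pi * math.log(pi / qi)
    return total


def cdf_gap(p, q):
    """KS-style distance on binned data: largest gap between the cumulative sums."""
    cp = cq = d = 0.0
    for pi, qi in zip(p, q):
        cp += pi
        cq += qi
        d = max(d, abs(cp - cq))
    return d


def smooth(p, eps):
    # Common workaround: add eps to every bin, then renormalize.
    s = [pi + eps for pi in p]
    z = sum(s)
    return [x / z for x in s]


reference = [0.25, 0.25, 0.25, 0.25, 0.0]  # training histogram; tail bin empty
current = [0.24, 0.25, 0.25, 0.25, 0.01]   # 1% of traffic now lands in the tail bin

print("KL(current || reference) =", kl_divergence(current, reference))
print("KL(reference || current) =", kl_divergence(reference, current))
print("CDF gap (KS-style)       =", cdf_gap(reference, current))
smoothed_kl = [kl_divergence(current, smooth(reference, eps)) for eps in (1e-3, 1e-6, 1e-9)]
print("smoothed KL for eps 1e-3, 1e-6, 1e-9:", smoothed_kl)
```

KL(current || reference) is infinite, the reverse direction is about 0.01, and the usual epsilon-smoothing fix produces a value driven mostly by the chosen epsilon. The cumulative-gap distance is 0.01 and reads directly as the share of probability mass that moved.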

What are the main ways deployed ML systems fail after training, and how do they map to probability distributions?

Why are labels and business metrics not enough for reliable monitoring?

How should monitoring systems choose reference data and measurement windows?

Which distance metrics and rule-based checks are recommended for detecting distribution change, and why?

How do teams decide whether a detected drift is actually harmful?

What does “closing the data flywheel” mean for monitoring, and how does it change the role of an evaluation store?

Review Questions

- How do data drift, model drift (concept drift), and sampling artifacts differ in terms of what probability distribution changes?

- Why might the KS statistic be more useful than KL divergence for monitoring distribution shift in production?

- What are the trade-offs among ground-truth performance metrics, business metrics, and input/prediction distribution monitoring?

Key Points

1. Deployed ML models often degrade due to changes in p(x), changes in p(y|x), and sampling artifacts like long-tail undercoverage.

2. Monitoring should balance four signal types: ground-truth performance (best but label-heavy), business metrics (easier but confounded), input/prediction distributions (label-light drift proxy), and system health metrics (coarse infrastructure signals).

3. Use a reference window (often training/evaluation data) and compare it to a current sliding window to detect distribution change.

4. Start with rule-based data quality checks because they are easier to operationalize and catch many real bugs; layer statistical distances like the KS statistic when appropriate.

5. Avoid using KL divergence as the primary shift metric because it’s tail-sensitive, asymmetric, and can behave poorly when distributions don’t align.

6. Treat “drift detected” and “drift harmful” as different problems; p-values can be misleading at scale, so teams rely on thresholds, anomaly detection, and human judgment.

7. Integrate monitoring with training/evaluation (an “evaluation store”) so it can guide labeling, oversampling, A/B testing, and retraining decisions to close the data flywheel.