Lecture 12: Research Directions (Full Stack Deep Learning - Spring 2021)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

arXiv’s machine learning paper volume is high enough that individuals need curation strategies; reading everything is not feasible.

Briefing

Deep learning research is shifting from “interesting ideas” to “rapidly deployable tools,” and the lecture’s through-line is that the fastest progress now comes from learning setups that reduce human labeling and from scaling compute and data—often in ways that let one model transfer across many tasks. The talk starts by quantifying the problem: arXiv posts thousands of machine learning and AI papers per month, with the curve still rising, making it impossible for any individual to read everything. That flood forces a strategy: sample research directions, look for shared themes, and use high-bandwidth learning methods like tutorials and curated paper lists.

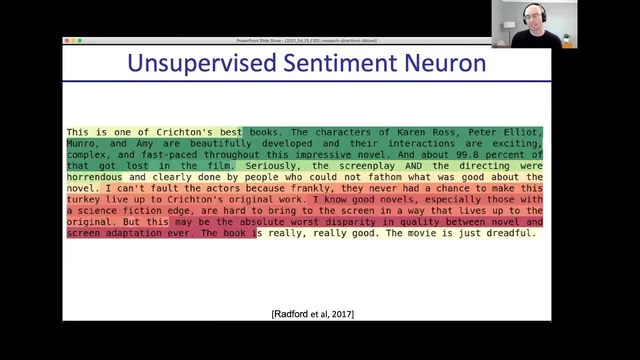

The first major research direction is unsupervised learning—once mostly a research-only lane, now increasingly practical. The lecture contrasts supervised learning’s dependence on labeled data with two ways to loosen that constraint. In semi-supervised learning, labels propagate from a small set of annotated examples into nearby unlabeled points (the intuition is “close points share labels”), and the noisy student approach improves results by training a teacher on labeled data, generating pseudo-labels for unlabeled data, then retraining a student on those pseudo-labels while injecting additional noise via dropout, data augmentation, and stochastic depth. A key limitation is distribution mismatch: pseudo-labeling assumes unlabeled data comes from roughly the same distribution as the labeled set.

To remove that assumption, the talk highlights unsupervised pretraining with a shared trunk and two heads: one head learns an auxiliary task without human annotation (e.g., next-token prediction, denoising, or grayscale-to-color), while the other head is fine-tuned on the task that actually matters. This framing is used to explain why large language models became a default for NLP. GPT-2 is presented as a landmark: trained on massive text to predict the next token, then fine-tuned on supervised benchmarks, it achieved new state-of-the-art results across many tasks with a single general model. Scaling up parameters consistently improved performance, and the lecture links this to a broader industry push for more compute—citing that research funding helped drive large-scale training efforts and that GPT-3 outperformed GPT-2.

The same “pretrain then adapt” logic is extended to vision. Because predicting raw pixels is combinatorially hard, vision researchers use proxy tasks: jigsaw puzzles, rotation prediction, and—most prominently—contrastive learning (SimCLR and MoCo). In contrastive learning, two augmented views of the same image are treated as positives while other images act as negatives; fine-tuning can then use only a linear classifier. The lecture emphasizes that, with enough scale, unsupervised vision features can match fully supervised performance and often improve with larger models.

Next comes reinforcement learning, where an agent acts in an environment and learns from delayed reward—raising two central challenges: credit assignment (which actions caused success) and stability during trial-and-error exploration. The lecture surveys major milestones (Atari with DeepMind’s approach, AlphaGo, AlphaGo Zero/AlphaZero) and robotics successes, including learned locomotion and manipulation. It then tackles a practical bottleneck: learning from pixels is far slower than learning from underlying state. A proposed bridge uses contrastive unsupervised learning inside RL pipelines to recover state-like representations from images, enabling image-based RL to match state-based learning in many settings.

Finally, the lecture broadens beyond standard ML tasks into robotics and science. In robotics, it highlights meta-learning for faster adaptation, imitation learning for stronger supervision signals, and—especially—simulation-to-real transfer via domain randomization and domain adaptation. The lecture argues that training in simulation with many randomized variations can generalize surprisingly well, even when the simulator is not realistic. In science and engineering, it points to DeepMind’s AlphaFold and AlphaFold 2 as headline examples of deep learning predicting protein structure from sequence, plus related ideas like speeding design with learned surrogates and using generative models for physics and differential equations.

The closing advice is pragmatic: keep up without reading thousands of papers by relying on conference tutorials, graduate courses, and curated explainers (YouTube channels, newsletters, and “archive sanity” style tools). When reading papers, prioritize those referenced by tutorials or highly discussed via saves and hype, use skim-first strategies, and consider forming reading groups. The lecture ends with a career perspective: a PhD is no longer required to do impactful AI work; it’s mainly justified for those aiming to become deep technical experts who build new tools rather than apply existing ones.

Cornell Notes

The lecture argues that AI progress increasingly depends on scalable learning strategies that reduce human labeling and on using more data and compute to make general pretrained models transferable. Unsupervised and self-supervised learning are highlighted as the fastest-moving research-to-practice area: semi-supervised label propagation and “noisy student” improve accuracy, while modern unsupervised pretraining (e.g., next-token prediction in GPT-2) enables one model to be fine-tuned across many supervised tasks. Vision adapts the same idea using proxy objectives and contrastive learning (SimCLR, MoCo), where fine-tuning can approach fully supervised results. Reinforcement learning is framed around delayed reward and credit assignment, with a key bottleneck that pixel-based learning is much slower than state-based learning; contrastive representation learning can close much of that gap. The talk concludes with practical ways to keep up—tutorials, courses, curated explainers—and with career guidance on when a PhD is worth it.

Why did unsupervised learning shift from “pure research” to something that quickly becomes practice?

How does the noisy student method work, and what intuition connects it to label propagation?

What is the key assumption behind semi-supervised label propagation, and why does unsupervised pretraining avoid it?

Why does contrastive learning matter for vision, and what does it train the model to do?

What makes reinforcement learning harder than supervised learning?

How does contrastive unsupervised learning speed up reinforcement learning from pixels?

Review Questions

- Which parts of semi-supervised learning rely on distribution alignment, and how does unsupervised pretraining change the assumptions?

- Explain the credit assignment problem in reinforcement learning and give an example of why delayed reward makes it difficult.

- What role does contrastive learning play in bridging the pixel-vs-state learning gap for reinforcement learning?

Key Points

- 1

arXiv’s machine learning paper volume is high enough that individuals need curation strategies; reading everything is not feasible.

- 2

Semi-supervised learning can improve accuracy by propagating labels from a small labeled set into dense regions of unlabeled data, then retraining with noise (noisy student).

- 3

Noisy student depends on pseudo-label confidence and on the unlabeled data matching the labeled distribution; distribution mismatch limits its reliability.

- 4

Unsupervised pretraining with a shared trunk plus a supervised fine-tuning head enables one general model to transfer across many supervised tasks, with scaling often improving results.

- 5

In vision, contrastive learning (SimCLR/MoCo) uses augmented views as positives and other images as negatives, producing reusable features that can approach supervised performance after fine-tuning.

- 6

Reinforcement learning’s core difficulties are delayed reward (credit assignment) and training stability during exploration; pixel-based RL is often much slower than state-based RL.

- 7

Simulation-to-real progress often comes from domain randomization and domain adaptation rather than perfect realism, and science applications like AlphaFold show deep learning’s reach beyond classic ML benchmarks.