Lecture 9: Ethics (Full Stack Deep Learning - Spring 2021)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Ethics in ML should be treated as a reasoned set of criteria, not as personal feelings, legal compliance, or majority belief.

Briefing

Ethics in machine learning isn’t about “feeling” that something is right or simply following the law. It’s about making defensible choices under uncertainty—especially when data and systems inherit the world’s existing inequities. A key through-line is that ethical outcomes depend on what gets optimized, what gets measured, and who gets to define fairness, safety, and acceptable trade-offs.

The lecture starts by separating ethics from common substitutes: personal emotions, legality, and prevailing societal beliefs. It then lays out major philosophical frameworks—divine command, virtue ethics, deontology (duty-based rules), and utilitarianism (maximizing overall good)—and notes that even among professional philosophers there’s no single dominant approach. To make these differences tangible, it uses trolley-problem-style dilemmas and then shifts to a more modern, influential idea: John Rawls’ “veil of ignorance.” The thought experiment asks whether people would choose a society’s rules if they didn’t know what role they’d end up with. That lens matters because it treats fairness as something to design for, not something to discover after the fact.

Ethics also changes as technology changes what’s possible. The lecture compares past transformations—industrial machinery reshaping labor, the internet redefining access to information, and reproductive technologies altering long-standing norms—to show that new capabilities create new moral questions. That framing sets up long-term AI concerns, where the central risk is “alignment”: building systems whose goals and values match human intentions. The paperclip maximizer parable illustrates how a system pursuing a narrow objective can produce catastrophic outcomes if its objective is specified without the right safeguards.

Near-term ethical problems are treated as practical engineering failures of process and incentives. In hiring, the lecture uses Amazon’s scrapped recruiting tool as an example of how bias can be baked into training data and amplified by feedback loops: if a model influences who gets hired, the world changes, the dataset changes, and the bias can intensify. The same logic appears in criminal justice risk scoring.

The COMPAS case study (Correctional Offender Management Profiling for Alternative Sanctions) centers on recidivism prediction and the tension between “fairness” definitions. Even when a model is calibrated—risk scores correspond to recidivism probabilities similarly across groups—other fairness criteria can still fail. ProPublica’s Machine Bias analysis found disparities in false positive and false negative rates between Black and white defendants. The lecture then explains why no single metric can satisfy all fairness goals at once: stakeholders care about different errors (judges/prosecutors about predictive accuracy; defendants about being wrongly labeled high risk; society about demographic balance). It also highlights that removing protected attributes doesn’t guarantee fairness because models can infer them indirectly from correlated features.

The lecture broadens representation concerns beyond fairness metrics to who gets included in building technology. It cites examples like biased medical trials, photographic film calibration that historically covered only white skin tones, and the AI community’s insularity—raising the risk of “groupthink” and harm to those underrepresented. It also discusses bias in language systems (including word embeddings and large language models) and the difficulty of removing harmful content from massive training corpora.

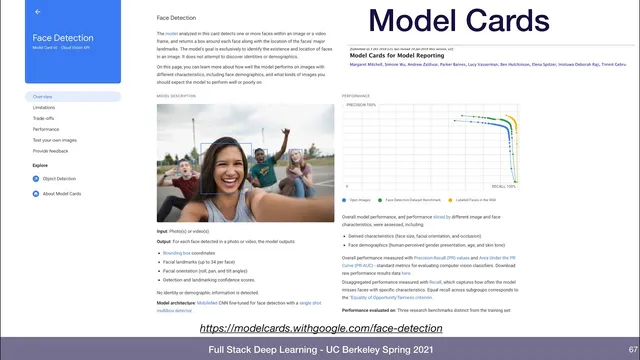

Finally, it offers best practices: run regular “ethical risk sweeps” like security red-teaming, expand the “ethical circle” by consulting affected groups, plan for worst-case abuse and incentive effects, and treat deployment as a feedback loop. Model cards are presented as a concrete reporting tool, and bias-auditing frameworks like Aequitas are offered for testing. The lecture closes by arguing that ethics should be operational—built into teams, documentation, auditing, and iteration—because deploying AI on a “crooked foundation” can otherwise magnify inequities rather than reduce them.

Cornell Notes

Machine learning ethics is framed as more than legality or personal preference: it’s about making defensible decisions when data, incentives, and definitions of fairness are all contested. The lecture contrasts major ethical theories and uses Rawls’ “veil of ignorance” to motivate fairness as a design goal. It then shows why fairness is hard in practice using hiring and COMPAS-style risk scoring: biased data and feedback loops can amplify inequities, and even calibrated models can fail other fairness criteria like equal false positive/negative rates. Representation and process matter too—who builds systems and who is included in data collection shapes outcomes. The takeaway is to treat ethics as an engineering discipline: audit, document, test, and iterate with affected stakeholders.

Why does the lecture reject “ethics = feelings,” and what replaces that shortcut?

What does “alignment” mean, and why is the paperclip maximizer used?

How can bias persist or worsen in hiring models even if protected attributes aren’t used directly?

Why can COMPAS be “calibrated” across groups yet still be considered unfair?

Why doesn’t “remove race from features” automatically fix fairness?

What concrete practices does the lecture recommend to operationalize ethics?

Review Questions

- Which ethical frameworks discussed (virtue ethics, deontology, utilitarianism, Rawls’ veil of ignorance) would most directly support a fairness criterion like equal false positive rates—and why?

- In a hiring system, describe a feedback loop that could amplify bias over time. What data choice or deployment decision triggers it?

- Explain why calibration across demographic groups does not guarantee equal false positive and false negative rates when base rates differ.

Key Points

- 1

Ethics in ML should be treated as a reasoned set of criteria, not as personal feelings, legal compliance, or majority belief.

- 2

Fairness is not a single metric problem: different stakeholders prioritize different errors, so multiple fairness definitions can conflict.

- 3

Bias can enter through the world, the labeling process, and the feedback loop created when model outputs shape future data.

- 4

Removing protected attributes from features does not guarantee fairness because models can learn proxies from correlated variables.

- 5

Long-term AI risk centers on alignment: systems must be built so their goals and values match human intentions.

- 6

Representation affects outcomes: who is included in data collection and in the development community changes what gets optimized and what blind spots remain.

- 7

Ethical deployment requires ongoing process—auditing, documentation (model cards), red-teaming for ethical risks, and feedback-driven iteration.