Linear Algebra 14 | Column Picture of the Matrix-Vector Product

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

A matrix can be understood as a “machine” that outputs a vector built from its own columns: multiplying a matrix A by a vector x produces a result Ax that is always a linear combination of A’s columns, with the coefficients taken from x. That shift—from viewing A as a mere table of numbers to viewing it as a structured collection of column vectors—clarifies what the matrix-vector product is really doing and why it naturally corresponds to a linear map.

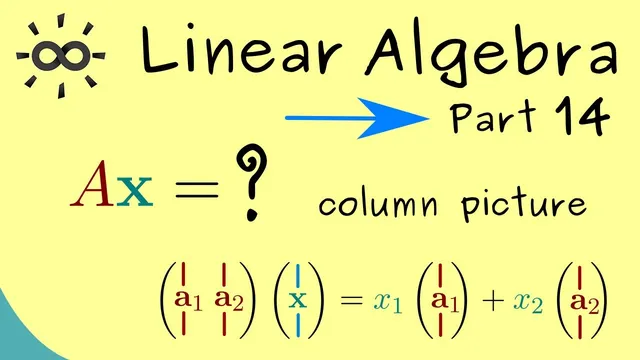

Start with an m×n matrix A. Rather than reading it as a table of numbers with m rows and n columns, the column picture gives each column a name: the first column is a1, the second is a2, and so on up to the nth column an. Each column ai is itself a vector in R^m, that is, a column vector with m components. Now consider a vector x in R^n, written as x = (x1, x2, …, xn). The matrix-vector product Ax can be rewritten using the columns of A:

Ax = x1 a1 + x2 a2 + ··· + xn an.

This identity turns the computation into a clear recipe: multiply each scalar component of x by the corresponding column of A, then add the resulting vectors. Because each ai lives in R^m, the sum also lives in R^m, matching the expected output size of Ax.
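The recipe above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function names `matvec_by_columns` and `matvec_by_rows` and the sample 2×3 matrix are made up for this example. The sketch computes Ax once as a linear combination of the columns and once entry by entry, so the two results can be compared.

```python
def matvec_by_columns(columns, x):
    """Compute Ax as x1*a1 + ... + xn*an, where `columns` lists a1, ..., an."""
    if len(columns) != len(x):
        raise ValueError("x needs exactly one entry per column of A")
    m = len(columns[0])
    result = [0] * m  # the zero vector in R^m
    for coeff, col in zip(x, columns):
        # add coeff * col to the running sum, component by component
        result = [r + coeff * c for r, c in zip(result, col)]
    return result


def matvec_by_rows(rows, x):
    """Compute Ax entry by entry: each output entry is one row of A dotted with x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in rows]


# Hypothetical example: A is 2x3 (m = 2, n = 3), stored as rows and as columns.
rows = [[1, 0, 2],
        [3, 1, -1]]
columns = [list(col) for col in zip(*rows)]  # [[1, 3], [0, 1], [2, -1]]
x = [2, -1, 3]

by_columns = matvec_by_columns(columns, x)
by_rows = matvec_by_rows(rows, x)
print(by_columns, by_rows)  # both are [8, 2]
```

Both computations give the same vector in R^2, even though x has three entries: the length of the output is set by the length of the columns, not by the length of x.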

That observation has a conceptual payoff. The transformation x ↦ Ax takes an input vector from R^n and returns an output vector in R^m. A rule that assigns exactly one output vector to each input vector is a map (a function) between vector spaces, and here the map is determined by the matrix. So the matrix A can be “lifted” into an abstract object: define a map f_A : R^n → R^m by f_A(x) = Ax. The key point is that the matrix and the map carry the same information: A fully determines how every input vector is transformed, and conversely the columns of A can be read off from the map, because applying f_A to the jth standard basis vector (1 in position j, 0 elsewhere) returns exactly aj.
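One way to make the step from matrix to map concrete is to build f_A as an ordinary function. The following is a sketch under assumptions: `make_map` is a hypothetical helper name, and the matrix is given as a list of its columns. It also checks that feeding in the standard basis vectors returns the columns, which is one way to see that the map and the matrix hold the same information.

```python
def make_map(columns):
    """Return f_A: R^n -> R^m with f_A(x) = x1*a1 + ... + xn*an."""
    n = len(columns)     # number of columns = dimension of the input space
    m = len(columns[0])  # length of each column = dimension of the output space

    def f_A(x):
        if len(x) != n:
            raise ValueError(f"expected a vector with {n} entries")
        # i-th output entry: sum over j of x_j times the i-th entry of column a_j
        return [sum(x[j] * columns[j][i] for j in range(n)) for i in range(m)]

    return f_A


# Hypothetical 2x3 matrix, given by its three columns in R^2.
columns = [[1, 3], [0, 1], [2, -1]]
f_A = make_map(columns)

# Standard basis vectors e_1, ..., e_n of R^n.
n = len(columns)
basis = [[1 if i == j else 0 for i in range(n)] for j in range(n)]

# Applying f_A to e_j picks out the j-th column of A.
recovered = [f_A(e) for e in basis]
print(recovered == columns)  # True
```

The closure keeps the matrix hidden inside the function; callers see only a map that accepts vectors with n entries and returns vectors with m entries.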

Finally, this framing sets up the broader linear-algebra storyline: the column picture explains the matrix-vector product in terms of linear combinations of columns, and it anticipates a complementary “row picture” approach next. The column viewpoint also foreshadows why linear maps matter: later, it will be shown that f_A is a linear map, tying the concrete computation Ax to the abstract structure of linear transformations.

Cornell Notes

An m×n matrix A can be viewed as a collection of its n column vectors a1, a2, …, an, each with m components. For any vector x in R^n with components (x1, …, xn), the product Ax can be written as Ax = x1 a1 + x2 a2 + ··· + xn an. This shows that Ax is always a linear combination of A’s columns, with the coefficients coming directly from the entries of x. Because the input is in R^n and the output is in R^m, A defines a map f_A : R^n → R^m via f_A(x) = Ax. Understanding the matrix this way makes the matrix-vector product’s structure—and its connection to linear maps—much clearer.
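A small worked example may help fix the formula. The numbers are chosen purely for illustration and do not come from the video: take a 3×2 matrix (m = 3, n = 2) and a vector x in R^2.

```latex
A = \begin{pmatrix} 2 & 1 \\ 0 & 3 \\ -1 & 4 \end{pmatrix},
\qquad
x = \begin{pmatrix} 3 \\ 2 \end{pmatrix},
\qquad
Ax = 3 \begin{pmatrix} 2 \\ 0 \\ -1 \end{pmatrix}
   + 2 \begin{pmatrix} 1 \\ 3 \\ 4 \end{pmatrix}
   = \begin{pmatrix} 6 \\ 0 \\ -3 \end{pmatrix}
   + \begin{pmatrix} 2 \\ 6 \\ 8 \end{pmatrix}
   = \begin{pmatrix} 8 \\ 6 \\ 5 \end{pmatrix}.
```

The input has two entries and the output has three, as expected for a map from R^2 to R^3.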

How does the column picture rewrite the matrix-vector product Ax?

Why does Ax always land in R^m in the column picture?

What does the identity Ax = x1 a1 + ··· + xn an tell you about what a matrix does?

How does the matrix A become an abstract map f_A?

What role do the components of x play in the column picture?

Review Questions

- Given an m×n matrix A with columns a1,…,an and a vector x=(x1,…,xn), write Ax using the column picture.

- Why must the output of Ax lie in R^m when A has m rows?

- How does defining f_A(x)=Ax turn a matrix into a map between vector spaces?

Key Points

1. Treat an m×n matrix A as a list of its n column vectors a1,…,an, each in R^m.

2. For x ∈ R^n, the product Ax equals the linear combination x1 a1 + x2 a2 + ··· + xn an.

3. The entries of x serve as the coefficients for combining the columns of A.

4. Because all columns lie in R^m, Ax always lies in R^m.

5. A matrix defines a transformation from R^n to R^m via x ↦ Ax.

6. The transformation x ↦ Ax can be formalized as a map f_A : R^n → R^m with f_A(x)=Ax.

7. The column picture sets up a parallel “row picture” perspective for the next step.