Linear Algebra 22 | Linear Independence (Definition)

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Linear dependence has a short definition: a family of vectors is linearly dependent if some non-trivial linear combination of them equals the zero vector. That matters because it captures when vectors fail to add genuinely new directions. At least one vector can be reconstructed from the others, so including it does not enlarge the set of directions the family can reach.

In the two-dimensional plane R², the idea becomes concrete. Two vectors u and v are collinear when they lie on the same line through the origin, meaning one of them is a scalar multiple of the other: for instance, there exists a real number λ such that u = λv. Such collinear vectors are called linearly dependent because together they define no more than one line’s worth of direction.
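The collinearity condition can be checked mechanically. The following is a minimal sketch, not something taken from the video: the helper name `are_collinear` is hypothetical, vectors are assumed to be pairs of integers, and the check uses the 2×2 determinant u₁v₂ − u₂v₁, which is zero exactly when one vector is a scalar multiple of the other.

```python
def are_collinear(u, v):
    """Return True if the 2D vectors u and v are linearly dependent.

    Hypothetical helper: uses the 2x2 determinant u1*v2 - u2*v1,
    which vanishes exactly when one vector is a multiple of the other.
    """
    return u[0] * v[1] - u[1] * v[0] == 0


print(are_collinear((2, 4), (1, 2)))  # True: (2, 4) = 2 * (1, 2)
print(are_collinear((1, 0), (0, 1)))  # False: the vectors point along different lines
print(are_collinear((0, 0), (3, 5)))  # True: the zero vector is 0 * (3, 5)
```

The comparison with `== 0` is exact for integers; with floating-point entries a small tolerance would be needed instead.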

In three dimensions R³, the same logic scales up. Three vectors u, v, and w are coplanar when they lie in the same plane through the origin. Algebraically, that means one of them can be written as a linear combination of the other two, for instance u = λv + μw for some real scalars λ and μ. If that happens, the three vectors don’t generate a full three-dimensional set of independent directions; together they reach at most a plane.
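As a rough illustration of the coplanarity condition, the sketch below builds u = 2v + 3w and confirms that the three vectors are dependent. The helper names `det3` and `are_coplanar` are hypothetical, integer entries are assumed, and the test uses the 3×3 determinant (the scalar triple product), a standard criterion that is not part of the video: it is zero exactly when the three vectors lie in a common plane through the origin.

```python
def det3(u, v, w):
    """Determinant of the 3x3 matrix whose rows are u, v, w."""
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def are_coplanar(u, v, w):
    """Return True if u, v, w are linearly dependent (zero determinant)."""
    return det3(u, v, w) == 0


v = (1, 0, 1)
w = (0, 1, 1)
# Build u as the linear combination 2*v + 3*w, so u = (2, 3, 5).
u = tuple(2 * a + 3 * b for a, b in zip(v, w))

print(are_coplanar(u, v, w))                          # True
print(are_coplanar((1, 0, 0), (0, 1, 0), (0, 0, 1)))  # False
```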

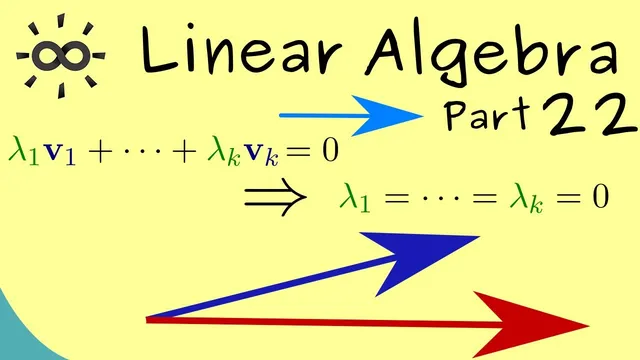

The transcript then generalizes from geometry to the formal definition in Rⁿ. Take k vectors v₁, v₂, …, v_k in Rⁿ and consider them as a family (often written as an ordered tuple, though sets can also be used). The family is linearly dependent if there exist real coefficients λ₁, λ₂, …, λ_k, not all zero, such that

λ₁v₁ + λ₂v₂ + ··· + λ_k v_k = 0.

The “not all zero” condition is crucial: if every coefficient were zero, the equation would be true for any vectors and would be meaningless. With at least one non-zero coefficient, the equality to the zero vector signals a genuine relationship among the vectors—some vectors can be expressed using the others.
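To make the role of the coefficients concrete, here is a small sketch under assumed data: the three vectors and the helper `linear_combination` are hypothetical and chosen so that the third vector is the sum of the first two. The trivial coefficients always give the zero vector, while the non-trivial choice (1, 1, −1) reveals the actual relationship.

```python
def linear_combination(coeffs, vectors):
    """Compute coeffs[0]*vectors[0] + ... + coeffs[k-1]*vectors[k-1]."""
    n = len(vectors[0])
    return tuple(sum(c * v[i] for c, v in zip(coeffs, vectors))
                 for i in range(n))


# Illustrative family: the third vector equals the first plus the second.
vectors = [(1, 2, 3), (0, 1, 1), (1, 3, 4)]

# Trivial combination: zero for ANY family, so it carries no information.
print(linear_combination((0, 0, 0), vectors))   # (0, 0, 0)

# Non-trivial combination: zero here, so this family is linearly dependent.
print(linear_combination((1, 1, -1), vectors))  # (0, 0, 0)

# Another non-trivial choice that does not give zero.
print(linear_combination((1, 0, 0), vectors))   # (1, 2, 3)
```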

Linear independence is defined as the exact opposite condition. A family of vectors is linearly independent when no non-trivial linear combination can produce the zero vector. Equivalently, whenever

λ₁v₁ + λ₂v₂ + ··· + λ_k v_k = 0,

the only solution is λ₁ = λ₂ = ··· = λ_k = 0, that is, the trivial solution that works for every family of vectors. Dependent families admit a non-trivial relation among their vectors; independent families do not. This distinction sets up a core tool used throughout later linear algebra topics, such as understanding bases and dimension.
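One way to test this condition for k vectors in Rⁿ is to compute the rank of the matrix whose rows are the vectors: the family is linearly independent exactly when the rank equals k. The sketch below is an assumption-laden illustration rather than the video's method. The names `rank` and `is_linearly_independent` are hypothetical, and exact `Fraction` arithmetic is used so that row reduction involves no rounding.

```python
from fractions import Fraction


def rank(vectors):
    """Rank of the matrix whose rows are the given vectors (exact arithmetic)."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    r = 0  # number of pivot rows found so far
    for col in range(n_cols):
        # Look for a row at or below position r with a non-zero entry in this column.
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Eliminate this column from all rows below the pivot row.
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def is_linearly_independent(vectors):
    """True when only the trivial combination of the vectors gives zero."""
    return rank(vectors) == len(vectors)


print(is_linearly_independent([(1, 0, 0), (0, 1, 0), (0, 0, 1)]))  # True
print(is_linearly_independent([(1, 2, 3), (0, 1, 1), (1, 3, 4)]))  # False
```

In the second family the third vector is the sum of the first two, so row reduction produces a zero row and the rank drops to 2.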

Cornell Notes

A family of vectors in Rⁿ is linearly dependent if some non-trivial linear combination of the vectors equals the zero vector. “Non-trivial” means at least one coefficient is non-zero; otherwise the zero combination would always work and would not reveal anything. In R², this matches collinearity: if u = λv, the two vectors depend on each other. In R³, it matches coplanarity: if u = λv + μw, the three vectors lie in a plane and don’t provide fully independent directions. Linear independence is the opposite: the only way to get the zero vector from a linear combination is to set all coefficients to zero.

Why does the definition of linear dependence require coefficients that are not all zero?

How does linear dependence look in R²?

How does linear dependence look in R³?

What is the general algebraic test for a family of vectors to be linearly dependent in Rⁿ?

What is the defining condition for linear independence?

Review Questions

- In your own words, how does “non-trivial linear combination equals zero” distinguish linear dependence from the trivial zero combination?

- Give the R² geometric condition for linear dependence and translate it into an equation.

- For vectors v₁, …, v_k in Rⁿ, what must be true about coefficients λ₁, …, λ_k if the family is linearly independent?

Key Points

1. Two vectors in R² are linearly dependent exactly when one is a scalar multiple of the other (collinearity).

2. Three vectors in R³ are linearly dependent exactly when one can be written as a linear combination of the other two (coplanarity).

3. A family of k vectors in Rⁿ is linearly dependent if some non-trivial linear combination equals the zero vector.

4. “Non-trivial” means at least one coefficient is non-zero; otherwise the condition is automatically satisfied.

5. A family is linearly independent when the only linear combination that yields the zero vector uses all zero coefficients.

6. Linear dependence signals redundancy: some vectors can be described using others, so the set doesn’t add new independent directions.