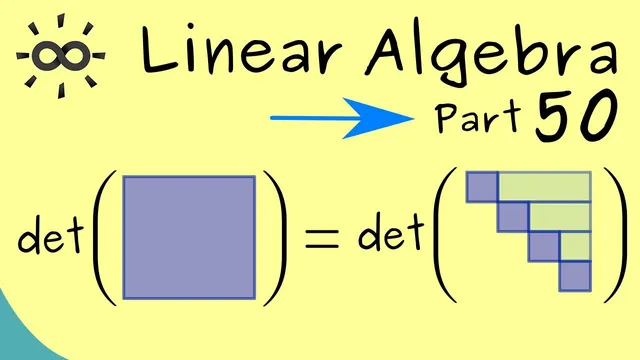

Linear Algebra 50 | Gaussian Elimination for Determinants

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Gaussian elimination provides a faster, more systematic route to determinants than Laplace (cofactor) expansion, especially for large matrices. It turns a determinant problem into a sequence of row and column operations, each of which either preserves the determinant or changes it by a known factor.

The core starting point is the determinant multiplication rule: for two square matrices, det(AB) = det(A)·det(B). That matters because Gaussian elimination is implemented through elementary row operations, which can be represented as left-multiplication by special matrices. Once those “operation matrices” are identified, their determinants tell exactly how the determinant of the original matrix changes.
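As a small illustration (a 2×2 case chosen here for brevity, not taken from the video), adding λ times the first row to the second row can be written as left-multiplication by an operation matrix:

$$
\begin{pmatrix} 1 & 0 \\ \lambda & 1 \end{pmatrix}
\begin{pmatrix} a & b \\ c & d \end{pmatrix}
=
\begin{pmatrix} a & b \\ c + \lambda a & d + \lambda b \end{pmatrix}
$$

The operation matrix has determinant 1·1 − 0·λ = 1, so the multiplication rule predicts an unchanged determinant. A direct check agrees:

$$
a(d + \lambda b) - b(c + \lambda a) = ad - bc
$$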

Three elementary row operations drive the rules. First, adding a multiple of one row to another row leaves the determinant unchanged. This is justified by rewriting the operation as multiplication by a triangular matrix with ones on the diagonal, whose determinant equals 1. Second, swapping two rows flips the sign of the determinant, because the corresponding operation matrix has determinant −1. Third, scaling a row by a factor λ multiplies the determinant by λ. Scaling k rows by the same factor λ multiplies the determinant by λ^k, an easy detail to miss when several rows are scaled at once.
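The three rules can be checked numerically. The sketch below is only an illustration: the 3×3 matrix `A`, the helper `det3`, and the factors 3 and 7 are arbitrary choices made here, not values from the video.

```python
def det3(m):
    """Determinant of a 3x3 matrix via cofactor expansion along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

# Arbitrary example matrix (an assumption for illustration).
A = [[2, 1, 3],
     [0, 4, 1],
     [5, 2, 6]]

# Rule 1: add 3 times row 0 to row 2; the determinant should not change.
added = [A[0], A[1], [x + 3 * y for x, y in zip(A[2], A[0])]]

# Rule 2: swap rows 0 and 1; the determinant should change sign.
swapped = [A[1], A[0], A[2]]

# Rule 3: scale one row by 7 (factor 7), then two rows by 7 (factor 7**2).
scaled_one = [[7 * x for x in A[0]], A[1], A[2]]
scaled_two = [[7 * x for x in A[0]], [7 * x for x in A[1]], A[2]]

print(det3(A), det3(added), det3(swapped), det3(scaled_one), det3(scaled_two))
```

Comparing the printed values against `det3(A)` shows each rule in turn: equal, negated, multiplied by 7, and multiplied by 49.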

Although Gaussian elimination is typically described using row operations (because row operations preserve the kernel, which is crucial for solving linear systems), column operations are also allowed for determinant calculations. The reason is that column operations on a matrix A correspond to row operations on Aᵀ, and det(Aᵀ) = det(A). So the same determinant-change rules apply when manipulating columns.
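One way to spell out this argument, writing E for an operation matrix (a symbol introduced here for illustration): a column operation on A is right-multiplication A·E, and transposing turns it into a row operation on Aᵀ.

$$
\det(AE) = \det\big((AE)^{\mathsf T}\big) = \det\big(E^{\mathsf T} A^{\mathsf T}\big) = \det(E^{\mathsf T})\,\det(A^{\mathsf T}) = \det(E)\,\det(A)
$$

So the determinant changes by the factor det(E), exactly as it would for the matching row operation.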

An extended worked example demonstrates the strategy for a 5×5 determinant. The approach is to use Gaussian elimination to create a column (or row) with many zeros—ideally a single nonzero entry—so that Laplace expansion becomes cheap. In the example, elimination is used to turn the third column into a form suitable for cofactor expansion. The process requires careful attention to the checkerboard sign pattern in Laplace expansion, since each cofactor carries a ± sign depending on position.
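The video's 5×5 matrix is not reproduced in these notes, so the sketch below uses a made-up 4×4 matrix `B` whose third column has a single nonzero entry. The helper names `minor` and `det_laplace` are also assumptions for illustration. It shows how the checkerboard sign enters when expanding along a chosen column.

```python
def minor(m, i, j):
    """Return matrix m with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(m) if k != i]

def det_laplace(m, col=0):
    """Determinant by Laplace expansion along column `col` (0-based).

    Sub-determinants are expanded recursively along their first column.
    """
    n = len(m)
    if n == 1:
        return m[0][0]
    total = 0
    for i in range(n):
        if m[i][col] == 0:
            continue  # zero entries contribute nothing to the expansion
        # Checkerboard sign: +1 when i + col is even, -1 when it is odd.
        sign = -1 if (i + col) % 2 else 1
        total += sign * m[i][col] * det_laplace(minor(m, i, col))
    return total

# Hypothetical example: column 2 has one nonzero entry, at row 1.
B = [[1, 2, 0, 3],
     [2, 3, 5, 1],
     [4, 1, 0, 2],
     [0, 1, 0, 2]]

# Expanding along column 2 needs only one 3x3 sub-determinant.
print(det_laplace(B, col=2))  # → 20
```

Here the entry 5 sits at row 1, column 2 (0-based), so its cofactor carries the sign −1. Dropping that sign would give −20 instead of 20.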

After the first elimination step reduces the problem to a 4×4 determinant, additional column operations further simplify the structure. Because these steps only combine columns and never multiply a row or column by a scalar, the determinant remains unchanged, while the matrix becomes sparse enough to expand again. The calculation continues until only a 3×3 determinant remains, which is then evaluated to finish the chain. The final result for the original 5×5 determinant is 26.

The takeaway is practical: for computation, Gaussian elimination is the workhorse. In general, reducing a matrix to triangular form makes the determinant equal to the product of the diagonal entries, which is far more efficient than Laplace expansion or the Leibniz formula for large n. The transcript closes by noting that the next step is to connect determinants to linear maps in a more abstract way.
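A minimal sketch of the triangular-form approach follows. It assumes exact rational arithmetic via Python's `fractions.Fraction`, and the function name `det_gauss` is hypothetical. It uses only row additions, which leave the determinant unchanged, and row swaps, which flip its sign, so the result is the signed product of the diagonal entries.

```python
from fractions import Fraction

def det_gauss(matrix):
    """Determinant via Gaussian elimination to upper triangular form."""
    # Work on an exact copy so the caller's matrix is not modified.
    a = [[Fraction(x) for x in row] for row in matrix]
    n = len(a)
    sign = 1
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if a[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)  # no pivot available: the determinant is zero
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            sign = -sign  # each row swap flips the sign
        for r in range(col + 1, n):
            # Subtract a multiple of the pivot row; the determinant is unchanged.
            factor = a[r][col] / a[col][col]
            a[r] = [x - factor * y for x, y in zip(a[r], a[col])]
    result = Fraction(sign)
    for i in range(n):
        result *= a[i][i]  # product of the diagonal of the triangular matrix
    return result

print(det_gauss([[2, 1, 3], [0, 4, 1], [5, 2, 6]]))  # → -11
```

This sketch never scales a row, so no extra factor has to be tracked. An implementation that normalizes pivots to 1 would also need to multiply the result by each pivot it divides out.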

Cornell Notes

Determinants can be computed efficiently using Gaussian elimination by tracking how elementary row/column operations affect det(A). Row operations correspond to left-multiplication by special matrices, letting det(AB)=det(A)det(B) determine the determinant change. Adding a multiple of one row to another keeps the determinant the same; swapping two rows multiplies the determinant by −1; scaling a row by λ multiplies the determinant by λ (and scaling k rows by λ multiplies by λ^k). Column operations are also valid for determinants because they mirror row operations on the transpose and det(Aᵀ)=det(A). A 5×5 example shows elimination to create zeros for Laplace expansion, leading to the final determinant value 26.

Why does Gaussian elimination translate into rules for how determinants change?

What are the exact determinant effects of the three elementary row operations?

When is it safe to use column operations while computing determinants?

How does the example combine Gaussian elimination with Laplace expansion?

What is the final determinant value in the worked 5×5 calculation?

Review Questions

- In a determinant computation, what changes if you swap two rows versus add a multiple of one row to another?

- Why does scaling two different rows by the same factor λ multiply the determinant by λ^2 rather than by λ?

- How does det(Aᵀ)=det(A) justify using column operations during determinant calculations?

Key Points

1. det(AB)=det(A)·det(B) lets row/column operations be analyzed via multiplication by operation matrices.

2. Adding a multiple of one row to another does not change the determinant.

3. Swapping two rows multiplies the determinant by −1.

4. Scaling a row by λ multiplies the determinant by λ; scaling k rows by λ multiplies it by λ^k.

5. Column operations are valid for determinants because det(Aᵀ)=det(A), so column operations mirror row operations on the transpose.

6. Gaussian elimination is most efficient for large matrices because it can reduce the matrix to triangular form, where the determinant is the product of diagonal entries.

7. When using Laplace expansion after elimination, the cofactor sign must follow the checkerboard pattern.