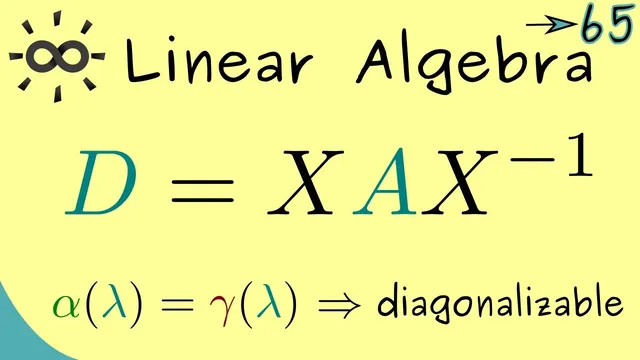

Linear Algebra 65 | Diagonalizable Matrices

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

A square matrix is diagonalizable iff it has eigenvectors that form a basis of $\mathbb{C}^n$.

Briefing

Diagonalizable matrices are exactly the square matrices whose eigenvectors are rich enough to form a full coordinate system: if the eigenvectors span all of $\mathbb{C}^n$, then the matrix can be converted into a diagonal matrix by a change of basis. That matters because diagonal matrices make powers, functions, and long-term behavior of linear transformations far easier to compute: everything reduces to scaling along independent eigen-directions.

The key mechanism comes from rewriting vectors in an eigenvector basis. If $X$ is the matrix whose columns are eigenvectors $x_1, \dots, x_n$ of $A$, then $A x_j = \lambda_j x_j$ for every column gives $A X = X D$, so in that basis the action of $A$ becomes a diagonal scaling matrix $D$. Concretely, when $X$ is invertible the transformation takes the form $A = X D X^{-1}$, where the diagonal entries of $D$ are the eigenvalues (counted with multiplicity). The central question becomes whether one can choose $n$ eigenvectors that span $\mathbb{C}^n$. Equivalently, the eigenvector matrix $X$ must be invertible, meaning the eigenvectors are linearly independent and form a basis.
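The factorization can be checked by hand on a small case. The sketch below uses a hypothetical $2 \times 2$ matrix (not taken from the video) and names that are assumptions of this example: `X` holds the eigenvectors as columns, `D` holds the eigenvalues on the diagonal, and `inverse_2x2` and `power_via_diagonalization` are illustrative helpers. It uses exact fractions so that equality tests are meaningful.

```python
from fractions import Fraction

def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def inverse_2x2(M):
    """Invert a 2x2 matrix exactly; raises if the determinant is zero."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("columns are linearly dependent, X is not invertible")
    return [[Fraction(d) / det, Fraction(-b) / det],
            [Fraction(-c) / det, Fraction(a) / det]]

# Hypothetical example matrix, chosen only for illustration.
A = [[2, 1],
     [1, 2]]

# Eigenpairs found by hand: A(1, 1) = 3(1, 1) and A(1, -1) = 1(1, -1).
X = [[1, 1],
     [1, -1]]   # eigenvectors as columns
D = [[3, 0],
     [0, 1]]    # matching eigenvalues on the diagonal

X_inv = inverse_2x2(X)

# Rebuild A from its eigen-data: A = X D X^{-1}.
reconstructed = matmul(matmul(X, D), X_inv)

def power_via_diagonalization(k):
    """Compute A^k as X D^k X^{-1}; D^k only needs powers of the diagonal entries."""
    D_k = [[D[0][0] ** k, 0],
           [0, D[1][1] ** k]]
    return matmul(matmul(X, D_k), X_inv)

A_cubed = power_via_diagonalization(3)
```

Here `reconstructed` equals `A`, and `A_cubed` agrees with multiplying `A` by itself three times. The point of the design is that raising `D` to a power costs only two scalar powers, regardless of `k`.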

Examples clarify when this works. A diagonal matrix is automatically diagonalizable because the standard basis vectors are eigenvectors. A non-diagonal triangular matrix can also be diagonalizable: for an upper-triangular matrix with $n$ linearly independent eigenvectors, there are enough eigenvectors to span $\mathbb{C}^n$, so suitable $X$ and $D$ exist and the matrix can be diagonalized.
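As a rough illustration of the triangular case, the following sketch checks two claimed eigenpairs of an upper-triangular matrix and then confirms that the eigenvectors are linearly independent. The matrix and the eigenpairs are assumptions made for this example, not the ones from the video, and `apply` and `is_eigenpair` are hypothetical helper names.

```python
# Hypothetical upper-triangular matrix, chosen only for illustration.
A = [[1, 2],
     [0, 3]]

# Claimed eigenpairs as (eigenvalue, eigenvector).
eigenpairs = [(1, [1, 0]),
              (3, [1, 1])]

def apply(M, v):
    """Matrix-vector product for a matrix given as a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def is_eigenpair(M, lam, v):
    """True if v is nonzero and M v equals lam * v."""
    return any(v) and apply(M, v) == [lam * x for x in v]

all_valid = all(is_eigenpair(A, lam, v) for lam, v in eigenpairs)

# With the eigenvectors as the columns of X, a nonzero determinant means
# they are linearly independent and therefore form a basis.
v1, v2 = eigenpairs[0][1], eigenpairs[1][1]
det_X = v1[0] * v2[1] - v2[0] * v1[1]
```

Both pairs pass the check and `det_X` is nonzero, so this particular matrix has a full eigenvector basis even though it is not diagonal.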

But diagonalization can fail even in simple cases. For a triangular matrix (with $n \geq 2$) that has only one eigenvalue and an eigenspace that is only one-dimensional, there are not enough eigenvector directions to span $\mathbb{C}^n$. In that situation, the eigenvectors cannot form a basis, so no diagonalization of the form $A = X D X^{-1}$ is possible.
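A standard instance of this failure, chosen here for illustration and not necessarily the matrix used in the video, can be worked out directly:

$$
A = \begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix},
\qquad
\det(A - \lambda I) = (1 - \lambda)^2,
\qquad
(A - I)\begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} y \\ 0 \end{pmatrix} = \begin{pmatrix} 0 \\ 0 \end{pmatrix}
\iff y = 0 .
$$

The only eigenvalue is $\lambda = 1$, and every eigenvector is a multiple of $(1, 0)^T$. One direction cannot span a two-dimensional space, so this matrix has no eigenvector basis.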

To decide diagonalizability without guessing eigenvectors, the transcript emphasizes multiplicities. Algebraic multiplicity counts how many times an eigenvalue appears as a root of the characteristic polynomial, while geometric multiplicity is the dimension of the corresponding eigenspace. A matrix is diagonalizable precisely when, for every eigenvalue, geometric multiplicity equals algebraic multiplicity (equivalently, the geometric multiplicities add up to $n$).
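The multiplicity test can be sketched in a few lines. This is a minimal version under stated assumptions: the eigenvalues and their algebraic multiplicities are supplied by the caller rather than computed, all entries are integers or rationals so that exact arithmetic applies, and the function names `rank`, `geometric_multiplicity`, and `is_diagonalizable` are hypothetical. It relies on the fact that the geometric multiplicity of $\lambda$ is $n - \operatorname{rank}(A - \lambda I)$.

```python
from fractions import Fraction

def rank(M):
    """Rank of a matrix (list of rows) by exact Gaussian elimination."""
    rows = [[Fraction(x) for x in row] for row in M]
    r = 0  # number of pivot rows found so far
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

def geometric_multiplicity(A, lam):
    """Dimension of the eigenspace: n - rank(A - lam * I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)]
               for i in range(n)]
    return n - rank(shifted)

def is_diagonalizable(A, algebraic):
    """`algebraic` maps each eigenvalue to its algebraic multiplicity (assumed known)."""
    return all(geometric_multiplicity(A, lam) == mult
               for lam, mult in algebraic.items())

# Illustrative inputs, not taken from the video.
jordan_block = [[1, 1],
                [0, 1]]      # eigenvalue 1 with algebraic multiplicity 2
triangular = [[1, 2],
              [0, 3]]        # eigenvalues 1 and 3, each with multiplicity 1

jordan_ok = is_diagonalizable(jordan_block, {1: 2})
triangular_ok = is_diagonalizable(triangular, {1: 1, 3: 1})
```

For these inputs `jordan_ok` is `False` (the eigenspace has dimension 1 but the algebraic multiplicity is 2) and `triangular_ok` is `True`.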

Two practical criteria follow. First, every normal matrix, which includes every self-adjoint (Hermitian) matrix, is diagonalizable, and its eigenvectors can be chosen as an orthonormal basis. Second, a quick sufficient test: if an $n \times n$ matrix has $n$ distinct eigenvalues (so each eigenvalue has algebraic multiplicity 1), then it is automatically diagonalizable. The overall takeaway is a clear checklist: diagonalization is about having enough independent eigenvectors, and several structural properties guarantee that requirement.
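Both criteria can be tested mechanically on small matrices. The sketch below is only an illustration under stated assumptions: `is_normal` checks the defining identity $A A^* = A^* A$ with exact comparison, which is reliable for small integer or Gaussian-integer entries but would need a tolerance for floating-point data, and `has_distinct_eigenvalues_2x2` covers only the $2 \times 2$ case through the discriminant of the characteristic polynomial. All function names and matrices are hypothetical.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def conjugate_transpose(M):
    """Adjoint M*: transpose and complex-conjugate every entry."""
    return [[complex(M[j][i]).conjugate() for j in range(len(M))]
            for i in range(len(M[0]))]

def is_normal(M):
    """True if M commutes with its adjoint (exact comparison)."""
    M_star = conjugate_transpose(M)
    return matmul(M, M_star) == matmul(M_star, M)

def has_distinct_eigenvalues_2x2(M):
    """The characteristic polynomial t^2 - tr(M) t + det(M) has two different
    roots exactly when its discriminant tr(M)^2 - 4 det(M) is nonzero."""
    (a, b), (c, d) = M
    trace, det = a + d, a * d - b * c
    return trace * trace - 4 * det != 0

# Illustrative matrices, not taken from the video.
hermitian = [[2, 1j],
             [-1j, 3]]       # equal to its own conjugate transpose
jordan_block = [[1, 1],
                [0, 1]]      # not normal, repeated eigenvalue
identity = [[1, 0],
            [0, 1]]          # repeated eigenvalue, yet already diagonal

checks = {
    "hermitian is normal": is_normal(hermitian),
    "jordan block is normal": is_normal(jordan_block),
    "jordan block has distinct eigenvalues": has_distinct_eigenvalues_2x2(jordan_block),
    "identity has distinct eigenvalues": has_distinct_eigenvalues_2x2(identity),
}
```

The identity matrix fails the distinct-eigenvalue test even though it is diagonal, which shows that this criterion is sufficient but not necessary.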

Cornell Notes

A square matrix $A$ is diagonalizable exactly when it has eigenvectors that form a basis of $\mathbb{C}^n$. If the eigenvectors are assembled as columns of $X$, then $A$ can be written as $A = X D X^{-1}$, where $D$ is diagonal and its diagonal entries are the eigenvalues (with multiplicity). Diagonalization fails when the eigenspaces are too small: for instance, a matrix with only one eigenvalue and a one-dimensional eigenspace cannot produce enough independent eigenvectors to span $\mathbb{C}^n$. The transcript links this to multiplicities: diagonalizability holds iff, for every eigenvalue, geometric multiplicity equals algebraic multiplicity. Normal matrices (including self-adjoint ones) always satisfy this, and having $n$ distinct eigenvalues is a simple sufficient condition.

Why does diagonalization reduce to finding enough eigenvectors?

What goes right in the triangular example where diagonalization succeeds?

Why does the other triangular example fail to be diagonalizable?

How do algebraic and geometric multiplicities determine diagonalizability?

What special guarantee does normality provide?

What is the quick criterion involving distinct eigenvalues?

Review Questions

- State the definition of a diagonalizable matrix in terms of eigenvectors and spanning $\mathbb{C}^n$.

- Explain the relationship between geometric multiplicity and algebraic multiplicity, and how their equality determines diagonalizability.

- Give two sufficient conditions for diagonalizability mentioned in the transcript and describe why they work.

Key Points

1. A matrix is diagonalizable iff it has eigenvectors that form a basis of $\mathbb{C}^n$.

2. If $X$ is formed from eigenvectors as columns, diagonalization takes the form $A = X D X^{-1}$, with $D$ diagonal and its entries equal to the eigenvalues (with multiplicity).

3. Diagonalization fails when eigenspaces are too small, e.g., the eigenvectors of a matrix with only one eigenvalue and a one-dimensional eigenspace cannot span $\mathbb{C}^n$.

4. A matrix is diagonalizable exactly when, for every eigenvalue, geometric multiplicity equals algebraic multiplicity.

5. Normal matrices (including self-adjoint/Hermitian ones) are always diagonalizable, with an orthonormal eigenbasis.

6. Having $n$ distinct eigenvalues is a simple sufficient test for diagonalizability.