Literature Search Process || Hindi

Based on eSupport for Research's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

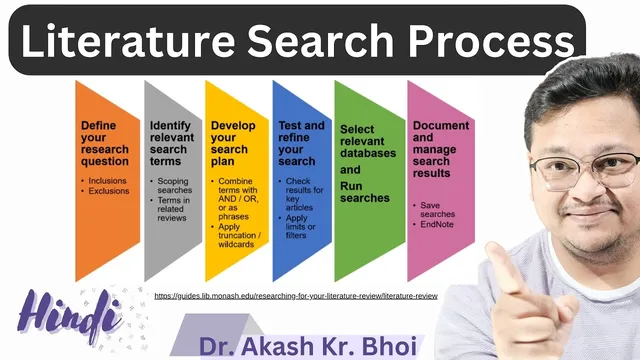

A systematic literature search starts with a clear research question and proceeds through repeatable search-and-refine cycles across multiple databases, so that researchers can find what is already known, locate gaps, and build a defensible foundation for a literature review or systematic review. The process matters because it helps justify a study, identify relevant theory and methods, and surface useful documentation and resources before writing a review paper.

The workflow begins by defining the topic and converting it into a research question. From there, researchers brainstorm keywords and synonyms tied to the subject area, because search engines and academic databases rely heavily on exact or closely related terms. The transcript emphasizes narrowing broad areas into workable search concepts. For example, “brain-computer interface” is treated as a broad domain that can be narrowed into specific dimensions such as EEG-based brain interfaces or motor imagery–based brain-computer interfaces. This narrowing step is presented as the difference between getting vague results and producing targeted, relevant literature.
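The keyword step can be sketched in code. The snippet below is a minimal illustration, not something shown in the video: it assumes the common convention that synonyms for one concept are joined with OR and separate concepts are joined with AND. The function name `build_query` and the synonym "brain-machine interface" are assumptions added for the example.

```python
def build_query(concept_groups):
    """Join synonyms with OR inside each group, then join the groups with AND."""
    clauses = []
    for synonyms in concept_groups:
        # Quote multi-word terms so they are searched as exact phrases.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


# Broad domain first, narrowing dimension second (illustrative keyword lists).
concepts = [
    ["brain-computer interface", "brain-machine interface", "BCI"],
    ["motor imagery", "EEG"],
]
query = build_query(concepts)
print(query)
```

Adding a further concept group narrows the result set, while adding synonyms to an existing group widens it.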

Next comes constructing a search strategy tailored to the database being used. Rather than using one generic query everywhere, the approach is to adapt keyword combinations and search logic to match each platform. The transcript lists common options for running searches, including Google Scholar, Semantic Scholar, Scopus, Web of Science, and Lens.org—each offering different coverage and indexing. Researchers are encouraged to run searches, collect results, and then repeat with refined queries when needed.
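As a rough sketch of what tailoring a query to each database can look like, the helper below wraps one core Boolean query in platform-specific field tags. The tags used here (`TITLE-ABS-KEY(...)` for Scopus and `TS=(...)` for Web of Science) reflect those platforms' advanced-search syntax as commonly documented, but they should be checked against each platform's current help pages. The function name `adapt_query` and the template table are assumptions made for illustration. Semantic Scholar and Lens.org are left out rather than guessing their syntax.

```python
# Hypothetical template table: one core query, wrapped per platform.
TEMPLATES = {
    "scopus": "TITLE-ABS-KEY({q})",   # title, abstract, and keywords
    "web_of_science": "TS=({q})",     # topic search
    "google_scholar": "{q}",          # plain query, no field tag
}


def adapt_query(core_query, database):
    """Wrap a core Boolean query in the field syntax assumed for a database."""
    key = database.lower()
    if key not in TEMPLATES:
        raise ValueError(f"no template defined for {database!r}")
    return TEMPLATES[key].format(q=core_query)


core = '"brain-computer interface" AND EEG'
for name in TEMPLATES:
    print(name, "->", adapt_query(core, name))
```

Keeping the core query in one place and the platform differences in a small table makes it easier to record exactly what was run on each database.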

The middle of the process focuses on why literature searches are performed: to discover existing knowledge on a topic, locate research gaps, justify the need for a study, and identify important theories, ideas, and methodologies. It also highlights practical screening steps such as inclusion and exclusion criteria, applying limits and filters, and using tools like wildcards (for matching word variations) to improve retrieval.
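The screening ideas above can be illustrated with a small local filter. This is a sketch under stated assumptions: the wildcard `*` is treated as truncation (any run of word characters), the criteria are a minimum publication year plus include and exclude patterns, and the three records are invented placeholders rather than real papers. The names `wildcard_to_regex` and `screen` are hypothetical.

```python
import re


def wildcard_to_regex(pattern):
    """Turn a truncation pattern such as 'imag*' into a whole-word regex."""
    escaped = re.escape(pattern).replace(r"\*", r"\w*")
    return re.compile(rf"\b{escaped}\b", re.IGNORECASE)


def screen(records, include_patterns, exclude_patterns, year_from):
    """Keep records that meet the year limit, match every include pattern,
    and match no exclude pattern."""
    kept = []
    for rec in records:
        text = f"{rec['title']} {rec.get('abstract', '')}"
        if rec["year"] < year_from:
            continue
        if not all(wildcard_to_regex(p).search(text) for p in include_patterns):
            continue
        if any(wildcard_to_regex(p).search(text) for p in exclude_patterns):
            continue
        kept.append(rec)
    return kept


# Invented placeholder records, for illustration only.
records = [
    {"title": "EEG-based brain-computer interfaces for motor imagery", "year": 2021},
    {"title": "A survey of invasive neural interfacing in animals", "year": 2022},
    {"title": "Motor imagery classification with EEG", "year": 2009},
]
selected = screen(
    records,
    include_patterns=["EEG", "imag*"],
    exclude_patterns=["animal*"],
    year_from=2015,
)
print([rec["title"] for rec in selected])
```

In practice most databases apply wildcards and filters on their side; a local pass like this is mainly useful for re-screening exported results against written criteria.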

After searching, the workflow shifts to managing outputs. Results should be documented and organized so they can be used later in writing. The transcript mentions saving searches and notes, exporting references (including formats like BibTeX), and using reference management tools such as Zotero and Mendeley. The overall aim is to keep the process traceable and efficient, especially when moving toward structured review formats such as systematic reviews, meta-analyses, rapid reviews, scoping reviews, mixed-methods reviews, and related review types.
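To make the export step concrete, here is a minimal sketch that removes duplicate records (the same paper often comes back from several databases) and writes the remainder as BibTeX text that a reference manager can import. It uses only plain Python rather than any reference manager's API. The entry structure, the function names, and the placeholder record are all assumptions for illustration.

```python
def deduplicate(entries):
    """Drop entries whose titles match after lowercasing and whitespace cleanup."""
    seen, unique = set(), []
    for entry in entries:
        normalized = " ".join(entry["fields"]["title"].lower().split())
        if normalized not in seen:
            seen.add(normalized)
            unique.append(entry)
    return unique


def to_bibtex(entry):
    """Render one entry as a BibTeX record."""
    fields = ",\n".join(f"  {k} = {{{v}}}" for k, v in entry["fields"].items())
    return f"@{entry['type']}{{{entry['key']},\n{fields}\n}}"


# Placeholder entries, not real references: the same record from two sources.
entries = [
    {"type": "article", "key": "placeholder2020",
     "fields": {"title": "A Placeholder Title", "year": "2020"}},
    {"type": "article", "key": "placeholder2020dup",
     "fields": {"title": "a  placeholder title", "year": "2020"}},
]
unique = deduplicate(entries)
bibtex_text = "\n\n".join(to_bibtex(e) for e in unique)
print(bibtex_text)
```

Title matching is a deliberately crude duplicate check. Comparing DOIs, where available, is usually more reliable.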

Finally, the search is treated as iterative: run the search, check results, refine keywords and queries, and repeat across databases until the literature set is sufficiently relevant. That loop (define the question, build a keyword strategy, search across databases, screen with criteria, and manage references) forms the backbone of a systematic literature review pipeline.
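The run, check, refine cycle can be sketched as a loop that also keeps a search log, which helps keep the process traceable. Everything here is a simplified assumption: `run_search` is a stand-in that filters a tiny in-memory list of invented titles rather than querying a real database, and "sufficiently relevant" is approximated by a hit-count threshold, which is far cruder than a researcher's judgment.

```python
from datetime import date


def run_search(terms, corpus):
    """Stand-in for a database search: titles that contain every term."""
    return [t for t in corpus if all(term.lower() in t.lower() for term in terms)]


def iterative_search(initial_terms, refinements, corpus, max_hits):
    """Add one refinement term per round until the result set is small enough
    or no refinements remain. Returns the final hits and a log of every run."""
    log = []
    terms = list(initial_terms)
    pending = list(refinements)
    while True:
        hits = run_search(terms, corpus)
        log.append({"date": date.today().isoformat(),
                    "terms": list(terms), "hits": len(hits)})
        if len(hits) <= max_hits or not pending:
            return hits, log
        terms.append(pending.pop(0))


# Invented titles, for illustration only.
corpus = [
    "BCI overview",
    "EEG BCI with motor imagery",
    "EEG BCI for spelling",
    "Invasive BCI",
]
hits, log = iterative_search(["BCI"], ["EEG", "motor imagery"], corpus, max_hits=1)
for row in log:
    print(row["terms"], "->", row["hits"], "hits")
```

The log records which terms were used in each round and how many results came back, which is the kind of record a systematic review typically needs to report.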

Cornell Notes

A strong literature search is built around a defined research question, then executed through iterative keyword strategy and database-specific searching. Researchers brainstorm keywords and synonyms, narrow broad topics into specific sub-areas (e.g., EEG-based or motor imagery–based brain-computer interfaces), and tailor queries to databases such as Google Scholar, Semantic Scholar, Scopus, Web of Science, and Lens.org. The search then becomes repeatable: run, check results, refine queries, and re-run as needed. Inclusion/exclusion criteria, limits/filters, and techniques like wildcards help improve relevance. Finally, results are documented and exported for later writing using tools like Zotero or Mendeley, supporting literature reviews and systematic review workflows.

Why does a literature search need a research question before any database searching begins?

How does keyword narrowing improve search results in academic databases?

What does “construct a search strategy” mean in practice?

Why run searches across multiple databases instead of relying on a single platform?

How do inclusion/exclusion criteria and screening steps fit into the search process?

What should happen to search results after retrieval so writing becomes easier?

Review Questions

- What steps convert a broad topic into a searchable research question and keyword set?

- How would you narrow a broad domain (like “brain-computer interface”) into multiple sub-areas for better retrieval?

- Why is it necessary to refine queries and repeat searches across databases rather than running a single search once?

Key Points

1. Start by defining the research question and topic scope before searching, because it drives keyword selection and later screening.

2. Brainstorm keywords and synonyms, then narrow broad concepts into specific dimensions to improve relevance.

3. Build database-specific search strategies instead of using one generic query across platforms.

4. Run searches across multiple databases (e.g., Google Scholar, Semantic Scholar, Scopus, Web of Science, Lens.org) to improve coverage.

5. Use inclusion/exclusion criteria, limits, filters, and techniques like wildcards to reduce irrelevant results.

6. Treat searching as iterative: run, review results, refine queries, and repeat until the set is sufficiently relevant.

7. Export and manage references using tools like Zotero or Mendeley (including formats like BibTeX) to support later writing and review workflows.