Livecoding: Getting Started with LLMs, by Jeremy Howard

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

MAP@3 rewards ranking the correct option within the top three choices, so systems should optimize for top-k placement rather than single-choice accuracy.

Briefing

The core takeaway is that strong performance on an LLM multiple-choice science benchmark comes less from clever prompting and more from disciplined exploratory data analysis (EDA)—including learning how the evaluation metric can be “gamed” by surface patterns—and from building baselines that mirror how the model will be scored. The walkthrough uses a Kaggle science exam competition dataset generated from Wikipedia passages and GPT-3.5, then demonstrates how to inspect the data, test GPT-3.5 as a baseline, and iterate toward better scoring strategies.

The competition itself is framed around two constraints: it tests whether smaller models can answer science questions under limited compute, and it measures success using mean average precision at 3 (MAP@3)—meaning the correct option must appear somewhere in the model’s top three ranked answers, not necessarily as the single best choice. That scoring rule shifts the optimization target away from “always be correct” toward “rank the right answer highly enough.” The dataset generation process matters just as much: Wikipedia passages are used to create questions, GPT-3.5 generates the multiple-choice items and selects the correct option, and the “correct” answer may reflect GPT-3.5’s interpretation rather than Wikipedia’s factual correctness. This distinction forces a strategy that treats the benchmark as a model-mimicry problem, not a pure knowledge test.

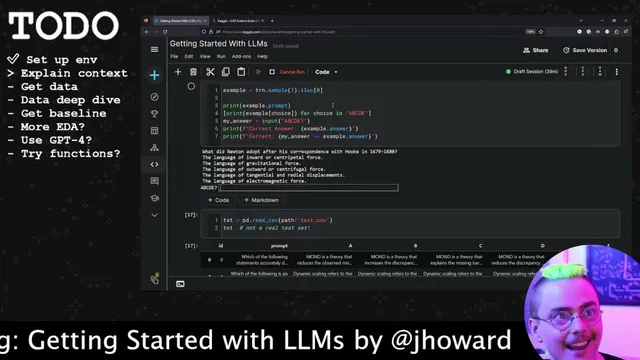

Before writing any modeling code, the session emphasizes doing EDA by hand: manually answering sample questions to understand what kinds of information are required. The presenter finds that many questions hinge on highly specific details rather than general science knowledge. More importantly, repeated attempts reveal that simple heuristics—like choosing answers whose text overlaps with key terms shared across options, or selecting the longest option—can sometimes land the correct answer within the top three. Those observations suggest a practical “cheat surface” for models: they may learn shallow textual patterns that correlate with the correct option, even without deep understanding.

The notebook then establishes baselines by running GPT-3.5 turbo across the dataset. Because API calls are slow and costly, the approach uses concurrency (thread pool execution) and includes a rough cost estimate based on prompt length in tokens/words. The baseline scoring lands around 78 (with partial credit logic tied to whether the correct option appears in first, second, or third rank), which is described as “decent” for a naive setup.

From there, the session tests a more structured prompting approach: a two-step conversational flow where the model first reasons through options and then outputs a ranked set of A–E choices. On a resistivity example where the naive prompt fails, the more deliberate “think then choose” interaction corrects the answer. The walkthrough also warns that chain-of-thought style explanations can be unreliable—models can produce plausible rationalizations that don’t reflect the true reasoning.

Finally, the session explores data generation as an extension of EDA: generating plausible incorrect alternatives using GPT-3.5 so the training set better matches the benchmark’s multiple-choice style. The attempt highlights real-world failure modes—duplicate or malformed alternatives, inconsistent formatting, and the need for validation checks—reinforcing the broader message: LLMs accelerate data work, but the resulting data still requires careful quality control.

Overall, the session argues for a repeatable workflow: inspect the dataset deeply (especially how evaluation rewards ranking), run a baseline that matches the scoring format, mine hard errors to understand whether mistakes come from the model or the dataset, and only then invest in prompt engineering, function calling, or synthetic data generation.

Cornell Notes

The walkthrough targets a Kaggle science multiple-choice benchmark where success depends on ranking: mean average precision at 3 (MAP@3) rewards having the correct option within the model’s top three choices. Because the dataset is generated from Wikipedia passages and GPT-3.5, the “correct” answer can reflect GPT-3.5’s interpretation rather than Wikipedia’s factual truth, so strategy must mimic the benchmark’s data-generating process. The session demonstrates EDA by manually answering questions, uncovering that surface heuristics (like answer-length or shared phrasing) can sometimes correlate with correctness. It then builds baselines using GPT-3.5 turbo, estimates API cost, and improves one failure case by prompting the model to evaluate options before selecting a ranked A–E output. Finally, it experiments with generating plausible incorrect alternatives for synthetic training data, exposing formatting and duplication pitfalls that require validation.

Why does MAP@3 change how you should approach “getting the right answer” in this benchmark?

What makes this competition different from a pure “science knowledge” test?

What does manual EDA reveal about how models might succeed on these questions?

How does the notebook build a baseline that matches the evaluation format?

Why can “chain-of-thought” style prompting help, and why is it risky to trust the explanations?

What goes wrong when generating synthetic multiple-choice alternatives with an LLM?

Review Questions

- How does MAP@3 scoring change the target behavior of a multiple-choice LLM system compared with accuracy?

- What role does the dataset’s GPT-3.5 + Wikipedia generation process play in determining what “correct” means for model training and evaluation?

- When improving prompts, what evidence in the session suggests that failures may come from dataset labeling/creation rather than model reasoning?

Key Points

- 1

MAP@3 rewards ranking the correct option within the top three choices, so systems should optimize for top-k placement rather than single-choice accuracy.

- 2

The benchmark’s “correct answers” are produced via GPT-3.5 over Wikipedia passages, so correctness may reflect GPT-3.5’s interpretation rather than purely factual Wikipedia content.

- 3

Manual EDA can uncover exploitable correlations (e.g., answer-length or shared phrasing) that let models score without deep understanding.

- 4

A baseline that matches the evaluation format (ranked A–E output) is essential before investing in prompt engineering or fine-tuning.

- 5

API-based baselines require cost and latency planning; rough token/word estimates plus concurrency help keep runs feasible.

- 6

Structured prompting (evaluate options, then rank) can fix specific errors, but chain-of-thought rationales can be unreliable as evidence of true reasoning.

- 7

Synthetic data generation for multiple-choice alternatives needs validation for formatting, duplication, and plausibility to avoid teaching the model the wrong patterns.