Llama 2: Full Breakdown

Based on AI Explained's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Llama 2’s upgrade over Llama 1 is driven by more training tokens, more robust data cleaning, doubled context length, and extensive chat fine-tuning.

Briefing

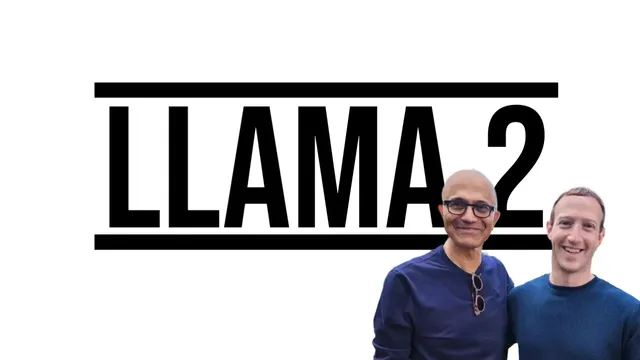

Meta’s Llama 2 lands as a more capable open-weight successor to Llama 1, with the biggest gains coming from a larger training run, a longer context window, and heavy fine-tuning for chat. The benchmark picture is still mixed, though, especially on coding and some reasoning-style tests. The most consequential headline is that Llama 2 is trained on 40% more total tokens than Llama 1, uses more robust data cleaning, and doubles the context length, while also undergoing tens of millions of dollars’ worth of chat-oriented fine-tuning. In practice, that combination pushes Llama 2 ahead of most open-source competitors in broad language tasks, even if it doesn’t fully dominate every category.
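The headline numbers can be sanity-checked with a few lines of arithmetic. This is only a sketch: the Llama 1 token count and both context lengths are not stated in this summary and are assumed here from Meta's public model descriptions, and the helper name `percent_increase` is made up for illustration.

```python
# Assumed figures (not given in the summary itself):
LLAMA1_TOKENS = 1.4e12   # pre-training tokens for the largest Llama 1 models (assumed)
LLAMA2_TOKENS = 2.0e12   # "roughly 2 trillion tokens" for Llama 2
LLAMA1_CONTEXT = 2048    # context length in tokens (assumed)
LLAMA2_CONTEXT = 4096    # doubled context length (assumed)


def percent_increase(old: float, new: float) -> float:
    """Return how much larger `new` is than `old`, in percent."""
    return (new - old) / old * 100.0


token_gain = percent_increase(LLAMA1_TOKENS, LLAMA2_TOKENS)
context_ratio = LLAMA2_CONTEXT / LLAMA1_CONTEXT

print(f"{token_gain:.0f}% more tokens, {context_ratio:.0f}x context")
```

Under these assumptions the token increase comes out slightly above 40%, which is consistent with the rounded figure quoted above.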

Across the benchmark suite, Llama 2 is deliberately compared against Llama 1 and other well-known open-source models, not against GPT-4. The results trend toward “clear improvement” over open models, with a major lift on knowledge-heavy evaluation such as MMLU, where it shows strong coverage across subjects. Human evaluation paints a more nuanced story: Llama 2 is described as not amazing at coding, and it also shows “false refusal” behavior—declining to answer certain prompts even when the task is straightforward. That refusal pattern shows up in experiments tied to common-sense question answering (HellaSwag), where the model reportedly refuses a query rather than producing an answer.

The training and safety pipeline is a central part of the story. After pre-training on roughly 2 trillion tokens, the model’s loss is still falling with no sign of saturation, suggesting the training run could have been extended. For alignment, Meta uses reinforcement learning from human feedback (RLHF) built on reward modeling: humans rank model outputs, and the reward model learns which responses are preferred. Two reward models are trained separately, one optimized for helpfulness and one for safety, which creates a measurable trade-off. As safety training increases, helpfulness scores can drop, producing more “can’t satisfy your request” style responses.
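The ranking step can be illustrated with a pairwise preference loss of the kind commonly used to train reward models. This is a minimal sketch, not Meta's implementation: the function name, the `margin` parameter, and all scores below are hypothetical values chosen for illustration.

```python
import math


def pairwise_ranking_loss(score_chosen: float, score_rejected: float,
                          margin: float = 0.0) -> float:
    """Compute -log(sigmoid(score_chosen - score_rejected - margin)).

    The loss is small when the reward model scores the human-preferred
    response well above the rejected one, and large when it does not.
    """
    diff = score_chosen - score_rejected - margin
    # Numerically stable form of log(1 + exp(-diff)).
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


# Hypothetical scores for one prompt with two candidate responses:
# a direct answer and a refusal.
helpfulness_scores = {"answer": 1.8, "refusal": -0.9}
safety_scores = {"answer": -0.4, "refusal": 1.2}

# Assume helpfulness annotators preferred the answer.
help_loss = pairwise_ranking_loss(helpfulness_scores["answer"],
                                  helpfulness_scores["refusal"])

# Assume safety annotators preferred the refusal.
safe_loss = pairwise_ranking_loss(safety_scores["refusal"],
                                  safety_scores["answer"])

print(f"helpfulness loss: {help_loss:.3f}, safety loss: {safe_loss:.3f}")
```

In this toy setup the two reward models prefer opposite responses to the same prompt, which is one way to picture the helpfulness versus safety trade-off: pushing the policy toward what the safety model rewards can move it away from what the helpfulness model rewards.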

On safety and governance, the release rationale is contested. Meta argues that open sourcing promotes transparency, democratizes access, and levels the playing field for organizations worldwide. Critics point to concerns that powerful models can be misused, and the transcript notes a U.S. Senate letter urging caution. Meta’s paper responds by emphasizing tuning and red-teaming, but the responsible-use guide is described as vague, and safety testing is reported as conducted in English.

Finally, the release strategy is framed as both technical and political. The transcript highlights that open access can accelerate research—especially for multimodal and robotics work—because researchers can build beyond API-only constraints. It also notes that Llama 2 is expected to appear on phones and PCs, with licensing terms that may restrict certain uses for very large user bases. Even within the open ecosystem, early derivatives are already emerging, meaning Llama 2’s impact will likely spread quickly through fine-tunes and new model variants.

Overall, Llama 2 looks like a meaningful step forward for open-weight chat and general language performance, but the gains are uneven: coding and some reasoning benchmarks remain areas where other models can match or outperform it, and safety alignment introduces both refusals and occasional over-refusal.

Cornell Notes

Llama 2 is positioned as a step up from Llama 1 through more training data (about 40% more total tokens), more robust data cleaning, doubled context length, and substantial chat fine-tuning. Benchmarks show strong gains over many open-source models, especially on knowledge-style tests like MMLU, but results are mixed on coding and some common-sense tasks, with reports of “false refusal” where the model declines prompts it should answer. Alignment relies on reinforcement learning from human feedback (RLHF) using two reward models, one for helpfulness and one for safety, creating a measurable trade-off between being responsive and being cautious. The open-release strategy is also debated, balancing transparency and research acceleration against misuse risks and the limits of English-only safety testing.

What concrete training changes distinguish Llama 2 from Llama 1, and why do they matter?

How do benchmark results characterize Llama 2’s strengths and weaknesses?

What is “false refusal,” and what example behavior is described?

How does reinforcement learning from human feedback shape Llama 2’s helpfulness vs safety?

Why does the transcript highlight the reward model’s initialization from a chat checkpoint?

What governance and safety concerns accompany open release?

Review Questions

- Which specific training and alignment changes are credited with Llama 2’s performance gains over Llama 1?

- How does using two reward models (helpfulness and safety) create measurable trade-offs in behavior?

- What does “false refusal” imply about Llama 2’s safety alignment, and why might it be a deployment problem even without malicious intent?

Key Points

1. Llama 2’s upgrade over Llama 1 is driven by more training tokens, more robust data cleaning, doubled context length, and extensive chat fine-tuning.

2. Benchmark comparisons emphasize open-source rivals rather than GPT-4, showing broad gains but not uniform dominance across tasks.

3. Human evaluation suggests weaker performance in coding, and common-sense tests can be affected by refusal behavior.

4. Alignment uses reinforcement learning from human feedback via two reward models, one for helpfulness and one for safety, creating a trade-off between responsiveness and caution.

5. Reward models are initialized from chat checkpoints to better detect base-model errors and reduce blind spots.

6. Safety testing and guidance are described as English-focused, with developers urged to run their own safety evaluations and tuning.

7. Open release accelerates research and derivative model creation, but it also raises misuse concerns that are difficult to fully mitigate with generic safeguards.