Logarithm Fundamentals | Ep. 6 Lockdown live math

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

Logarithms are presented as the inverse of exponentiation: a tool for turning multiplicative growth into additive patterns, which makes huge, fast-changing quantities easier to plot, reason about, and compute. The lesson starts with a practical intuition. When case counts grow multiplicatively, a linear axis hides the trend, while a logarithmic axis (plotting the log of the total) turns exponential-like growth into something close to a straight line. That framing matters because it changes how distant large numbers feel: a best-fit line on a log scale can predict when a quantity will reach a threshold (such as a million cases) far more plausibly than a naive linear extrapolation. The lesson also touches on why growth in nature and engineered systems often looks multiplicative from one step to the next.
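The best-fit idea can be sketched in a few lines of Python. This is a minimal illustration, not the lesson's own calculation: the case counts are synthetic (100 cases on day 0, growing 15% per day, both made-up numbers), and `fit_line` is a hypothetical helper implementing ordinary least squares.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Synthetic data (an assumption for illustration only).
days = list(range(30))
cases = [100 * 1.15 ** d for d in days]

# On a log axis the line is fitted to log10(cases), not to cases.
log_cases = [math.log10(c) for c in cases]
slope, intercept = fit_line(days, log_cases)

# The slope of the fitted line is log10 of the daily growth factor.
daily_factor = 10 ** slope

# Solve slope * day + intercept = log10(1_000_000) = 6 for the day.
day_at_million = (6 - intercept) / slope

print(f"daily growth factor: {daily_factor:.3f}")
print(f"one million cases around day {day_at_million:.1f}")
```

Fitting a straight line to the raw counts instead would badly underestimate how soon the threshold arrives, which is the point of switching to the log scale.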

From there, the transcript pivots to definitions and notation. A key question from Twitter, whether exponentiation’s inverse is nth roots or logarithms, gets answered by showing that the same relationship can be written three ways: 10^3 = 1000, the cube root of 1000 = 10, and log base 10 of 1000 = 3. The “triangle” notation is used to make the inverse relationship concrete: one fact ties together a base, an exponent, and a value, and each operation solves for a different one. Exponentiation asks for the value, roots ask for the base, and logarithms ask for the exponent. The lesson also clarifies conventions: “log” typically means log base 10, while ln denotes natural log (base e), and in computer science “log” often defaults to base 2.
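The three readings of the same fact can be checked with Python's standard `math` module. This is only a sketch; the variable names are illustrative. One caveat on conventions: Python's one-argument `math.log` is the natural log, not base 10, so it differs from the lesson's default meaning of “log”.

```python
import math

base, exponent, value = 10, 3, 1000

# Exponentiation: base and exponent are known, the value is unknown.
assert base ** exponent == value

# Root: exponent and value are known, the base is unknown.
recovered_base = value ** (1 / exponent)
assert math.isclose(recovered_base, base)

# Logarithm: base and value are known, the exponent is unknown.
recovered_exponent = math.log(value, base)
assert math.isclose(recovered_exponent, exponent)

# Base conventions as spelled in Python's standard library.
common = math.log10(1000)    # base 10, the lesson's "log"
natural = math.log(math.e)   # base e, written ln in the lesson
binary = math.log2(8)        # base 2, common in computer science
```

The comparisons use `math.isclose` because roots and logs are computed in floating point and may be off in the last digit.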

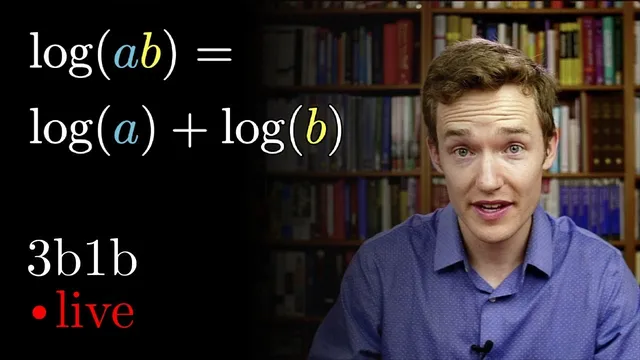

A sequence of quiz-style problems then builds the core logarithm rules through intuition, especially the “zero-counting” idea for powers of 10: log base 10 of a power of 10 counts its zeros. The first major property is the product rule: log(A·B) = log(A) + log(B), because multiplying powers of 10 adds their zero counts. Next comes the power rule: log(x^n) = n·log(x), explained as “the exponent hops down in front” because raising to the nth power multiplies the number of zeros by n. Another rule addresses swapping base and argument: log_a(b) = 1 / log_b(a), illustrated with log_1000(10) = 1/3, the reciprocal of log_10(1000) = 3. The transcript also stresses what does *not* simplify: log(a+b) does not equal log(a)+log(b) in general, and adding inside a log is “weird” compared with multiplying inside.
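These rules are easy to spot-check numerically. The sketch below uses powers of 10 so the zero-counting intuition stays visible; the specific values of `a`, `b`, and `n` are arbitrary choices, not taken from the lesson.

```python
import math

a, b, n = 100.0, 1000.0, 3   # 2 zeros and 3 zeros

# Product rule: zeros add when powers of 10 are multiplied (5 = 2 + 3).
assert math.isclose(math.log10(a * b), math.log10(a) + math.log10(b))

# Power rule: raising to the nth power multiplies the zero count (6 = 3 * 2).
assert math.isclose(math.log10(a ** n), n * math.log10(a))

# Base swap: log_1000(10) is the reciprocal of log_10(1000), i.e. 1/3.
assert math.isclose(math.log(10, 1000), 1 / math.log(1000, 10))

# No rule for sums: log10(100 + 1000) is a little over 3, not 2 + 3 = 5.
log_of_sum = math.log10(a + b)
sum_of_logs = math.log10(a) + math.log10(b)
assert not math.isclose(log_of_sum, sum_of_logs)
```

A passing check on a few numbers is not a proof, but a single failing case, as in the last assertion, is enough to rule out a proposed identity.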

The real-world payoff arrives with logarithmic scales. The Richter scale is used to show that each +1 step corresponds to multiplying earthquake energy (TNT-equivalent) by about 32, so small-looking differences in magnitude can imply enormous energy changes. The same logic is connected to decibels in audio, where +10 dB corresponds to multiplying intensity by 10.
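As a rough sketch, both scales can be written as small functions. The function names are hypothetical, and the factor of 32 per magnitude step is the approximate figure quoted above rather than a precise constant.

```python
import math

def energy_ratio(magnitude_difference, factor_per_step=32):
    """Energy multiplier for a gap in magnitude, using roughly 32x per step."""
    return factor_per_step ** magnitude_difference

def intensity_ratio(decibel_difference):
    """Intensity multiplier for a gap in decibels: each +10 dB is a factor of 10."""
    return 10 ** (decibel_difference / 10)

def decibel_difference(ratio):
    """Inverse direction: the decibel gap that corresponds to an intensity ratio."""
    return 10 * math.log10(ratio)

print(energy_ratio(1))       # 32
print(energy_ratio(2))       # 1024
print(intensity_ratio(10))   # 10.0
print(intensity_ratio(30))   # 1000.0
```

A two-point gap in magnitude therefore means roughly a thousandfold difference in energy, and `decibel_difference` shows where the logarithm enters: it converts a multiplicative ratio back into an additive step on the scale.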

Finally, the lesson moves into computation and generalization. Change of base is highlighted as the practical bridge between bases: log_b(a) = log_c(a)/log_c(b). That formula enables calculator-friendly evaluation and supports a culminating challenge involving a sum of reciprocals of logs of factorials. By converting everything to a common logarithm and using the fact that logs turn products into sums, the expression collapses neatly to 1. The takeaway is that logarithm rules aren’t memorization tricks; they’re consistent translations of multiplication, exponentiation, and scaling into a language where growth becomes manageable.
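The collapse can be checked numerically. These notes do not spell out the exact expression, so the sketch below assumes the challenge has the form 1/log_2(N!) + 1/log_3(N!) + ... + 1/log_N(N!) with N = 100; `log_base` is a hypothetical helper built from the change of base formula with c = e. The reasoning behind the result: by the base-swap rule each term 1/log_n(N!) equals log_{N!}(n), and the product rule turns the sum of those logs into log_{N!}(2·3·...·N) = log_{N!}(N!) = 1.

```python
import math

def log_base(a, b):
    """log_b(a) via change of base, with the natural log as the common base."""
    return math.log(a) / math.log(b)

N = 100                    # assumed size of the challenge
f = math.factorial(N)      # Python integers are exact, so N! does not overflow

# Sum of 1 / log_n(N!) for n = 2, 3, ..., N.
total = sum(1 / log_base(f, n) for n in range(2, N + 1))

print(total)   # very close to 1, up to floating-point rounding
```

Changing `N` to any other integer of at least 2 should give the same answer, since the argument above does not depend on the particular value.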

Cornell Notes

Logarithms convert multiplicative relationships into additive ones, making exponential-like growth easier to see and compute. The transcript builds this from the inverse relationship between exponentiation and logarithms (e.g., 10^3 = 1000 ↔ log_10(1000)=3), then establishes key rules using the “count the zeros” intuition for powers of 10. Core identities include log(A·B)=log(A)+log(B) and log(x^n)=n·log(x), plus the base-swap rule log_a(b)=1/log_b(a). It also emphasizes a boundary: log(a+b) does not simplify into log(a)+log(b). Change of base, log_b(a)=log_c(a)/log_c(b), ties everything together for real calculations and powers the final factorial/log challenge that simplifies to 1.

Why does a logarithmic axis make exponential growth look linear?

How do logarithms relate to exponentiation, roots, and “inverse” operations?

What are the two most important logarithm rules and how are they justified here?

What happens when the log argument is a sum, like log(a+b)?

How does swapping the base and the argument work?

Why is change of base so useful, and what does it look like?

Review Questions

- Use the product rule and power rule to simplify log(3x^5) assuming log means base 10. Show each step.

- Explain why log(a+b) cannot be simplified using the same rule as log(A·B). Give a quick numerical counterexample.

- Derive the change of base formula log_b(a)=log_c(a)/log_c(b) starting from the definition of logarithms as inverse exponentials.

Key Points

1. A logarithmic axis plots log(total) so multiplicative growth becomes additive, making exponential-like trends easier to interpret.

2. Exponentiation and logarithms are inverse relationships: base^exponent=value corresponds to log_base(value)=exponent.

3. The product rule holds: log(A·B)=log(A)+log(B), supported by the “zeros add when you multiply powers of 10” intuition.

4. The power rule holds: log(x^n)=n·log(x), supported by the idea that raising to n multiplies the zeros count.

5. Swapping base and argument follows log_a(b)=1/log_b(a).

6. No comparable simplification exists for log(a+b); addition inside the log generally does not split into a sum of logs.

7. Change of base enables computation across bases: log_b(a)=log_c(a)/log_c(b), and it can simplify complex expressions like the factorial/log challenge to a single value.