Microsoft's Phi 3.5 - The latest SLMs

Based on Sam Witteveen's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Phi 3.5 mini instruct is a 3.8B, fast, locally runnable upgrade with a 128K context window and reported gains on multilingual and multimodal-related benchmarks.

Briefing

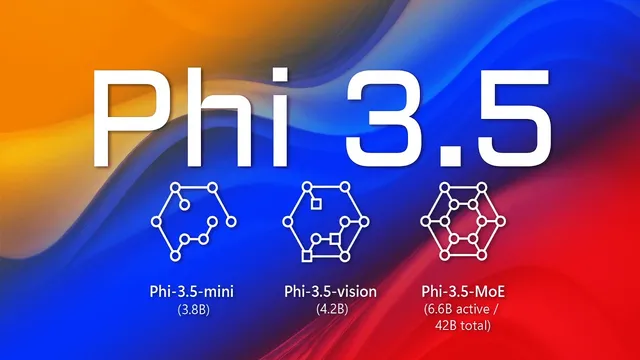

Microsoft has expanded its Phi 3 lineup with three new Phi 3.5 models: two instruction-tuned language models and an updated vision model. Together they push small, locally runnable systems closer to the performance of much larger competitors. The headline is Phi 3.5 mini instruct, a 3.8B-parameter model designed for speed and local deployment. It shows clear benchmark gains over the earlier mini release (labeled Phi 3.1 mini), including improvements on multilingual tasks and non-European languages, while keeping a 128K context window.

Across the reported MMLU-style results, Phi 3.5 mini instruct posts a “decent bump” over the prior mini variant, with especially noticeable gains in multimodal-related scoring and in languages such as Arabic, Chinese, Thai, Japanese, and Korean. Microsoft also positions the model against larger systems (Gemma 2 9B, Gemini Flash, and GPT-4o mini), which suggests that model choice should depend on the language and task mix. On long-context behavior, the model is described as supporting up to 128K tokens, which should be enough for most users. At shorter context lengths it performs close to an 8B-class baseline (Llama 3.1 8B), while at longer context lengths the larger Llama 3.1 model comes out ahead.

The second release, Phi 3.5 MOE instruct, brings a Mixture-of-Experts approach to Phi 3.5 with a much larger overall configuration: it is described as a 16×3.8B model with roughly 6.6B parameters active in each forward pass. It is trained on nearly 5T tokens (versus 3.4T for the mini) and also supports a 128K context window. The benchmarks are presented as strong for an open model: MMLU results are claimed to be on par with Gemini Flash and GPT-4o mini, and GSM8K results are said to beat Llama 3.1 and Gemma 2 9B while still trailing GPT-4o mini. The tradeoff is practicality. Local use demands substantial GPU memory, with reported VRAM usage of 33 GB or more, which implies A100-class hardware or multiple consumer GPUs.
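To illustrate how a Mixture-of-Experts model can store far more parameters than it uses for any single token, here is a minimal sketch of generic top-k expert routing. It is not Microsoft's router: the choice of two active experts per token, the softmax over the selected scores, and the toy scalar "experts" are all assumptions made for illustration.

```python
import math
import random

NUM_EXPERTS = 16  # from the "16×3.8B" description
TOP_K = 2         # assumption: two experts are active per token


def top_k_route(router_logits, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(token, experts, router_logits, k=TOP_K):
    """Mix the outputs of the k selected experts; the others are never called."""
    routes = top_k_route(router_logits, k)
    output = sum(weight * experts[i](token) for i, weight in routes)
    return output, [i for i, _ in routes]


def make_expert(scale):
    # Toy stand-in for an expert feed-forward block: scales a scalar "token".
    return lambda x: scale * x


rng = random.Random(0)
experts = [make_expert(s) for s in range(1, NUM_EXPERTS + 1)]
router_logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

output, used = moe_forward(1.0, experts, router_logits)
print(f"experts used for this token: {used} of {NUM_EXPERTS}")
```

All 16 experts still have to sit in memory because any of them may be selected for the next token, which is why the per-token compute can be small while the memory footprint stays large.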

The third model updates Phi 3 vision: a roughly 4.2B-parameter system built on top of the Phi 3 mini language model and fine-tuned on 500B tokens. Microsoft indicates it was trained between July and August, only weeks before release. Benchmark comparisons place it ahead of some smaller proprietary options (such as Gemini 1.5 Flash and GPT-4o mini) while still behind Gemini Pro and GPT-4o. It is released under an MIT license, and Microsoft pairs the release with an updated Phi 3 cookbook containing recipes for fine-tuning and end-to-end workflows using the vision model.

In hands-on testing described alongside the release, Phi 3.5 mini instruct is the most compelling day-to-day option: it runs faster than the MOE model and performs at least as well on GSM8K, including avoiding errors seen in the MOE output. The MOE model can enable more agentic and tool-use patterns (including JSON-style outputs), but it is slower and not dramatically better on the tester’s standard tasks. Overall, the new Phi 3.5 models aim to make local, private, fine-tunable assistants more capable, especially for multilingual and long-context use, without requiring a jump to much larger proprietary systems.
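As a rough sketch of what a JSON-style tool-use pattern involves on the application side, the example below pulls a JSON object out of a model reply and dispatches it to a local function. The reply text, the `tool` and `arguments` field names, and the `get_weather` function are all hypothetical; nothing here reflects a format the Phi models are documented to emit.

```python
import json


def get_weather(city):
    # Hypothetical tool; a real one would call an external service.
    return f"Sunny in {city}"


TOOLS = {"get_weather": get_weather}


def extract_json(reply):
    """Return the first JSON object embedded in a model reply, or None."""
    decoder = json.JSONDecoder()
    start = reply.find("{")
    while start != -1:
        try:
            obj, _ = decoder.raw_decode(reply, start)
            return obj
        except json.JSONDecodeError:
            # Not valid JSON from this brace; try the next one.
            start = reply.find("{", start + 1)
    return None


def run_tool_call(reply):
    """Run the tool named in the reply, or return None if the call is unusable."""
    call = extract_json(reply)
    if call is None:
        return None
    name = call.get("tool")
    args = call.get("arguments", {})
    if not isinstance(name, str) or not isinstance(args, dict):
        return None
    tool = TOOLS.get(name)
    if tool is None:
        return None
    try:
        return tool(**args)
    except TypeError:
        # The model supplied arguments the tool does not accept.
        return None


# Hypothetical model reply with prose around the JSON payload.
reply = 'Calling the tool: {"tool": "get_weather", "arguments": {"city": "Paris"}}'
print(run_tool_call(reply))
```

Returning `None` for every malformed case keeps the caller simple: it can retry the prompt or fall back to a plain-text answer whenever the model's output does not parse.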

Cornell Notes

Microsoft’s Phi 3.5 release adds three instruction- and vision-focused models: Phi 3.5 mini instruct (3.8B), Phi 3.5 MOE instruct (16×3.8B with ~6.6B parameters active per forward pass), and an updated Phi 3 vision model (~4.2B). The mini instruct is positioned as a fast, locally runnable upgrade with a 128K context window and notable gains on multilingual and multimodal-related benchmarks, including Arabic and Chinese. The MOE instruct targets higher benchmark performance but comes with heavy hardware demands (reported VRAM usage of 33 GB or more) and slower generation. The vision update is fine-tuned on 500B tokens and released under an MIT license, alongside an updated Phi 3 cookbook for vision fine-tuning and end-to-end recipes. The practical takeaway: for most local use, Phi 3.5 mini instruct offers the best balance of speed and quality, while MOE is for users who can afford the compute.
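The hardware implication of the reported VRAM figure can be checked with simple arithmetic. The sketch below is illustrative only: the GPU memory sizes and the 10% headroom reserved for other allocations are assumptions, and it ignores any overhead from splitting a model across cards.

```python
REPORTED_MOE_VRAM_GB = 33  # the reported "33+GB" figure, treated as a floor

# Assumption: illustrative memory sizes, not a list taken from the source.
GPU_SETUPS = {
    "1x 24 GB consumer card": [24],
    "2x 24 GB consumer cards": [24, 24],
    "1x 40 GB A100": [40],
}


def fits(required_gb, gpu_memory_gb, headroom=0.9):
    """True if the requirement fits in the usable share of total GPU memory.

    `headroom` is the fraction of memory assumed to be available to the model.
    """
    usable_gb = sum(gpu_memory_gb) * headroom
    return required_gb <= usable_gb


for name, cards in GPU_SETUPS.items():
    verdict = "fits" if fits(REPORTED_MOE_VRAM_GB, cards) else "does not fit"
    print(f"{name}: {verdict}")
```

Under these assumptions a single 24 GB card falls short, while a 40 GB A100 or a pair of 24 GB cards clears the bar, which matches the guidance that the MOE model needs A100-class hardware or multiple consumer GPUs.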

What makes Phi 3.5 mini instruct stand out compared with earlier Phi 3 mini variants?

How do the reported language and data-training details support the multilingual claims?

What is the compute tradeoff behind Phi 3.5 MOE instruct, and why does it matter for local deployment?

Where does Phi 3.5 MOE instruct land on benchmark comparisons, and what still limits it?

How does the updated Phi 3 vision model differ from the language models, and what practical resources come with it?

Review Questions

- Which Phi 3.5 model is most practical for local use in the transcript, and what two reasons are given for that choice?

- How do the transcript’s benchmark comparisons differ between Phi 3.5 mini instruct and Phi 3.5 MOE instruct?

- What hardware requirement is highlighted for running Phi 3.5 MOE instruct, and how does it affect who should choose it?

Key Points

1. Phi 3.5 mini instruct is a 3.8B, fast, locally runnable upgrade with a 128K context window and reported gains on multilingual and multimodal-related benchmarks.

2. Microsoft attributes the mini’s improvements to training on 3.4 trillion tokens plus newly created synthetic data for math, coding, and common sense.

3. Phi 3.5 MOE instruct uses a Mixture-of-Experts design (16×3.8B; ~6.6B parameters active per forward pass) and targets stronger benchmark performance, but it is far more demanding to run locally.

4. The MOE model is reported to require 33 GB or more of VRAM in testing, making A100-class or multi-GPU setups more realistic than single consumer cards.

5. The updated Phi 3 vision model is about 4.2B parameters, fine-tuned on 500B tokens, and released under an MIT license.

6. Microsoft pairs the releases with an updated Phi 3 cookbook, including recipes for vision fine-tuning and end-to-end workflows (including structured outputs and tool-use patterns).