ML Test Score (2) - Testing & Deployment - Full Stack Deep Learning

Based on The Full Stack's video on YouTube.

Production ML requires testing across training, inference, and serving-time change; static pre-deployment checks are not enough.

Briefing

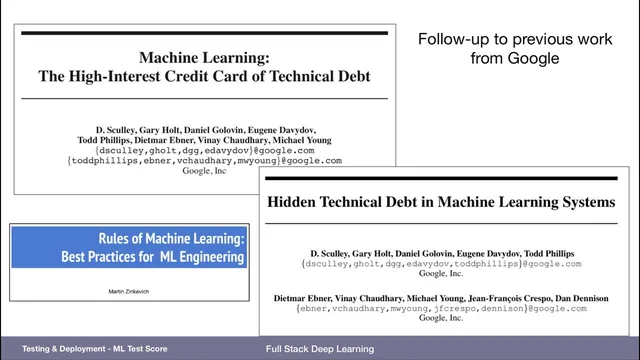

Machine learning systems accumulate “hidden technical debt” because the work doesn’t end at model training. Once a model is deployed, it becomes a living pipeline that ingests new data, depends on upstream data and infrastructure, and must be continuously validated for data drift, numerical stability, and performance regressions. A practical way to manage that complexity is the ML Test Score rubric, which breaks production readiness into distinct buckets—training/infrastructure tests, model tests for the prediction system, and serving-time monitoring for data skew and prediction quality.

The rubric starts by mapping where failures originate. Training is a process combining code and data; the training system needs infrastructure tests that ensure reproducibility and correct integration of the pipeline. The deployed model then runs inference as a prediction system; model tests should validate model specs, offline/online metric correlation, hyperparameter tuning, and debuggability. Serving-time checks focus on what changes after deployment: feature and input expectations, data distribution shifts, dependency changes, and invariants that must hold for inputs across training and serving. The goal is to catch breakages that traditional software testing misses—like a silent change in input shape or feature semantics that only appears once real traffic flows through the system.
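The idea of input invariants that hold across training and serving can be sketched as a single schema check that both pipelines call. This is a minimal illustration, not the rubric's own tooling: `FeatureSpec`, `SCHEMA`, `check_invariants`, and the feature names and ranges are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, List, Mapping, Optional


@dataclass(frozen=True)
class FeatureSpec:
    """Expected type and value range for one input feature (hypothetical format)."""
    dtype: type
    min_value: Optional[float] = None
    max_value: Optional[float] = None


# Hypothetical schema; feature names and ranges are illustrative assumptions.
SCHEMA = {
    "age": FeatureSpec(int, 0, 130),
    "session_length_s": FeatureSpec(float, 0.0, None),
    "country": FeatureSpec(str),
}


def check_invariants(row: Mapping[str, Any], schema: Mapping[str, FeatureSpec]) -> List[str]:
    """Return human-readable violations; an empty list means the row passes."""
    violations = []
    # A silently added or dropped feature is reported instead of ignored.
    for name in sorted(schema.keys() - row.keys()):
        violations.append(f"missing feature: {name}")
    for name in sorted(row.keys() - schema.keys()):
        violations.append(f"unexpected feature: {name}")
    for name, spec in schema.items():
        if name not in row:
            continue
        value = row[name]
        if not isinstance(value, spec.dtype):
            violations.append(
                f"{name}: expected {spec.dtype.__name__}, got {type(value).__name__}"
            )
            continue
        if spec.min_value is not None and value < spec.min_value:
            violations.append(f"{name}: {value} is below minimum {spec.min_value}")
        if spec.max_value is not None and value > spec.max_value:
            violations.append(f"{name}: {value} is above maximum {spec.max_value}")
    return violations
```

Running the same function in the training data loader and in the serving request handler means a change in feature semantics surfaces as an explicit violation in both places, rather than as a quiet drop in prediction quality.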

To score teams, the rubric assigns points across these dimensions, with a score of zero indicating a research-like setup rather than a production system. Scores above roughly five signal exceptional automation and monitoring; scores of around two to four suggest that some testing exists but significant gaps remain. In a study of 36 Google teams with production ML systems, the average score was under one in most categories, implying that even large organizations often treat many of these safeguards as aspirational rather than routine.
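The passage does not spell out the arithmetic behind the score. The sketch below assumes the scheme described in the published ML Test Score rubric: half a point for a test that is run manually with documented results, a full point for a test that is automated, points summed per section, and the final score taken as the minimum across sections. The section and test names are hypothetical.

```python
from enum import Enum
from typing import Dict, Mapping


class Status(Enum):
    """Points awarded per test (assumed scheme: manual = 0.5, automated = 1.0)."""
    NOT_DONE = 0.0
    MANUAL = 0.5
    AUTOMATED = 1.0


def section_scores(results: Mapping[str, Mapping[str, Status]]) -> Dict[str, float]:
    """Sum the points of every test within each section."""
    return {
        section: sum(status.value for status in tests.values())
        for section, tests in results.items()
    }


def ml_test_score(results: Mapping[str, Mapping[str, Status]]) -> float:
    """Final score is the weakest section, so one neglected area caps the total."""
    return min(section_scores(results).values())


# Hypothetical team; the test names are illustrative, not the rubric's full list.
team = {
    "data": {"feature_schema": Status.AUTOMATED, "privacy_controls": Status.MANUAL},
    "model": {"spec_review": Status.MANUAL, "offline_online_correlation": Status.NOT_DONE},
    "infrastructure": {"reproducible_training": Status.AUTOMATED, "canary_rollout": Status.AUTOMATED},
    "monitoring": {"data_skew_alerts": Status.NOT_DONE},
}
```

Under these assumptions the hypothetical team scores 0.0 overall despite solid infrastructure, because taking the minimum means a section with no monitoring tests pulls the whole score down.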

The rubric also gets specific about what “good” looks like. Data expectations should be captured in a schema, features should be justified and governed, and privacy controls must prevent sensitive fields from leaking into training or inference (for example, masking or dropping a user-name column while keeping non-sensitive user actions, or mapping identifiers to anonymized IDs). Model specs should be reviewed and unit tested; offline and online metrics should correlate; hyperparameters should be tuned; and staleness and inclusion/bias considerations should be tracked. Deployment safety mechanisms matter too: models should be rolled out via canary releases so that only a small slice of traffic uses the new model, and rollbacks should be ready if monitoring detects problems.
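The privacy example above (drop the user-name column, keep user actions, map identifiers to anonymized IDs) could look roughly like the following. This is one possible approach, not a method prescribed by the rubric: it uses a keyed hash, which is strictly pseudonymization rather than full anonymization, and the field names, the `scrub_record` helper, and the key handling are assumptions for illustration.

```python
import hashlib
import hmac
from typing import Any, Dict, Iterable, Mapping


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Map an identifier to a stable opaque token using a keyed SHA-256 hash."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def scrub_record(
    record: Mapping[str, Any],
    secret_key: bytes,
    drop_fields: Iterable[str] = ("user_name",),  # hypothetical sensitive column
    id_fields: Iterable[str] = ("user_id",),      # hypothetical identifier column
) -> Dict[str, Any]:
    """Drop sensitive fields, replace identifiers with tokens, keep everything else."""
    drop = set(drop_fields)
    ids = set(id_fields)
    cleaned = {}
    for key, value in record.items():
        if key in drop:
            continue  # never let this field reach training or inference
        if key in ids:
            cleaned[key] = pseudonymize(str(value), secret_key)
        else:
            cleaned[key] = value
    return cleaned
```

Because the token is deterministic for a given key, records from the same user can still be joined after scrubbing. In a real pipeline the key would live in a secret store rather than in code.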

For teams with limited resources, the guidance prioritizes fundamentals: make training reproducible so failures can be traced and rerun; invest in monitoring so dependency or data changes trigger alerts; and validate model quality on important data slices rather than relying solely on aggregate academic metrics. The underlying message is that production ML engineering is closer to operating a complex system than shipping a static artifact, so testing and monitoring must span the entire lifecycle, not just the moment someone hits “train.”
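To make the point about data slices concrete, here is a small sketch of per-slice accuracy next to an aggregate threshold. The `device` slice key, the example records, and the 0.75 threshold are made up for illustration.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, List, Mapping


def accuracy_by_slice(examples: Iterable[Mapping[str, Any]], slice_key: str) -> Dict[Any, float]:
    """Compute accuracy separately for each value of `slice_key`."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for example in examples:
        slice_value = example[slice_key]
        total[slice_value] += 1
        correct[slice_value] += int(example["label"] == example["prediction"])
    return {s: correct[s] / total[s] for s in total}


def failing_slices(per_slice: Mapping[Any, float], threshold: float) -> List[Any]:
    """Return the slices whose accuracy falls below the threshold."""
    return sorted(s for s, acc in per_slice.items() if acc < threshold)


# Illustrative data: aggregate accuracy is 8/10, but one slice is always wrong.
examples = (
    [{"device": "mobile", "label": 1, "prediction": 1}] * 4
    + [{"device": "desktop", "label": 0, "prediction": 0}] * 4
    + [{"device": "tablet", "label": 1, "prediction": 0}] * 2
)

per_slice = accuracy_by_slice(examples, "device")
blocked = failing_slices(per_slice, threshold=0.75)
```

Here the aggregate accuracy of 0.8 would clear a 0.75 bar, yet `blocked` contains the `tablet` slice, where every prediction is wrong. A deployment gate keyed on slices would stop this model; one keyed on the aggregate would not.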

Cornell Notes

The ML Test Score rubric treats production ML as a system with multiple failure points: training, model inference, and serving-time data changes. It organizes testing into infrastructure tests for reproducible training pipelines, model tests that validate model specs and quality (including offline/online metric correlation and numerical stability), and serving monitoring for data skew, dependency changes, and input invariants. Teams are scored across these dimensions, and a study of 36 production teams found average scores under one in most areas—suggesting many safeguards are still uncommon. For resource-constrained teams, the highest priorities are reproducible training, monitoring that detects when production breaks, and quality checks on “important data slices” where mistakes matter most to users.

Why does ML create more testing complexity than traditional software?

What does “privacy controls in the pipeline” mean in practice?

What are “model specs” and why do they need unit testing?

How do canary deployments reduce risk during model rollout?

What does “numerical stability” refer to in model testing?

If a team can’t do everything, what should it prioritize?

Review Questions

- How does the ML Test Score rubric separate responsibilities across training/infrastructure tests, model tests, and serving-time monitoring?

- Give two examples of production failures that monitoring and input invariants are meant to catch that unit tests alone would miss.

- Why might “important data slices” be more actionable than a single overall metric when deciding whether a model is safe to deploy?

Key Points

1. Production ML requires testing across training, inference, and serving-time change; static pre-deployment checks are not enough.

2. The ML Test Score rubric organizes safeguards into infrastructure tests (training reproducibility), model tests (specs, stability, quality), and serving monitoring (data skew, invariants, dependency changes).

3. Privacy controls should prevent sensitive fields from entering the model pipeline, such as masking/dropping columns or mapping identifiers to anonymized IDs.

4. Deployment safety should rely on canary rollouts and fast rollback so issues affect only a small portion of users.

5. In a study of 36 production ML teams, average rubric scores were under one across most dimensions, indicating widespread gaps in automated testing and monitoring.

6. With limited resources, prioritize reproducible training, monitoring that alerts on production breakage, and quality validation on high-stakes data slices rather than only aggregate metrics.