Multivariable Calculus 31 | Lagrangian

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

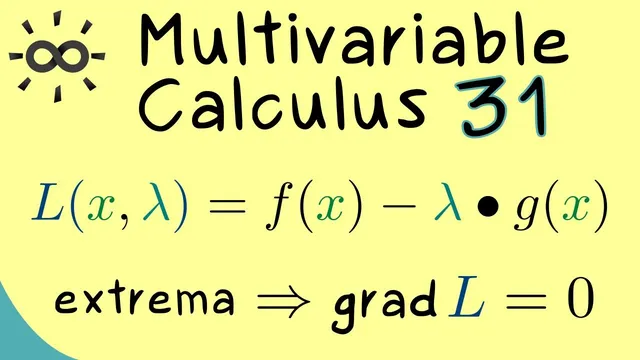

The Lagrangian packages both the objective and the constraints into one real-valued function.

Briefing

Constrained optimization in multivariable calculus gets a cleaner “recipe” once the Lagrange multipliers method is rewritten using a single object: the Lagrangian. Instead of solving separate equations for the constraint and for stationarity of the original function, the method bundles everything into one real-valued function whose gradient vanishes exactly when both the constraint and the multiplier-weighted gradient condition hold. This matters because it turns a constrained extremum problem into a system that looks unconstrained, which makes both computation and reasoning more systematic.

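The setup starts with a target function $f\colon \mathbb{R}^n \to \mathbb{R}$ and constraint functions $g_1, \dots, g_m\colon \mathbb{R}^n \to \mathbb{R}$, typically with $m < n$. Writing the constraints as a vector map $g = (g_1, \dots, g_m)\colon \mathbb{R}^n \to \mathbb{R}^m$, the constrained extrema candidates must satisfy $m$ constraint equations $g(x) = 0$ plus $n$ stationarity equations involving gradients. Those stationarity equations introduce $m$ unknown multipliers $\lambda_1, \dots, \lambda_m$, giving enough freedom to match the gradient components.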

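The key simplification is the Lagrangian. Define a real-valued function $L\colon \mathbb{R}^n \times \mathbb{R}^m \to \mathbb{R}$ by $L(x, \lambda) = f(x) + \langle \lambda, g(x) \rangle$, where $\langle \cdot, \cdot \rangle$ is the standard inner product in $\mathbb{R}^m$, i.e. $\langle \lambda, g(x) \rangle = \sum_{j=1}^{m} \lambda_j g_j(x)$. With this definition, the method becomes: compute the gradient of $L$ with respect to both sets of variables, $x$ and $\lambda$, and set it to zero.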
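As a minimal sketch of this definition, the following Python builds $L(x, \lambda) = f(x) + \langle \lambda, g(x) \rangle$ from an objective and a vector-valued constraint map. The helper name `make_lagrangian`, the sign convention (multiplier term added), and the example functions are assumptions made for illustration; they are not taken from the video.

```python
from typing import Callable, Sequence

Vector = Sequence[float]


def make_lagrangian(
    f: Callable[[Vector], float],
    g: Callable[[Vector], Vector],
) -> Callable[[Vector, Vector], float]:
    """Return L(x, lam) = f(x) + <lam, g(x)> (hypothetical helper)."""

    def L(x: Vector, lam: Vector) -> float:
        gx = g(x)
        if len(gx) != len(lam):
            raise ValueError("need one multiplier per constraint")
        # Standard inner product in R^m: sum_j lam_j * g_j(x).
        return f(x) + sum(l_j * g_j for l_j, g_j in zip(lam, gx))

    return L


# Illustrative example (an assumption): n = 2 variables, m = 1 constraint.
f = lambda x: x[0] + x[1]                    # objective
g = lambda x: [x[0] ** 2 + x[1] ** 2 - 1.0]  # constraint g(x) = 0: unit circle
L = make_lagrangian(f, g)

value = L([1.0, 2.0], [3.0])  # f = 3 and g = 4 here, so L = 3 + 3 * 4
```

On the constraint set itself the multiplier term vanishes, so `L` agrees with `f` there regardless of the multiplier values.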

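Concretely, the gradient components split into two groups. Differentiating with respect to the coordinates of $x$ yields a condition built from gradients: the $x$-part becomes $\nabla f(x) + \sum_{j=1}^{m} \lambda_j \nabla g_j(x)$. Differentiating with respect to each multiplier gives $\partial L / \partial \lambda_j = g_j(x)$, so the entire $\lambda$-gradient collapses to $g(x)$. Therefore, $\nabla L(x, \lambda) = 0$ holds exactly when both $g(x) = 0$ and $\nabla f(x) + \sum_{j=1}^{m} \lambda_j \nabla g_j(x) = 0$ are satisfied. The constrained extremum candidates are the $x$-coordinates of solutions to this combined system.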
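To see the split in a concrete case, here is a small worked example chosen for illustration (it is not taken from the video): $f(x, y) = x + y$ with the single constraint $g(x, y) = x^2 + y^2 - 1 = 0$, assuming the sign convention $L = f + \langle \lambda, g \rangle$.

```latex
\begin{align*}
L(x, y, \lambda) &= x + y + \lambda\,(x^2 + y^2 - 1), \\
\frac{\partial L}{\partial x} &= 1 + 2\lambda x = 0, \qquad
\frac{\partial L}{\partial y} = 1 + 2\lambda y = 0, \\
\frac{\partial L}{\partial \lambda} &= x^2 + y^2 - 1 = 0, \\
x = y &= -\frac{1}{2\lambda}
\;\Longrightarrow\; \frac{1}{2\lambda^2} = 1
\;\Longrightarrow\; \lambda = \pm\frac{1}{\sqrt{2}} .
\end{align*}
```

The two solutions are $(x, y, \lambda) = (1/\sqrt{2},\, 1/\sqrt{2},\, -1/\sqrt{2})$ with $f = \sqrt{2}$ and $(-1/\sqrt{2},\, -1/\sqrt{2},\, 1/\sqrt{2})$ with $f = -\sqrt{2}$. With the opposite sign convention $L = f - \langle \lambda, g \rangle$, the candidate points are the same and only the sign of $\lambda$ flips.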

Regularity assumptions matter for the method’s reliability: if the Jacobian of $g$ has full rank $m$ (equivalently, $J_g(x)$ is a surjective linear map), then at a constrained local extremum $\tilde{x}$ there exist multipliers $\lambda$ such that $\nabla L(\tilde{x}, \lambda) = 0$. The practical workflow is also emphasized: (1) correctly formulate the constraint function $g$, (2) check the Jacobian regularity on the constraint set $\{x : g(x) = 0\}$ to avoid exceptional points, (3) build $L$ and solve $\nabla L = 0$ to get a list of candidate points, usually finite, and (4) decide whether each candidate is a maximum or minimum, typically using second-order information via the Hessian of $L$ for $C^2$ functions, though in many applications prior knowledge about $f$ suffices. The series closes by framing this Lagrangian-based method as the necessary-condition engine for extrema under constraints.
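The four workflow steps can be traced on a toy problem. The sketch below is an illustration under stated assumptions, not the video's own example: it uses $f(x, y) = x + y$ on the unit circle $g(x, y) = x^2 + y^2 - 1 = 0$, the sign convention $L = f + \lambda g$, and candidates that were solved by hand. The code only verifies them and classifies them by comparing values of $f$.

```python
import math

# Step 1: formulate the objective and the constraint (illustrative choice).
def f(x, y):
    return x + y

def g(x, y):
    return x * x + y * y - 1.0

def jacobian_g(x, y):
    # 1 x 2 Jacobian of g; it has full rank 1 unless it is the zero row.
    return (2.0 * x, 2.0 * y)

def grad_L(x, y, lam):
    # Gradient of L(x, y, lam) = f(x, y) + lam * g(x, y), computed by hand.
    return (1.0 + 2.0 * lam * x, 1.0 + 2.0 * lam * y, g(x, y))

# Step 2: the Jacobian vanishes only at (0, 0), which violates g = 0,
# so every point of the constraint set is regular.
assert abs(g(0.0, 0.0)) > 0.0

# Step 3: candidates (x, y, lam) obtained by solving grad L = 0 by hand.
s = 1.0 / math.sqrt(2.0)
candidates = [(s, s, -s), (-s, -s, s)]
residuals = [max(abs(c) for c in grad_L(*p)) for p in candidates]
assert all(any(abs(c) > 0.0 for c in jacobian_g(x, y)) for x, y, _ in candidates)

# Step 4: the circle is compact, so f attains a maximum and a minimum on it;
# comparing f at the candidates classifies them without a Hessian test.
values = [f(x, y) for x, y, _ in candidates]
x_max, y_max, _ = candidates[values.index(max(values))]
x_min, y_min, _ = candidates[values.index(min(values))]
```

Here step 4 relies on prior knowledge (compactness of the constraint set) rather than on the Hessian of $L$; when no such argument is available, a second-order test would be needed instead.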

Cornell Notes

The Lagrange multipliers method can be rewritten using a single function, the Lagrangian $L$, which turns constrained optimization into a system where a gradient must vanish. For constraints $g\colon \mathbb{R}^n \to \mathbb{R}^m$ with $m < n$ and objective $f\colon \mathbb{R}^n \to \mathbb{R}$, the Lagrangian is defined as $L(x, \lambda) = f(x) + \langle \lambda, g(x) \rangle$. Setting $\nabla L(x, \lambda) = 0$ produces two conditions at once: $\nabla_\lambda L = 0$, which forces $g(x) = 0$, and $\nabla_x L = 0$, which gives the multiplier-weighted stationarity condition. Under a Jacobian regularity assumption, constrained local extrema must satisfy these equations for some $\lambda$.

Why does defining the Lagrangian simplify constrained extrema problems?

What do the derivatives of $L$ with respect to the multipliers $\lambda_j$ tell you?

How does the $x$-gradient condition relate to the original objective and the constraint gradients?

What role does the Jacobian regularity assumption for $g$ play?

After solving $\nabla L = 0$, how do you decide whether a candidate point is a maximum or minimum?

Review Questions

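- Given $f\colon \mathbb{R}^n \to \mathbb{R}$ and constraints $g\colon \mathbb{R}^n \to \mathbb{R}^m$, write the Lagrangian $L(x, \lambda)$ and state what equations result from setting $\nabla L = 0$.

- Explain why $\nabla_\lambda L(x, \lambda) = 0$ forces the constraint $g(x) = 0$.

- What regularity condition on $g$ is needed so that constrained local extrema must satisfy the Lagrangian gradient condition for some multipliers?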

Key Points

1. The Lagrangian packages both the objective and the constraints into one real-valued function.

2. Solving $\nabla L(x, \lambda) = 0$ produces both the constraint equations $g(x) = 0$ and the stationarity condition $\nabla f(x) + \sum_{j=1}^{m} \lambda_j \nabla g_j(x) = 0$.

3. Each multiplier derivative satisfies $\partial L / \partial \lambda_j = g_j(x)$, so the $\lambda$-gradient vanishing is equivalent to satisfying all constraints.

4. Under Jacobian regularity assumptions for $g$, any constrained local extremum must admit multipliers $\lambda$ that make $\nabla L = 0$ true.

5. A practical workflow is: formulate $g$, check Jacobian regularity on $\{x : g(x) = 0\}$, build $L$, solve $\nabla L = 0$, then apply a maximum/minimum test.

6. For $C^2$ problems, the Hessian of the Lagrangian can provide a sufficient criterion to classify candidates, though simpler reasoning may suffice in practice.