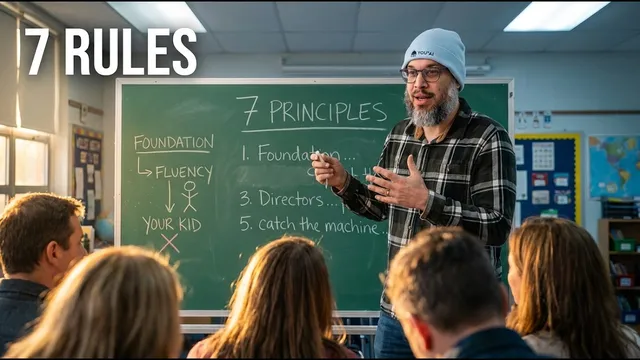

My 10-Year-Old Vibe Codes. She Also Does Math by Hand. Why That's the Only Strategy That Works.

Based on AI News & Strategy Daily | Nate B Jones's video on YouTube.

AI tutoring can improve learning, but children still need foundational skills to judge whether AI outputs are correct and reasonable.

Briefing

Artificial general intelligence may be arriving faster than schools can adapt, but the real education crisis is simpler: kids are adopting AI before they’ve built the mental foundations needed to use it well. The stakes are practical and measurable—AI tutoring can boost learning, yet educators are reporting that students who relied on AI early are arriving unable to read deeply, synthesize arguments, or sustain the struggle that turns knowledge into capability. The core prescription is “foundation first, leverage second”: keep reading, writing, and math-by-hand as non-negotiables, then introduce AI as a guided extension rather than a default replacement.

The argument starts with evidence that AI tutoring can outperform traditional instruction. Studies cited from Harvard and work involving Google DeepMind report that AI tutors can teach more content in less time and can outperform human tutors on problem-solving tasks; combining human teachers with AI tutoring reportedly doubles knowledge transfer. Usage is surging globally—students increasingly rely on AI for learning, with sharp growth reported in the UK. The implication isn’t that AI should be banned. It’s that the bottleneck has shifted: the constraint is no longer whether personalized tutoring works, but how to teach children what to do when a tool can generate answers instantly.

That’s where the “calculator moment” analogy comes in. In the 1970s, calculators were treated as cheating and feared to destroy arithmetic thinking. The eventual outcome was different: calculators changed what mathematical thinking meant, but only after students learned mechanics first—so they could estimate reasonableness, catch errors, and connect procedures to concepts. The same pattern is proposed for AI. If students skip the foundation, they lose the ability to evaluate outputs, recognize errors, and exercise judgment.

The transcript repeatedly ties AI use to specification quality and metacognition. Autonomous agents succeed or fail based on how well humans define objectives, constraints, and evaluation criteria; giving an agent broad access without clear boundaries leads to trouble. Translated into education, the goal is to teach children to write “specs” in plain language (what they want, what constraints matter, and what “good” looks like) so they can direct AI rather than outsource thinking. This is framed as a cognitive skill that develops through practice: kids should learn to notice when Claude or other systems are confidently wrong and to sanity-check answers using their own understanding.
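As a rough illustration of what a plain-language “spec” might look like when written down, here is a minimal Python sketch. It is not from the transcript: the `Spec` class, its field names, and the dragon-story example are all hypothetical, chosen only to show the three parts named above (goal, constraints, and what “good” looks like) and how a child's own checks can be applied to whatever the AI returns.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Spec:
    """Hypothetical container for a plain-language spec (illustration only)."""
    goal: str                                   # what the child wants
    constraints: list[str] = field(default_factory=list)  # what matters
    # What "good" looks like: a named check the child can apply to any output.
    checks: dict[str, Callable[[str], bool]] = field(default_factory=dict)

    def to_prompt(self) -> str:
        """Render the spec as plain text that could be given to an AI tool."""
        lines = [f"Goal: {self.goal}"]
        lines += [f"Constraint: {c}" for c in self.constraints]
        lines += [f"Good means: {name}" for name in self.checks]
        return "\n".join(lines)

    def failed_checks(self, output: str) -> list[str]:
        """Return the names of the checks that the output does not pass."""
        return [name for name, check in self.checks.items() if not check(output)]


# Assumed example: the story topic and limits are made up for this sketch.
spec = Spec(
    goal="Write a short story about a dragon who learns to share",
    constraints=["No more than 60 words", "No scary scenes"],
    checks={
        "mentions the dragon": lambda text: "dragon" in text.lower(),
        "is at most 60 words": lambda text: len(text.split()) <= 60,
    },
)

draft = "The dragon gave half of her gold to the village."
print(spec.to_prompt())
print(spec.failed_checks(draft))
```

The point of the sketch is the habit rather than the code: the checks are written before the output is seen, so the judgment about what counts as “good” stays with the person directing the tool.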

Concrete classroom-style examples reinforce the point. A child building a game with Claude isn’t just “prompting”; she iterates on intent by decomposing a vague desire into discrete requirements, testing results, and refining the specification. The transcript argues that this kind of work trains the same muscles used in real software development—problem decomposition, iteration, and precise communication.

A major warning targets AI detection. Andrej Karpathy’s quote, “You will never be able to detect the use of AI in homework,” is used to argue that detection regimes are mathematically unreliable and can punish students who did their own work. Instead, education should rethink what it measures: shift toward in-class creation, oral exams, and assessments that reward understanding and judgment.

Finally, the transcript warns about cognitive offloading and learned helplessness: when AI makes tasks frictionless, students may stop building the neural pathways for reading, writing, and reasoning. The proposed solution is deliberate sequencing—teach foundations through effort, then add AI tools with guidance, and keep exercising without the tool so muscles don’t atrophy. The closing message is that the transition won’t be solved by either banning AI or ignoring it; it will be managed at kitchen-table scale through habits that build independence, taste, and metacognitive control.

Cornell Notes

The transcript argues that AI tutoring and AI tools can significantly improve learning, but children need a foundation first—reading, math by hand, and writing—so they can evaluate AI outputs and develop judgment. AI’s biggest educational risk is not that it replaces learning overnight; it can gradually erode skills through cognitive offloading, leaving students unable to read deeply, synthesize arguments, or sustain difficult work. The proposed remedy is deliberate sequencing: build cognitive infrastructure through effort, then introduce AI as a guided extension where kids practice directing it via clear specifications and metacognition. The approach also rejects AI-writing detection as unreliable and calls for assessments that measure understanding and the ability to critique and iterate.

Why does the transcript insist on “foundation first” even while citing evidence that AI tutoring boosts learning?

How does “specification quality” connect to teaching children to use AI effectively?

What does the transcript mean by metacognition in an AI age?

Why does the transcript argue that AI-writing detection is a dead end for schools?

What is “cognitive offloading,” and how does it relate to declining reading and writing skills?

How does the transcript propose teaching AI readiness without rushing into agent-level autonomy?

Review Questions

- What specific skills does the transcript say children must develop before they can safely use AI tools to solve problems?

- How does the “calculator moment” analogy support the idea that AI should be introduced after foundational learning rather than replacing it?

- In what ways does the transcript connect metacognition to better learning outcomes when using AI for writing or problem-solving?

Key Points

1. AI tutoring can improve learning, but children still need foundational skills to judge whether AI outputs are correct and reasonable.

2. The “calculator moment” shows tools work best when students learn mechanics first, then use the tool to deepen conceptual thinking.

3. AI agent performance depends heavily on human specification: clear goals, constraints, and evaluation criteria must be taught as a cognitive skill.

4. AI detection for homework is portrayed as unreliable; schools should shift assessment toward understanding, in-class work, and oral evaluation.

5. Cognitive offloading can quietly weaken reading, writing, and reasoning skills when students rely on AI before building the habit of struggle.

6. The transcript recommends deliberate sequencing: build foundations, guide tool use, practice directing AI, and only later move toward higher autonomy.

7. Metacognition, knowing when to use AI and when to rely on one’s own reasoning, is framed as the defining competence of the AI age.