New Privacy Keyboard By Rossmann

Based on The PrimeTime's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

A privacy keyboard built around offline, local AI is presented as a practical way to close off one channel of surveillance, especially compared with Google’s voice-to-text, which can rely on cloud processing and leaves behind searchable transcriptions. The core claim is straightforward: Google can store what people say through features like “voice to text,” and years later those records can still surface via Google Takeout as readable transcripts. Rossmann’s own example is finding “arguments” in his downloaded transcription history that he says date from a decade earlier. In his view, that is evidence that phone audio and speech-to-text logs persist and remain retrievable long after the moment.
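To make "searchable" concrete, here is a minimal sketch of scanning an already-extracted export for a phrase. It assumes the archive has been unpacked to a local folder and that the records of interest sit in plain-text formats such as JSON or HTML. The function name, the suffix list, and the folder path are hypothetical; the source does not describe the actual layout of a Takeout export.

```python
from pathlib import Path

# Assumed set of text-like file types worth scanning; adjust to the export at hand.
TEXT_SUFFIXES = {".json", ".html", ".txt", ".csv"}


def search_export(root, phrase, suffixes=TEXT_SUFFIXES):
    """Return (path, line_number, line) for every line containing `phrase`.

    Matching is case-insensitive. Files whose suffix is not in `suffixes`
    are skipped, and undecodable bytes are replaced rather than raising.
    """
    needle = phrase.casefold()
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        for number, line in enumerate(text.splitlines(), start=1):
            if needle in line.casefold():
                hits.append((str(path), number, line.strip()))
    return hits
```

Calling `search_export("Takeout", "argument")` on a hypothetical extracted folder named `Takeout` would list every matching line with its file and line number. That is roughly the kind of lookup the briefing describes: anything stored as text stays findable for as long as the file exists.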

That discovery becomes a broader indictment of how consent works in practice. He argues that most users never meaningfully read the terms they agree to, whether end-user license agreements for software like Microsoft Windows or legal documents like NDAs, and that companies rely on that gap. He connects this to digital “ownership” versus “license” language, pointing to cases where a “purchase” turns out to be a revocable, temporary right. His frustration extends beyond DRM to the idea that consumers pay for access that can be withdrawn, and he frames this as a pattern across subscription software and digital storefronts.

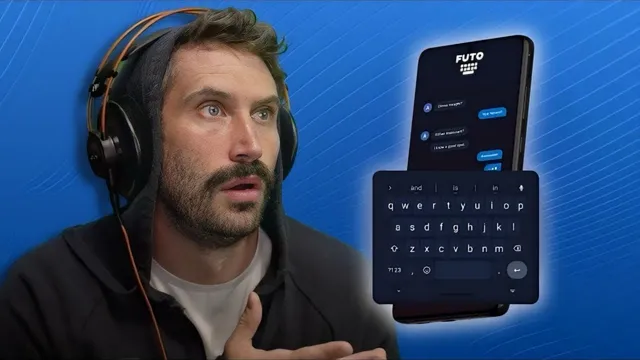

The discussion then pivots from surveillance anxiety to an alternative: a keyboard app called “photo.org keyboard” (described as a pre-alpha product) that performs speech-to-text locally using an on-device AI/LM approach. The pitch is that it works without an internet connection, can improve over time by training on the user’s own input, and can be paired with a firewall that blocks its outbound connections while it keeps functioning. He demonstrates offline dictation and claims it is faster and more accurate than Google’s voice-to-text, and that its punctuation handling is less intrusive than Google’s habit of auto-inserting punctuation.

Alongside the technical pitch, he tackles a moral dilemma about ad blocking. He says he avoids ad blockers on principle because blocking ads deprives developers of revenue, even though he wants privacy protections. He contrasts aggressive, ad-heavy sites with the ad-supported model that funds his own work, arguing that the ethics of blocking aren’t one-size-fits-all.

By the end, the takeaway is not that surveillance can be fully stopped, but that having “one less vector” matters. He also expresses skepticism toward both big tech and government assurances, arguing that anyone promising safety or security should be treated cautiously. The proposed path forward is incremental: use tools that keep more processing local, reduce reliance on always-listening cloud services, and accept that eliminating data collection entirely may be unrealistic, while still pushing for meaningful constraints.

Cornell Notes

The transcript argues that speech-to-text systems can log and store what people say in ways that remain retrievable years later, citing Google Takeout as evidence through downloadable transcriptions. It broadens into a critique of consent and licensing: users rarely read end-user license agreements, and “purchase” can sometimes mean a revocable license rather than lasting ownership. As a countermeasure, the discussion promotes an offline, local-AI keyboard (“photo.org keyboard”) that performs dictation without needing an internet connection and can be trained over time. The speaker claims it’s faster and more accurate than Google’s voice-to-text in offline use and offers more control over punctuation. The practical message is incremental privacy: reduce one surveillance vector even if total prevention isn’t feasible.

How does Google Takeout become evidence of long-term speech logging?

Why does the transcript emphasize end-user license agreements and NDAs?

What’s the complaint about “purchase” and digital content rights?

What does the proposed offline keyboard claim to do differently from Google voice-to-text?

How does the transcript connect privacy with ad-blocking ethics?

What’s the overall strategy for dealing with surveillance concerns?

Review Questions

- What specific mechanism (and example) is used to argue that speech-to-text transcriptions can resurface years later?

- How does the transcript distinguish between “DRM” concerns and the broader issue of revocable licenses or subscriptions?

- What concrete features of the offline keyboard are presented as privacy improvements, and what performance claims are made about it versus Google voice-to-text?

Key Points

1. Google Takeout is used as an example of how voice-to-text transcriptions can be downloadable and searchable long after the original speech.

2. Speech-to-text logging is framed as a persistent surveillance channel, even when users don’t realize it’s happening.

3. The transcript argues that meaningful consent is undermined when users don’t read end-user license agreements and legal terms.

4. Digital “purchase” is criticized when it effectively grants a revocable temporary license rather than lasting ownership.

5. An offline, local-AI keyboard (“photo.org keyboard”) is promoted as a way to reduce reliance on internet-connected speech processing.

6. The speaker claims the offline keyboard can work with internet blocked (e.g., via a firewall) and offers faster, more accurate dictation in offline use.

7. A moral argument against ad blocking is used to show that privacy choices can conflict with supporting developer revenue models.