OpenAI might have just killed Claude

Based on Theo - t3․gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

o4-mini is positioned as a high-value coding model with cited pricing of $1.10 per million input tokens and $4.40 per million output tokens.

Briefing

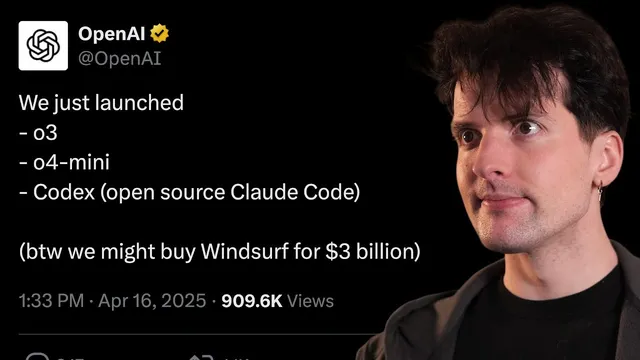

OpenAI’s latest wave—centered on o4-mini and o3-mini—signals a direct push to win back developer mindshare from Anthropic by pairing sharp coding performance with aggressive price cuts and a growing ecosystem of open, tool-first software. The models aren’t just “new releases”; the rollout leans heavily into how developers actually build: tool calls, code integration, and terminal/IDE workflows that reduce friction and cost.

On the model side, o4-mini is positioned as the standout value: the transcript cites pricing of $1.10 per million input tokens and $4.40 per million output tokens, described as matching prior o3-mini pricing in practice. o3-mini is cheaper than Gemini 2.5 Pro on output and roughly similar on input, and the speaker frames the overall shift as a real "price war" response after earlier OpenAI pricing moves. The emphasis is that most developer tasks may not need the more expensive non-mini options, because o4-mini is expected to perform as well or better for the majority of use cases.
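
To make the per-token pricing concrete, here is a minimal sketch of the arithmetic. It assumes o4-mini list prices of $1.10 per million input tokens and $4.40 per million output tokens; the function name `request_cost` and the workload numbers are hypothetical and chosen only for illustration.

```python
# USD per one million tokens. Assumed o4-mini list prices; adjust if they change.
O4_MINI_INPUT_PER_M = 1.10
O4_MINI_OUTPUT_PER_M = 4.40


def request_cost(
    input_tokens: int,
    output_tokens: int,
    input_per_m: float = O4_MINI_INPUT_PER_M,
    output_per_m: float = O4_MINI_OUTPUT_PER_M,
) -> float:
    """Return the estimated cost in USD for a given token volume."""
    input_cost = (input_tokens / 1_000_000) * input_per_m
    output_cost = (output_tokens / 1_000_000) * output_per_m
    return input_cost + output_cost


# Hypothetical workload: 2M input tokens and 500k output tokens.
total = request_cost(2_000_000, 500_000)
print(f"${total:.2f}")  # prints $4.40
```

Output tokens cost four times as much as input tokens at these prices, so workloads with long reasoning or tool-heavy outputs are dominated by the output side of the bill.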

Benchmarks reinforce that claim, at least for coding and math-adjacent workloads. In a math competition setting, o3-mini scores 98 with Python as its only tool, while o4-mini scores higher when tools are involved. On SWE-bench-style software engineering tasks, o3-mini at its "high" setting "smokes it" on one benchmark that previously made OpenAI look worse relative to Claude 3.5, and both o3-mini and o4-mini rank higher on a GitHub-focused benchmark (the transcript says "SW bench", apparently meaning SWE-bench, and discusses accuracy across polyglot code). The speaker also notes a nuance: diffing performance (producing patches rather than full files) improves less for o4-mini than expected, and o3-mini still shows a meaningful gap in that area.
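
The difference between a "diff" edit and a "full file" edit can be illustrated with Python's standard `difflib` module. This is only a sketch of the two output formats, not of how any benchmark scores them; the 200-line file and the names `settings.py`, `original`, and `fixed` are made up for the example.

```python
import difflib

# Hypothetical 200-line source file, one assignment per line.
original = [f"value_{i} = {i}\n" for i in range(200)]

# The "fix" changes exactly one line in the middle of the file.
fixed = list(original)
fixed[100] = "value_100 = 1000\n"

# Full-file edit: the model must reproduce every line, changed or not.
full_file_output = "".join(fixed)

# Diff edit: the model emits only a patch (3 lines of context by default).
patch_lines = list(
    difflib.unified_diff(
        original, fixed, fromfile="a/settings.py", tofile="b/settings.py"
    )
)
patch_output = "".join(patch_lines)

print(patch_output)
print(len(fixed), "lines as a full file vs", len(patch_lines), "lines as a patch")
```

A patch is much shorter, which saves output tokens, but it only applies cleanly if the context lines and line offsets are exactly right. That stricter format is one plausible reason a model can be strong at full-file rewrites and weaker at diffing.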

Beyond raw benchmarks, the transcript highlights a more strategic technical leap: multimodal “reasoning with images.” Instead of treating images as a separate step, the models are described as transforming user images via tools—cropping, zooming, rotating, and other image processing—while reasoning, and even using search during reasoning. The practical payoff is framed around OCR and troubleshooting: rotating and enhancing photos so text becomes readable, then producing step-by-step explanations for problems or root-cause analysis for build errors. Examples also include math rendering (“LaTeX rendering”) and a maze-solving workflow where the model uses Python to parse an image and then draws on it.
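
The kinds of transformations mentioned above (crop, rotate, zoom) are simple to express in code. The following is a toy sketch on a tiny grayscale image stored as nested lists; it is not how OpenAI's tooling is implemented, and the helper names `crop`, `rotate_cw`, and `zoom` are hypothetical.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values


def crop(img: Image, top: int, left: int, height: int, width: int) -> Image:
    """Keep only the rectangle starting at (top, left)."""
    return [row[left:left + width] for row in img[top:top + height]]


def rotate_cw(img: Image) -> Image:
    """Rotate 90 degrees clockwise: reverse the row order, then transpose."""
    return [list(col) for col in zip(*img[::-1])]


def zoom(img: Image, factor: int) -> Image:
    """Nearest-neighbour upscale: repeat each pixel `factor` times both ways."""
    out: Image = []
    for row in img:
        wide = [px for px in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


photo = [
    [1, 2, 3],
    [4, 5, 6],
]

print(rotate_cw(photo))         # [[4, 1], [5, 2], [6, 3]]
print(crop(photo, 0, 1, 2, 2))  # [[2, 3], [5, 6]]
print(zoom([[1, 2]], 2))        # [[1, 1, 2, 2], [1, 1, 2, 2]]
```

In the workflow the transcript describes, a model would chain steps like these during reasoning, for example rotating a sideways photo and zooming into the region with text before attempting to read it.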

The competitive pressure intensifies with Codex, an open-source coding agent CLI for the terminal. The transcript contrasts it with Claude Code’s non-open-source approach, emphasizing that Codex is Apache licensed and designed to be usable beyond OpenAI’s own stack. It’s implemented with a React-style terminal UI (inkjs), suggesting a developer-friendly experience that could accelerate community adoption.

Finally, the transcript ties these moves to a broader “developer war” narrative: OpenAI is reportedly in talks to acquire Windsurf, a Cursor alternative gaining traction. The implied thesis is that OpenAI is attacking Anthropic’s remaining advantage—developer preference—by lowering costs, improving tool/agent workflows, and building open tooling that makes OpenAI models easier to integrate. The overall takeaway is a market shift where developer experience and cost per “intelligence unit” are becoming the battleground, not just model quality.

Cornell Notes

OpenAI’s o4-mini and o3-mini rollout is framed as a developer-focused offensive: strong coding/math performance paired with major price reductions and deeper tool use. The transcript highlights multimodal reasoning that can “think with images” by applying image transformations (crop/zoom/rotate) and using tools like search and Python during the reasoning process. Benchmarks cited include math and software engineering evaluations, where o4-mini is portrayed as often matching or beating o3-mini while remaining far cheaper than many alternatives. OpenAI also introduces Codex, an Apache-licensed terminal coding agent meant to work as an open tool, not a closed ecosystem. Together, these moves aim to erode Anthropic’s developer goodwill—especially around tool calls and coding workflows.

What makes o4-mini and o3-mini more than just “new models” in this rollout?

How do the cited benchmarks support the claim that o4-mini can replace pricier options for many tasks?

What does “thinking with images” mean operationally, and why does it matter for real workflows?

Why is Codex described as a potential “Claude Code killer”?

How do tool calls and Python access change what models can do?

What strategic competitive narrative ties model releases, open tooling, and a possible acquisition together?

Review Questions

- Which parts of the rollout are framed as the biggest developer wins: pricing, benchmarks, multimodal reasoning, or open-source tooling—and what evidence is given for each?

- How does “diffing” performance differ from “full file” generation in the benchmark discussion, and what implication does that have for choosing between o3-mini and o4-mini?

- Why might extensive tool calls be both beneficial (accuracy) and risky (cost), according to the transcript’s examples?

Key Points

1. o4-mini is positioned as a high-value coding model with cited pricing of $1.10 per million input tokens and $4.40 per million output tokens.

2. o3-mini is described as strong but less broadly useful than o4-mini for tool-enabled tasks, with the transcript suggesting o4-mini can cover most developer needs.

3. Benchmarks cited include math and software engineering evaluations, where o4-mini and o3-mini are portrayed as competitive or improved versus earlier comparisons to Claude 3.5.

4. OpenAI’s models are described as performing multimodal reasoning by transforming images with tools (crop/zoom/rotate/flip) and using tools like search and Python during reasoning.

5. Codex is highlighted as an Apache-licensed, open-source terminal coding agent, contrasted with Claude Code’s non-open-source approach.

6. The rollout is framed as a developer-focused competitive strategy aimed at reducing Anthropic’s developer goodwill through lower costs, better tool workflows, and open tooling.

7. A reported acquisition discussion around Windsurf is presented as part of building IDE/workflow dominance in the “developer war.”