OpenAI "We Are On The Wrong Side Of History" (of Open Source)

Based on The PrimeTime's video on YouTube. If you like this content, support the original creator by watching, liking, and subscribing.

Altman’s comments frame open source as a historical and strategic issue, even while internal disagreement and near-term priorities limit immediate change.

Briefing

Sam Altman’s response to a question about releasing model weights and publishing research landed as a direct challenge to OpenAI’s current posture on open source. Altman said OpenAI is “on the wrong side of history” and should pursue a different open-source strategy, while also noting that not everyone at the company shares that view and that open sourcing isn’t the top priority right now. The remark matters because it reframes a long-running tension in AI: whether openness accelerates progress and builds trust—or whether it undermines a company’s ability to recoup massive development costs.

The discussion then pivots to the central risk question: does open sourcing parts of OpenAI’s models endanger the business? A common argument in favor of staying closed is cost and competitive leverage. Training and deploying frontier models is described as extraordinarily expensive, and giving away capabilities could let competitors build similar products without paying the same R&D bill—potentially weakening revenue at a time when ChatGPT’s burn rate is portrayed as high.

But the counterpoint emphasizes that openness can create value in ways that closed strategies often miss. One claim is that recent open releases—highlighted through the example of DeepSeek—demonstrate that “optimizations” can be “left on the table,” suggesting that open ecosystems can iterate faster and squeeze more performance out of existing model families. The transcript also argues that open source draws in talent that might not otherwise join the conversation, turning public artifacts into a proving ground for new ideas and implementations.

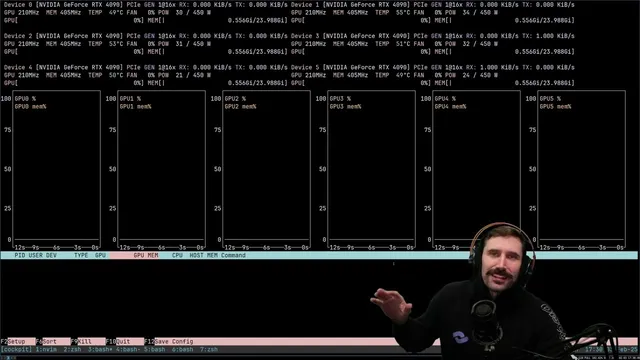

Another practical argument is that open sourcing doesn’t necessarily cannibalize the latest product. If OpenAI releases only older weights, the newest and best results would still come from its hosted offerings. Meanwhile, running large models locally is framed as difficult for most people: it requires expensive GPUs, reliable uptime, and technical know-how. The transcript contrasts that reality with everyday users who just want tools, such as email writing assistance, and have no interest in self-hosting infrastructure. In that view, the average customer base is unlikely to disappear.

The only meaningful danger identified is that another organization could take released models and build something better, effectively leapfrogging OpenAI. Still, the transcript suggests a mitigation: don’t release the absolute latest model weights, and keep the newest capability tied to OpenAI’s platform.

Overall, the takeaway is a push for openness as a goodwill and ecosystem strategy—one that could increase adoption, attract contributors, and improve public perception without necessarily harming revenue. The transcript ends with a prediction that “o1 mini” will be open sourced within about a month, underscoring the belief that the company’s stance may shift soon.

Cornell Notes

Altman’s comments signal a potential shift in OpenAI’s open-source strategy, with the claim that the company has been “on the wrong side of history.” The discussion weighs the main business risk—high costs and competitors using released weights to build similar products—against openness benefits like faster iteration, more talent joining the ecosystem, and improved public goodwill. A key argument is that open sourcing older or non-latest models may not hurt revenue because most users won’t self-host large models; they’ll rely on hosted services for the newest capabilities. The transcript also points to evidence from open releases (e.g., DeepSeek) that community optimizations can unlock performance gains. The remaining concern is competitive leapfrogging, mitigated by not releasing the newest frontier weights.

Why does Altman’s “wrong side of history” remark matter for OpenAI’s strategy?

What is the strongest argument against open sourcing model weights, as presented here?

How does the transcript counter the “competitors will copy us” concern?

What role does DeepSeek play in the reasoning?

What is the “only danger” still identified, and how is it mitigated?

Why does the transcript claim open sourcing won’t meaningfully hurt OpenAI’s bottom line?

Review Questions

- What economic and competitive concerns are raised as reasons to avoid releasing model weights, and why are they considered plausible?

- According to the transcript, what practical barriers prevent most users from self-hosting large language models, and how does that affect revenue risk?

- How does the example of DeepSeek support the argument that openness can improve performance and accelerate innovation?

Key Points

1. Altman’s comments frame open source as a historical and strategic issue, even while internal disagreement and near-term priorities limit immediate change.

2. The main anti-open-source argument is that releasing weights could enable competitors to replicate capabilities without paying the same development costs.

3. Community openness is portrayed as a performance accelerator, with examples suggesting optimizations can be discovered and applied faster.

4. Running large models locally is described as difficult for most people due to GPU availability, cost, and operational requirements.

5. Open sourcing older or non-latest models is argued to be less risky because the newest capabilities still come from hosted services.

6. The remaining threat is competitive leapfrogging, which the transcript suggests can be reduced by not releasing the absolute latest frontier weights.

7. The discussion ends with a forecast that “o1 mini” may be open sourced within roughly a month.