Optimizing Neural Network Structures with Keras-Tuner

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Keras Tuner automates architecture trial-and-error by sampling tunable hyperparameters and training multiple model variants.

Briefing

Neural networks rarely get the “right” architecture on the first try; good performance usually comes from trial and error. Keras Tuner automates that search by letting developers declare which parts of a model are tunable (layer counts, neuron counts, dropout rates, etc.) and then running randomized trials to find configurations that maximize a chosen metric such as validation accuracy. The practical payoff is less hand-tweaking and fewer overnight for-loops, especially when the number of architectural combinations balloons into the hundreds or thousands.

The tutorial frames the problem with a familiar workflow: manually adjust hyperparameters (number of layers, neurons per layer, dropout rate, batch normalization, learning-related choices), train each variant overnight, and record validation loss/accuracy to decide what to keep. That approach works but becomes “ugly” quickly because every small change forces a full re-test. Keras Tuner replaces the manual loop with a tuner object that samples hyperparameters from defined ranges or choices, builds models accordingly, trains them, and logs results.
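To make the sample, build, train, and log cycle concrete, here is a minimal pure-Python sketch of a random search loop. It is not Keras Tuner code: the `SEARCH_SPACE` values, the function names, and `toy_score` (a stand-in for "train the model and return validation accuracy") are all hypothetical and chosen only for illustration.

```python
import random

# Hypothetical search space: each tunable parameter maps to its allowed values.
SEARCH_SPACE = {
    "n_layers": [1, 2, 3, 4],
    "units": list(range(32, 257, 32)),  # 32, 64, ..., 256
    "dropout": [0.0, 0.25, 0.5],
}


def sample_config(rng):
    """Pick one value per tunable parameter, uniformly at random."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def toy_score(config):
    """Stand-in for training a model and returning its validation accuracy."""
    return (0.80 + 0.01 * config["n_layers"]
            + config["units"] / 25600
            - 0.02 * config["dropout"])


def random_search(score_fn, max_trials, seed=0):
    """Sample max_trials configurations, score each, return them best first."""
    rng = random.Random(seed)
    trials = []
    for trial_id in range(max_trials):
        config = sample_config(rng)
        trials.append({"trial_id": trial_id,
                       "config": config,
                       "score": score_fn(config)})
    return sorted(trials, key=lambda t: t["score"], reverse=True)


best = random_search(toy_score, max_trials=10)[0]
print(best["config"], round(best["score"], 4))
```

In a real run, the scoring step is the expensive part (a full training job per trial), which is why the tutorial hands the loop to a tuner that also persists each trial's result.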

To demonstrate, the walkthrough uses Fashion-MNIST (described as more challenging than MNIST) loaded from TensorFlow/Keras datasets. Images are 28×28 grayscale with 10 clothing classes. A baseline convolutional model is briefly trained first to confirm the setup works, reaching roughly 77% accuracy after a single epoch—far from the 90%+ target the tutorial aims to reach.

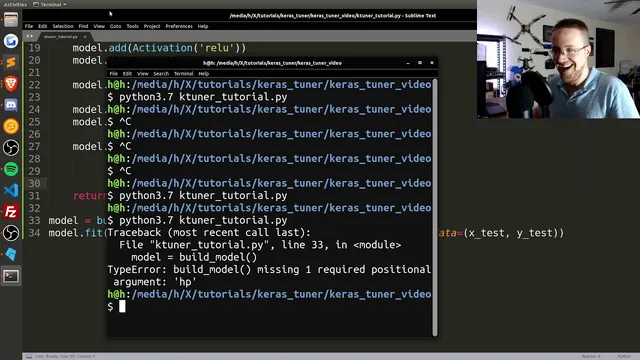

Next comes the tuning setup. A RandomSearch tuner is created with an objective of validation accuracy, a limited number of trials (initially small for a bug check), and a directory for logs. The key mechanism is defining hyperparameters inside the model-building function using HyperParameters objects. Instead of hard-coding architecture choices, the model uses tunable values such as:

- an input-related dimension chosen as an integer range (32 to 256 with a step),

- a dynamic number of convolutional layers chosen from 1 to 4,

- per-layer convolution channel sizes chosen from another integer range (32 to 256).
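The setup described above might look roughly like the following sketch. It assumes the `keras-tuner` package (imported as `keras_tuner`) and TensorFlow are installed. The hyperparameter names (`input_units`, `n_layers`, `conv_{i}_units`), the step of 32, the layer layout, and the `tuner_logs` / `fashion_mnist` directory names are assumptions for illustration, not values confirmed by the tutorial.

```python
def build_model(hp):
    """Build one candidate CNN; `hp` is the HyperParameters object the tuner passes in."""
    # Imported lazily so the sketch can be loaded without pulling in TensorFlow.
    from tensorflow import keras
    layers = keras.layers

    model = keras.Sequential()
    model.add(keras.Input(shape=(28, 28, 1)))  # 28x28 grayscale images

    # First convolution: channel count sampled from 32..256 (step assumed to be 32).
    model.add(layers.Conv2D(
        hp.Int("input_units", min_value=32, max_value=256, step=32),
        (3, 3), activation="relu"))
    model.add(layers.MaxPooling2D((2, 2)))

    # Dynamic depth: 1 to 4 further convolutions, each with its own tunable width.
    for i in range(hp.Int("n_layers", min_value=1, max_value=4)):
        model.add(layers.Conv2D(
            hp.Int(f"conv_{i}_units", min_value=32, max_value=256, step=32),
            (3, 3), activation="relu"))

    model.add(layers.Flatten())
    model.add(layers.Dense(10, activation="softmax"))  # 10 clothing classes
    model.compile(optimizer="adam",
                  loss="sparse_categorical_crossentropy",
                  metrics=["accuracy"])
    return model


def make_tuner(max_trials=1, directory="tuner_logs"):
    """Create a RandomSearch tuner that ranks trials by validation accuracy."""
    import keras_tuner as kt

    return kt.RandomSearch(
        build_model,
        objective="val_accuracy",
        max_trials=max_trials,    # keep small for a first bug check
        executions_per_trial=1,   # training runs per sampled configuration
        directory=directory,
        project_name="fashion_mnist",
    )
```

A typical use would be `tuner = make_tuner()` followed by `tuner.search(x=x_train, y=y_train, epochs=1, batch_size=64, validation_data=(x_test, y_test))`, where the arrays come from `keras.datasets.fashion_mnist.load_data()` and are reshaped to `(-1, 28, 28, 1)`. Afterwards, `tuner.results_summary()` prints the ranked trials and `tuner.get_best_models(num_models=1)[0].summary()` prints the winning architecture.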

After running a small search, the tuner reports the hyperparameters and scores for the trials. The tutorial then emphasizes practical workflow: saving the tuner object to a pickle so results can be revisited later without rerunning expensive searches. It also points to the logged trial artifacts—trial JSON files with hyperparameters and scores, plus checkpoints.
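As a rough illustration of revisiting results without rerunning the search, the sketch below reads the per-trial JSON files and caches a summary with pickle. It assumes each trial lives in its own subdirectory containing a `trial.json` with `trial_id`, `score`, and `hyperparameters.values` keys; that layout can differ between Keras Tuner versions, so treat the key names as assumptions. The helper names are hypothetical, and the sketch pickles a plain summary rather than the tuner object the tutorial saves (the `pickle.dump` call is the same either way).

```python
import json
import pickle
from pathlib import Path


def load_trials(project_dir):
    """Collect every */trial.json under project_dir, highest score first."""
    trials = []
    for path in Path(project_dir).glob("*/trial.json"):
        with open(path) as f:
            record = json.load(f)
        trials.append({
            "trial_id": record.get("trial_id"),
            "score": record.get("score"),
            "values": record.get("hyperparameters", {}).get("values", {}),
        })
    # Trials without a recorded score (e.g. interrupted runs) sort last.
    return sorted(trials,
                  key=lambda t: (t["score"] is None, -(t["score"] or 0.0)))


def save_summary(trials, path):
    """Pickle the trial summary so it can be inspected later."""
    with open(path, "wb") as f:
        pickle.dump(trials, f)


def load_summary(path):
    """Load a summary written by save_summary."""
    with open(path, "rb") as f:
        return pickle.load(f)
```

Sorting from highest to lowest score matches an objective such as validation accuracy; for a loss-style objective the order would need to be reversed.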

With a larger search completed, the results summary lists the top-performing configurations. The best validation accuracy shown is around 87%, with the other top models clustered closely behind (roughly 86–87%). The tutorial notes that a previously observed run reached 89.6%, and argues that pushing toward 90%+ is plausible by expanding the search, because the combinatorial space grows quickly when multiple hyperparameters vary together (layer counts × channel sizes × other options). Finally, the best model can be reconstructed as a TensorFlow model and inspected via a model summary, enabling direct training or prediction using the discovered architecture.
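A quick back-of-the-envelope count shows how fast that space grows. The sketch below assumes both integer ranges run from 32 to 256 in steps of 32 (the step size is an assumption; the source only says "with a step") and that the number of extra convolutional layers ranges from 1 to 4. The function names are hypothetical.

```python
def count_values(min_value, max_value, step):
    """Number of integers in min_value..max_value (inclusive) at the given step."""
    return (max_value - min_value) // step + 1


def count_architectures(input_choices, layer_counts, per_layer_choices):
    """Distinct architectures: one input width times every depth/width combination."""
    return input_choices * sum(per_layer_choices ** n for n in layer_counts)


units = count_values(32, 256, 32)                       # 8 possible widths
total = count_architectures(units, range(1, 5), units)  # depths 1 through 4
print(units, total)  # 8 37440
```

Under those assumptions there are 8 × (8 + 8² + 8³ + 8⁴) = 37,440 distinct architectures, so a search of a few dozen trials samples only a tiny fraction of the space.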

Cornell Notes

Keras Tuner turns architecture selection into a hyperparameter search problem. Instead of manually editing layer counts, channel sizes, dropout, or batch normalization and retraining overnight, the model-building function declares tunable parameters (integer ranges and categorical choices). A RandomSearch tuner then samples configurations, trains each one, and scores them using an objective such as validation accuracy on Fashion-MNIST. Results are logged per trial, and saving the tuner object (e.g., via pickle) lets you resume analysis without rerunning expensive experiments. The workflow ends by reconstructing the best-performing TensorFlow model and inspecting it with a summary.

Why does architecture tuning usually require trial-and-error, and what does Keras Tuner change about that process?

What dataset and evaluation target are used in the tuning example?

How are tunable architectural choices represented in the model-building function?

What do max_trials and executions_per_trial mean in RandomSearch?

Why is saving the tuner object (pickle) and checking trial logs important?

How does the workflow produce a usable final model after tuning?

Review Questions

- What hyperparameters in the example are declared as tunable, and how do their ranges influence the size of the search space?

- How do max_trials and executions_per_trial trade off between speed and reliability of the validation-accuracy ranking?

- What information is stored per trial in the tuner’s log directory, and how does that help when you save the tuner object?

Key Points

1. Keras Tuner automates architecture trial-and-error by sampling tunable hyperparameters and training multiple model variants.

2. Define tunable parameters inside the model-building function using HyperParameters objects (e.g., integer ranges for channel sizes and layer counts).

3. Use a tuner objective such as validation accuracy to rank configurations consistently across trials.

4. Limit max_trials for quick bug checks, then increase the search budget to explore a larger combinatorial space.

5. Save the tuner object (e.g., via pickle) and use the log directory’s trial artifacts to avoid rerunning expensive searches.

6. After tuning, reconstruct the best TensorFlow model from the tuner results and inspect it with model.summary() before deploying or further training.