Ordinary Differential Equations 22 | Properties of the Matrix Exponential

Based on The Bright Side of Mathematics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Matrix exponentials turn linear systems of ordinary differential equations into an explicit solution formula: once a square matrix A is given, the columns of e^(tA) span the solution space of the homogeneous system x' = Ax, and multiplying e^(tA) by an initial vector x0 yields the unique solution of the initial value problem. That makes it essential not only to define e^(tA), but also to understand how it behaves under differentiation and how it interacts with algebraic operations like inversion.

The matrix exponential is defined for every square matrix A via the power series e^(tA) = Σ_{k=0}^∞ (t^k A^k)/k!. Convergence is handled entry by entry: each of the n^2 matrix entries is an ordinary scalar power series in t whose terms are dominated by those of a convergent scalar exponential series, so the matrix exponential exists for all real t and all square matrices A. On a compact interval [a,b], the series and its term-by-term derivative both converge uniformly, which upgrades the function from merely well-defined to differentiable in t. Differentiability matters because it allows the matrix exponential to be used as a building block for solving differential equations.
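As a rough illustration of the series definition, the following sketch sums the first few terms of Σ (t^k A^k)/k! for a 2×2 matrix and compares the result with a known closed form. The helper names `mat_mul` and `expm_series`, the cutoff of 30 terms, and the choice of test matrix are assumptions made for this example and do not come from the video.

```python
import math

def mat_mul(X, Y):
    """Product of two square matrices stored as nested lists."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def expm_series(A, t=1.0, terms=30):
    """Partial sum of sum_{k=0}^{terms-1} (t^k A^k) / k! (illustrative helper)."""
    n = len(A)
    tA = [[t * a for a in row] for row in A]
    # k = 0 term is the identity matrix
    term = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    total = [row[:] for row in term]
    for k in range(1, terms):
        # term_k = term_{k-1} * (tA) / k, which equals (tA)^k / k!
        term = [[x / k for x in row] for row in mat_mul(term, tA)]
        total = [[total[i][j] + term[i][j] for j in range(n)] for i in range(n)]
    return total

# For this A we have A^2 = -I, so e^(tA) = cos(t) I + sin(t) A.
A = [[0.0, 1.0], [-1.0, 0.0]]
t = 0.7
E = expm_series(A, t)
exact = [[math.cos(t), math.sin(t)], [-math.sin(t), math.cos(t)]]
max_err = max(abs(E[i][j] - exact[i][j]) for i in range(2) for j in range(2))
print(max_err)  # largest entrywise deviation from the closed form
```

A fixed number of terms is enough here because t and the entries of A are small; for larger matrices or values of t, a library routine would be the more sensible choice than a truncated series.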

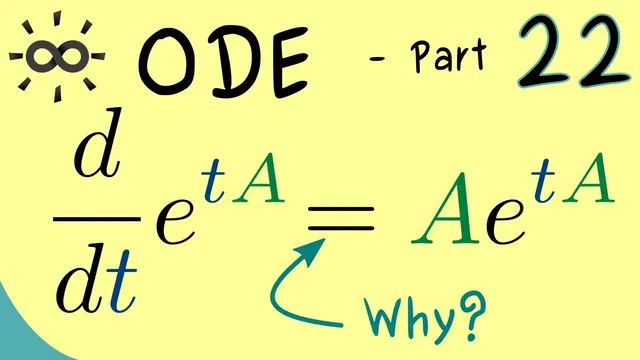

The derivative is computed from its definition as the limit, as h → 0, of (e^((t+h)A) − e^(tA))/h. The key technical step is exchanging the limit with the infinite sum, which is justified by the uniform convergence on compact intervals. After substituting the series definition and differentiating term by term, the derivative simplifies to a form mirroring the scalar exponential rule: d/dt e^(tA) = A e^(tA) = e^(tA) A. The left-multiplication and right-multiplication versions agree because A commutes with each of its own powers, so a factor of A can be pulled out of every term of the series on either side.
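Written out, the term-by-term computation can be sketched as follows. This is a reconstruction from the series definition, with the summation index j = k − 1 introduced for the reindexing step:

```latex
\frac{d}{dt} e^{tA}
  = \sum_{k=1}^{\infty} \frac{k\, t^{k-1} A^{k}}{k!}
  = \sum_{k=1}^{\infty} \frac{t^{k-1} A^{k}}{(k-1)!}
  = A \sum_{j=0}^{\infty} \frac{t^{j} A^{j}}{j!}
  = A\, e^{tA},
\qquad
\sum_{k=1}^{\infty} \frac{t^{k-1} A^{k}}{(k-1)!}
  = \Bigl( \sum_{j=0}^{\infty} \frac{t^{j} A^{j}}{j!} \Bigr) A
  = e^{tA} A .
```

The k = 0 term is the constant identity matrix, so it drops out of the derivative, and A^k = A · A^(k−1) = A^(k−1) · A is what allows the factor to be taken out on either side.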

Not every familiar exponential identity survives unchanged in the matrix setting. The matrix analogue of the scalar identity e^(a+b) = e^a e^b, namely e^(A+B) = e^A e^B, fails in general because matrix multiplication is not commutative. The usual proof multiplies the two power series with the Cauchy product and regroups the terms, and that regrouping is valid only when the matrices commute, that is, when AB = BA. Under that condition the identity holds, and it yields a formula for the inverse. Since tA always commutes with −tA, we get e^(tA) e^(−tA) = e^((tA) + (−tA)) = e^0 = I, so e^(tA) is invertible for every t and (e^(tA))^−1 = e^(−tA). This inversion property is part of what makes the matrix exponential a practical tool for solving and manipulating solutions to linear ODE systems.

With these properties (existence via power series, differentiability, the derivative rule, and the inverse formula obtained from the commuting case), the stage is set for using e^(tA) directly to solve systems of linear differential equations in subsequent lessons.

Cornell Notes

For a square matrix A, the matrix exponential e^(tA) is defined by the power series Σ_{k=0}^∞ (t^k A^k)/k!. Each entry is a convergent scalar series, and on compact t-intervals the convergence is uniform, which is what justifies differentiating e^(tA) in t term by term. Differentiating using the limit definition and the justified exchange of limit and sum yields the key rule d/dt e^(tA) = A e^(tA) = e^(tA) A. The matrix version of the scalar identity e^(a+b) = e^a e^b, that is e^(A+B) = e^A e^B, does not hold for arbitrary matrices because multiplication is noncommutative; it holds when the matrices commute (AB = BA). Because tA and −tA always commute, the inverse formula follows: (e^(tA))^−1 = e^(−tA).

Why does the matrix exponential e^(tA) exist for every square matrix A and every real t?

How does uniform convergence on a compact interval [a,b] help with differentiation?

What is the derivative of e^(tA) with respect to t, and why are there two equivalent forms?

Why doesn’t the scalar exponentiation identity e^(a+b) = e^a e^b automatically carry over to matrices?

How does commutativity enable the inverse formula (e^(tA))^−1 = e^(−tA)?

Review Questions

- State the power series definition of e^(tA) and explain how convergence is justified for matrix entries.

- Derive (or recall) the formula for d/dt e^(tA) and state both equivalent multiplication orders.

- Under what condition does e^(A+B) = e^A e^B hold for matrices, and how does that condition lead to an inverse formula?

Key Points

1. Matrix exponentials e^(tA) are defined for every square matrix A using the power series Σ_{k=0}^∞ (t^k A^k)/k!.

2. Each matrix entry converges because the series can be treated as n^2 scalar power series in t.

3. Uniform convergence on compact intervals justifies differentiating e^(tA) term by term with respect to t.

4. The derivative satisfies d/dt e^(tA) = A e^(tA) = e^(tA) A.

5. The matrix analogue of the scalar rule e^(a+b) = e^a e^b, namely e^(A+B) = e^A e^B, fails for general matrices due to noncommutativity.

6. The exponentiation identity works when matrices commute (AB = BA).

7. Because tA and −tA always commute, the inverse formula holds for every square matrix A: (e^(tA))^−1 = e^(−tA).