Overview (1) - ML Teams - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Treat ML team output as a vector sum: alignment of goals matters as much as individual effort.

Briefing

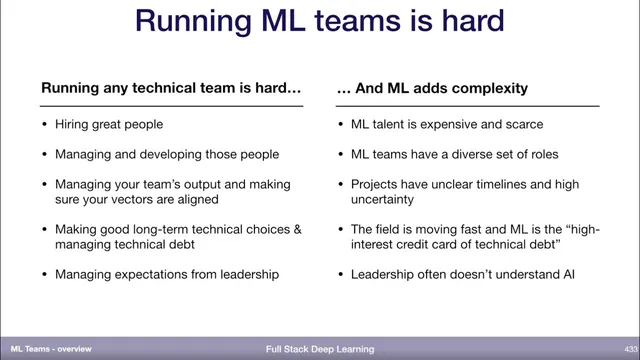

Machine learning teams are unusually difficult to run because every core responsibility of technical management—hiring, alignment of work, long-term engineering decisions, and communication with leadership—gets harder under the uncertainty and fast-moving nature of ML. The central management challenge is that a team’s output behaves like a “vector sum”: even if individuals work hard, the team can fail to deliver if efforts don’t point in the same direction. That alignment problem becomes more complex when ML work spans many different roles rather than a relatively uniform engineering skill set.
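The vector-sum framing can be made concrete with a small sketch. It is only an illustration of the analogy, not something taken from the lecture: the 2-D representation, the `(magnitude, angle)` encoding, and the function name `net_output` are all assumptions chosen for the example.

```python
import math

def net_output(efforts):
    """Return the magnitude of the sum of 2-D effort vectors.

    Each effort is a hypothetical (magnitude, angle_in_degrees) pair:
    magnitude stands for how hard someone works, angle for the
    direction their work points in.
    """
    total_x = sum(m * math.cos(math.radians(a)) for m, a in efforts)
    total_y = sum(m * math.sin(math.radians(a)) for m, a in efforts)
    return math.hypot(total_x, total_y)

# Three people working equally hard in the same direction.
aligned = [(1.0, 0.0), (1.0, 0.0), (1.0, 0.0)]

# The same three efforts, pointed 120 degrees apart from each other.
scattered = [(1.0, 0.0), (1.0, 120.0), (1.0, 240.0)]

print(round(net_output(aligned), 6))    # 3.0
print(round(net_output(scattered), 6))  # 0.0
```

Total individual effort is identical in both cases; only the directions differ, which is the point of the analogy.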

Hiring is a major pressure point. As of 2019, the lecture describes ML talent as both expensive and scarce. The talent pool is also harder to assemble because ML teams require diverse roles, whereas many software engineering teams can become more homogeneous as the discipline matures. On top of that, ML projects often carry unclear timelines and high uncertainty about what will work, which makes progress harder to plan and measure than in more conventional engineering projects.

Long-term technical decision-making also gets tougher. The field’s rapid pace means today’s model and tooling may become obsolete quickly, so leaders must choose architectures and practices while not knowing which approaches will still matter in a few years. Technical debt is singled out as especially severe in ML: it’s likened to a “high interest credit card,” implying that shortcuts in ML systems can compound costs and degrade future performance faster than in many other engineering contexts.
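The "high interest credit card" comparison is essentially a statement about compounding. A rough sketch of that arithmetic follows; the rates, the starting cost, and the name `debt_after` are hypothetical values picked for illustration, not figures from the lecture.

```python
def debt_after(periods, principal, rate_per_period):
    """Compound a cost over a number of periods at a fixed rate."""
    return principal * (1 + rate_per_period) ** periods

# Hypothetical numbers: the same shortcut costs 10 units of rework today.
initial_cost = 10.0
low_interest = 0.02   # assumed rate for a slow-compounding shortcut
high_interest = 0.20  # assumed rate for a "high interest" shortcut

for quarters in (0, 4, 8):
    low = debt_after(quarters, initial_cost, low_interest)
    high = debt_after(quarters, initial_cost, high_interest)
    print(f"after {quarters} quarters: low={low:.1f}, high={high:.1f}")
```

Under these assumed rates the gap between the two widens every period, which is why the analogy argues for paying ML debt down early.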

Managing up adds another layer. Organizational leadership may lack a solid understanding of AI and how ML projects differ from the rest of the work they oversee. That knowledge gap can create misaligned expectations around timelines, risk, and what “progress” looks like, forcing ML managers to spend extra effort translating ML realities to stakeholders.

The lecture’s goal is not to provide easy answers—running ML teams is framed as genuinely hard—but to offer a structured way to think about building and managing them. The plan starts with mapping the landscape of roles on ML teams and the skills each role typically needs. It then connects those roles into a functioning team, explains how such teams fit into the broader company organization, and outlines approaches to managing ML teams. The final portion turns toward practical career guidance: how to prepare for ML hiring, including interview preparation and strategies for getting a job in machine learning.

Cornell Notes

ML teams are hard to manage because uncertainty and rapid change amplify every management task: hiring, aligning individual work into a coherent team output, making long-term technical choices, and communicating with leadership. Output is treated as a vector sum: effort that doesn’t align toward shared goals produces little net progress. ML talent is expensive and scarce, and ML teams need diverse roles, unlike many more homogeneous software engineering teams. ML projects often have unclear timelines, and technical debt is especially costly in ML, described as a “high interest credit card.” The lecture then lays out a roadmap: role landscape, team structure within the organization, management practices, and hiring/interview guidance for ML careers.

Why does “team output” depend on more than individual effort in ML teams?

What makes ML hiring harder than hiring for many software engineering teams?

How do ML projects differ from typical engineering projects in day-to-day management?

Why is technical debt portrayed as more dangerous in ML than in other engineering domains?

What does “managing up” mean in the context of ML teams?

What learning path does the lecture propose for understanding ML teams and management?

Review Questions

- How does the “vector sum” framing change how you would measure progress for an ML team?

- Which three sources of added complexity for ML management are emphasized, and how would each affect planning and stakeholder communication?

- What does role diversity imply for hiring and team design on ML teams, compared with more homogeneous software engineering teams?

Key Points

1. Treat ML team output as a vector sum: alignment of goals matters as much as individual effort.

2. Expect ML talent to be expensive and scarce, and plan for diverse role requirements rather than a single uniform skill set.

3. Manage ML projects with uncertainty in mind, since timelines and outcomes are often unclear early on.

4. Make long-term technical choices under fast-changing conditions, recognizing that today’s model may not remain relevant.

5. Treat ML technical debt as especially costly, since shortcuts can compound quickly over time.

6. Plan for “managing up” by translating ML-specific differences in risk, timelines, and progress to organizational leadership.

7. Use a structured approach to ML team building: roles first, then team composition, then management practices, then hiring and interview preparation.