Processing (6) - Data Management - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Nightly retraining requires orchestrating multiple data sources, including database metadata, user behavior signals from logs, and intermediate model outputs like cat/dog classifier results.

Briefing

Building a photo popularity predictor that updates daily forces data pipelines to do more than just “run a model.” The core need is reliable data management: every night, the system must retrain using fresh inputs drawn from multiple sources—photo metadata stored in a database, user behavior signals that may live in logs, and intermediate outputs from other models like cat/dog classifiers. Those intermediate results become features for the final training job, meaning the training workflow has dependencies that must be satisfied in the right order.

At first glance, the simplest approach resembles a Makefile: define a dependency tree where one task depends on specific files or artifacts, then rerun only what changed. This works well when dependencies are straightforward—such as “this training dataset file” or “this preprocessing output.” But real pipelines quickly outgrow file-based triggers. Dependencies can hinge on database state, external program execution, or runtime conditions that can’t be captured by file timestamps alone. Scaling also complicates matters: some steps may require a big cluster rather than a single machine, and in larger organizations many teams may run similar overnight jobs against different data sources.

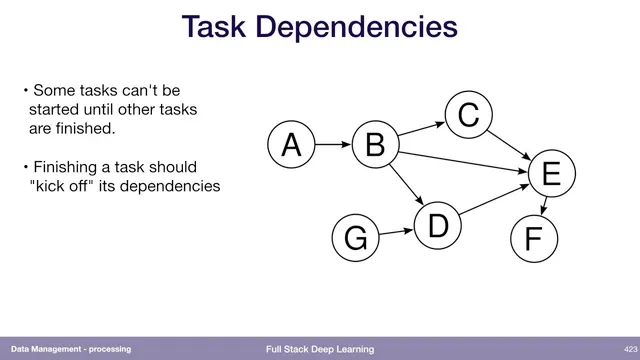

That’s where data workflows come in. Airflow is presented as a leading solution for orchestrating these complex, multi-step jobs. Airflow is Python-native and lets teams define tasks as operators—either built-in operators or wrappers around arbitrary Python code. The tasks are assembled into a directed acyclic graph (DAG) that encodes which steps must finish before others start. Once the DAG is computed, Airflow can submit work to a queue, monitor worker execution, restart failed tasks, and provide a dashboard for progress tracking. The operational burden is real: coordinating permissions, scheduling, and distributed execution adds software-development complexity.

The module also pushes a pragmatic rule: don’t over-engineer. Start with the simplest solution that works, then escalate only when the limitations become clear. A concrete example contrasts heavy Hadoop-style processing with classic Unix command-line pipelines for log searching. In one cited case, scanning terabytes of logs for specific word sequences took 26 minutes on Hadoop, while a Unix pipeline using tools like cat, grep, sort, and unique reportedly finished in about 70 seconds. The speedup is attributed to parallelism inherent in piped commands on multi-core machines, plus additional tuning such as swapping tools (e.g., using awk) and parallelizing further with xargs. The takeaway is that orchestration frameworks like Airflow can be necessary, but not every data task requires a full workflow system—sometimes a single well-chosen command line is enough to get results fast.

Cornell Notes

Daily retraining of a photo popularity model requires dependable data workflows because training inputs come from multiple places and depend on intermediate model outputs. A Makefile-style dependency tree works when tasks depend only on files, but real systems often depend on database state, external programs, and distributed execution across clusters and teams. Airflow addresses this by letting developers define tasks as Python operators arranged in a directed acyclic graph (DAG), then scheduling, monitoring, restarting failures, and tracking progress. The practical guidance is to avoid over-engineering: start with the simplest approach that works, such as efficient Unix pipelines for log processing, before adopting heavier orchestration.

Why does a “photo popularity predictor” force more than a single training script?

When does a Makefile-like approach work well, and when does it break down?

How does Airflow represent and execute complex dependencies?

What kinds of operational problems make workflow orchestration “complicated software development”?

Why does the Unix log-processing example matter in a discussion about Airflow?

Review Questions

- What specific data sources and intermediate outputs must be refreshed nightly for the photo popularity model, and how do they create dependencies?

- Compare file-based dependency tracking (Makefile) with DAG-based orchestration (Airflow). What dependency types cause Makefile-style approaches to fail?

- In the Unix vs. Hadoop example, which tools and pipeline properties are credited for the large speed difference?

Key Points

- 1

Nightly retraining requires orchestrating multiple data sources, including database metadata, user behavior signals from logs, and intermediate model outputs like cat/dog classifier results.

- 2

Makefile-style dependency trees are effective when tasks depend on files, but they struggle with dependencies on database state, external program execution, and non-file runtime conditions.

- 3

Airflow orchestrates complex pipelines by defining Python operators arranged in a directed acyclic graph (DAG) that enforces execution order.

- 4

Airflow can schedule tasks, submit them to queues, restart failed work, and provide progress dashboards—features that matter when pipelines run on clusters and at scale.

- 5

Workflow orchestration adds real engineering complexity (permissions, scheduling, distributed workers, monitoring), so it’s often best to start simpler.

- 6

Efficient Unix command-line pipelines can outperform large distributed systems for certain log-processing tasks by leveraging parallelism and optimized tools.