Reza Shabani - How Replit Trained Their Own LLMs (LLM Bootcamp)

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Replit trains Ghostwriter in-house to improve code-completion quality for specific languages and workflows, rather than relying on general-purpose or broadly trained code models.

Briefing

Replit’s Ghostwriter code-completion model is built through a tightly engineered pipeline designed to make smaller, cheaper, and more specialized LLMs—without sacrificing control over data, evaluation, or deployment. The core idea is that general-purpose models often miss what code-completion needs (especially across specific languages and coding styles), so Replit trains its own models using permissively licensed code at scale, then customizes tokenization, evaluation, and production serving to fit real user workflows.

The motivation for training in-house centers on customization, cost, and control. Replit argues that broad models like GPT-4 or “ChatGPT Turbo” aren’t well suited to code completion, and that language specialization matters even among code-focused models such as Codex: Replit’s users lean heavily on web stacks such as JSX and TSX, so its training mix is weighted toward those languages. Reducing dependency is another driver: Replit wants developers to have access to models without relying on a small set of external providers. Cost efficiency is framed as existential at scale. With roughly 20 million users, paying per-user prices comparable to Copilot (noted as around “10 bucks a month”) would be too expensive, which pushes Replit toward smaller models that it can host at drastically lower cost. Data privacy and security also factor in, along with retaining IP and controlling when models are updated.

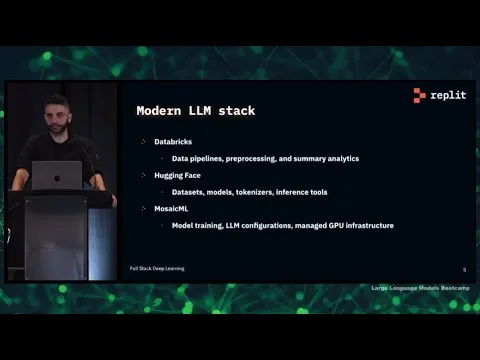

Technically, Replit’s stack is organized into three stages: data processing, model training, and production deployment. Data pipelines run primarily on Databricks, which handles preprocessing, analytics, transformations, and distributed scaling. The starting corpus comes from a large collection of permissively licensed code, deduped and made available on Hugging Face in Parquet or Hugging Face dataset formats. Sources include the GitHub archive and many repositories, filtered by file extensions and licenses, with near-deduplication across roughly 358 programming languages.
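The filtering and near-deduplication steps described above can be sketched in plain Python. This is a minimal illustration, not Replit's pipeline: the allow-lists, the `SourceFile` record, the 5-token shingles, and the 0.85 Jaccard threshold are all assumptions made for the example, and the pairwise comparison stands in for the MinHash/LSH-style methods that large corpora typically need.

```python
import os
import re
from dataclasses import dataclass

# Hypothetical allow-lists; the talk does not list the actual values.
ALLOWED_EXTENSIONS = {".py", ".js", ".jsx", ".ts", ".tsx"}
PERMISSIVE_LICENSES = {"mit", "apache-2.0", "bsd-3-clause"}


@dataclass
class SourceFile:
    path: str
    license: str
    content: str


def keep_file(f: SourceFile) -> bool:
    """Keep files whose extension and license are both on the allow-lists."""
    ext = os.path.splitext(f.path)[1].lower()
    return ext in ALLOWED_EXTENSIONS and f.license.lower() in PERMISSIVE_LICENSES


def shingles(text: str, k: int = 5) -> set:
    """Set of k-token shingles; whitespace differences are ignored."""
    tokens = re.findall(r"\w+|[^\w\s]", text)
    if len(tokens) < k:
        return {tuple(tokens)}
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity of two shingle sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def near_dedup(files, threshold: float = 0.85):
    """Greedy pairwise near-deduplication: keep a file only if it is not
    too similar to any file already kept. Quadratic, so only for small sets."""
    kept, kept_shingles = [], []
    for f in files:
        s = shingles(f.content)
        if all(jaccard(s, other) < threshold for other in kept_shingles):
            kept.append(f)
            kept_shingles.append(s)
    return kept
```

Running `keep_file` first and `near_dedup` second keeps the expensive similarity comparison off files that would be discarded anyway.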

Before training, the pipeline scrubs and normalizes the data. Replit anonymizes sensitive content by removing emails, IP addresses, and secret keys or replacing them with sampled placeholders. It removes auto-generated code using heuristics and regex, and it filters out minified or non-parsable code with language-specific checks, such as AST parsing for Python to avoid training on Python 2 artifacts. Quality filtering is attempted via signals like GitHub stars or issues, but Replit reports little evidence that those metrics reliably improve outcomes. A major differentiator is tokenizer training: instead of relying on an off-the-shelf Codex/CodeGen tokenizer, Replit trains a custom tokenizer and vocabulary on its own code data, aiming for a smaller vocabulary that better reflects code tokens. The result is faster training and inference and more efficient use of the context window.
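A rough sketch of the scrubbing and filtering steps for Python files follows. The regular expressions, the fixed placeholders (the talk describes sampled placeholders), the 200-character line-length threshold, and the function names are all illustrative assumptions rather than Replit's actual rules.

```python
import ast
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
# Hypothetical rule: a long quoted value assigned to a name containing
# "key", "secret", or "token" is treated as a credential.
SECRET_RE = re.compile(
    r"\b(\w*(?:key|secret|token)\w*\s*=\s*)(['\"])[A-Za-z0-9_\-]{16,}\2",
    re.IGNORECASE,
)
AUTOGEN_RE = re.compile(r"auto-?generated|do not edit", re.IGNORECASE)


def anonymize(code: str) -> str:
    """Replace emails, IPv4 addresses, and likely secrets with placeholders."""
    code = EMAIL_RE.sub("user@example.com", code)
    code = IPV4_RE.sub("0.0.0.0", code)
    return SECRET_RE.sub(r"\1\2<SECRET>\2", code)


def looks_autogenerated(code: str, header_lines: int = 5) -> bool:
    """Heuristic: generated files usually say so in their first few lines."""
    header = "\n".join(code.splitlines()[:header_lines])
    return bool(AUTOGEN_RE.search(header))


def looks_minified(code: str, max_avg_line_len: int = 200) -> bool:
    """Heuristic: minified code has very long lines on average."""
    lines = code.splitlines() or [""]
    return sum(len(line) for line in lines) / len(lines) > max_avg_line_len


def parses_as_python3(code: str) -> bool:
    """True if the source parses with the running Python 3 interpreter."""
    try:
        ast.parse(code)
        return True
    except (SyntaxError, ValueError):
        return False


def clean(code: str):
    """Return the anonymized source, or None if the file should be dropped."""
    if looks_autogenerated(code) or looks_minified(code):
        return None
    if not parses_as_python3(code):
        return None
    return anonymize(code)
```

The parse check is what rejects Python 2 style sources such as `print "hello"`, since they raise `SyntaxError` under a Python 3 parser.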

Model training uses MosaicML, described as a GPU-focused platform for distributed training across multiple cloud providers, with pre-tuned LLM configurations and infrastructure for fault tolerance. Training runs are monitored with Weights & Biases, and the goal is stable loss curves without spikes.
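As a small illustration of what "without spikes" might mean in practice, the sketch below flags steps where the loss jumps well above its recent average. It assumes the per-step losses have already been exported as a plain list; the function name, window size, and ratio are made up for the example and are not taken from MosaicML or Weights & Biases.

```python
def find_loss_spikes(losses, window=20, ratio=1.5):
    """Return the step indices whose loss exceeds `ratio` times the mean
    of the previous `window` steps."""
    spikes = []
    for i in range(window, len(losses)):
        trailing_mean = sum(losses[i - window:i]) / window
        if losses[i] > ratio * trailing_mean:
            spikes.append(i)
    return spikes
```

A check like this could run alongside a training dashboard to decide when a run needs manual inspection or a restart from an earlier checkpoint.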

Evaluation is treated as the hardest part. Replit uses HumanEval-style testing for code: the model generates code from a prompt (e.g., a request to write a Python function), and the output is executed against tests to verify correctness, leveraging Hugging Face code inference to run code quickly. But runtime cost, multi-language complexity, and the need for decontamination (ensuring test cases weren’t present in the training data) make evaluation increasingly difficult as models saturate existing benchmarks.

Deployment into production uses FasterTransformer and Triton Inference Server, with batching, request cancellation, autoscaling, and handling of GPU constraints.
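The execution-based check and the decontamination step described above can be sketched as follows. Several things here are assumptions rather than details from the talk: the pass@k estimator is the one commonly reported with the HumanEval benchmark, the 8-token n-gram overlap test is just one simple way to detect leakage, and the bare `exec` call stands in for a properly sandboxed runner.

```python
import math
import re


def passes_tests(candidate_src: str, test_src: str) -> bool:
    """Run a generated solution and its tests in a fresh namespace.

    WARNING: exec runs arbitrary code. A real harness would isolate this in a
    separate process with a timeout and no network access.
    """
    namespace = {}
    try:
        exec(candidate_src, namespace)
        exec(test_src, namespace)
        return True
    except Exception:
        return False


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n samples of which c passed: 1 - C(n-c, k) / C(n, k)."""
    if n - c < k:
        return 1.0
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def ngrams(text: str, n: int = 8) -> set:
    """Set of n-token windows, ignoring whitespace differences."""
    tokens = re.findall(r"\w+|[^\w\s]", text)
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def is_contaminated(train_doc: str, benchmark_items, n: int = 8) -> bool:
    """True if the training document shares any n-gram with a benchmark item."""
    doc_grams = ngrams(train_doc, n)
    return any(not doc_grams.isdisjoint(ngrams(item, n)) for item in benchmark_items)
```

Documents flagged by `is_contaminated` would be dropped from the training set before a run, so that benchmark scores measure generalization rather than memorization.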

Lessons learned emphasize that data pipelines dominate effort and iteration speed, evaluation blends art and science, and real-world “model vibes” from user testing can reveal issues that offline metrics miss. Collaboration across the stack is essential because training-time features may not translate cleanly to inference-time libraries or latency budgets.

Cornell Notes

Replit trains its own Ghostwriter code-completion LLM to better match coding workflows, reduce reliance on external model APIs, and keep costs manageable for a large user base. The pipeline starts with permissively licensed, deduped code (sourced from the GitHub archive and filtered across ~358 languages), then uses Databricks for scalable preprocessing: anonymization, removal of auto-generated and non-parsable code, and quality-oriented filters. A key customization is training a custom tokenizer/vocabulary from Replit’s code data, producing a smaller, code-focused vocabulary that speeds training and inference. MosaicML runs distributed GPU training with tuned LLM configurations, while evaluation relies on executing generated code against test cases and aggressively decontaminating to avoid training-test leakage. Production serving uses FasterTransformer and Triton with batching, cancellation, and autoscaling to meet low-latency needs.
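To make the tokenizer point concrete, here is a toy character-level BPE trainer in plain Python. It is only a sketch of the general idea that a vocabulary learned from code merges frequent code fragments into single tokens; the function names are hypothetical, and a production tokenizer would be trained with a dedicated library on a far larger corpus.

```python
from collections import Counter


def merge_pair(symbols, a, b):
    """Replace every adjacent occurrence of (a, b) with the merged symbol a+b."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and symbols[i] == a and symbols[i + 1] == b:
            out.append(a + b)
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out


def train_bpe(corpus, num_merges):
    """Learn up to `num_merges` merges from a list of code strings."""
    docs = [list(text) for text in corpus]  # start from single characters
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for doc in docs:
            pairs.update(zip(doc, doc[1:]))
        if not pairs:
            break
        (a, b), count = pairs.most_common(1)[0]
        if count < 2:  # nothing repeats any more, so stop early
            break
        merges.append((a, b))
        docs = [merge_pair(doc, a, b) for doc in docs]
    return merges


def encode(text, merges):
    """Tokenize text by applying the learned merges in training order."""
    symbols = list(text)
    for a, b in merges:
        symbols = merge_pair(symbols, a, b)
    return symbols
```

Trained on snippets that repeat a keyword such as `return`, the merges collapse that keyword into far fewer tokens than one per character, which is the mechanism behind shorter sequences and better use of a fixed context window.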

Why would a company choose to train its own LLM instead of using a general-purpose model?

What does Replit’s training pipeline look like end-to-end?

How does Replit prepare code data before training?

Why train a custom tokenizer, and what changes compared with using the Codex/CodeGen tokenizers?

How does Replit evaluate code-completion models, and what makes it difficult?

What tools does Replit use for production inference, and how do they address latency?

Review Questions

- What three motivations does Replit emphasize for training in-house, and how do they connect to Ghostwriter’s design choices?

- Describe two specific data-pipeline filters Replit uses to improve training quality, and explain why each matters for model behavior.

- Why does decontamination become more difficult as code models improve, and how can it distort evaluation results?

Key Points

1. Replit trains Ghostwriter in-house to improve code-completion quality for specific languages and workflows, rather than relying on general-purpose or broadly trained code models.

2. Customization is paired with cost control: smaller, efficient models are necessary for a user base of roughly 20 million to avoid prohibitive API spend.

3. Databricks is central for scalable preprocessing, moving beyond Hugging Face Transformers abstractions to enable distributed transformations, analytics, and extensible notebook-based iteration.

4. Data cleaning is practical and targeted: anonymization removes sensitive identifiers, regex/heuristics remove auto-generated code, and parsability checks (including AST parsing for Python) filter out problematic or legacy code.

5. Tokenizer training is treated as a performance lever: a Ghostwriter-specific tokenizer/vocabulary stays more code-focused than Codex/CodeGen, enabling a smaller vocabulary and faster training/inference.

6. Evaluation for code correctness relies on executing generated outputs against tests, but runtime, multi-language complexity, and decontamination (train/test leakage) make it unusually hard.

7. Production serving uses FasterTransformer plus Triton with batching, request cancellation, and autoscaling to meet low-latency requirements under real GPU constraints.
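The batching and request-cancellation behavior mentioned for production serving can be illustrated with a small `asyncio` sketch. This is not Triton's or FasterTransformer's API: `fake_model`, `Batcher`, `demo`, and the batch-size and wait-time values are hypothetical stand-ins that only show the idea of grouping queued requests and skipping ones whose caller has gone away.

```python
import asyncio


async def fake_model(prompts):
    """Stand-in for one batched GPU forward pass (hypothetical)."""
    await asyncio.sleep(0.01)
    return [p + " <completion>" for p in prompts]


class Batcher:
    """Collects requests for a short window, then runs them as one batch."""

    def __init__(self, max_batch=4, max_wait=0.005):
        self.queue = asyncio.Queue()
        self.max_batch = max_batch
        self.max_wait = max_wait

    async def submit(self, prompt):
        """Enqueue a prompt and wait for its completion."""
        future = asyncio.get_running_loop().create_future()
        await self.queue.put((prompt, future))
        return await future

    async def run(self):
        """Worker loop: build a batch, drop cancelled requests, run the model."""
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self.queue.get()]
            deadline = loop.time() + self.max_wait
            while len(batch) < self.max_batch:
                timeout = deadline - loop.time()
                if timeout <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self.queue.get(), timeout))
                except asyncio.TimeoutError:
                    break
            # Cancelling the caller's task also cancels the future it awaits.
            live = [(p, f) for p, f in batch if not f.cancelled()]
            if not live:
                continue
            outputs = await fake_model([p for p, _ in live])
            for (_, future), output in zip(live, outputs):
                if not future.cancelled():
                    future.set_result(output)


async def demo():
    """Submit three requests and cancel the middle one before it is served."""
    batcher = Batcher()
    worker = asyncio.create_task(batcher.run())
    tasks = [asyncio.create_task(batcher.submit(f"p{i}")) for i in range(3)]
    await asyncio.sleep(0)  # let the requests enqueue
    tasks[1].cancel()       # e.g. the user kept typing, so the request is stale
    results = await asyncio.gather(*tasks, return_exceptions=True)
    worker.cancel()
    return results
```

Calling `asyncio.run(demo())` exercises the flow end to end. For code completion, cancellation matters because a suggestion computed for text the user has already changed is wasted GPU time.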