Roles (2) - ML Teams - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

ML roles differ mainly by lifecycle ownership: product alignment, deployment/monitoring, pipeline building, model training-to-production, research modeling, and business analytics outputs.

Briefing

Machine learning teams split work across distinct roles—ML product management, DevOps, data engineering, ML engineering, ML research, and data science—but the differences mostly come down to which part of the ML lifecycle each role owns and how much software engineering depth they bring. The practical takeaway: labels, pipelines, training, deployment, and business-facing outputs don’t belong to one job title by default; they map to responsibilities, handoffs, and production accountability.

ML product managers sit at the intersection of business needs, users, and the ML team’s technical constraints. Their job mirrors traditional product management—prioritizing projects and ensuring execution matches requirements—but it adds ML-specific work like coordinating design docs, wireframes, plans, and project management workflows (often JIRA). DevOps engineers focus on getting models into production and keeping them running. Their deliverable is a deployed, monitored model system, frequently built using AWS tooling.

Data engineers build the plumbing: data pipelines that store, aggregate, monitor, and transform raw inputs into features and datasets ML teams can use. Their work products often look like distributed data systems (the transcript references Hadoop-style setups) and they help stream data into the ML workflow. ML engineers then take responsibility for the end-to-end operational lifecycle—training and prediction, and deploying models into real-world production. Their output is typically a prediction system that users or downstream services actually rely on.

ML researchers focus more narrowly on the training/prediction modeling portion, often in a research context aimed at forward-looking problems. The boundary between research and engineering can blur, but the usual pattern is that researchers either hand models off for productionization or work on ideas that may never justify full production deployment. Data scientists act as a catch-all title across organizations: often they answer business questions using analytics and data, producing reports, charts, and decision-support outputs.

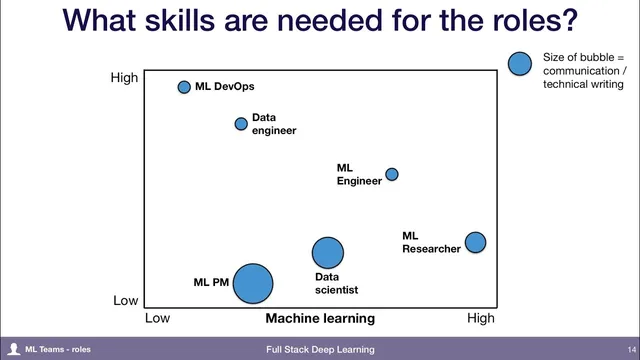

A skills map in the discussion ties machine learning knowledge to engineering depth, with communication and technical writing called out as a major differentiator. DevOps roles skew toward software engineering; strong software engineers can enter ML teams even without deep ML experience. Data engineering requires affinity with ML concepts because pipelines feed model training. ML engineering is described as rare because it blends ML capability (e.g., training models in TensorFlow) with software engineering. Researchers are ML experts but typically not expected to match the same level of software engineering depth. Data scientists vary widely in background, but success often depends heavily on communication and technical writing because their work frequently culminates in organizational reporting and leadership-facing decision tools.

The Q&A sharpens operational ownership: data labeling and quality control are often best treated as part of the machine learning function, since ML engineers consume labels and are accountable for prediction quality. Team composition has no universal ratio; it depends on the problem’s specifics. The discussion also notes a growing pattern: some teams add “full stack” internal tooling engineers to build ML-specific tooling that third parties don’t yet provide. When asked where to start for a new ML company, the advice leans toward hiring people closer to full-stack execution—capable of training models and deploying them—rather than beginning solely with researchers. Across roles, a shared core skill emerges: understanding how ML differs from traditional software, including failure modes, distribution shifts, and the reality that timelines and outcomes can’t be guaranteed until experiments run.

Cornell Notes

Machine learning teams organize work around lifecycle ownership: ML product managers align business/user priorities with ML constraints; DevOps engineers deploy and monitor models in production; data engineers build pipelines that feed ML workflows; ML engineers handle training-to-prediction and production deployment; ML researchers focus on modeling in a research context; data scientists serve as a broad analytics-and-insights role. The transcript emphasizes that role boundaries often blur, but accountability for prediction quality and production readiness drives where tasks like labeling and quality control should live. A skills framework links ML knowledge, software engineering depth, and communication/technical writing needs. For new teams, hiring toward full-stack execution can deliver value faster than starting only with research.

What distinguishes an ML product manager from a traditional product manager?

Where should data labeling and quality control responsibilities sit?

How do DevOps engineers and ML engineers differ in day-to-day deliverables?

Why is ML engineering described as a rare skill set?

What makes communication and technical writing especially important for some roles?

What advice is given for companies starting ML capabilities?

Review Questions

- Which role is most directly accountable for keeping a deployed model running in production, and what is its primary work product?

- Why does the transcript argue that data labeling often belongs to the machine learning function rather than a separate team?

- What shared mindset is described as core across ML roles, and how does it affect project planning and expectations?

Key Points

- 1

ML roles differ mainly by lifecycle ownership: product alignment, deployment/monitoring, pipeline building, model training-to-production, research modeling, and business analytics outputs.

- 2

DevOps engineers deliver deployed, monitored models—often using AWS tooling—while ML engineers own the broader training-to-prediction-to-production lifecycle.

- 3

Data engineers build and maintain pipelines (including feature creation and streaming into ML workflows) so ML teams can train effectively.

- 4

Data labeling and quality control frequently work best when owned by the machine learning function because ML engineers consume labels and are accountable for prediction quality.

- 5

ML engineering is rare because it requires both ML skills (e.g., TensorFlow model work) and strong software engineering for production systems.

- 6

For new ML efforts, hiring toward full-stack execution can produce earlier user value than starting with researchers alone.

- 7

A core cross-role skill is understanding ML’s non-determinism—failure modes, distribution shifts, and the inability to guarantee timelines without experimentation.