Scoping Review Steps - Heather Colquhoun

Based on Evidence Synthesis Ireland's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

Scoping reviews are most useful when the research question is about mapping a field—what concepts exist, what evidence types are available, and where gaps remain—not when the goal is to determine whether an intervention works. Heather Colquhoun, drawing on years of collective experience and methodological work, frames scoping reviews as a form of reconnaissance: a systematic, exploratory way to describe the boundaries of a topic and the shape of the literature. That distinction matters because many journals have grown wary of low-quality scoping reviews, often produced with unclear purposes, weak methods, or outputs that don’t match the intended use of the findings.

Colquhoun grounds the discussion in the “family of knowledge synthesis,” placing scoping reviews alongside systematic reviews, rapid reviews, evidence maps, realist reviews, and living reviews. Across these approaches, screening and extraction practices often share similarities, but the question type and outputs differ. For scoping reviews, the defining features are the mapping objective and the focus on breadth: identifying concepts, types of evidence, and research gaps within a defined area. She emphasizes that scoping reviews should not be treated as a substitute for clinical effectiveness reviews; once intervention effects become central, the work should be reconsidered as a systematic review (or another appropriate approach) rather than a scoping review.

She also addresses persistent “gray areas” between scoping and systematic reviews, including cases where researchers label a review as “systematic” even when it is not about clinical effectiveness (for example, examining how theory is used in trials). The practical takeaway: the label alone is less important than the underlying purpose—what the review is meant to accomplish and how the results will be used.

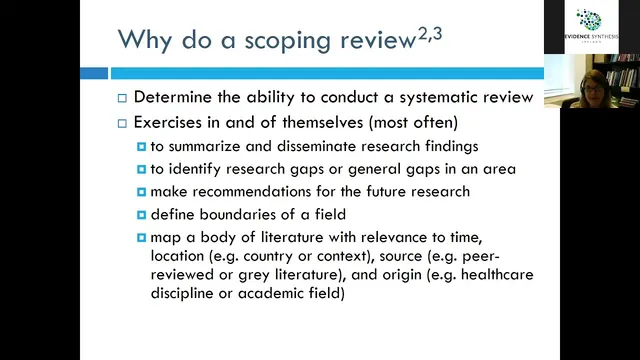

Why do scoping reviews? Common reasons include summarizing and disseminating research, identifying gaps, informing future research priorities, and mapping a body of literature relevant to multiple concepts. Colquhoun is skeptical of one frequent justification—doing a scoping review merely to decide whether a systematic review is feasible—because it can lead to wasted effort when the systematic review later turns out to be empty. She argues that scoping reviews are more defensible when they stand as an end product: a structured map of what exists and what is missing.

Methodologically, she highlights steps that improve rigor and credibility: publish or register a protocol (because PROSPERO may not accept scoping reviews, the Open Science Framework is often used), search widely but make deliberate scope-limiting decisions, and frame the question using PCC (Population, Concept, Context) rather than forcing it into PICO. Purpose is treated as a design driver: without a clear "why," teams can answer the question yet struggle to synthesize the results into something actionable.
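To make PCC framing concrete, here is a minimal Python sketch. It assumes a hypothetical `PCCQuestion` type (not something from the talk or from any review tool) that stores synonyms for each PCC element, keeps the review's purpose next to the question, and builds a generic Boolean search string by OR-ing synonyms within an element and AND-ing the three elements together. Real database syntax (field tags, truncation, subject headings) varies and is not modeled here. The example terms are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class PCCQuestion:
    """Hypothetical container for a scoping review question framed with PCC."""

    population: list[str]
    concept: list[str]
    context: list[str]
    purpose: str = ""  # the "why": how the resulting map is meant to be used

    def __post_init__(self) -> None:
        # Each PCC element needs at least one term; an empty element means the question is not framed yet.
        for name in ("population", "concept", "context"):
            if not getattr(self, name):
                raise ValueError(f"PCC element '{name}' has no terms")

    def as_question(self) -> str:
        # Use the first term of each element as the headline wording.
        return (
            f"What is known about {self.concept[0]} "
            f"among {self.population[0]} in {self.context[0]}?"
        )

    def search_string(self) -> str:
        # Synonyms within one element are OR-ed; the three elements are AND-ed.
        blocks = []
        for terms in (self.population, self.concept, self.context):
            quoted = [f'"{t}"' if " " in t else t for t in terms]
            blocks.append("(" + " OR ".join(quoted) + ")")
        return " AND ".join(blocks)


q = PCCQuestion(
    population=["stroke survivors", "stroke"],
    concept=["theory use", "theoretical framework"],
    context=["rehabilitation"],
    purpose="Map how theory informs rehabilitation trials",
)
print(q.as_question())
print(q.search_string())
```

Because the elements are AND-ed, trimming synonyms or tightening any one element (for example, the context) shrinks the result set, which is one way the research question itself, rather than resources alone, can set the scope.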

Colquhoun also points to recurring quality problems in published scoping reviews: infrequent protocol reporting, inconsistent use of independent screening, incomplete extraction details, and missing flow diagrams such as the PRISMA flow diagram. She notes that rehabilitation-focused scoping reviews more often use qualitative synthesis, but they still show methodological gaps. A major improvement effort is the PRISMA extension for scoping reviews (PRISMA-ScR), which sets out 20 essential reporting items plus 2 optional items and is supported by EQUATOR resources and tip sheets.
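Missing or inconsistent flow diagrams are easier to avoid when counts are tallied as screening proceeds. The sketch below is a minimal Python illustration that assumes simple two-stage screening (title/abstract, then full text). The `FlowCounts` class and its field names are hypothetical and do not reproduce the official PRISMA flow diagram template. It derives each box from the previous one and rejects tallies that go negative. The numbers in the example are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class FlowCounts:
    """Hypothetical tallies for a PRISMA-style flow diagram (two-stage screening)."""

    from_databases: int
    from_other_sources: int  # e.g., targeted gray literature, reference lists
    duplicates_removed: int
    excluded_title_abstract: int
    excluded_full_text: dict[str, int]  # exclusion reason -> number of reports

    def flow(self) -> dict[str, int]:
        # Each box is derived from the one above it.
        identified = self.from_databases + self.from_other_sources
        screened = identified - self.duplicates_removed
        full_text = screened - self.excluded_title_abstract
        included = full_text - sum(self.excluded_full_text.values())
        counts = {
            "identified": identified,
            "screened": screened,
            "full_text_assessed": full_text,
            "included": included,
        }
        # A negative box means the recorded tallies contradict each other.
        for box, n in counts.items():
            if n < 0:
                raise ValueError(f"inconsistent tallies: {box} = {n}")
        return counts


counts = FlowCounts(
    from_databases=1200,
    from_other_sources=85,
    duplicates_removed=310,
    excluded_title_abstract=860,
    excluded_full_text={"wrong concept": 48, "wrong context": 21, "not primary evidence": 12},
)
print(counts.flow())
```

Keeping full-text exclusions as a reason-to-count mapping means the diagram's "reports excluded, with reasons" box can be filled directly from the same record.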

Overall, the core message is pragmatic: scoping reviews should be built around a mapping question, executed with transparent, protocol-driven rigor, and reported in a way that produces outputs aligned with their intended decision-making value.

Cornell Notes

Scoping reviews are systematic, exploratory knowledge syntheses designed to map a field (identifying concepts, evidence types, and research gaps) rather than to judge whether interventions are effective. Colquhoun emphasizes that the defining feature is the question and purpose: once intervention effects are the target, a systematic review is usually the better fit. She recommends publishing a protocol (often via the Open Science Framework when PROSPERO won’t accept scoping reviews), using PCC to frame questions, and limiting scope using research-question logic rather than resource constraints alone. Purpose should guide outputs and synthesis methods, and reporting quality is improving through PRISMA for scoping reviews and related EQUATOR guidance. Published scoping reviews still commonly fall short on protocols, screening and extraction transparency, and PRISMA flow reporting.

What makes a scoping review different from a systematic review in practice?

Why is protocol publication (or registration) treated as a quality requirement for scoping reviews?

How should scoping review questions be framed when PICO feels awkward?

What’s the biggest design risk when scoping reviews don’t start with a clear purpose?

How can scope be limited without undermining the mapping goal?

What quality problems show up repeatedly in published scoping reviews?

Review Questions

- When does an intervention-focused question suggest switching from a scoping review to a systematic review?

- How does PCC framing help prevent scoping reviews from becoming unmanageably broad?

- What are three concrete reporting or methodological elements that commonly distinguish higher-quality scoping reviews from lower-quality ones?

Key Points

1. Scoping reviews are for mapping concepts, evidence types, and research gaps; intervention effectiveness questions usually require a different review approach.

2. A scoping review’s purpose must be explicit because it determines how results should be synthesized and what outputs will be useful.

3. Protocol publication improves rigor and transparency; when PROSPERO won’t accept scoping reviews, the Open Science Framework is commonly used to post protocols.

4. Use PCC (Population, Concept, Context) to structure scoping review questions instead of forcing PICO.

5. Scope-limiting decisions should be justified by the research question (e.g., targeted gray literature, sampling reference lists, reducing objectives or extraction depth).

6. Independent screening and detailed extraction reporting are frequent weak points; PRISMA flow diagrams and eligibility criteria should be clearly reported.

7. PRISMA for scoping reviews (with EQUATOR resources) provides a standardized reporting checklist to raise quality and reduce journal rejections.
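Key Point 6 flags independent screening as a frequent weak point. As a minimal sketch, assume each of two reviewers records an include/exclude decision per record ID; the record IDs and the `reconcile` and `percent_agreement` helpers are hypothetical. The comparison step can then be written in Python as below. How conflicts get resolved (for example, by discussion or a third reviewer) is a team decision worth recording in the protocol; the code only surfaces them.

```python
def reconcile(
    reviewer_a: dict[str, bool], reviewer_b: dict[str, bool]
) -> tuple[list[str], list[str], list[str]]:
    """Split records into agreed includes, agreed excludes, and conflicts."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("both reviewers must screen the same set of records")
    include, exclude, conflicts = [], [], []
    for record_id in sorted(reviewer_a):
        a, b = reviewer_a[record_id], reviewer_b[record_id]
        if a != b:
            conflicts.append(record_id)  # needs resolution before the next stage
        elif a:
            include.append(record_id)
        else:
            exclude.append(record_id)
    return include, exclude, conflicts


def percent_agreement(reviewer_a: dict[str, bool], reviewer_b: dict[str, bool]) -> float:
    """Share of records on which the two independent decisions match."""
    if not reviewer_a:
        raise ValueError("no records screened")
    _, _, conflicts = reconcile(reviewer_a, reviewer_b)
    return 1 - len(conflicts) / len(reviewer_a)


a = {"r1": True, "r2": False, "r3": True, "r4": False}
b = {"r1": True, "r2": True, "r3": True, "r4": False}
print(reconcile(a, b))
print(percent_agreement(a, b))
```

Reporting both the conflict count and how conflicts were resolved makes the screening process transparent in the way the quality problems above suggest is often missing.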