Simulating and understanding phase change | Guest video by Vilas Winstein

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

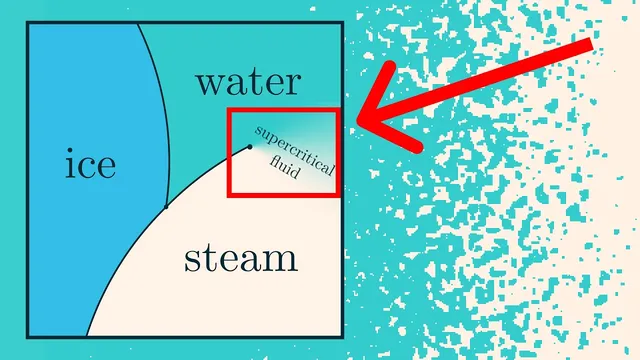

A discretized “liquid–vapor” model reproduces water-like phase behavior, complete with a liquid–gas phase transition, a supercritical region, metastability, and fractal structure at the critical point, using only a simple energy rule plus statistical mechanics. The key insight is that macroscopic phases emerge from a competition between energy minimization (favoring clumped molecules) and entropy maximization (favoring many disordered configurations). That balance shifts with temperature, and the model’s phase diagram in the (temperature, chemical potential) plane mirrors the qualitative shape of real water’s phase diagram.

The simulation represents a box as a grid of pixels: blue pixels hold molecules, white pixels are empty space. Two parameters steer the system. Temperature controls how strongly energy matters relative to randomness. Pressure is hard to implement directly in a fixed-size grid, so the model uses chemical potential instead, which plays a role analogous to pressure by controlling the tendency for the number density to increase or decrease. At high temperature, density changes smoothly with chemical potential, matching the idea of a supercritical fluid in which liquid and gas are continuously connected. At low temperature, the system abruptly switches between low-density (gas-like) and high-density (liquid-like) behavior, producing a phase transition line; during the transition, the grid separates into regions that are mostly liquid-like or mostly gas-like rather than blending smoothly.

Under the hood, the model samples microstates using the Boltzmann distribution: each configuration’s probability is proportional to exp(−E/T), where E is the microstate energy and T is measured in units where Boltzmann’s constant is 1. The video motivates this formula by starting from isolated systems, where all microstates at fixed energy are equally likely, then coupling two systems and asking what quantity equalizes at equilibrium. That quantity is the derivative of entropy with respect to energy, ∂S/∂E, which is identified with 1/T; this leads directly to exp(−E/T) when a small system is placed in a large heat bath at temperature T.
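The argument in the paragraph above can be written out in a few lines. This is a sketch under common textbook assumptions that the summary does not spell out: entropy is taken as S = ln Ω (the log of the number of microstates, with Boltzmann’s constant set to 1), and the heat bath is large enough that a first-order expansion of its entropy suffices. The symbols Ω, E_A, E_B and E_s are introduced here for illustration.

```latex
% Two coupled systems A and B share a fixed total energy E = E_A + E_B.
% The most likely split maximizes the number of joint microstates:
\begin{align*}
\Omega_{\text{joint}}(E_A) &= \Omega_A(E_A)\,\Omega_B(E - E_A),
  \qquad S = \ln \Omega, \\
0 &= \frac{\partial}{\partial E_A}\bigl[S_A(E_A) + S_B(E - E_A)\bigr]
  \;\Longrightarrow\;
  \frac{\partial S_A}{\partial E_A} = \frac{\partial S_B}{\partial E_B}
  \equiv \frac{1}{T}.
\end{align*}
% Small system in microstate s (energy E_s) coupled to a large bath:
\begin{align*}
P(s) &\propto \Omega_{\text{bath}}(E - E_s)
      = \exp\bigl[S_{\text{bath}}(E - E_s)\bigr] \\
     &\approx \exp\Bigl[S_{\text{bath}}(E)
        - E_s\,\frac{\partial S_{\text{bath}}}{\partial E}\Bigr]
      \propto e^{-E_s/T}.
\end{align*}
```

The first block says that energy flows until ∂S/∂E matches on both sides; the second says that each unit of energy taken by the small system costs the bath a factor of e^{−1/T} in available microstates.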

To make the phase transition happen in a tractable way, the energy function is deliberately simple: molecules prefer to be adjacent, with each neighboring pair lowering energy (implemented as −1 per adjacent pair, and 0 otherwise). At low temperature, the energy advantage of forming a dense droplet beats the entropy loss from restricting configurations. At high temperature, entropy dominates, so dispersed “gas” configurations win.
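As a concrete illustration of this energy rule, the following minimal sketch counts adjacent pairs on a small grid. The function name `energy`, the list-of-lists grid representation, and the choice of open (non-wrapping) edges are assumptions made for this example; the summary does not say how the video handles the boundary.

```python
import math

def energy(grid):
    """Energy of a 0/1 occupancy grid: -1 for every pair of horizontally
    or vertically adjacent molecules, 0 otherwise.

    Assumption: open boundaries (pixels on the edge have fewer neighbours).
    """
    rows, cols = len(grid), len(grid[0])
    pairs = 0
    for r in range(rows):
        for c in range(cols):
            if grid[r][c]:
                # Look only right and down so each pair is counted once.
                if c + 1 < cols and grid[r][c + 1]:
                    pairs += 1
                if r + 1 < rows and grid[r + 1][c]:
                    pairs += 1
    return -pairs

# Four molecules packed into a 2x2 droplet versus four isolated molecules.
clumped = [[1, 1, 0, 0],
           [1, 1, 0, 0],
           [0, 0, 0, 0],
           [0, 0, 0, 0]]
dispersed = [[1, 0, 1, 0],
             [0, 0, 0, 0],
             [1, 0, 1, 0],
             [0, 0, 0, 0]]

print(energy(clumped), energy(dispersed))  # -4 0

# Relative Boltzmann weight of ONE clumped state versus ONE dispersed state.
for temperature in (0.5, 4.0):
    ratio = math.exp(-(energy(clumped) - energy(dispersed)) / temperature)
    print(temperature, ratio)  # about 2981 at T = 0.5, about 2.7 at T = 4
```

The ratio compares single microstates only. There are far more dispersed arrangements than clumped ones, so a per-state advantage of about 2.7 at high temperature is easily outweighed by counting, while an advantage in the thousands at low temperature is not.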

Efficient sampling requires more than writing down the distribution. Instead of enumerating exponentially many microstates, the simulation uses Markov chain Monte Carlo via Kawasaki Dynamics: it repeatedly proposes local moves that conserve the molecule count (swapping the contents of two pixels, so that a molecule moves into an empty pixel) and accepts them with probabilities determined by the Boltzmann weight ratio exp(−ΔE/T), where ΔE is the energy change of the proposed move. Allowing the molecule number to fluctuate gives a grand-canonical version controlled by chemical potential, in which add/remove moves are proposed per pixel; this enables larger, GPU-friendly simulations.
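The swap-and-accept loop described above might look like the following sketch. Several details are assumptions rather than facts about the video's code: the swap is between nearest-neighbour pixels, the acceptance rule is the Metropolis choice min(1, exp(−ΔE/T)), edges are open, and the names `kawasaki_step`, `occupied_neighbors` and `total_energy` are hypothetical.

```python
import math
import random

# Offsets of the four nearest neighbours on the square grid.
NEIGHBOR_OFFSETS = ((1, 0), (-1, 0), (0, 1), (0, -1))

def occupied_neighbors(grid, r, c):
    """Count molecules among the in-bounds neighbours of pixel (r, c)."""
    rows, cols = len(grid), len(grid[0])
    count = 0
    for dr, dc in NEIGHBOR_OFFSETS:
        rr, cc = r + dr, c + dc
        if 0 <= rr < rows and 0 <= cc < cols:
            count += grid[rr][cc]
    return count

def total_energy(grid):
    """-1 per adjacent pair; every pair is seen from both ends, so halve."""
    twice_pairs = sum(occupied_neighbors(grid, r, c)
                      for r in range(len(grid))
                      for c in range(len(grid[0])) if grid[r][c])
    return -(twice_pairs // 2)

def kawasaki_step(grid, temperature, rng):
    """Propose swapping a random pixel with a random neighbour.

    Returns True if the grid changed. The molecule count never changes.
    """
    rows, cols = len(grid), len(grid[0])
    r1, c1 = rng.randrange(rows), rng.randrange(cols)
    dr, dc = rng.choice(NEIGHBOR_OFFSETS)
    r2, c2 = r1 + dr, c1 + dc
    if not (0 <= r2 < rows and 0 <= c2 < cols):
        return False  # proposal fell off the grid: treat as rejected
    if grid[r1][c1] == grid[r2][c2]:
        return False  # swapping identical pixels changes nothing
    if grid[r1][c1] == 0:
        r1, c1, r2, c2 = r2, c2, r1, c1  # make (r1, c1) the occupied pixel
    bonds_before = occupied_neighbors(grid, r1, c1)
    grid[r1][c1], grid[r2][c2] = 0, 1  # tentatively move the molecule
    bonds_after = occupied_neighbors(grid, r2, c2)
    delta_e = bonds_before - bonds_after  # E = -(number of bonds)
    if delta_e <= 0 or rng.random() < math.exp(-delta_e / temperature):
        return True
    grid[r1][c1], grid[r2][c2] = 1, 0  # rejected: undo the move
    return False

# Usage: start from a random 30%-filled grid and let it relax at low T.
rng = random.Random(0)
grid = [[1 if rng.random() < 0.3 else 0 for _ in range(16)] for _ in range(16)]
for _ in range(20000):
    kawasaki_step(grid, temperature=0.4, rng=rng)
print(total_energy(grid), sum(map(sum, grid)))
```

Only the bonds of the moving molecule are recomputed, so each step costs constant time regardless of grid size; that locality is what makes this kind of sampler cheap compared with enumerating microstates.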

Beyond the phase diagram, the model shows physically familiar phenomena. Just past the transition line the system can linger in the “wrong” (metastable) phase, for example a gas that should already have condensed: a droplet or bubble of the new phase grows only once it exceeds a critical size, so the system stays metastable until a sufficiently large droplet forms or the parameters are pushed far enough past the line to supply the needed “kick”. At the critical point, density patterns become scale-invariant, self-similar fractals. The same lattice model maps onto the Ising model (liquid/gas ↔ spin up/down); replacing the two spin states with continuous spin directions gives the XY model, which exhibits vortices. Finally, the video emphasizes universality: many microscopic details can change without destroying the qualitative macroscopic behavior, which is why simplified models can still capture the essence of real phase transitions.
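The critical droplet size mentioned in the metastability discussion above can be estimated with a short calculation. This is a rough low-temperature sketch, not the video's derivation. It assumes the grand-canonical weight exp(−(E − μN)/T) with E = −(number of adjacent pairs), neglects entropy entirely, and considers a square L × L droplet in an otherwise empty grid. The symbols H, δ and L* are introduced here for illustration.

```latex
% Bulk comparison: a full grid has 2 bonds per site, an empty grid has none.
% Per-site cost H/N: liquid = -2 - \mu, gas = 0, so they tie at \mu_c = -2.
% Let \delta = \mu + 2 > 0 measure how far past the transition line we are.
\begin{align*}
N &= L^2, \qquad \text{pairs} = 2L(L-1), \\
H(L) &= -2L(L-1) - \mu L^2 = 2L - \delta L^2, \\
\frac{dH}{dL} &= 2 - 2\delta L = 0
  \;\Longrightarrow\; L^{*} = \frac{1}{\delta},
  \qquad H(L^{*}) = \frac{1}{\delta}.
\end{align*}
```

Droplets smaller than L* lower their cost by shrinking, and larger ones by growing. For example, δ = 0.1 gives L* = 10 and a barrier of 10, which at low temperature is rarely crossed by chance; this is consistent with the system lingering in the gas phase close to the line.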

Cornell Notes

The liquid–vapor lattice model generates a realistic phase diagram by sampling microstates with the Boltzmann distribution, where configuration probability scales like exp(−E/T). Temperature controls the tradeoff between energy (favoring adjacent molecules and dense droplets) and entropy (favoring many disordered configurations). Chemical potential replaces pressure by controlling the preferred molecule density when the system can exchange particles with a reservoir. At high temperature the density varies smoothly (supercritical behavior), while at low temperature the model switches abruptly between gas-like and liquid-like macrostates along a phase transition line. Near the critical point, the system forms fractal, self-similar structures, and the same physics connects to the Ising and XY models via spin interpretations.
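The role of chemical potential in the summary above can be explored with a small grand-canonical sampler. This is a sketch under stated assumptions, not the video's implementation: it weights states by exp(−(E − μN)/T) with that sign convention for μ, uses Metropolis acceptance, open edges, an initially empty grid, and hypothetical names (`grand_canonical_step`, `density_after_sweeps`).

```python
import math
import random

NEIGHBOR_OFFSETS = ((1, 0), (-1, 0), (0, 1), (0, -1))

def occupied_neighbors(grid, r, c):
    """Count molecules among the in-bounds neighbours of pixel (r, c)."""
    rows, cols = len(grid), len(grid[0])
    count = 0
    for dr, dc in NEIGHBOR_OFFSETS:
        rr, cc = r + dr, c + dc
        if 0 <= rr < rows and 0 <= cc < cols:
            count += grid[rr][cc]
    return count

def grand_canonical_step(grid, temperature, mu, rng):
    """Propose adding or removing one molecule at a random pixel."""
    r, c = rng.randrange(len(grid)), rng.randrange(len(grid[0]))
    bonds = occupied_neighbors(grid, r, c)
    # Adding a molecule changes E - mu*N by -(bonds + mu);
    # removing one changes it by +(bonds + mu).
    delta = -(bonds + mu) if grid[r][c] == 0 else (bonds + mu)
    if delta <= 0 or rng.random() < math.exp(-delta / temperature):
        grid[r][c] = 1 - grid[r][c]

def density_after_sweeps(temperature, mu, size=12, sweeps=200, seed=0):
    """Fraction of occupied pixels after `sweeps` passes from an empty grid.

    This is the density reached in a finite run, which near the transition
    may still be a metastable value rather than the equilibrium one.
    """
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    for _ in range(sweeps * size * size):
        grand_canonical_step(grid, temperature, mu, rng)
    return sum(map(sum, grid)) / (size * size)

# Usage: sweep mu at a low and a high temperature and compare the curves.
for temperature in (0.4, 2.0):
    for mu in (-3.0, -2.5, -2.0, -1.5, -1.0):
        rho = density_after_sweeps(temperature, mu)
        print(f"T={temperature} mu={mu:+.1f} density={rho:.2f}")
```

Under these assumptions one would expect the high-temperature row to rise gradually with μ and the low-temperature row to jump between nearly empty and nearly full. Because every pixel update depends only on its four neighbours, updates of non-adjacent pixels are independent, which is the property that makes this variant suitable for parallel hardware.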

Why does the model use chemical potential instead of pressure, and how does it affect density?

How does the Boltzmann distribution arise from equilibrium between two systems?

What specific energy rule makes droplets form at low temperature?

How does Kawasaki Dynamics sample the Boltzmann distribution without enumerating all microstates?

Why can the system stay in the “wrong” phase near the transition line?

What makes the critical point special in this model, and what other models does it connect to?

Review Questions

- How does the competition between energy and entropy determine whether the model behaves like a gas or a liquid at a given temperature?

- Derive the logic that identifies temperature as the equalized quantity ∂S/∂E, and explain how that leads to exp(−E/T) probabilities.

- What conditions produce metastability in the simulation, and how does inserting a large droplet change the outcome?

Key Points

1. Phase transitions in the lattice liquid–vapor model come from balancing energy minimization (adjacent molecules lower energy) against entropy maximization (many disordered configurations).

2. Temperature governs the strength of the Boltzmann weighting exp(−E/T), shifting the system between gas-like and liquid-like macrostates.

3. Chemical potential replaces pressure in fixed-size simulations by controlling particle density through grand-canonical exchange with a particle reservoir.

4. The Boltzmann distribution is derived by coupling two isolated systems and requiring that ∂S/∂E be equal in both at equilibrium, which identifies that derivative as 1/T.

5. Efficient sampling uses Markov chain Monte Carlo: Kawasaki Dynamics proposes local swaps and accepts them with probabilities set by exp(−ΔE/T).

6. The model exhibits metastability because droplets must exceed a critical size to grow; smaller droplets shrink and the system stays in its original phase.

7. At the critical point, the system becomes scale-invariant with fractal, self-similar structures and connects to Ising and XY models via spin interpretations.
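The spin interpretation in the last key point can be made explicit with a change of variables. This is a sketch under assumptions the summary does not state: occupancies n_i ∈ {0, 1}, the grand-canonical cost H = E − μN with that sign convention for μ, a square lattice with four neighbours per site, and no boundary effects (an infinite or wrap-around grid). The symbols J and h are the standard Ising coupling and field, introduced here for illustration.

```latex
% Substitute n_i = (1 + s_i)/2 with spins s_i = \pm 1
% (liquid <-> spin up, gas <-> spin down).
\begin{align*}
H &= -\sum_{\langle i,j\rangle} n_i n_j \;-\; \mu \sum_i n_i \\
  &= -\frac{1}{4}\sum_{\langle i,j\rangle} s_i s_j
     \;-\; \Bigl(1 + \frac{\mu}{2}\Bigr)\sum_i s_i \;+\; \text{const} \\
  &= -J\sum_{\langle i,j\rangle} s_i s_j \;-\; h\sum_i s_i + \text{const},
  \qquad J = \tfrac{1}{4}, \quad h = 1 + \tfrac{\mu}{2}.
\end{align*}
% Zero field, h = 0, corresponds to \mu = -2: the liquid-gas transition line.
% Onsager's exact square-lattice result T_c = 2J / \ln(1 + \sqrt{2})
% then gives T_c \approx 0.567 for J = 1/4.
```

If the video's conventions match these assumptions, the transition line sits at μ = −2 and ends at a critical temperature of about 0.567; with a different sign or scale for μ or E, the numbers shift accordingly while the mapping itself is unchanged.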