Social learning in independent multi-agent reinfor… | Kamal N’dousse | OpenAI Scholars Demo Day 2020

Based on OpenAI's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Social learning is framed as a testable question for independent RL agents: when does observing experts improve learning beyond what direct experience provides?

Briefing

Social learning is central to human intelligence, but it’s unclear when independent reinforcement-learning agents can benefit from one another’s behavior. Kamal N’dousse frames the core problem as a test of whether RL agents can learn from “experts” merely by observing them in shared environments—and if so, under what conditions that social signal beats learning from direct experience.

The talk starts with a human-scale motivation and a monkey parable from experimental sociology: monkeys punish any peer that tries to reach bananas after prior punishment (cold water). Even when the punishment stops, the behavior persists, and new monkeys quickly get punished for attempting the same forbidden action—suggesting a cultural pattern can emerge from observation and enforcement. N’dousse notes the story is apocryphal, but treats it as a template for studying social learning in artificial agents.

To investigate, he builds tools for independent multi-agent RL. He develops marl grid, an open-source grid-world suite compatible with the OpenAI Gym API, designed to scale to many agents and support reproducible experiments. A key environment is “goal cycle,” where agents must traverse gold tiles in a specific order to earn reward; stepping on tiles out of order triggers a configurable penalty. By tuning the penalty, the environment changes how costly exploration is: with low penalties, agents can “mess up” while still eventually finding rewards, while with high penalties, exploration becomes so aversive that agents learn to commit early to a first successful path and avoid alternatives. This knob lets him control the difficulty of learning from the environment itself—crucial for testing whether expert demonstrations provide an advantage.

On the algorithm side, he reports that DQN struggled when long-horizon behavior required memory, even after adding LSTM and trying limited prioritized experience replay. Switching to PPO produced a major improvement. Further gains came from a practical technique he calls “hidden state refreshing”: during PPO’s repeated update steps, the LSTM hidden states are periodically refreshed so the agent’s internal memory doesn’t become stale relative to the evolving policy. With this change, goal-cycle agents achieve higher rewards and more stable training.

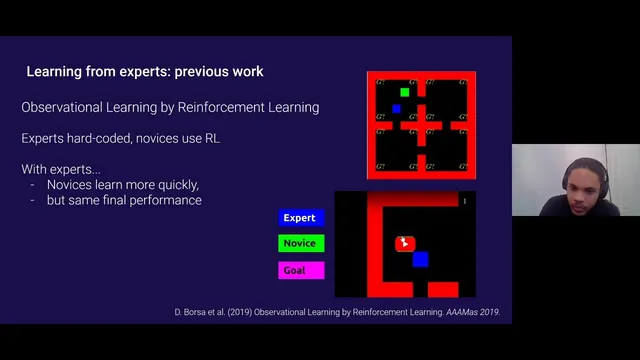

With the infrastructure in place, N’dousse turns to observational learning. He first tries to replicate a DeepMind result, “Observational Learning by Reinforcement Learning” (where experts are hard-coded and novices learn via RL in a simple grid). In that earlier work, experts speed up novice learning, but the novices ultimately don’t outperform what they would achieve learning alone. In N’dousse’s replication on a cluttered grid with a single goal, experts again fail to provide a learning speedup.

The more interesting outcome appears in goal cycle. When the cycle structure is masked from novice agents—so direct environmental information is less immediately actionable—novices can learn to follow expert agents’ behavior. In demonstrations, novices converge toward the experts’ strategy, though they may land at slightly lower performance when an expert happens to get trapped, indicating imitation can propagate suboptimal trajectories.

The emerging conclusion is cautious: learning from experts is difficult when agents can already infer the task from direct interaction. Social cues become valuable when the environment makes the right strategy hard to discover on one’s own, and when expert behavior provides information that direct observation doesn’t readily reveal. Next steps include scaling to more goals and varying penalty settings, plus measuring whether novices truly acquire the same skill by testing them in new environments without experts. He also proposes exploring priors or mechanisms that encourage social learning, and asks whether novices could ever surpass experts—an open direction for future experiments.

Cornell Notes

N’dousse investigates when independent RL agents can learn from experts just by observing them in shared environments. Using marl grid and a goal-cycle task where agents must follow an ordered sequence of actions, he shows that direct learning often dominates: in a cluttered single-goal grid, expert presence doesn’t speed up novice learning. But when the task structure is masked so novices can’t easily infer the correct cycle from experience, novices learn to imitate expert behavior and converge toward the experts’ strategy. Algorithmic progress—especially PPO with LSTM and “hidden state refreshing”—is presented as enabling stable long-horizon learning needed for these social-learning tests.

Why does the goal-cycle environment matter for studying social learning?

What role does “hidden state refreshing” play in the RL results?

How did DQN with memory perform compared with PPO?

What happened in the replication of DeepMind’s observational learning result?

Under what condition did expert observation start to help in goal cycle?

Review Questions

- What experimental lever (environment parameter or observation condition) most directly determines whether expert behavior becomes useful to novices in goal cycle?

- How does hidden state refreshing address the mismatch between stored LSTM memory and the policy being updated during PPO training?

- Why might experts fail to help in a single-goal cluttered grid but succeed when the cycle structure is masked?

Key Points

- 1

Social learning is framed as a testable question for independent RL agents: when does observing experts improve learning beyond what direct experience provides?

- 2

The marl grid framework supports scalable, configurable multi-agent grid-world experiments compatible with the OpenAI Gym API.

- 3

Goal cycle uses ordered gold tiles plus configurable penalties to tune exploration difficulty, letting researchers control how learnable the task is without social information.

- 4

PPO with LSTM outperformed DQN with memory for long-horizon behavior, and “hidden state refreshing” improved stability by preventing stale memory during repeated PPO updates.

- 5

Replicating DeepMind’s observational learning result in a cluttered single-goal grid found no novice speedup from expert presence.

- 6

Masking the goal-cycle structure enabled novices to imitate experts, indicating social cues matter most when direct inference is hard.

- 7

Next-step evaluation emphasizes whether novices acquire transferable skill by testing them in new environments without experts, not just by matching expert trajectories during training.