Sources (2) - Data Management - Full Stack Deep Learning

Based on The Full Stack's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

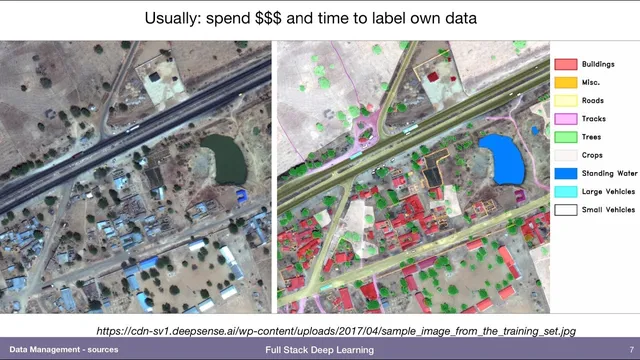

Most production deep learning success depends on data sourcing and labeling strategy as much as model architecture.

Briefing

Deep learning in production often hinges less on flashy model design and more on how teams source, label, and multiply data. Label-hungry approaches dominate because most real-world tasks need supervised learning, but the transcript draws a line between methods that still depend heavily on labeled examples and those that don’t. Reinforcement learning and GANs can reduce reliance on labeled data, yet they’re framed as less practical for production today than standard supervised pipelines—so the focus stays on label data and on ways to make it cheaper and more effective.

When labeled data is scarce, the transcript argues that public datasets don’t create a lasting advantage: anyone can download them. The competitive edge comes from a “data flywheel”—shipping a model, collecting user interactions, and then labeling new data generated by real usage. Google Photos is used as the clearest example. Even if competitors start with academic or publicly available labeled face datasets and quickly label more faces themselves, they can’t match Google’s scale (described as roughly a billion labeled faces). Waiting to reach that accuracy before shipping is treated as a dead end. Instead, the proposed strategy is to deploy a high-precision model that makes few mistakes, even if it misses many matches (low recall). Then the app can ask users targeted questions—whether two photos show the same person—turning user feedback into fresh labels. That feedback loop improves the system over time, blending semi-supervised learning ideas with production instrumentation.

Semi-supervised learning is then defined as using unlabeled data to help label other data. One example comes from NLP: if only part of a sentence is visible, the missing portion becomes a “label” to predict. Vision gets a parallel approach: use unlabeled images by training a model to predict spatial relationships—such as offsets between patches—so the model learns structure in how images are generated. That learned structure can boost performance on supervised computer vision tasks.

Data augmentation is presented as a must-have for vision and a useful lever across domains. A single labeled image can be transformed—shifting, rotating, shearing, changing contrast, or pixelating—while preserving the underlying class (e.g., a car still looks like a car). The transcript claims this often yields several percentage points of accuracy and notes common tooling such as Keras’ ImageDataGenerator and fastai’s libraries.

For non-vision data, augmentation takes different forms: tabular data can be perturbed by masking or deleting features; speech and video can be modified by changing speed, inserting pauses, or masking frequency bands. Synthetic data is treated as an underrated starting point, especially when real data is expensive or risky. The Dropbox OCR pipeline is cited as an example of generating millions of synthetic word images. A receipts-reading project by Andrea Moffitt uses Blender to simulate realistic distortions—mesh deformation, illumination, and camera effects—so OCR models learn from messy, real-world inputs.

The transcript also addresses practical constraints: there’s no free lunch—generating more data can’t add new signal unless the augmentation injects realistic structure or domain knowledge. It suggests starting with pretrained weights when available (e.g., ImageNet) to reduce the amount of task-specific data needed. For imbalanced datasets, it recommends sample weighting and mentions focal loss or iterative retraining that emphasizes previously misclassified examples. Overall, the throughline is that production success depends on turning limited data into a continuously improving training resource—through user feedback, semi-supervised learning, augmentation, and carefully engineered synthetic data.

Cornell Notes

Production-focused deep learning success depends on how teams obtain and multiply data, not just how they tune models. Public datasets alone rarely confer an advantage; the transcript emphasizes a “data flywheel” where deployed systems collect user-generated examples and then convert interactions into labels. Semi-supervised learning reduces label needs by creating training targets from unlabeled data (e.g., predicting missing sentence parts or learning patch offsets in images). Data augmentation is treated as essential in vision and useful elsewhere by applying realistic perturbations that preserve meaning while creating new training inputs. For scarce or risky domains, synthetic data—generated with tools like Blender or via OCR-style pipelines—can help, but only when it injects real-world structure rather than merely duplicating existing signal.

Why does the transcript argue that public labeled datasets don’t create a durable edge?

How does the Google Photos example illustrate a practical alternative to waiting for perfect accuracy?

What does semi-supervised learning mean in this transcript, and how are labels created without manual annotation?

Why is data augmentation described as “must-do” for vision, and what kinds of transformations are used?

How does the transcript connect synthetic data to real-world performance, and what’s the key limitation?

What approaches are suggested for imbalanced datasets?

Review Questions

- What specific mechanism turns user interactions into training labels in the “data flywheel” example, and why does high precision matter for that mechanism?

- Give one NLP and one vision example of how semi-supervised learning creates training targets from unlabeled data.

- What does the transcript mean by “no free lunch” in the context of synthetic data and augmentation?

Key Points

- 1

Most production deep learning success depends on data sourcing and labeling strategy as much as model architecture.

- 2

Public labeled datasets are a weak differentiator because competitors can start from the same data; user-generated data can be harder to replicate.

- 3

A “data flywheel” improves models by deploying first, collecting real usage, and converting interactions into new labels.

- 4

Semi-supervised learning creates training targets from unlabeled data by masking or withholding parts of inputs and predicting the missing parts.

- 5

Vision data augmentation is treated as essential; realistic geometric and photometric transforms can yield measurable accuracy gains.

- 6

Synthetic data can be valuable in expensive or risky domains, but it must encode realistic deformations and conditions to add useful signal.

- 7

For imbalanced datasets, sample weighting and focal-loss-style emphasis on hard/rare examples help prevent the loss from being dominated by easy majority cases.