Sparks of AGI? - Analyzing GPT-4 and the latest GPT/LLM Models

Based on sentdex's video on YouTube. If you like this content, support the original creator by watching, liking, and subscribing.

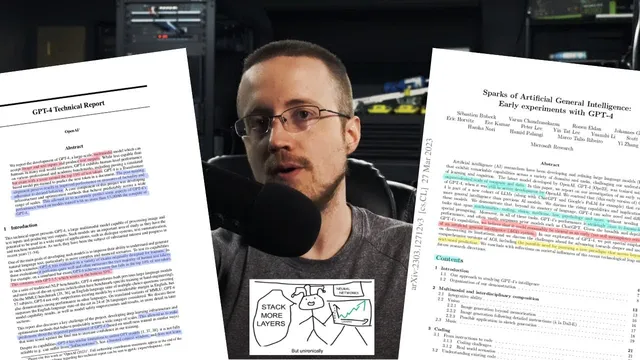

Briefing

GPT-4’s biggest real-world leap is multimodal understanding: it can take both text and images as input and produce not just descriptions of what’s in a picture, but explanations that require context, such as why an image is funny. In OpenAI’s reported examples, GPT-4 correctly identifies visual structure (such as panel dividers), interprets unusual scenes (like ironing on a moving taxi roof), and even handles memes that combine humor with technical details. It also performs OCR-style reading from image-based documents and charts, including summarizing a research-paper page and explaining a specific figure. The practical implication is that “robotic vision” workflows may become more feasible even without full video-rate perception, and smaller or future variants could eventually run fast enough for continuous environments.

Beyond vision, the transcript highlights two other major themes from GPT-4’s development: predictable scaling and exam performance. OpenAI and Microsoft report that smaller models can be trained and used to forecast larger-model capabilities with far less compute—up to “ten thousand times less,” according to the claims discussed. That matters because it could reduce cost and, in theory, support safety decisions like pausing training if a model is predicted to become too capable. But the transcript also argues that such methods must be open to public scrutiny; otherwise, secrecy could create a gatekeeping dynamic where a few companies control both the forecast tools and the resulting policy leverage.
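To make the forecasting idea concrete, here is a minimal sketch of extrapolating from small runs to a much larger one. It assumes, purely for illustration, that loss follows a simple power law in training compute, `loss ≈ a * compute**(-b)`; the source does not state the functional form used, and the data points, the function names `fit_power_law` and `predict_loss`, and the constants are all hypothetical.

```python
import math

def fit_power_law(compute, loss):
    """Fit loss ≈ a * compute**(-b) by ordinary least squares in log-log space."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, b)

def predict_loss(a, b, compute):
    """Evaluate the fitted power law at a given compute budget."""
    return a * compute ** (-b)

# Synthetic "small model" runs generated from a known law (a=5.0, b=0.05).
# These are made-up numbers, not measurements from any real model.
small_compute = [1e0, 1e1, 1e2, 1e3]
small_loss = [5.0 * c ** (-0.05) for c in small_compute]

a, b = fit_power_law(small_compute, small_loss)

# Forecast a run with 10,000x the compute of the largest small run.
target_compute = 1e7
forecast = predict_loss(a, b, target_compute)
print(f"fitted a={a:.3f}, b={b:.3f}, forecast loss={forecast:.3f}")
```

The sketch only shows why such a forecast is cheap: the fit uses the small runs alone. Whether real capabilities follow a curve this smooth is exactly the kind of claim the transcript says should be open to outside scrutiny.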

Exam results are treated as both impressive and controversial. GPT-4’s strong performance is framed as largely coming from pre-training rather than reinforcement learning from human feedback (RLHF) and related alignment steps. Yet the transcript raises doubts about test-data leakage: statements about how much exam content was included in training appear inconsistent, and the lack of reproducible methods makes it hard to verify. Even if leakage is minimal, the transcript emphasizes a broader limitation of language models: they can be confidently wrong, and small errors can cascade in long, auto-regressive outputs. That means benchmark scores may overstate “understanding” relative to how models behave in messy, real tasks.
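The point about cascading errors can be illustrated with a back-of-the-envelope calculation. The sketch below assumes each generated token is wrong independently with the same probability and that any single error spoils the output; both are simplifying assumptions introduced here, not claims from the source, and the function name `prob_all_correct` is hypothetical.

```python
def prob_all_correct(per_token_error: float, num_tokens: int) -> float:
    """Probability that every token in a sequence is correct,
    assuming independent errors with a fixed per-token error rate."""
    return (1.0 - per_token_error) ** num_tokens

# With a 1% per-token error rate, longer outputs are less likely to be error-free.
for n in (10, 100, 1000):
    print(n, prob_all_correct(0.01, n))
```

Real models do not make independent errors, so this is only a rough intuition for why a high score on short exam answers may not carry over to long, multi-step outputs.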

Microsoft’s “Sparks of AGI” comparisons are also met with skepticism. The transcript describes reproduced examples where GPT-4’s gains over GPT-3.5 sometimes look incremental rather than transformative—especially on tasks that require planning, where both models can fail because they generate tokens sequentially. It also points out that GPT-4’s strengths in theory-of-mind style puzzles (inferring what someone else thinks or feels) appear surprisingly broad, but that similar “good” behavior can be found in smaller models too.

Finally, the transcript pivots to safety concerns: hallucinations, privacy risks (including doxing via inference), bias and representation drift, and the difficulty of alignment across shifting social norms. It notes that models can be used to manipulate humans (including via pre-alignment vulnerabilities) and that emergent capabilities are hard to predict—even if scaling forecasts exist. The overall takeaway is cautious: GPT-4 is a powerful tool with meaningful advances, but treating it as “AGI” based on cherry-picked demonstrations risks hype, while the real work is learning how to use these systems reliably and building safeguards that can survive edge cases and rapid iteration.

Cornell Notes

GPT-4’s most emphasized capability is multimodal input: it can interpret images and answer questions about what’s shown, including explanations that rely on context (like humor) and reading text from image-based documents. The transcript also highlights “predictable scaling,” where smaller models are used to forecast larger-model performance, which could reduce compute and potentially support safety decisions—though it should be publicly auditable. Benchmark and exam performance are treated as impressive but hard to verify due to possible inconsistencies about whether test items appeared in training. Across tasks, the transcript repeatedly returns to a core limitation of language models: sequential, auto-regressive generation makes planning and certain math behaviors brittle, while alignment techniques (like reward models and “show your work”) can sometimes patch gaps. Overall, the piece argues for treating GPT-4 as a tool—powerful, but not reliably “AGI”—and for focusing on safety, privacy, bias, and evaluation rigor.

What does multimodality add beyond “describing images,” and why does it matter for real applications?

How does “predictable scaling” work in the claims discussed, and what safety tradeoff does it introduce?

Why are exam scores treated as both meaningful and suspect?

What recurring technical limitation is attributed to GPT-style models, and how do alignment or prompting mitigate it?

How does the transcript evaluate Microsoft’s “Sparks of AGI” comparisons between GPT-4 and GPT-3.5?

What safety and societal risks are emphasized beyond hallucinations?

Review Questions

- Which specific multimodal behaviors are cited as evidence of GPT-4’s image understanding, and how do they go beyond simple captioning?

- What kinds of inconsistencies about exam training data are raised, and why do they matter for interpreting benchmark results?

- How does the transcript connect auto-regressive generation to failures in planning and math, and what interventions are suggested to reduce those failures?

Key Points

1. GPT-4’s multimodal capability is portrayed as meaningfully more than image captioning: it can interpret visual structure, explain context-dependent humor, and read/summarize text and charts from image-based documents.

2. Predictable scaling claims suggest smaller models can forecast larger-model capabilities with much less compute, but the transcript argues such methods must be open to scrutiny to avoid gatekeeping.

3. Strong exam and benchmark performance is treated as impressive yet hard to validate because training-data relationships to test items are described inconsistently and not reproducibly.

4. GPT-style models’ auto-regressive generation makes planning and certain structured reasoning tasks brittle; prompting for stepwise work and alignment techniques can sometimes compensate.

5. Rule-based reward models (RBRM) are described as a safety/alignment mechanism that can classify unsafe content and shape responses, including using GPT-4 in the process.

6. Safety risks extend beyond hallucinations to privacy (doxing via inference), bias/representation drift, and the difficulty of aligning behavior across contentious, changing social norms.

7. The transcript urges skepticism toward “AGI” framing and emphasizes treating these systems as powerful tools while improving evaluation rigor and safeguards.
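As a rough illustration of the rule-based reward model idea in the key points above, the sketch below turns a classifier's label into a reward signal. Everything in it is hypothetical: `toy_classifier` is a keyword stand-in for the model-based classifier the source describes, and the labels, reward values, and the `prompt_is_unsafe` flag are assumptions made for the example rather than details of OpenAI's actual system.

```python
from typing import Callable

Classifier = Callable[[str], str]

def toy_classifier(response: str) -> str:
    """Hypothetical keyword stand-in for a model-based classifier."""
    if response.lower().startswith("i can't help"):
        return "refusal"
    return "non_refusal"

def rule_based_reward(
    prompt_is_unsafe: bool,
    response: str,
    classify: Classifier = toy_classifier,
) -> float:
    """Reward refusals on unsafe prompts and helpful answers on safe ones.

    The +1/-1 values are illustrative assumptions.
    """
    label = classify(response)
    if prompt_is_unsafe:
        return 1.0 if label == "refusal" else -1.0
    return -1.0 if label == "refusal" else 1.0

print(rule_based_reward(True, "I can't help with that."))
print(rule_based_reward(False, "I can't help with that."))
```

The design choice worth noting is that the same response can earn opposite rewards depending on the prompt, which is how a rule of this kind could discourage both unsafe compliance and unnecessary refusals.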