State of GPT

Based on West Coast Machine Learning's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

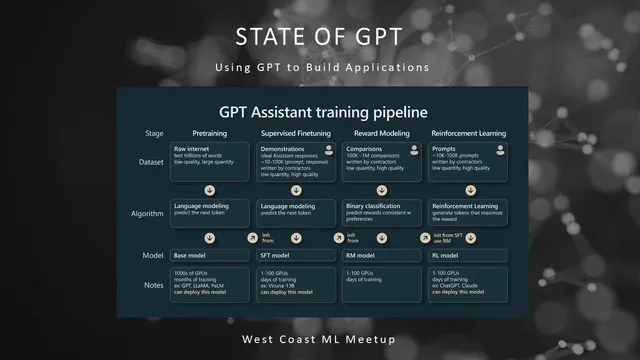

GPT-style assistants are trained in four serial stages: pre-training, supervised fine-tuning, reward modeling, and RLHF, with pre-training consuming the vast majority of compute.

Briefing

Large language models are built through a pipeline that starts with internet-scale next-token pre-training and then progressively adds human preference signals—turning raw “document completers” into instruction-following assistants. The central takeaway is that most of the capability comes from the pre-training stage, while later steps (supervised fine-tuning and RLHF) mainly reshape behavior toward helpfulness and alignment with what people rank as better outputs.

The training recipe begins with four serial stages: pre-training, supervised fine-tuning, reward modeling, and reinforcement learning from human feedback. Pre-training is the computational heavyweight: it consumes roughly 99% of training compute and uses thousands of GPUs over months. Data is assembled from mixtures such as Common Crawl and C4, plus higher-quality sources like GitHub, Wikipedia, books, and Stack Exchange, sampled according to set proportions. Raw text is converted into token sequences via tokenization methods (e.g., byte pair encoding), then fed into a Transformer that learns by predicting the next token across extremely long contexts.

Hyperparameters and scale illustrate why parameter count alone is a weak proxy for ability. A model like Llama (about 65B parameters) can outperform a larger model (e.g., GPT-3 at 175B) when trained longer on more tokens—1.4 trillion tokens versus 300 billion in the cited comparison. The talk also emphasized that training loss curves can show sharp spikes without necessarily indicating classic overfitting; rare “bad batches” or unusually difficult sequences can produce large temporary jumps.

After pre-training, the model’s general representations enable two practical adaptation paths. One is fine-tuning: sentiment classification, for example, shifts from training a task-specific model from scratch to taking a pre-trained Transformer and adapting it with comparatively small labeled datasets. The other is prompting: around the GPT-2 era, models became effective at completing tasks from instructions and examples embedded in the prompt (few-shot prompting), without additional gradient updates.

To make assistants that reliably follow instructions, the pipeline moves beyond base models. Supervised fine-tuning trains on prompt–ideal-response pairs created by human contractors, using tens of thousands of high-quality examples and continuing language modeling on this curated data. Then reward modeling collects comparative judgments: contractors rank multiple candidate completions for the same prompt, and a reward model learns to predict those rankings. Finally, reinforcement learning uses the reward model as a fixed scorer, updating the assistant so sampled outputs receive higher predicted reward.

Why this extra RLHF step matters: humans tend to prefer RLHF-tuned outputs over base or merely prompted models. The talk offered one intuition—judging quality via comparisons is easier than generating perfect examples—so human feedback can be leveraged more efficiently.

The second half shifted to how to use these assistants effectively. Because Transformers are “token simulators” without human-style inner monologue or self-correction, prompting often needs to supply structure: show-your-work patterns (e.g., “let’s think step by step”), self-consistency via multiple samples, and techniques like tree-of-thought that combine prompt templates with search logic. For harder tasks, tool use and retrieval augmented generation help: load relevant documents into context via embeddings and vector search (e.g., Llama Index), enforce output formats through constraint prompting (e.g., JSON), and consider fine-tuning only after prompt engineering is exhausted. The practical recommendation was to start with the most capable model (notably GPT-4) for best results, then optimize cost and latency later—while keeping expectations grounded in limitations like hallucinations, knowledge cutoffs, and susceptibility to prompt injection and jailbreak attacks.

Cornell Notes

The core idea is that GPT-style assistants are built in stages: massive next-token pre-training first, then supervised fine-tuning, then RLHF using human preference comparisons. Pre-training teaches general language representations by predicting the next token over huge token counts; later stages mainly reshape behavior toward helpful, truthful, and harmless responses. Reward modeling trains a model to score candidate completions based on ranked human judgments, and reinforcement learning updates the assistant to produce outputs that score higher. This matters because it explains why prompting works for many tasks, but why instruction-following quality often improves substantially after RLHF. It also guides practical usage: spread reasoning across more tokens, use multiple samples or search, and add tools or retrieval when the task exceeds what pure text generation can reliably handle.

Why does pre-training dominate compute, and what does the model learn during that phase?

How do supervised fine-tuning and RLHF differ from base-model prompting?

What causes spikes in training loss curves, and why they may not imply overfitting?

Why does prompting often need “show your work” or structured reasoning?

How do retrieval and constraint prompting improve reliability?

Review Questions

- What are the four serial training stages for GPT-style assistants, and what new data signal does each stage add?

- Why is parameter count not a reliable predictor of model capability in the talk’s comparisons?

- Give two prompting or system-level techniques that compensate for the model’s lack of self-correction during generation, and explain how each helps.

Key Points

- 1

GPT-style assistants are trained in four serial stages: pre-training, supervised fine-tuning, reward modeling, and RLHF, with pre-training consuming the vast majority of compute.

- 2

Pre-training learns general language representations via next-token prediction over tokenized internet-scale data; longer training on more tokens can outweigh higher parameter counts.

- 3

Base models are document completers; prompting can induce task behavior, but supervised fine-tuning and RLHF are what reliably shift outputs toward instruction-following and human preferences.

- 4

RLHF works by training a reward model from human rankings of multiple candidate completions, then using that reward model to reinforce higher-quality generations.

- 5

Effective prompting often requires spreading reasoning across more tokens (e.g., “let’s think step by step”), sampling multiple candidates, and selecting or voting on better outputs.

- 6

For tasks needing specific facts or strict output structure, retrieval augmented generation and constraint prompting (e.g., JSON templates) improve reliability.

- 7

Because LLMs can hallucinate, lack knowledge beyond their cutoff, and are vulnerable to attacks, they’re best used in low-stakes workflows with human oversight and tool-assisted guardrails.