SWE Stop Learning - The Rise Of Expert Beginners

Based on ThePrimeTime's video on YouTube. If you like this content, support the original creators by watching, liking, and subscribing to their content.

Software team dysfunction can persist not only because top talent leaves, but because groups develop internal dynamics that normalize stagnation and toxicity.

Briefing

Software teams rot from the inside when people stop progressing and settle into “expert beginner” status: an in-between competence level where confidence outpaces real understanding. The core claim is that this stagnation isn’t just a byproduct of losing top talent to greener pastures (the “Dead Sea effect”). It also emerges from group dynamics that convert external pressures, such as adopting trendy technologies pushed by persuasive insiders, into persistent internal incompetence and professional toxicity.

The discussion starts by asking why organizations end up with so many impressive-sounding titles that don’t match real output. The Dead Sea effect explains part of it: the most capable developers are usually the most marketable, so they are the first to leave when conditions sour, leaving the less marketable behind. But it doesn’t fully explain why dysfunction persists even when talented people remain. The missing piece is a mechanism inside the group itself, one that turns bad external decisions into a culture where people rationalize mediocrity, stop learning, and protect their status.

A personal example illustrates the pattern. A team gets swept into a new approach, described as a Netflix-era effort built on reactive/backpressure ideas and a generalized server-driven protocol for application behavior. The pitch is compelling, authority gathers around it, and the architecture becomes a long-running sink of complexity. Eventually people stop wanting to work on it, not because the idea was impossible, but because the organization’s learning loop breaks: the team keeps investing in the narrative instead of correcting course.

To explain how individuals opt into permanent mediocrity, the transcript pivots to skill acquisition. A bowling story shows the psychological trap: the narrator improves quickly by “faking it” with an unconventional technique, then plateaus at around a 160 average. The advice to switch to proper form comes with a catch, namely a painful transition period in which scores get worse before they get better. The lesson is that real mastery requires deliberate practice that temporarily reduces confidence and output.

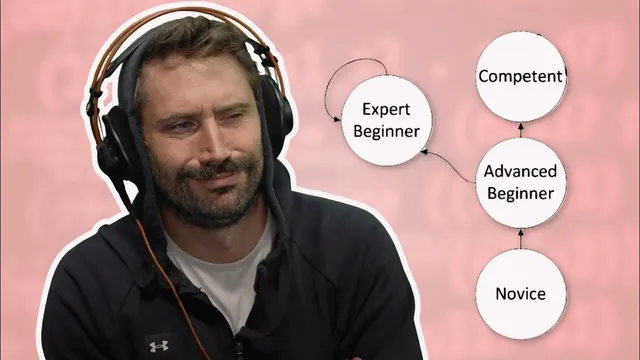

That framework connects to the Dreyfus model of skill acquisition (novice → advanced beginner → competent → proficient → expert). The key danger zone is the advanced beginner stage, where people lack big-picture understanding yet can mistake familiarity for expertise, an echo of the Dunning-Kruger effect. The transcript builds on this with the “expert beginner”: someone with enough experience to feel capable, but not enough understanding to recognize what they still don’t know. This state becomes self-reinforcing because feedback in software is slow. A bowler sees the result of every throw within seconds, while a developer may wait months, across a whole project, to learn whether an approach actually worked.

AI tools and Copilot-style assistants are presented as a modern accelerant of this risk. The concern isn’t that AI is inherently bad; it’s that it can short-circuit the hard part of problem-solving, which is figuring out how to get from the problem (A) to a solution (C) and then translating that into code. When developers can “bounce” ideas off tools that generate answers, some may develop learned helplessness: they stop practicing independent reasoning, lean on copy-paste, and struggle when the tools aren’t available.

The practical antidote offered is both cultural and personal: build things outside your comfort zone, treat learning like a hobby, and force yourself to own your decisions. Personal projects, where you live with your architecture choices over time, provide the kind of feedback and maintenance experience that prevents settling into expert beginner status. The larger warning is that people who entrench at that level can entrench degenerative group behavior too, which sets up the next stage of the series: how expert beginners contribute to toxic, failing team dynamics even when the organization still has talented members.

Cornell Notes

The transcript argues that software dysfunction can persist even when talented developers remain, because groups can develop an internal culture of stagnation. Individuals often opt into “expert beginner” status: enough experience to feel confident, but not enough big-picture understanding to keep improving. The Dreyfus model and a bowling story illustrate how people hit ceilings when they stop doing the uncomfortable deliberate practice required for mastery. Slow software feedback cycles make this worse, since it can take months to learn whether an approach is truly working. AI-assisted coding tools may intensify the problem by reducing the need to practice independent problem-solving, potentially fostering learned helplessness. The proposed countermeasure is to build personal projects outside one’s expertise and maintain ownership of decisions so learning continues.

How does the “Dead Sea effect” explain talent loss, and why isn’t it enough to explain persistent incompetence?

What does the bowling story teach about hitting a performance ceiling?

What is the “expert beginner” concept, and how does it relate to the Dreyfus model?

Why does AI/coding assistance risk increasing learned helplessness?

What practical steps are suggested to avoid settling into expert beginner?

Review Questions

- What internal group mechanism does the transcript claim can turn external bad decisions into long-term organizational incompetence?

- How do slow feedback cycles in software make it easier to remain stuck at an “expert beginner” level?

- Why does the transcript treat deliberate practice (even when it temporarily worsens performance) as essential for moving beyond a skill ceiling?

Key Points

1. Software team dysfunction can persist not only because top talent leaves, but because groups develop internal dynamics that normalize stagnation and toxicity.

2. The “expert beginner” state is confidence without big-picture understanding, where people rationalize that they’ve already reached expert status.

3. The Dreyfus model’s advanced beginner stage is a known trap: limited understanding can create overconfidence and slow progression.

4. Software differs from sports because meaningful feedback often arrives over months during full project lifecycles, making self-correction harder.

5. AI-assisted coding can increase learned helplessness by reducing the need to practice independent problem-solving and reasoning from problem to solution.

6. Deliberate practice often requires a painful transition period where performance drops before it improves.

7. Personal projects and building outside one’s comfort zone are presented as practical ways to keep learning and avoid tool-dependent stagnation.