The 5 Levels of AI Coding (Why Most of You Won't Make It Past Level 2)

Based on AI News & Strategy Daily | Nate B Jones's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Briefing

AI coding is accelerating in the places where software is treated like an autonomous production system—but most developers and companies are getting slower because they’re still running human-centered workflows. A key gap separates frontier “dark factory” teams that turn specifications into production software with minimal human intervention from the broader industry that bolts AI tools onto existing processes and then pays the cost in evaluation time, review overhead, and subtle debugging.

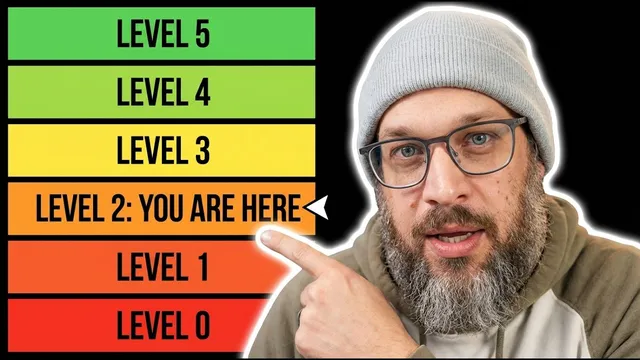

The transcript frames this mismatch through a "five levels of vibe coding" ladder attributed to Dan Shapiro of Glowforge. The rungs are numbered 0 through 5:
- Level 0: autocomplete-style assistance, where the human accepts AI-suggested lines (as with early GitHub Copilot).
- Level 1, "coding intern": the human breaks work into discrete tasks and reviews everything the AI produces.
- Level 2, "junior developer": the AI makes multifile changes and builds features across modules, while the human still reads the full output.
- Level 3, "developer as manager": the relationship flips. The AI implements, and the human mainly approves PRs and makes judgment calls.
- Level 4, "developer as product manager": the human writes specifications and checks outcomes. Code becomes a black box evaluated through tests and metrics.
- Level 5, "dark factory": the end state. Specifications go in, working software comes out, and no human writes or reviews the code.
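
To make the distinctions between rungs concrete, the ladder can be encoded as a small classifier over observable workflow traits. This is a minimal Python sketch under stated assumptions: the `Level` names follow the summary above, but the `Workflow` fields and the rules in `classify` are hypothetical simplifications introduced here for illustration, not part of Shapiro's framework.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    AUTOCOMPLETE = 0          # human writes; accepts AI-suggested lines
    CODING_INTERN = 1         # AI does discrete tasks; human reviews everything
    JUNIOR_DEVELOPER = 2      # AI makes multifile changes; human reads all output
    DEVELOPER_AS_MANAGER = 3  # AI implements; human approves PRs, makes judgment calls
    DEVELOPER_AS_PM = 4       # human writes specs, judges outcomes via tests/metrics
    DARK_FACTORY = 5          # specs in, software out; no human writes or reviews code


@dataclass(frozen=True)
class Workflow:
    """Hypothetical traits of a team's workflow (illustrative field names)."""
    human_writes_most_code: bool
    ai_changes_span_multiple_files: bool
    human_reads_every_line: bool
    human_approves_prs: bool
    human_checks_outcomes: bool  # via tests and metrics, not by reading code


def classify(w: Workflow) -> Level:
    """Check the strongest form of human involvement first (a simplifying assumption)."""
    if w.human_writes_most_code:
        return Level.AUTOCOMPLETE
    if w.human_reads_every_line:
        return Level.JUNIOR_DEVELOPER if w.ai_changes_span_multiple_files else Level.CODING_INTERN
    if w.human_approves_prs:
        return Level.DEVELOPER_AS_MANAGER
    if w.human_checks_outcomes:
        return Level.DEVELOPER_AS_PM
    return Level.DARK_FACTORY  # no human touchpoint left; outcome checks are automated


team = Workflow(
    human_writes_most_code=False,
    ai_changes_span_multiple_files=True,
    human_reads_every_line=True,
    human_approves_prs=True,
    human_checks_outcomes=True,
)
print(classify(team).name)  # JUNIOR_DEVELOPER
```

Because the rules test the heaviest human involvement first, a team that still reads every generated line lands at Level 1 or 2 regardless of what else it automates.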

The transcript argues that the industry's biggest misconception is treating the problem as a tool gap. Even as Claude Code and OpenAI's Codex-style systems increasingly generate their own code, adoption studies show real-world slowdowns. In a 2025 randomized controlled trial by METR, experienced open-source developers using AI tools took 19% longer to complete tasks than on tasks done without AI. They also misjudged the effect: developers expected AI to make them 24% faster, when in fact it slowed them down. The proposed cause is workflow disruption: developers spend time evaluating AI output, correcting near-miss code, context-switching between their own mental model and the generated changes, and debugging errors that look correct at first glance.
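
The size of the perception gap is easier to see with concrete numbers. The sketch below assumes a hypothetical task that takes 60 minutes without AI, reads "19% slower" as a 19% increase in completion time, and reads the "24% faster" forecast as a 24% reduction in completion time; both readings are assumptions about how the percentages are framed.

```python
def completion_minutes(baseline: float, fractional_change: float) -> float:
    """Time after a fractional change: +0.19 means 19% longer, -0.24 means 24% shorter."""
    return baseline * (1 + fractional_change)


BASELINE = 60.0  # hypothetical task length without AI, in minutes

expected = completion_minutes(BASELINE, -0.24)  # developers' forecast (assumed framing)
actual = completion_minutes(BASELINE, 0.19)     # measured slowdown (assumed framing)

gap = actual - expected
print(f"expected {expected:.1f} min, actual {actual:.1f} min, gap {gap:.1f} min")
```

Under these assumptions the forecast is about 45.6 minutes and the measured time about 71.4 minutes, a gap of roughly 26 minutes, or 43% of the baseline.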

To illustrate Level 5, the transcript spotlights StrongDM's "software factory," a three-engineer operation built around an open-source coding agent called Attractor and a repo consisting of three markdown specification files. Instead of relying on traditional in-code tests, StrongDM uses "scenarios": behavioral evaluations stored outside the codebase so the agent can't see or game the criteria. It also uses a "digital twin universe," simulated versions of external services (including Jira, Slack, Google Docs, Google Drive, and Google Sheets), to run integration-like checks without touching production systems. The result is production software built end-to-end by agents, with a stated emphasis on compute cost and scale, including a target of spending at least $1,000 per human engineer per day to keep the factory improving.
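
The scenario and digital-twin ideas can be sketched together in a few lines of Python. Everything here is hypothetical and illustrative, not StrongDM's code: `ChatService` and `ChatTwin` are an invented interface and an in-memory stand-in for a Slack-like service, `post_digest` plays the role of agent-written product code, and the holdout scenarios are plain functions assumed to live outside the agent's workspace so that only their pass rate is reported back.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class ChatService(Protocol):
    """Hypothetical interface the product code uses for a Slack-like service."""

    def post_message(self, channel: str, text: str) -> str: ...

    def history(self, channel: str) -> list[str]: ...


@dataclass
class ChatTwin:
    """In-memory 'digital twin' of the chat service; never calls a real API."""

    channels: dict[str, list[str]] = field(default_factory=dict)

    def post_message(self, channel: str, text: str) -> str:
        messages = self.channels.setdefault(channel, [])
        messages.append(text)
        return f"{channel}:{len(messages)}"  # fake message id

    def history(self, channel: str) -> list[str]:
        return list(self.channels.get(channel, []))


# Stand-in for agent-written product code (hypothetical "daily digest" feature).
def post_digest(chat: ChatService, channel: str, items: list[str]) -> None:
    if items:
        chat.post_message(channel, "Digest: " + "; ".join(items))


# Holdout scenarios: assumed to be stored where the agent cannot read them.
Scenario = Callable[[ChatService], bool]


def digest_is_posted_once(chat: ChatService) -> bool:
    post_digest(chat, "#ops", ["deploy ok", "2 alerts"])
    return chat.history("#ops") == ["Digest: deploy ok; 2 alerts"]


def empty_digest_stays_silent(chat: ChatService) -> bool:
    post_digest(chat, "#ops", [])
    return chat.history("#ops") == []


HOLDOUT: list[Scenario] = [digest_is_posted_once, empty_digest_stays_silent]


def pass_rate(scenarios: list[Scenario]) -> float:
    """Run each scenario against a fresh twin; report only the fraction that passed."""
    passed = sum(1 for scenario in scenarios if scenario(ChatTwin()))
    return passed / len(scenarios)


print(f"holdout pass rate: {pass_rate(HOLDOUT):.0%}")
```

Each scenario gets a fresh twin, so scenarios cannot leak state into one another, and because the agent sees only the aggregate pass rate rather than the scenario code, it has no concrete criteria to overfit. In practice the twins would need to mirror the real services closely enough for these checks to be meaningful.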

Finally, the transcript connects dark-factory automation to organizational and career shifts. Traditional coordination structures—sprints, standups, code review, QA—exist because humans write and validate code. When agents implement, those layers become friction, and the bottleneck moves to specification quality and judgment. That shift also threatens the junior developer pipeline: the transcript cites studies projecting declines in junior roles and argues that entry-level learning-by-doing is hollowed out when AI handles the work juniors used to practice. The proposed remedy is not fewer engineers, but different skills: systems thinking, customer intuition, and the ability to write precise specs that agents can execute correctly. The central takeaway is blunt: dark factories are real and working, but most organizations are stuck at lower levels, and closing the gap requires people and process redesign—not just better AI tools.

Cornell Notes

The transcript lays out a "five levels of vibe coding" framework that maps how far AI-assisted development has progressed, from autocomplete to fully autonomous "dark factories." Most teams remain stuck around Levels 1–3, where humans still review or read generated code, and that mismatch with existing workflows can cause measurable slowdowns. A randomized controlled trial reported that experienced developers using AI tools took 19% longer to complete tasks, largely due to workflow disruption and reliability concerns. Level 5 teams like StrongDM aim to eliminate human code-writing and code review by feeding markdown specifications into agentic systems that test via external "scenarios" and simulated "digital twins." The practical implication: the bottleneck shifts from coding speed to specification quality, organizational redesign, and human judgment.

What are the five levels of “vibe coding,” and where does the industry mostly land?

Why did experienced developers get slower with AI tools in the METR randomized trial?

How does StrongDM's Level 5 approach differ from traditional testing?

What is the “digital twin universe,” and why does it matter for autonomous software factories?

What organizational changes does the transcript say become necessary as teams move toward Level 5?

How does the transcript connect dark-factory automation to junior developer job decline?

Review Questions

- Which level of the five-level framework best matches a workflow where humans still read every line of generated code, and why?

- What mechanisms does the transcript propose to prevent AI agents from “teaching to the test,” and how do scenarios and holdouts achieve that?

- How does the bottleneck shift from implementation speed to specification quality, and what organizational roles change as a result?

Key Points

1. AI coding can make teams slower when it's added to existing human workflows without redesign, even if code generation is faster.

2. The "five levels" framework clarifies that most teams operate around Levels 1–3, not at Level 5 (dark factories).

3. A randomized controlled trial by METR reported that experienced developers using AI tools took 19% longer to finish tasks, attributed to evaluation time, context switching, and subtle debugging.

4. Level 5 systems like StrongDM's rely on external "scenarios" and simulated "digital twins" to evaluate correctness without letting agents game in-code tests.

5. As implementation becomes agent-driven, human-centric ceremonies (sprints, standups, manual QA) turn into organizational friction, and the bottleneck moves to spec quality and judgment.

6. Junior developer pipelines are under pressure because AI automates the entry-level work that used to power apprenticeship learning and mentorship.

7. The transcript argues the real competitive gap is a people and process gap (culture and willingness to change), not simply a tool gap.