The AI that solved IMO Geometry Problems | Guest video by @Aleph0

Based on 3Blue1Brown's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

AlphaGeometry’s 25/30 IMO geometry score depends on combining a deductive rule system with an auxiliary-construction generator, not on AI alone.

Briefing

Google DeepMind’s AlphaGeometry hit a striking benchmark on International Mathematical Olympiad (IMO) geometry problems: it solved 25 of 30, surpassing silver-medalist level. The headline result matters because it shows an AI system can handle a domain where success typically depends on both correct reasoning and the right “idea” to add to a diagram, something that has long resisted brute-force computation.

But the more revealing story is how much progress came before the AI component ever generated an auxiliary construction. A logic-first system built from a hardcoded deductive database (DD) of geometry rules already solved 7 of 30 IMO problems. When the system was augmented with algebraic reasoning (AR)—the ability to solve the linear equations that arise from geometric constraints—the score rose to 14 of 30. Adding human-coded heuristics pushed performance further to 18 of 30, nearly reaching a bronze-medalist level. In other words, a substantial fraction of IMO geometry success can be achieved by chaining known geometric facts and then solving the resulting equations, without any “creative” search.
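The "chain known facts until nothing new appears" idea behind DD can be sketched as a forward-chaining loop. This is only a toy illustration: the tuple encoding of facts and the two parallel-line rules are assumptions made for the example, not the rule set the real system uses.

```python
def deductive_closure(facts, rules):
    """Apply every rule repeatedly until no rule produces a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            new_facts = rule(known) - known
            if new_facts:
                known |= new_facts
                changed = True
    return known


def parallel_symmetry(known):
    # If a is parallel to b, then b is parallel to a.
    return {("para", b, a) for (kind, a, b) in known if kind == "para"}


def parallel_transitivity(known):
    # If a is parallel to b and b is parallel to d, then a is parallel to d.
    pairs = [(a, b) for (kind, a, b) in known if kind == "para"]
    return {
        ("para", a, d)
        for (a, b) in pairs
        for (c, d) in pairs
        if b == c and a != d
    }


# Hypothetical starting facts: AB is parallel to CD, and CD is parallel to EF.
facts = {("para", "AB", "CD"), ("para", "CD", "EF")}
closure = deductive_closure(facts, [parallel_symmetry, parallel_transitivity])
print(("para", "AB", "EF") in closure)
```

The loop stops at a fixed point, which is why a system of this kind can only reach conclusions that follow from elements already in the diagram.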

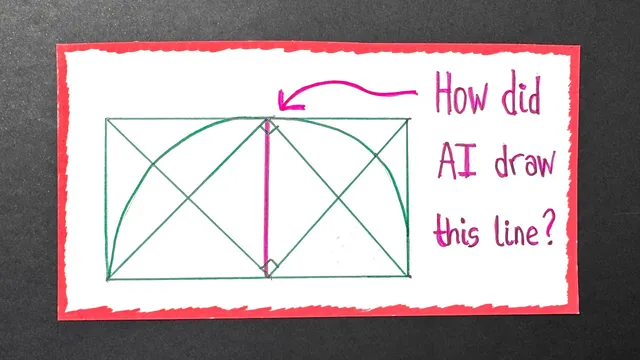

The remaining gap exposed a core weakness: DD plus AR struggles with auxiliary constructions. Many hard geometry solutions require drawing extra lines or shapes not present in the original diagram. That step is the bottleneck because it creates an effectively infinite search space: a machine must guess which new elements to introduce before the logic and algebra can finish the job. Thales’s theorem illustrates the contrast: once the right construction is made (connecting the point on the circle to the circle’s center), the rest follows from isosceles triangles and angle-sum equations. The construction is the hard part; the deduction is comparatively straightforward.
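For reference, the Thales argument can be written out in a few lines. The labels are assumptions chosen for this sketch: $AB$ is a diameter, $O$ is the center, and $C$ is any other point on the circle. The auxiliary construction is the segment $OC$.

```latex
\begin{aligned}
& OA = OB = OC \quad \text{(radii, so } \triangle OAC \text{ and } \triangle OBC \text{ are isosceles)} \\
& \angle OAC = \angle OCA = \alpha, \qquad \angle OBC = \angle OCB = \beta \\
& \angle CAB + \angle ACB + \angle ABC = \alpha + (\alpha + \beta) + \beta = 180^\circ \\
& \Rightarrow \quad \angle ACB = \alpha + \beta = 90^\circ
\end{aligned}
```

Everything after the first line is rule-chaining and linear algebra on angles; only drawing $OC$ required an idea from outside the original diagram.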

AlphaGeometry addresses this by adding a language model whose sole job is to propose auxiliary constructions. The system iterates: the model reads the problem statement and the partial proof so far, outputs a candidate construction in a specialized geometry coding language, and then DD plus AR attempts to complete the proof. If the attempt fails, the output becomes new context for the language model, which proposes another construction, and the cycle repeats until the proof is found or time runs out. In effect, the language model plays the “creative brain” that proposes diagram changes, while DD plus AR acts as the “logical brain” that verifies consequences.
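The propose-then-verify cycle can be sketched as follows. Both helpers are stand-ins invented for this illustration: `toy_close` hardcodes a single Thales-style rule in place of DD plus AR, and `toy_propose` replays a fixed list of guesses in place of the language model. Only the shape of the loop is the point.

```python
def toy_close(facts):
    """Stand-in for DD+AR: one hardcoded rule in the spirit of Thales's theorem."""
    closure = set(facts)
    for fact in facts:
        if fact[0] == "on_circle_with_diameter":
            _, c, a, b = fact
            # The rule only fires once the midpoint of the diameter is in the diagram.
            if any(f[0] == "midpoint" and f[2:] == (a, b) for f in facts):
                closure.add(("right_angle", a, c, b))
    return closure


def toy_propose(premises, goal, history):
    """Stand-in for the language model: replays a fixed list of guesses."""
    candidates = [("midpoint", "M", "A", "C"), ("midpoint", "O", "A", "B")]
    return candidates[len(history) % len(candidates)]


def solve(premises, goal, propose, close, max_rounds=5):
    facts = set(premises)
    history = []  # constructions tried so far, fed back to the proposer
    for _ in range(max_rounds):
        if goal in close(facts):
            return history
        construction = propose(premises, goal, history)
        history.append(construction)
        facts.add(construction)
    return history if goal in close(facts) else None


premises = {("on_circle_with_diameter", "C", "A", "B")}
goal = ("right_angle", "A", "C", "B")
result = solve(premises, goal, toy_propose, toy_close)
print(result)
```

In this toy run the first guess (a midpoint of AC) does not help, the second (the center O) does, and the loop returns both constructions in the order they were tried.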

Training data posed another challenge: too few solved IMO geometry problems are publicly available to learn this behavior directly. DeepMind generated synthetic training examples instead. It randomly plotted points and lines, used DD plus AR to deduce what it could, then erased parts of the diagram so that the missing elements became auxiliary constructions the model would need to recreate. This process produced hundreds of millions of synthetic proof examples, millions of which required at least one auxiliary construction; the longest synthetic proof ran 247 steps and used two auxiliary constructions.
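The erasing step alone can be illustrated with a small sketch. It skips the random plotting and the deduction, and it assumes one particular splitting rule for the example: a premise stays in the problem statement if it only mentions points named in the conclusion, and otherwise it is erased and becomes a construction target. The function names and the tuple encoding are hypothetical.

```python
def points_of(fact):
    """Points named by a fact; facts are tuples like ("midpoint", "O", "A", "B")."""
    return set(fact[1:])


def make_training_example(needed_premises, conclusion):
    """Split the premises of a deduced conclusion into stated and erased parts."""
    goal_points = points_of(conclusion)
    stated = [p for p in needed_premises if points_of(p) <= goal_points]
    erased = [p for p in needed_premises if not points_of(p) <= goal_points]
    return {"problem": stated, "goal": conclusion, "auxiliary_targets": erased}


# Hypothetical deduction: these two premises were needed to reach the conclusion.
needed = [
    ("on_circle_with_diameter", "C", "A", "B"),
    ("midpoint", "O", "A", "B"),
]
example = make_training_example(needed, ("right_angle", "A", "C", "B"))
print(example["auxiliary_targets"])
```

Here the point O never appears in the conclusion, so the premise that introduces it is removed from the problem and becomes the construction the model has to learn to propose.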

With this setup, the full system—DD plus AR combined with the auxiliary-construction language model and fine-tuning—reached 25 out of 30. The broader significance is less about geometry trivia and more about a general recipe: pair structured reasoning with learned creativity, then train the creativity using synthetic data generated from a verifier. That blend could inform how machines tackle other reasoning-heavy fields where the key step is often inventing the right intermediate idea.

Cornell Notes

AlphaGeometry’s headline performance (25/30 IMO geometry problems) rests on a layered system rather than raw AI guessing. A deductive database (DD) of geometry rules solved 7/30, and adding algebraic reasoning (AR) that can solve linear equations raised that to 14/30. Human-coded heuristics pushed DD+AR to 18/30, but the system then hit a wall: hard problems often require auxiliary constructions (new lines or shapes) that aren’t in the starting diagram. A language model was trained to propose those constructions, while DD+AR verified and completed proofs. Because real solved examples are scarce, DeepMind generated hundreds of millions of synthetic proof cases by randomly drawing diagrams, deducing consequences with DD+AR, and erasing parts to create “missing” constructions for training.
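To make the AR step concrete, here is a minimal sketch of solving the linear angle equations from a triangle inscribed in a semicircle. The setup is an assumption for illustration: the angles at A and B are alpha and beta, the angle at C is gamma, the isosceles triangles give gamma = alpha + beta, and the angle sum gives alpha + beta + gamma = 180. The elimination routine is a generic Gauss-Jordan pass, not the system's actual solver.

```python
from fractions import Fraction


def row_reduce(rows):
    """Gauss-Jordan elimination on augmented rows [coefficients..., constant]."""
    rows = [[Fraction(x) for x in row] for row in rows]
    pivot_row = 0
    n_vars = len(rows[0]) - 1
    for col in range(n_vars):
        # Find a row at or below pivot_row with a nonzero entry in this column.
        pivot = next(
            (r for r in range(pivot_row, len(rows)) if rows[r][col] != 0), None
        )
        if pivot is None:
            continue
        rows[pivot_row], rows[pivot] = rows[pivot], rows[pivot_row]
        lead = rows[pivot_row][col]
        rows[pivot_row] = [x / lead for x in rows[pivot_row]]
        for r in range(len(rows)):
            if r != pivot_row and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[pivot_row])]
        pivot_row += 1
        if pivot_row == len(rows):
            break
    return rows


def determined_values(rows, names):
    """Variables pinned to one value: reduced rows with a single nonzero coefficient."""
    values = {}
    for row in row_reduce(rows):
        nonzero = [i for i, x in enumerate(row[:-1]) if x != 0]
        if len(nonzero) == 1:
            values[names[nonzero[0]]] = row[-1]
    return values


# Columns: alpha, beta, gamma, constant (degrees).
equations = [
    [1, 1, -1, 0],    # alpha + beta - gamma = 0   (isosceles triangles)
    [1, 1, 1, 180],   # alpha + beta + gamma = 180 (angle sum)
]
values = determined_values(equations, ["alpha", "beta", "gamma"])
print(values)
```

The system leaves alpha and beta free but pins gamma to 90 degrees, which is the kind of conclusion that rule-chaining alone would not reach without some equation solving.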

Why did DD plus AR plateau at 18/30 even though it can chain many known facts and solve equations?

What exactly is the role of AR, and how does it relate to the kind of math geometry produces?

How does the system decide what auxiliary construction to try next?

Why was synthetic data generation necessary for training?

What performance numbers show the impact of each module?

Review Questions

- Which component is responsible for handling auxiliary constructions, and why is that component necessary even when DD and AR are strong?

- Explain the iterative loop used by AlphaGeometry after the language model proposes an auxiliary construction.

- How does synthetic training data generation work, and what does erasing parts of a deduced diagram accomplish for learning?

Key Points

1. AlphaGeometry’s 25/30 IMO geometry score depends on combining a deductive rule system with an auxiliary-construction generator, not on AI alone.

2. A deductive database (DD) of geometry rules solved 7/30 problems; adding algebraic reasoning (AR) that solves linear equations raised performance to 14/30.

3. Human-coded heuristics improved DD+AR to 18/30, but the system struggled with auxiliary constructions that introduce new diagram elements.

4. Auxiliary constructions create an effectively infinite search space, which is why a learned proposal mechanism is crucial for hard cases.

5. A language model proposes auxiliary constructions in a specialized geometry coding language, while DD+AR verifies and completes proofs.

6. DeepMind trained the construction model using synthetic data generated by random diagram plotting, DD+AR deduction, and erasing parts to create new “missing construction” targets.