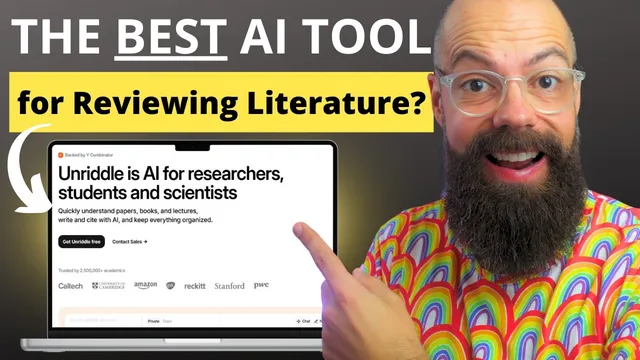

The AI That Will Save You WEEKS of Research Time! Unriddle AI

Based on Andy Stapleton's video on YouTube. If you like this content, support the original creator by watching, liking, and subscribing.

Unriddle’s strongest feature is research Q&A that returns answers with clickable citations to the exact referenced passages.

Briefing

Unriddle is positioned as a research-focused AI workspace that turns imported papers and other materials into searchable, question-answerable knowledge, complete with citations that link back to the exact parts of the source documents. The core payoff is speed. Instead of rereading stacks of articles to find what matters, researchers can ask targeted questions, get answers, and then click the references to verify claims directly in the original text. That check reduces the risk of unsupported, “hallucinated” details.

The workflow starts with importing. Besides documents, Unriddle accepts plain text, videos, audio, websites, images, and recordings, and it builds a library in which each item can be summarized and queried. Suggested questions help users who don’t know where to begin. For deeper exploration, users can ask about specific concepts (the transcript’s example asks how the complexity of active materials affects outcomes), and every response includes clickable references that jump to the relevant passage in the paper.

Beyond single-article Q&A, Unriddle lets users group papers into collections and ask questions across that curated set rather than the entire library. The transcript describes a manual process: select the papers, group them under a named idea, and then query the group. One example asks which devices are “best” across a set of papers on OPV (organic photovoltaic) devices. Each answer links back to the passages it drew on in every source, so users can navigate straight to the cited sections.

Users can also query their entire reference library at once, for example by asking for the best indoor organic photovoltaic devices. Results come back quickly and include references that can be opened to check the underlying sources. One noted limitation is that chat history does not appear to be saved, or at least is not easy to find, which is annoying when revisiting earlier questions.

Unriddle also includes an academic writing area: a writing canvas with an embedded AI writing assistant, or “AI partner,” that can suggest structures and help draft sections of a literature review. The interface is described as powerful but somewhat clunky, with formatting and highlighting controls that are less intuitive than in competing tools. Still, it offers practical actions such as rewrite, paraphrase, shorten/expand, and “find citations,” along with citation insertion and citation-format settings.

A newer “record” feature lets users dictate ideas by voice and then convert the transcript into polished text suitable for peer-reviewed writing. Early use is promising for brainstorming, but the transcript highlights a frustrating gap. A prompt that refers to a figure described in the recording may fail, because the system cannot reliably connect the recorded content to the figure the prompt asks about. Finally, a graph/visual element currently seems more confusing than helpful, with an unclear purpose and limited discoverability.

Overall, Unriddle’s strongest value is extracting and verifying information from imported research. Its writing and recording features look like meaningful additions, but they’re still maturing compared with the smoother, more reliable paper-unriddling experience.

Cornell Notes

Unriddle is a research-oriented AI tool that imports papers and other media, then lets users ask questions and receive answers tied to clickable citations. That citation linking is the main safeguard: readers can jump straight to the exact passage used, which helps avoid unsupported claims. The platform also supports grouping papers into collections, enabling targeted literature-review style questions across a subset of sources. For writing, it provides an embedded assistant with actions like rewrite, paraphrase, and citation insertion, though the interface is described as somewhat clunky. A voice “record” feature can turn dictation into draft text, but early attempts show limitations in using spoken context (like figure descriptions) reliably.

How does Unriddle reduce the risk of unreliable answers when summarizing research?

What’s the difference between asking about one paper, a group of papers, and an entire library?

What capabilities does Unriddle offer for importing research materials?

How does Unriddle support academic writing, and what friction points were noted?

What does the voice recording feature do well, and where does it break down?

Review Questions

- What mechanisms in Unriddle make it easier to verify AI-generated answers against original research?

- How would you structure a literature review workflow using Unriddle’s grouping feature versus querying your entire library?

- Which parts of Unriddle’s writing and recording tools appear most mature, and which parts still need improvement based on the transcript?

Key Points

1. Unriddle’s strongest feature is research Q&A that returns answers with clickable citations to the exact referenced passages.
2. Importing supports multiple formats beyond PDFs, including plain text, videos, audio, websites, images, and recordings.
3. Paper grouping enables targeted questions across a curated subset of literature rather than the whole library.
4. Library-wide queries can quickly surface relevant themes and “best” examples, with references for verification.
5. Unriddle’s writing tools include an embedded AI assistant with actions like rewrite, paraphrase, and citation insertion, but the interface is described as clunky.
6. The voice recording feature can convert dictation into draft text, yet it may struggle to use spoken context reliably (e.g., figure descriptions).
7. Chat history and some UI elements (like a graph feature) appear unclear or not fully integrated, creating friction for repeat use.