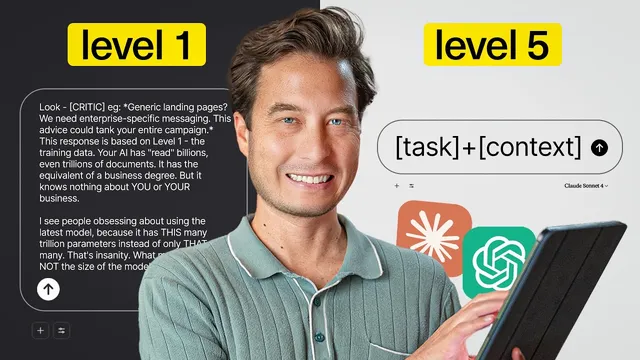

The Future of AI Prompting: 5 Context Levels

Based on Tiago Forte's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

AI output quality improves most when context is layered, not when prompts are endlessly rewritten.

Briefing

AI output quality is no longer mainly a matter of writing clever prompts. The biggest gains come from feeding an LLM the right “context” layers—information that stays attached to the model’s work—so it stops guessing and starts producing results tailored to a specific business, audience, and project.

The contrast is stark in a live example: a request for a marketing strategy for a flagship product initially returns something polished but fundamentally off-target. It invents audience segments (consultants and agencies, operations managers), assumes priorities like SEO, and overemphasizes partnerships, none of which matches the real business. The problem isn’t model intelligence; it’s that the AI is operating with only its baseline training knowledge (context level one). That baseline is broad, since LLMs are trained on enormous volumes of text, but it has no built-in awareness of the user’s company, customers, constraints, or goals.

The framework then works through the four remaining levels. Level two is the system prompt: hidden instructions set by the model provider that can’t be edited directly but can be “exploited.” The transcript uses Claude as the example: trigger words can force deeper analysis, producing a noticeably more comprehensive strategy even when the user adds only a couple of extra words. The improvement isn’t just length; the answer also becomes broader (market sizing, growth trends, competitive landscape) and more attentive to implications.

Level three is user preferences, where control finally becomes practical. By setting communication preferences once—such as “answer in bullet points,” “be conservative in thinking,” and “report your level of certainty”—the AI becomes more consistent across chats. The transcript highlights that this also changes behavior: the model becomes more conservative with predictions and explicitly flags weak spots or missing information.

Level four is project knowledge, described as the most transformative and underused layer. Within a project workspace, the AI can access specific documents and structured details: product names and abbreviations, cohort numbers, target business-size ranges (10 to 50 employees), prior participant testimonials, event tooling (Luma), sales channels, competitors, content plans (email newsletter and LinkedIn), and even messaging differentiators. Conversations inside that project then behave like collaborating with a fully onboarded team member rather than an intern.
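As a rough illustration of what “structured details” could look like, the sketch below stores a few of the project facts mentioned above and renders them as a plain-text block that could be attached to a project. The `ProjectKnowledge` class, its field names, and the `<flagship product>` placeholder are hypothetical; the video does not show any code, and real project workspaces accept uploaded files rather than Python objects.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectKnowledge:
    """Hypothetical container for level-four context; field names are illustrative."""
    product_name: str
    cohort_number: int
    company_size_range: tuple[int, int]
    event_tool: str
    content_channels: list[str] = field(default_factory=list)
    testimonials: list[str] = field(default_factory=list)

    def to_context_block(self) -> str:
        """Render the project facts as plain text an assistant can read."""
        low, high = self.company_size_range
        lines = [
            f"Product: {self.product_name}",
            f"Current cohort: {self.cohort_number}",
            f"Target company size: {low} to {high} employees",
            f"Event tool: {self.event_tool}",
            "Content channels: " + ", ".join(self.content_channels),
        ]
        # One line per testimonial; empty by default in this sketch.
        lines += [f"Testimonial: {quote}" for quote in self.testimonials]
        return "\n".join(lines)


project = ProjectKnowledge(
    product_name="<flagship product>",  # placeholder: the summary does not name the product
    cohort_number=3,
    company_size_range=(10, 50),
    event_tool="Luma",
    content_channels=["email newsletter", "LinkedIn"],
)
context_block = project.to_context_block()
print(context_block)
```

Keeping the facts in one structured place means every conversation in the project starts from the same details instead of relying on the model to guess them.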

Level five is the actual prompt—the one placed in the chat box. With the earlier context layers already loaded, the prompt can be short yet extremely effective. The transcript culminates in a request to generate a comprehensive marketing strategy for “cohort three” with an October 2025 timeline, analysis of past wins and complaints, team assignments and OKRs, and at least 10 YouTube video ideas tied to persona and benefits. The AI responds with a level of structure—case study templates, email nurture sequences, sales enablement, discovery questions, ROI frameworks, objection handling—that would take weeks to assemble manually.

The takeaway is a practical roadmap: find the system prompt triggers, add multiple user preferences, create one project and attach relevant files, then rely on short “one-sentence” prompts to outperform long prompt essays. Model size matters far less than context depth when the goal is reliable, business-specific intelligence.
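The roadmap can be sketched in code for readers who work with chat models programmatically. The example below is a minimal sketch, assuming a generic role/content message list; `build_messages` is a hypothetical helper, not any provider's API, and placing preferences and project knowledge in a "system" message is an assumption about how a product might inject them. Levels one and two are left out because the user does not control them.

```python
def build_messages(
    preferences: list[str],
    project_context: str,
    prompt: str,
) -> list[dict[str, str]]:
    """Assemble context levels three to five into a chat-style message list.

    Levels one and two (training data and the provider's system prompt)
    are outside the user's control, so they are not represented here.
    """
    preamble_parts = []
    if preferences:
        bullet_list = "\n".join(f"- {p}" for p in preferences)
        preamble_parts.append("User preferences:\n" + bullet_list)
    if project_context:
        preamble_parts.append("Project knowledge:\n" + project_context)

    messages = []
    if preamble_parts:
        # Assumption: persistent context travels in a single "system" message.
        messages.append({"role": "system", "content": "\n\n".join(preamble_parts)})
    # Level five: the short prompt typed into the chat box.
    messages.append({"role": "user", "content": prompt})
    return messages


messages = build_messages(
    preferences=[
        "Answer in bullet points",
        "Be conservative in thinking",
        "Report your level of certainty",
    ],
    project_context="Target company size: 10 to 50 employees",
    prompt="Draft a marketing strategy for cohort three.",
)
for message in messages:
    print(message["role"], "->", len(message["content"]), "characters")
```

The point of the design is that the final prompt stays one sentence long while the persistent layers carry the detail.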

Cornell Notes

The transcript argues that AI performance hinges less on prompt cleverness and more on “context layers” that persist across requests. Baseline training knowledge (level one) makes models generic and prone to inventing details. System prompts (level two) can be leveraged with trigger words to unlock deeper reasoning, while user preferences (level three) let people standardize format, conservatism, and certainty reporting. Project knowledge (level four) is presented as the biggest unlock: uploaded documents and structured project data let the AI produce strategies grounded in real products, cohorts, testimonials, channels, and competitors. With those layers in place, a short, specific prompt (level five) can generate highly actionable marketing plans, OKRs, and content ideas in minutes.

Why do “smart” prompts sometimes still produce wrong marketing strategies?

What is level two context, and how can it improve results without adding much user text?

How does level three (user preferences) change day-to-day AI behavior?

What makes level four (project knowledge) “the most transformative” layer?

How does level five prompting work once the earlier context layers are set up?

What is the practical “context mastery” roadmap given at the end?

Review Questions

- If an LLM invents audience segments and priorities, which context layer is most likely missing, and why?

- What changes in output quality when user preferences include “be conservative” and “tell me your level of certainty”?

- Why can a short level-five prompt produce a deliverable that would otherwise take weeks to assemble once project knowledge is loaded?

Key Points

1. AI output quality improves most when context is layered, not when prompts are endlessly rewritten.

2. Level one is baseline training knowledge; it lacks awareness of a specific business, so it tends to guess.

3. System prompts (level two) can’t be edited, but trigger words can unlock deeper reasoning.

4. User preferences (level three) standardize format and reasoning behavior across chats, including certainty reporting and conservatism.

5. Project knowledge (level four) grounds responses in uploaded documents and structured project data, enabling highly specific strategies.

6. With context layers in place, a concise level-five prompt can generate detailed plans, OKRs, and content ideas quickly.

7. A short roadmap (find system triggers, set preferences, create one project with files) aims to replace long prompt essays with one-sentence prompts.