The Most Important Algorithm Of All Time

Based on Veritasium's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

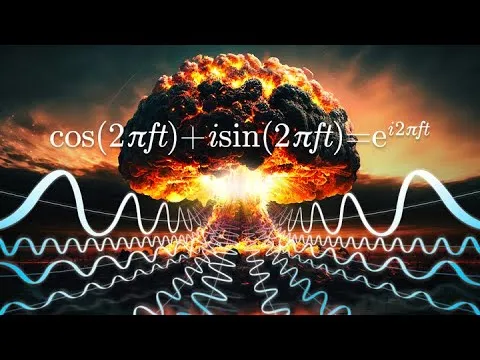

Fourier transforms convert time-domain signals into frequency spectra, which is crucial for distinguishing event types like earthquakes versus nuclear explosions.

Briefing

The Fast Fourier Transform (FFT) became a linchpin for turning messy real-world signals into frequency information—so efficiently that it helped make modern signal processing practical, from WiFi and 5G to radar and sonar. Its deeper historical twist: the same computational breakthrough was pursued partly to monitor covert underground nuclear tests, where the ability to distinguish explosions from earthquakes depended on Fourier analysis.

After World War II, nuclear weapons testing expanded rapidly, and verification became the central problem in any attempt to curb the arms race. The Baruch plan proposed international control of radioactive materials, but the Soviet Union rejected it, and negotiations over testing followed instead. Even when major powers seriously considered a comprehensive test ban, trust was fragile: each side feared the other would keep testing covertly and “leapfrog” technologically. Atmospheric and underwater tests were easier to detect—radioactive isotopes disperse through the air, and underwater blasts generate distinctive sounds picked up by hydrophones. Underground tests were different. Radiation is largely contained, on-site compliance visits were rejected as espionage, and the world needed a way to detect faint ground vibrations from afar.

That detection challenge turned into a signal-processing problem. Seismometers record ground motion, but the raw “squiggles” don’t directly reveal whether a signal came from a nuclear explosion or an earthquake, nor the explosion’s yield or burial depth. The information is there, but it’s encoded in the frequency content. Extracting that content requires a Fourier transform: decomposing a signal into sine waves at different frequencies, with amplitudes and phases that reconstruct the original waveform.
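For reference, the decomposition described above can be written down for sampled data. This is a sketch in one common convention, not notation taken from the video: the symbols $x_n$ (the $N$ recorded samples), $X_k$ (the coefficient for frequency bin $k$), and the choice of sign and $1/N$ normalization are all assumptions, and other texts place them differently.

$$
X_k = \sum_{n=0}^{N-1} x_n \, e^{-2\pi i\, k n / N}, \qquad k = 0, 1, \dots, N-1
$$

$$
x_n = \frac{1}{N} \sum_{k=0}^{N-1} X_k \, e^{2\pi i\, k n / N}
$$

Under this convention, the magnitude $|X_k|$ gives the amplitude of the sinusoid in bin $k$ (up to scaling) and the argument $\arg X_k$ gives its phase. The second equation is the reconstruction of the original waveform from those components.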

The obstacle was not the mathematics but the computation. A straightforward discrete Fourier transform requires on the order of N² operations for N samples, far too slow for the volume of seismic data needed to monitor many events across many stations. The breakthrough came in 1963, when Richard Garwin and John Tukey connected the dots at a meeting of the President’s Science Advisory Committee. Tukey had a method for computing the discrete Fourier transform far faster, reducing the workload to roughly N log₂ N operations. The FFT achieves this by repeatedly splitting the data into even- and odd-indexed samples and exploiting symmetries in the sinusoidal components, which eliminates large amounts of redundant calculation.
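The even/odd splitting can be made concrete with a short Python sketch. It is a textbook-style recursive radix-2 version, not the code Cooley wrote; the function names `naive_dft` and `fft_radix2` are hypothetical, and it assumes the input length is a power of two. The direct transform is included for comparison.

```python
import cmath


def naive_dft(x):
    """Direct DFT: each of the N outputs sums over all N inputs, so about N^2 work."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def fft_radix2(x):
    """Recursive radix-2 FFT sketch (assumption: len(x) is a power of two)."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(x[0])]
    # Split into even- and odd-indexed samples and transform each half.
    even = fft_radix2(x[0::2])
    odd = fft_radix2(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        # The same two half-size results feed bins k and k + n/2;
        # only the sign of the "twiddle" term differs between them.
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


# Eight samples of a sine wave that completes two cycles over the window.
samples = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
fast = fft_radix2(samples)
slow = naive_dft(samples)
print(max(abs(a - b) for a, b in zip(fast, slow)))  # difference between the two methods
```

Each level of recursion does work proportional to N, and halving the input gives log₂ N levels, which is where the N log₂ N total comes from. For N = 1024 that is about 10,240 basic steps instead of roughly 1,048,576.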

Garwin then pushed the algorithm toward implementation, approaching IBM researcher James Cooley to program it while keeping the nuclear-testing motivation quiet. Cooley and Tukey published the algorithm in 1965, and FFT usage spread quickly. Yet the nuclear-test-ban window had already narrowed: the partial ban in 1963 left underground testing untouched, and underground detonations accelerated for decades. The transcript also adds a historical near-miss: Carl Friedrich Gauss may have developed a discrete Fourier transform and an FFT-like method as early as 1805, but it wasn’t published in a usable form until after his death, limiting adoption.

Today, FFTs underpin compression and most of modern signal processing. By converting signals into frequency bins, they reveal that many real-world datasets concentrate energy in a small subset of components—making it possible to discard most coefficients and still reconstruct images and audio. The result is a technology that quietly powers everyday communication while also illustrating how a single computational idea can ripple across science, security, and culture.
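The idea of discarding most coefficients can be illustrated on a one-dimensional signal. This is a minimal sketch, not the pipeline of any real image or audio codec: the helper `compress_spectrum`, the test signal, and the choice to keep three coefficients are all assumptions made for illustration. It uses NumPy's `np.fft.rfft` and `np.fft.irfft`.

```python
import numpy as np


def compress_spectrum(signal, keep):
    """Keep only the `keep` largest-magnitude frequency coefficients (hypothetical helper)."""
    spectrum = np.fft.rfft(signal)
    # Indices of the strongest bins; everything else is zeroed out.
    strongest = np.argsort(np.abs(spectrum))[-keep:]
    sparse = np.zeros_like(spectrum)
    sparse[strongest] = spectrum[strongest]
    return np.fft.irfft(sparse, n=len(signal))


# Assumed test signal: three sinusoids plus a little seeded noise.
rng = np.random.default_rng(0)
t = np.arange(512) / 512
signal = (
    np.sin(2 * np.pi * 5 * t)
    + 0.5 * np.sin(2 * np.pi * 40 * t)
    + 0.25 * np.cos(2 * np.pi * 90 * t)
    + rng.normal(scale=0.01, size=t.size)
)

approx = compress_spectrum(signal, keep=3)
rel_err = np.linalg.norm(signal - approx) / np.linalg.norm(signal)
print(f"kept 3 of {signal.size // 2 + 1} coefficients, relative error {rel_err:.4f}")
```

Because almost all of this signal's energy sits in three frequency bins, the reconstruction stays close to the original even though the other coefficients are dropped. Real images and audio are only approximately this sparse, so practical codecs keep more coefficients and quantize them rather than zeroing them outright.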

Cornell Notes

The FFT (Fast Fourier Transform) makes Fourier analysis practical by cutting the computation of a discrete Fourier transform from about N² operations down to roughly N log₂ N. That speedup matters because real monitoring tasks—like distinguishing underground nuclear tests from earthquakes using seismometer data—depend on extracting frequency content from noisy time-series signals. The transcript traces how Fourier transforms were needed for seismic verification, why direct computation was too slow, and how Garwin and Tukey’s FFT breakthrough enabled feasible analysis. It also notes a historical near-miss: Gauss may have developed an FFT-like approach in 1805 but didn’t publish it in a way that spread widely. FFTs later became foundational for modern signal processing and compression, including image encoding.

Why did detecting underground nuclear tests require Fourier transforms rather than just reading seismometer waveforms directly?

What made the straightforward discrete Fourier transform computationally impractical for nuclear-test monitoring?

How does the FFT reduce the work from N² to N log₂ N?

Why was the nuclear test ban only partial in 1963, even though atmospheric and underwater tests could be detected?

What historical “missed opportunity” does the transcript add about Gauss and FFT-like ideas?

How does FFT-based compression work for images, according to the transcript?

Review Questions

- What specific information in seismometer data becomes accessible once signals are transformed into the frequency domain?

- Explain, in your own words, how even/odd splitting and reuse of partial results leads to FFT’s N log₂ N efficiency.

- Why did the inability to verify underground tests undermine the goal of a comprehensive nuclear test ban?

Key Points

1. Fourier transforms convert time-domain signals into frequency spectra, which is crucial for distinguishing event types like earthquakes versus nuclear explosions.

2. Direct discrete Fourier transforms scale as N² operations, making them too slow for large-scale seismic monitoring.

3. The FFT reduces computation to about N log₂ N by recursively splitting inputs into even and odd indices and reusing symmetric relationships among sinusoidal components.

4. Verification constraints shaped nuclear policy: atmospheric and underwater tests were detectable, but underground tests were hard to verify without reliable remote detection methods.

5. The Cooley-Tukey FFT was published in 1965, after the 1963 partial test ban had left underground testing untouched, and the arms race continued to accelerate.

6. Gauss may have developed FFT-like ideas in 1805, but delayed and unclear publication limited early adoption.

7. FFT-based compression works because real-world images concentrate most of their energy in a small subset of frequency components, so most coefficients can be discarded and the image can still be reconstructed.