The Problem With The Butterfly Effect

Based on minutephysics's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Sensitivity to initial conditions can amplify tiny differences into large outcome changes, but that doesn’t justify treating one specific event as the decisive cause.

Briefing

The “butterfly effect” gets the mechanics of chaos right: tiny differences in initial conditions can produce wildly different outcomes. But it misleads on what matters most, namely causality and predictability. In nonlinear chaotic systems, small perturbations can indeed amplify, yet the classic weather-and-butterfly framing suggests a single, meaningful trigger (“a butterfly flap causes a tornado”), which doesn’t fit how causes actually work in complex systems.

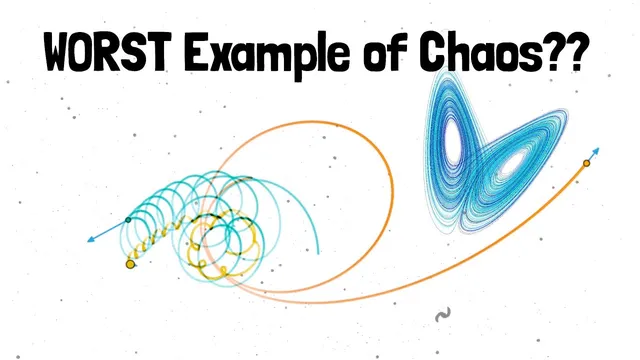

The transcript offers two main objections. First, weather is not the only place where sensitivity to initial conditions appears. Simple chaotic models, such as a chaotic pendulum or multiple planets orbiting each other, show the same dramatic divergence from small initial differences without the “busy” complexity of weather. That matters because the weather framing draws attention toward the spectacle of storms and away from what makes a system chaotic in the first place.
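To make the point about simple systems concrete, here is a minimal Python sketch. It uses the logistic map, which is not mentioned in the source; it stands in for the pendulum and planetary examples only because it is chaotic and fits in a few lines. The parameter `r = 4.0`, the starting value `0.2`, and the nudge of `1e-10` are all illustrative assumptions.

```python
def logistic_trajectory(x0, r=4.0, steps=60):
    """Iterate x -> r * x * (1 - x) and return every value visited."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def first_divergence_step(a, b, threshold=0.1):
    """Return the first step at which two trajectories differ by more than threshold."""
    for i, (p, q) in enumerate(zip(a, b)):
        if abs(p - q) > threshold:
            return i
    return None


# Two runs whose starting points differ by one part in ten billion (assumed values).
base = logistic_trajectory(0.2)
nudged = logistic_trajectory(0.2 + 1e-10)

# Gap between the two runs at every step.
gaps = [abs(p - q) for p, q in zip(base, nudged)]
step = first_divergence_step(base, nudged)

print(f"initial gap: {gaps[0]:.3e}")
print(f"largest gap: {max(gaps):.3f}")
print(f"gap first exceeds 0.1 at step: {step}")
```

The two runs are indistinguishable at first, then separate until the gap is comparable to the size of the whole interval. No weather is involved, only a one-line nonlinear rule.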

Second, the butterfly framing clashes with ordinary causality. A butterfly flap is neither necessary nor sufficient for a tornado. The argument uses two probability-based causal notions: “probability of necessity” asks whether an outcome would still happen if the putative cause didn’t occur, and “probability of sufficiency” asks whether the cause alone would reliably produce the outcome. In a deterministic chaotic setting, the same tornado could be set off by many other tiny differences: a different butterfly, a leaf falling at a slightly different time, or any other minute disturbance. That means the butterfly flap is not necessary. And it’s not sufficient either: most flaps don’t lead to a tornado, so a flap doesn’t guarantee the outcome.
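The two notions can be stated more precisely. The source names them but gives no formulas; the notation below follows the standard counterfactual definitions from the causal-inference literature and is an assumption about what is intended. Let $X$ record whether the flap occurs ($x$) or not ($x'$), and $Y$ whether the tornado occurs ($y$) or not ($y'$). $Y_{x}$ denotes the outcome that would result if the flap were made to happen, and $Y_{x'}$ the outcome if it were prevented.

```latex
\begin{align*}
\mathrm{PN} &= P\left(Y_{x'} = y' \mid X = x,\ Y = y\right) \\
\mathrm{PS} &= P\left(Y_{x} = y \mid X = x',\ Y = y'\right)
\end{align*}
```

PN is the probability that the tornado would not have occurred without the flap, given that both actually happened. PS is the probability that adding a flap would have produced a tornado in a situation where neither occurred. In these terms, the argument above is that both quantities are small for any individual flap.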

The transcript then reframes the “fine-tuning” idea. For a tornado to hinge on a particular flap, the system must be poised at a tipping point where many parameters line up just right. But many other small changes would also push the system away from that tipping point, so the butterfly flap is just one of countless microscopic differences that could have done the same.

From there, the core critique sharpens: chaos is fundamentally about unpredictability. Even when the underlying dynamics are deterministic, sensitivity to initial conditions means those conditions can never be measured accurately enough for useful long-range prediction. The butterfly effect, by emphasizing a dramatic causal story, overstates what can be inferred about predictability and underplays the central lesson of chaos.
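A standard way to quantify this, not given in the source, is the largest Lyapunov exponent $\lambda$: the average exponential rate at which nearby trajectories separate. The symbols $\delta_0$ (initial measurement error) and $\Delta$ (largest tolerable forecast error) are introduced here for illustration only.

```latex
\begin{align*}
|\delta(t)| &\approx |\delta_0|\, e^{\lambda t} \\
t_{\text{horizon}} &\approx \frac{1}{\lambda} \ln\frac{\Delta}{|\delta_0|}
\end{align*}
```

The horizon grows only logarithmically with precision. Measuring the initial state 1000 times more accurately extends the useful forecast by just $\ln(1000)/\lambda \approx 6.9/\lambda$, which is why better instruments buy so little extra predictability in a chaotic system.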

As a replacement, the transcript proposes the “too many butterflies effect.” The point isn’t that one butterfly matters; it’s that there are so many tiny, hard-to-track perturbations across a system that no single microscopic event can be treated as the decisive cause. The takeaway is less “a flap causes a storm” and more “countless small uncertainties make outcomes effectively unpredictable.”
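A toy experiment can illustrate the “too many butterflies” idea. The sketch below is an assumption-laden stand-in, not something from the source: it uses the logistic map as the chaotic system, defines a hypothetical “tornado” as the final state landing above 0.5, and treats 1000 distinct tiny nudges to the starting value as 1000 different butterflies.

```python
def final_state(x0, r=4.0, steps=60):
    """Iterate the logistic map x -> r * x * (1 - x) and return the last value."""
    x = x0
    for _ in range(steps):
        x = r * x * (1.0 - x)
    return x


def outcome(x0):
    """Toy 'tornado' event (hypothetical): final state ends in the upper half of [0, 1]."""
    return final_state(x0) > 0.5


# Outcome with no butterflies at all.
baseline = outcome(0.2)

# 1000 hypothetical butterflies, each nudging the start by a different tiny amount.
nudges = [k * 1e-12 for k in range(1, 1001)]

# Count how many individual nudges change the outcome relative to the baseline.
flips = sum(outcome(0.2 + d) != baseline for d in nudges)
flip_fraction = flips / len(nudges)

print(f"baseline outcome: {baseline}")
print(f"fraction of nudges that flip the outcome: {flip_fraction:.2f}")
```

A large share of the nudges flip the outcome and a large share do not, with nothing to distinguish one group from the other in advance. No single nudge is special: any of them could be blamed for the result, which is the sense in which none of them is the decisive cause.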

Cornell Notes

The butterfly effect gets chaos’s amplification mechanism right but distorts the causal story. Small changes in initial conditions can drive large differences in outcomes, yet a specific “butterfly flap” is neither necessary (tornadoes can occur without that flap) nor sufficient (a flap doesn’t reliably produce a tornado). The transcript argues that chaos’s real lesson is unpredictability: deterministic systems can still be practically unforecastable because initial conditions can’t be measured precisely enough. Instead of one decisive trigger, the “too many butterflies effect” emphasizes that countless microscopic perturbations compete, making outcomes hinge on many untrackable details.

Why does the transcript say the butterfly effect misrepresents causality?

How can chaos be demonstrated without weather?

What does “fine-tuning” mean in the context of the butterfly effect?

Why does determinism not guarantee predictability in chaotic systems?

What is the “too many butterflies effect,” and how does it replace the original metaphor?

Review Questions

- In the transcript’s causal framework, what distinguishes a necessary cause from a sufficient cause, and how does the butterfly flap fail both tests?

- Why does sensitivity to initial conditions undermine prediction even when the system is deterministic?

- How does the “too many butterflies effect” change the way you interpret microscopic events in a chaotic system?

Key Points

1. Sensitivity to initial conditions can amplify tiny differences into large outcome changes, but that doesn’t justify treating one specific event as the decisive cause.

2. Weather is not required to demonstrate chaos; simple nonlinear systems like a chaotic pendulum or interacting planets can show the same sensitivity.

3. A proposed cause can fail “necessity” if the outcome still occurs without it, and fail “sufficiency” if the cause alone doesn’t reliably produce the outcome.

4. In chaotic systems, many microscopic perturbations can push the system toward or away from a tipping point, making any single trigger causally fragile.

5. Chaos’s central lesson is practical unpredictability: deterministic dynamics can still be impossible to forecast due to measurement limits.

6. The “too many butterflies effect” reframes chaos as an untrackable accumulation of tiny influences rather than a single butterfly-driven storyline.