The Universe Is Racing Apart. We May Finally Know Why.

Based on PBS Space Time's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

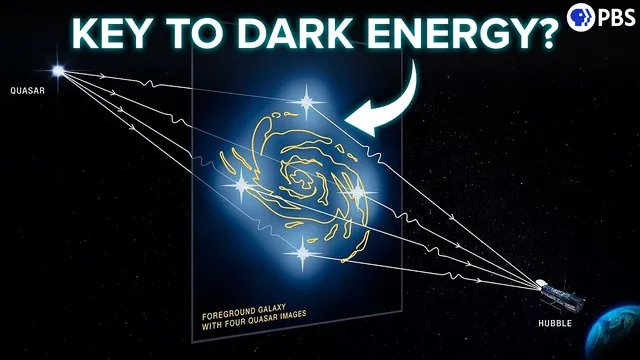

Time-delay cosmography measures the Hubble constant by timing how variable quasars (or supernovae) appear at different times in multiple strongly lensed images.

Briefing

The most promising path to resolving the “Hubble tension” (a persistent mismatch between the expansion rate inferred from the early universe and the rate measured in the late universe) may come from timing the flickering echoes of gravitationally lensed quasars and supernovae. Instead of relying on the multi-step distance ladder that leaves supernova measurements vulnerable to systematic errors, time-delay cosmography uses the fact that gravity bends light from a single source along multiple paths. Those paths have different lengths and pass through different gravitational potentials, so the same distant source is observed at slightly different times. The measured time delays tie directly to the Hubble constant, offering an independent check on whether the expansion rates inferred from the cosmic microwave background (CMB) and from Type Ia supernovae are truly inconsistent, or whether one of them is biased by its assumptions.
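The two contributions named above (path length and gravitational potential) are commonly written as a single expression. The form below is a sketch in standard lensing notation, none of which appears in the original: $\theta_i$ and $\theta_j$ are the angular positions of two images, $\beta$ is the unlensed source position, $\psi$ is the lens potential, $z_d$ is the lens redshift, and $D_d$, $D_s$, $D_{ds}$ are angular diameter distances to the lens, to the source, and between them.

```latex
\Delta t_{ij} = \frac{D_{\Delta t}}{c}
  \left[ \frac{|\theta_i - \beta|^2}{2} - \psi(\theta_i)
       - \frac{|\theta_j - \beta|^2}{2} + \psi(\theta_j) \right],
\qquad
D_{\Delta t} \equiv (1 + z_d)\,\frac{D_d\, D_s}{D_{ds}} \;\propto\; \frac{1}{H_0}
```

The squared terms are the geometric delay and the $\psi$ terms are the gravitational delay. Because every distance in $D_{\Delta t}$ scales as $1/H_0$, a measured delay combined with a lens model for the bracketed term gives $H_0$: for a fixed lens model, a longer delay implies a smaller $H_0$.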

The tension is well established: CMB data, interpreted through the standard cosmological model, imply a Hubble constant roughly 8 percent lower (about 67 km/s/Mpc) than the value derived from Type Ia supernovae (about 73 km/s/Mpc), which track the expansion history at relatively recent times. If both measurement programs are correct, the cosmological model used to connect early and late epochs may be incomplete; in particular, its usual assumption that dark energy has constant density may be wrong. But before rewriting cosmology, astronomers need independent, high-precision expansion measurements with different systematics. Time-delay cosmography is designed for that role.

Quasars are central to the approach because they vary in brightness on timescales of hours to months. When a quasar is strongly lensed, multiple images appear around a foreground galaxy. Each image shows the same quasar lightcurve shifted in time; cross-matching the patterns yields the time delay. The underlying physics can be expressed using Fermat’s principle of least time, producing a formula in which the delay is inversely proportional to the Hubble constant: for a given lens model, a longer delay implies a slower expansion rate. That structure is the attraction: the method is not built on a long chain of external distance calibrations.
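The cross-matching step can be illustrated with a toy example. The sketch below is not the procedure used by any collaboration; it assumes a synthetic random-walk lightcurve, a made-up 30-day delay, and a simple grid search that shifts one image's lightcurve and keeps the shift with the smallest mismatch. The names `estimate_delay` and `source` are hypothetical, and real analyses must also handle seasonal gaps and microlensing, which are ignored here.

```python
import numpy as np


def estimate_delay(t_a, f_a, t_b, f_b, trial_delays, min_overlap=10):
    """Grid-search the delay that best aligns lightcurve B with lightcurve A.

    For each trial delay, B's epochs are shifted back in time, A is linearly
    interpolated onto those epochs, and the mean squared mismatch is computed
    after removing a constant offset (the images differ in magnification).
    """
    best_delay, best_cost = None, np.inf
    for delay in trial_delays:
        shifted = t_b - delay
        # Use only epochs where the shifted B curve overlaps A's time span.
        overlap = (shifted >= t_a[0]) & (shifted <= t_a[-1])
        if overlap.sum() < min_overlap:
            continue
        residual = f_b[overlap] - np.interp(shifted[overlap], t_a, f_a)
        residual = residual - residual.mean()
        cost = np.mean(residual ** 2)
        if cost < best_cost:
            best_delay, best_cost = float(delay), cost
    return best_delay


# Synthetic demonstration (all numbers are illustrative assumptions).
rng = np.random.default_rng(0)
grid = np.arange(-100.0, 601.0)                       # days
signal = np.cumsum(rng.normal(0.0, 0.02, grid.size))  # random-walk variability


def source(t):
    """Intrinsic quasar brightness (arbitrary magnitude units) at time t."""
    return np.interp(t, grid, signal)


true_delay = 30.0                     # image B lags image A by 30 days
t_a = np.arange(0.0, 500.0, 3.0)      # image A observed every 3 days
t_b = t_a + 1.0                       # image B observed on offset nights
f_a = source(t_a) + rng.normal(0.0, 0.005, t_a.size)
f_b = source(t_b - true_delay) + 0.7 + rng.normal(0.0, 0.005, t_b.size)

estimated = estimate_delay(t_a, f_a, t_b, f_b, np.arange(0.0, 80.0, 0.5))
print(f"true delay = {true_delay} d, estimated = {estimated} d")
```

Subtracting the mean residual stands in for the unknown brightness offset between images; the precision of the recovered delay depends on how strongly the source varies relative to the noise and the sampling interval.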

The obstacles are practical and statistical. Strongly lensed quasars are rare because the alignment must be precise. Even when they exist, the dominant challenge is modeling the lensing galaxy’s mass distribution, including dark matter. Unseen mass along the line of sight creates degeneracies such as the mass-sheet degeneracy, and nearby structures can perturb the lensing signal. Observationally, the images are close together, often blended in ground-based data, and monitoring is interrupted by day/night cycles and seasonal gaps when the Sun blocks observations. These factors inflate uncertainties in each lens’s inferred H0.
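The mass-sheet degeneracy mentioned above can be stated compactly. The following is a sketch in standard lensing notation, which is an assumption on top of the original text: $\kappa$ is the lens's dimensionless surface mass density (convergence), $\beta$ is the source position, and $\lambda$ is an arbitrary rescaling factor.

```latex
\kappa_\lambda(\theta) = \lambda\,\kappa(\theta) + (1 - \lambda),
\qquad
\beta_\lambda = \lambda\,\beta
\quad\Longrightarrow\quad
\Delta t_\lambda = \lambda\,\Delta t,
\qquad
H_{0,\lambda} = \lambda\,H_0
```

Image positions and flux ratios are unchanged by this transformation, so imaging alone cannot determine $\lambda$, yet the inferred $H_0$ shifts in direct proportion to it. An unmodeled 5 percent sheet of mass along the line of sight would therefore bias $H_0$ by about 5 percent. Independent mass information, such as the stellar velocity dispersion of the lens galaxy, is a common way to constrain $\lambda$.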

Current results illustrate both promise and limits. The H0LiCOW collaboration reported a central value of H0 = 73.3 km/s/Mpc from six lensed quasars in 2019, while TDCOSMO later refined the analysis and added two more lenses to obtain a central value of 71.8 km/s/Mpc. On their own, these values do not decisively confirm the Hubble tension, but when combined with supernova constraints they increase the statistical significance of the discrepancy with the CMB-based value. That still leaves room for unknown systematics that could artificially raise the supernova-based expansion rate.

Supernovae also have a future in this framework. The time-delay measurement of the lensed supernova Refsdal produced an H0 around 70 with large error bars, and the lensed Type Ia event SN H0pe provided an especially valuable cross-check because it links lensing and Type Ia calibration in one system. Lensed supernovae remain far rarer than lensed quasars, however, and the key advantage of quasars is abundance: more lensed systems mean smaller statistical uncertainties.
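The statistical argument can be made concrete with a short calculation. This sketch assumes every lens gives an independent H0 estimate with the same fractional precision, in which case the combined uncertainty falls as one over the square root of the number of lenses. The 8 percent per-lens figure is a hypothetical placeholder, not a value from the source, and the function names are illustrative.

```python
import math


def combined_uncertainty(per_lens_percent, n_lenses):
    """Percent uncertainty on H0 from n independent, equally precise lenses."""
    if n_lenses < 1:
        raise ValueError("need at least one lens")
    return per_lens_percent / math.sqrt(n_lenses)


def lenses_needed(per_lens_percent, target_percent):
    """Smallest number of lenses whose combined uncertainty meets the target."""
    return math.ceil((per_lens_percent / target_percent) ** 2)


per_lens = 8.0  # hypothetical per-lens precision, in percent
for n in (6, 8, 64, 1000):
    print(f"{n:5d} lenses -> {combined_uncertainty(per_lens, n):.2f}% on H0")

print("lenses needed for 1%:", lenses_needed(per_lens, 1.0))
```

This scaling applies only to errors that are independent from lens to lens. A systematic shared by every lens, such as a common mass-modeling assumption, does not average down as the sample grows.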

That scaling is about to accelerate. Beginning in early 2026, the Vera Rubin Observatory’s Legacy Survey of Space and Time (LSST), which will image the southern sky every few days for 10 years, is expected to find thousands of lensed quasars and hundreds of lensed supernovae, with estimates suggesting H0 uncertainties could drop to the percent level or better. If that happens and the time-delay value stays high, the Hubble tension would become too statistically significant to dismiss. Beyond H0, the same datasets could track how dark energy evolves over cosmic time. Recent DESI results already hint that dark energy has weakened with time, and time-delay cosmography may become a crucial tool for testing whether that trend reflects real physics rather than measurement artifacts.

Cornell Notes

Time-delay cosmography measures the expansion rate by timing how long the same variable source takes to reach us along different gravitationally lensed paths. For strongly lensed quasars, brightness flickers on hours-to-months timescales, letting astronomers align multiple lightcurves and extract the time delay. The resulting time-delay formula makes the delay inversely proportional to the Hubble constant, providing an independent route to H0 that avoids the supernova distance-calibration chain. The method’s bottlenecks are rare lenses, difficult lens-galaxy mass modeling (including dark matter and line-of-sight effects like the mass-sheet degeneracy), and observational gaps that broaden uncertainties. Upcoming surveys such as the Vera Rubin Observatory’s LSST should multiply the number of usable lenses, shrinking error bars enough to sharpen the Hubble tension and probe dark energy’s evolution.

How does gravitational lensing turn quasar variability into a measurement of cosmic expansion?

Why does the time-delay method avoid some of the vulnerabilities of Type Ia supernova cosmology?

What makes lens modeling the hardest part of time-delay cosmography?

Why do observational constraints inflate uncertainties in measured time delays?

What do current quasar time-delay results imply about the Hubble tension?

How might LSST change the prospects for both H0 and dark energy tests?

Review Questions

- What physical ingredients (geometric and gravitational) contribute to the time delay between lensed images, and how does that connect to H0?

- Which specific degeneracy in lens modeling can be caused by unseen mass along the line of sight, and how can stellar kinematics help?

- Why does increasing the number of lensed quasars and lensed supernovae reduce uncertainty, and what survey is expected to drive that increase?

Key Points

1. Time-delay cosmography measures the Hubble constant by timing how variable quasars (or supernovae) appear at different times in multiple strongly lensed images.

2. The time delay arises from both different path lengths and Shapiro (gravitational) time delay as light traverses the lens’s gravitational potential.

3. The method’s H0 dependence comes directly from the lensing time-delay equation, reducing reliance on the supernova distance-calibration chain.

4. The dominant limitations are the rarity of suitable strong lenses, uncertain lens-galaxy mass models (especially dark matter and line-of-sight effects), and observational gaps that blur lightcurve alignment.

5. Current quasar time-delay measurements (H0LiCOW and TDCOSMO) lean toward the higher late-universe H0 but still have large error bars, so they don’t yet fully settle the Hubble tension alone.

6. Combining time-delay results with supernova constraints increases the statistical weight of the discrepancy, while leaving open the possibility of hidden systematics in supernova analyses.

7. LSST and complementary surveys are expected to multiply the number of lensed systems, potentially shrinking H0 uncertainties to the percent level and enabling tests of dark energy evolution over cosmic time.