Thematic Analysis with ChatGPT - full analysis from Codes to Themes

Based on Qualitative Researcher Dr Kriukow's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

The core takeaway is a fully auditable thematic analysis workflow that uses ChatGPT (or a similar language model) from first-pass coding all the way to final themes—without skipping the evidence trail needed for academic credibility. The process is designed to keep the model from “jumping ahead” into theme-making too early, while still producing structured outputs that can be defended in a methodology chapter.

The workflow starts with five interview transcripts about training language models and cultural bias in AI. To preserve objectivity, the analysis withholds the research questions during the initial coding stage. Instead, the transcripts are pasted one by one (rather than uploaded in bulk) to reduce errors and to keep the model’s context stable. After each transcript, ChatGPT generates initial or “open” codes paired with coded extracts—creating the first layer of audit trail. The analyst resists the model’s suggestions to merge or cluster codes at this point, because the goal is to capture a comprehensive list of initial codes before any higher-level interpretation.
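The stage-one setup can be sketched in code. This is a minimal illustration, assuming a hypothetical helper `build_open_coding_prompt` and made-up transcript snippets; the prompt wording is not taken from the video. The helper takes no research-question parameter, so the aims cannot leak into open coding, and it yields one prompt per transcript rather than one bulk request.

```python
def build_open_coding_prompt(participant_id: str, transcript: str) -> str:
    """Build a stage-one prompt for ONE transcript.

    The wording is illustrative, not the prompt used in the video.
    The study aims are deliberately not a parameter of this function.
    """
    return (
        f"Transcript for participant {participant_id}.\n"
        "Carry out initial (open) coding only.\n"
        "Return a table with two columns: code, coded extract (verbatim).\n"
        "Do not merge, cluster, or group codes, and do not propose themes.\n\n"
        f"{transcript}"
    )


# Hypothetical placeholder transcripts; real ones would be loaded from files.
transcripts = {
    "P1": "We mostly trained on English data, so the model missed local idioms.",
    "P2": "Annotators disagreed about what counted as offensive in each culture.",
}

# One prompt per transcript, to be pasted one at a time rather than in bulk.
prompts = [build_open_coding_prompt(pid, text) for pid, text in transcripts.items()]
```

Each prompt would be pasted into the chat separately, and the resulting coding table saved before moving on to the next transcript.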

Documentation is treated as part of the method, not an afterthought. After each transcript’s coding table is produced, the analyst saves separate documents containing that transcript’s initial codes, and also requests a consolidated running list of all codes at the end of stage one. This creates a clear chain from raw text to coded units, which matters when writing up decisions or responding to academic scrutiny.
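One way to keep this chain from raw text to coded units checkable is to store each coded extract as a small record. The sketch below is an assumption about how an analyst might do that, not something shown in the video: `CodedExtract`, `consolidated_codes`, and `unverifiable_extracts` are hypothetical names, and the data is invented. The verbatim check is an extra safeguard for catching extracts the model paraphrased or invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedExtract:
    participant: str  # e.g. "P1"
    code: str         # initial code name, exactly as produced in stage one
    extract: str      # text copied from the transcript


def consolidated_codes(rows):
    """Running list of all distinct codes, in order of first appearance."""
    seen = []
    for row in rows:
        if row.code not in seen:
            seen.append(row.code)
    return seen


def unverifiable_extracts(rows, transcripts):
    """Rows whose extract is not found verbatim in that participant's transcript."""
    return [r for r in rows if r.extract not in transcripts.get(r.participant, "")]


# Hypothetical data for illustration only.
transcripts = {
    "P1": "We mostly trained on English data, so the model missed local idioms.",
    "P2": "Annotators disagreed about what counted as offensive.",
}
rows = [
    CodedExtract("P1", "English-dominant training data", "We mostly trained on English data"),
    CodedExtract("P1", "Missed local idioms", "the model missed local idioms"),
    CodedExtract("P2", "Annotator disagreement", "Annotators disagreed about what counted as offensive"),
    # This extract does not appear in P2's transcript, so the check flags it.
    CodedExtract("P2", "English-dominant training data", "English was the default"),
]

print(consolidated_codes(rows))
print([r.extract for r in unverifiable_extracts(rows, transcripts)])
```

Any row returned by `unverifiable_extracts` would need to be checked by hand against the transcript before it enters the audit trail.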

Stage two shifts into focus coding (which the video also refers to as axial coding). Here, codes are grouped into descriptive categories without changing their meaning or wording. The analyst emphasizes traceability: code names and phrasing should remain faithful to the original coding so that later theme claims can be traced back to specific extracts.
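The "no change in wording" rule can be checked mechanically once the model returns its categories. The following is a rough sketch under stated assumptions: `check_grouping` is a hypothetical helper, and the codes and category names are invented. It compares the stage-two grouping against the stage-one code list and reports anything that was dropped, reworded, or placed twice.

```python
from collections import Counter


def check_grouping(initial_codes, grouping):
    """Compare stage-two categories against the stage-one code list.

    grouping maps a category name to the list of code names placed in it.
    """
    placed = [code for codes in grouping.values() for code in codes]
    counts = Counter(placed)
    return {
        # stage-one codes that no category contains
        "missing": [c for c in initial_codes if c not in counts],
        # names that match no stage-one code (possibly reworded or merged)
        "unknown": [c for c in counts if c not in initial_codes],
        # codes placed in more than one category, flagged for review
        "duplicated": [c for c, n in counts.items() if n > 1],
    }


# Hypothetical stage-one codes and a stage-two grouping returned by the model.
initial_codes = [
    "English-dominant training data",
    "Missed local idioms",
    "Annotator disagreement",
]
grouping = {
    "Data sources": ["English-dominant training data"],
    # "Local idioms missed" is a reworded code, so it should be flagged.
    "Cultural nuance": ["Local idioms missed", "Annotator disagreement"],
}

report = check_grouping(initial_codes, grouping)
print(report)
```

An empty report would suggest that every initial code survived stage two with its original wording; any non-empty entry is a prompt to go back to the model or fix the grouping by hand.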

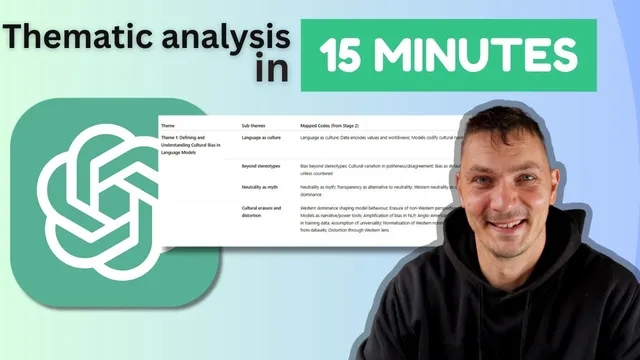

Only after the focus-coded structure is established does the process introduce the research questions. With the aims now provided, ChatGPT generates a thematic framework that maps themes and subthemes to the research questions. At this stage, merging codes, combining categories, and renaming are considered acceptable because the analysis is moving toward the final interpretive layer.

The final stages focus on making the findings usable and defensible. The analyst prefers tables over visual maps for clarity and requests multiple publication-style outputs: a table of themes and subthemes, a mapping that links stage-two codes to the thematic framework, and, crucially, tables that show which participants discussed each subtheme. To strengthen the evidence base, ChatGPT is also asked to produce a theme-by-theme list of quotes supporting each subtheme, enabling direct back-referencing to the original transcripts.
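The participant and quote tables can also be rebuilt independently from the coded extracts, which gives a cross-check on what the model reports. This is a minimal sketch, assuming coded extracts are kept as `(participant, code, extract)` tuples and that a subtheme-to-codes mapping is available; all names and data below are hypothetical.

```python
def participants_by_subtheme(rows, subtheme_codes):
    """Map each subtheme to the sorted participants with an extract coded under it."""
    return {
        subtheme: sorted({p for p, code, _ in rows if code in codes})
        for subtheme, codes in subtheme_codes.items()
    }


def quotes_by_subtheme(rows, subtheme_codes):
    """Map each subtheme to its supporting (participant, extract) pairs."""
    return {
        subtheme: [(p, extract) for p, code, extract in rows if code in codes]
        for subtheme, codes in subtheme_codes.items()
    }


def to_markdown(table, participants):
    """Render the attribution table with one column per participant."""
    lines = [
        "| " + " | ".join(["Subtheme"] + participants) + " |",
        "| " + " | ".join(["---"] * (len(participants) + 1)) + " |",
    ]
    for subtheme, who in table.items():
        cells = ["x" if p in who else "" for p in participants]
        lines.append("| " + " | ".join([subtheme] + cells) + " |")
    return "\n".join(lines)


# Hypothetical coded extracts and subtheme mapping.
rows = [
    ("P1", "English-dominant training data", "We mostly trained on English data"),
    ("P1", "Missed local idioms", "the model missed local idioms"),
    ("P2", "Annotator disagreement", "Annotators disagreed about what counted as offensive"),
    ("P2", "English-dominant training data", "Most of our corpus was English"),
]
subtheme_codes = {
    "Skewed data sources": ["English-dominant training data"],
    "Lost cultural nuance": ["Missed local idioms"],
    "Labeling disputes": ["Annotator disagreement"],
}

attribution = participants_by_subtheme(rows, subtheme_codes)
quotes = quotes_by_subtheme(rows, subtheme_codes)
print(to_markdown(attribution, ["P1", "P2"]))
```

If the table built this way disagrees with the one ChatGPT produced, the discrepancy points to a specific subtheme and participant to recheck in the transcripts.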

Overall, the method turns language-model assistance into a structured, stepwise qualitative analysis pipeline: open coding with withheld aims, focus coding with preserved code wording, then theme development with research questions—backed by an audit trail of codes, participant attribution, and verbatim quotes.

Cornell Notes

A structured thematic analysis workflow uses ChatGPT from open coding to final themes while preserving an academic audit trail. The process begins by pasting interview transcripts one at a time and withholding research questions so the model doesn’t create themes prematurely. Stage one produces initial codes with coded extracts, saved separately per participant and also as a consolidated code list. Stage two groups those initial codes into focus (axial) codes without changing code wording, protecting traceability. After research questions are introduced, ChatGPT generates themes and subthemes, then outputs tables that map codes to themes, attribute subthemes to participants, and list supporting quotes for each subtheme—making the findings defensible in writing.

Why are research questions withheld during the first coding stage, and what does that protect against?

What traceability practices are used to build an audit trail from transcript to theme?

How does the workflow handle the difference between open coding and focus (axial) coding?

When are research questions introduced, and what changes once they are?

What outputs are requested to make findings publication-ready and defensible?

Why paste transcripts one by one instead of uploading all at once?

Review Questions

- How does withholding research questions during open coding influence the credibility of the resulting themes?

- What specific traceability artifacts (files, lists, tables) are created at each stage, and how do they support an audit trail?

- Why is preserving code wording emphasized during focus coding, and what problem could arise if code names were changed?

Key Points

1. Start with open coding while withholding research questions to avoid premature theme formation.

2. Paste transcripts one at a time to reduce model shortcuts and maintain coding detail.

3. Generate initial codes with coded extracts, then save per-participant code documents to preserve an audit trail.

4. During focus (axial) coding, group codes without changing their wording or meaning to protect traceability.

5. Introduce research questions only after open and focus coding, then build a thematic framework that maps themes to those questions.

6. Use table-based outputs that include participant attribution and verbatim quotes for each subtheme to make findings defensible in writing.