They cut Node.js Memory in half 👀

Based on Theo - t3․gg's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

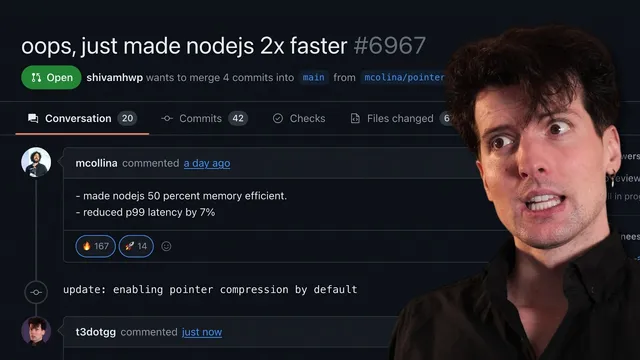

V8 pointer compression can cut Node.js heap memory by about 50% by storing 32-bit offsets instead of 64-bit pointers inside the V8 heap.

Briefing

Node.js can cut heap memory use by roughly half without changing application code by running on a build with V8’s pointer compression enabled, which turns 64-bit pointers into 32-bit offsets inside V8’s “memory cage.” The saving matters because memory, not CPU, increasingly limits how many Node instances fit on a server, how densely multi-tenant SaaS platforms can pack workloads, and whether edge deployments can run at all under tight RAM caps.

Pointer compression works by storing smaller references in the JavaScript heap: instead of 64-bit addresses, V8 keeps 32-bit offsets relative to a fixed base address. When the engine reads a pointer, it reconstructs the full address by adding the base; when writing, it compresses by subtracting the base. That halves pointer size (8 bytes to 4 bytes), and because the structures that dominate a typical V8 heap (objects, arrays, closures, maps, and sets) consist largely of internal pointers, the heap can shrink by about 50% for the same logical workload. The trade-off is CPU work: each heap access needs an extra add or subtract, performed in V8’s native C++ code rather than in JavaScript. That cost is small enough that real-world benchmarks show only modest latency changes.
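The read and write steps described above can be modeled in a few lines. This is a toy sketch, not V8’s implementation: the names `CAGE_BASE`, `compress`, and `decompress` are hypothetical, the base address is made up, and addresses are modeled as `bigint` only because JavaScript numbers cannot hold every 64-bit integer exactly.

```typescript
// Toy model of pointer compression (illustrative only, not V8 code).
const CAGE_SIZE = 1n << 32n;        // 4 GB of addressable space per cage
const CAGE_BASE = 0x7f0000000000n;  // made-up, 4 GB-aligned base address

// Write path: store only the 32-bit offset from the cage base.
function compress(fullAddress: bigint): number {
  const offset = fullAddress - CAGE_BASE;
  if (offset < 0n || offset >= CAGE_SIZE) {
    throw new RangeError("address lies outside the 4 GB cage");
  }
  return Number(offset); // always fits in 32 bits after the range check
}

// Read path: rebuild the full address by adding the base back.
function decompress(offset: number): bigint {
  return CAGE_BASE + BigInt(offset);
}

const address = CAGE_BASE + 0x12345678n;
const stored = compress(address);
console.log(stored.toString(16));            // 12345678
console.log(decompress(stored) === address); // true
```

The range check in `compress` is where the 4 GB limit comes from in this model: any address further than 2^32 bytes from the base has no 32-bit representation.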

The reason Node didn’t enable pointer compression by default came down to two historical constraints. First was the “4 GB cage” limitation: earlier designs required the entire Node process, main thread plus worker threads, to share a single 4 GB memory space for compressed pointers. That clashes with Node’s worker model, where each worker thread runs its own V8 isolate with its own heap, so one shared cage capped the combined heap of every thread at 4 GB. Cloudflare and V8 contributors addressed this by moving from a single shared cage to per-isolate cages using “isolate groups,” so each V8 isolate (or thread group) can have its own 4 GB compressed-pointer space.

Second was concern about overhead from compressing and decompressing pointers on every heap access. To quantify the impact, the Node community created a custom Docker image build (“Node Caged”) with pointer compression turned on, because the feature has to be enabled when Node is built and cannot be switched on with a runtime flag. Benchmarks ran on AWS EKS with production-style workloads. Reported results: about 50% memory savings with only a 2–4% increase in average latency, and a P99 latency reduction of about 7%. The P99 result is particularly important because long-tail requests often align with garbage collection pauses.
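When reproducing this kind of comparison on your own workload, the two numbers to compute per build are the mean and the P99 of the recorded request latencies. The sketch below uses the nearest-rank percentile definition, which is one common choice and not necessarily the one used in the reported benchmarks; the function names are hypothetical.

```typescript
// Nearest-rank percentile: the smallest sample such that at least
// p percent of all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new RangeError("no samples");
  if (p <= 0 || p > 100) throw new RangeError("p must be in (0, 100]");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[rank - 1];
}

function mean(samples: number[]): number {
  if (samples.length === 0) throw new RangeError("no samples");
  return samples.reduce((sum, x) => sum + x, 0) / samples.length;
}

// Relative change in percent; a negative value means the candidate is faster.
function percentChange(baseline: number, candidate: number): number {
  return ((candidate - baseline) / baseline) * 100;
}

// Usage: feed in latencies (for example in milliseconds) from each build.
function compareBuilds(baselineMs: number[], candidateMs: number[]) {
  return {
    meanChangePct: percentChange(mean(baselineMs), mean(candidateMs)),
    p99ChangePct: percentChange(percentile(baselineMs, 99), percentile(candidateMs, 99)),
  };
}
```

Comparing the mean and P99 separately matters here because the reported results move in opposite directions: a slightly higher average alongside a lower tail.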

Garbage collection benefits from smaller objects and less heap scanning. With compressed pointers, the heap holds the same logical data in less space, so major GC has less to scan and compaction moves less data. The practical effect shows up in tail latency: shorter GC pauses reduce the chance that the slowest requests are delayed by memory cleanup.

The work also targets real deployment economics. If a Kubernetes pod drops from ~2 GB to ~1 GB, teams can run roughly twice as many pods per node (or cut node count in half) without changing code. The transcript cites an example fleet where halving nodes could save thousands of dollars per year, and potentially far more at scale.
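The density arithmetic is simple enough to check directly. In this sketch the 16 GiB node size and the 64-pod fleet are hypothetical figures chosen for illustration, and memory is treated as the only constraint; a real scheduler also accounts for CPU requests and memory reserved for the system.

```typescript
// How many pods fit on one node when memory is the only constraint.
function podsPerNode(nodeMemoryGiB: number, podMemoryGiB: number): number {
  return Math.floor(nodeMemoryGiB / podMemoryGiB);
}

// How many nodes a fleet of pods needs at a given per-pod memory size.
function nodesNeeded(totalPods: number, nodeMemoryGiB: number, podMemoryGiB: number): number {
  return Math.ceil(totalPods / podsPerNode(nodeMemoryGiB, podMemoryGiB));
}

const nodeMemoryGiB = 16; // hypothetical allocatable memory per node

console.log(podsPerNode(nodeMemoryGiB, 2));     // 8
console.log(podsPerNode(nodeMemoryGiB, 1));     // 16
console.log(nodesNeeded(64, nodeMemoryGiB, 2)); // 8
console.log(nodesNeeded(64, nodeMemoryGiB, 1)); // 4
```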

Compatibility isn’t universal. Pointer compression won’t help if a single V8 isolate needs more than 4 GB of heap. Memory allocated by native add-ons and by ArrayBuffers doesn’t count against the 4 GB cage limit, but add-ons built with the legacy NAN (Native Abstractions for Node.js) approach may break because pointer compression changes V8 internals; add-ons using Node-API are generally unaffected. The practical takeaway: teams running Dockerized Node apps can experiment by swapping in the Node Caged image, validate with realistic benchmarks, and potentially unlock higher density, cheaper infrastructure, and better tail latency, especially for memory-bound services like WebSocket-heavy systems and edge workloads with strict RAM limits.
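Before trying a compressed build, it helps to know how large the V8 heap actually gets and how much memory sits outside it. The sketch below reads those numbers with Node’s built-in `v8.getHeapStatistics()` and `process.memoryUsage()`. The `fitsInCage` helper is hypothetical, and its 50% default is only the rough saving reported above, used as an assumption for a back-of-the-envelope estimate rather than a guarantee.

```typescript
import * as v8 from "node:v8";

const FOUR_GIB = 4 * 1024 ** 3;

// Rough estimate: would a heap of this size, measured on an uncompressed
// build, fit under a 4 GB cage if compression saved `assumedSaving` of it?
function fitsInCage(peakHeapBytes: number, assumedSaving = 0.5): boolean {
  return peakHeapBytes * (1 - assumedSaving) < FOUR_GIB;
}

const heap = v8.getHeapStatistics();
const mem = process.memoryUsage();

console.log({
  heapSizeLimitBytes: heap.heap_size_limit, // configured V8 heap ceiling
  usedHeapBytes: heap.used_heap_size,       // live V8 heap right now
  // ArrayBuffer and other external memory is tracked separately from the V8 heap.
  arrayBufferBytes: mem.arrayBuffers,
  externalBytes: mem.external,
  estimatedToFit: fitsInCage(heap.used_heap_size),
});
```

Sampling these values under peak production load, not at startup, gives the figure that matters for the 4 GB limit.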

Cornell Notes

Pointer compression in V8 can cut Node.js heap memory roughly in half by storing 32-bit offsets instead of 64-bit pointers inside the V8 heap (“memory cage”). Node historically didn’t enable it because compressed pointers required a shared 4 GB cage across the whole process, which conflicts with Node’s worker/thread model, and because of concerns about CPU overhead. Cloudflare and V8 contributors added “isolate groups” so each isolate can have its own compressed-pointer cage, removing the 4 GB sharing bottleneck. To make adoption easy, the community produced “Node Caged,” a custom Docker image build with pointer compression enabled, so teams don’t have to compile Node themselves. Benchmarks on AWS EKS reported ~50% memory savings with only small average-latency changes and improved P99 latency, largely because smaller heaps reduce major garbage-collection work and pause time.

How does pointer compression reduce memory in V8, and why does it often translate to ~50% heap savings?

What blocked Node from enabling pointer compression by default, and how did “isolate groups” fix it?

Why was a custom Docker image (“Node Caged”) used instead of a simple runtime flag?

What latency impact did benchmarks report, and why does P99 often improve?

What are the key compatibility constraints and native add-on concerns?

Where do the practical benefits show up in real deployments?

Review Questions

- What exact mechanism turns 64-bit pointers into 32-bit references in V8, and what operations happen on reads vs writes?

- Why did the original 4 GB cage limitation conflict with Node’s worker/thread model, and what does “isolate groups” change?

- How do smaller heap objects influence major garbage collection, and how does that relate to P99 latency outcomes?

Key Points

1. V8 pointer compression can cut Node.js heap memory by about 50% by storing 32-bit offsets instead of 64-bit pointers inside the V8 heap.

2. Node historically avoided pointer compression due to the shared 4 GB “memory cage” across main thread and workers; isolate groups enable per-isolate cages.

3. A custom Docker image (“Node Caged”) was used because pointer compression required build-time enablement rather than a simple runtime flag.

4. Benchmarks reported ~50% memory savings with only small average-latency changes and improved P99 latency, attributed largely to reduced major GC work and shorter pauses.

5. Smaller heaps reduce garbage-collection scanning and compaction movement, which can improve long-tail request latency when GC pauses align with slow requests.

6. Pointer compression has limits: it won’t help if a V8 isolate needs more than 4 GB of heap, and legacy NAN-based native add-ons may be incompatible.

7. Memory savings can translate directly into higher Kubernetes pod density, better multi-tenant packing, and feasibility of edge deployments with strict RAM caps.