This AI System Turns Your Data Into a Publishable Paper (STRESS-FREE)

Based on Andy Stapleton's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.


Briefing

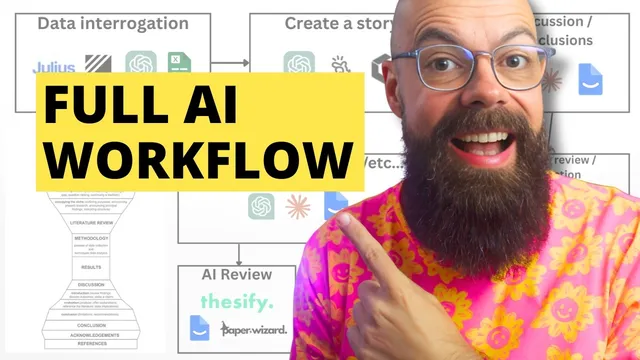

Turning raw research into a publishable paper is increasingly a step-by-step workflow in which AI helps at every stage, but the process still hinges on one human requirement: a compelling, defensible story built around the results, discussion, and take-home message.

The workflow starts with data interrogation. Researchers load raw datasets and early ideas into tools designed to ask questions of the data: looking for patterns, testing what the numbers actually support, and speeding up the shift from “I have data” to “I understand what the data is saying.” Julius AI is positioned as an “analyst in your pocket” that generates leads and possible conclusions. Data security is treated as a gating factor. Julius AI is described as having a robust data policy, while DataLine is highlighted as open source and privacy-first, with data accessed and stored on the user’s device rather than in the cloud. At the institutional level, the transcript points to the growing use of AI sandboxes, controlled environments meant to keep research data from being sent to external systems, and urges researchers to check whether their university provides one. Traditional tools such as Excel, R, and other statistical packages remain part of the interrogation layer.

Once the data narrative begins to form, the next step is story creation. Large language models such as ChatGPT and Claude are used to craft the “reason–finding–outcome” arc by feeding in figures, diagrams, and captions and asking what story the results tell. The transcript also mentions more “paper-drafting” approaches that move beyond brainstorming: Manis and Genspark are described as generating paper drafts from provided data, while Gatsby AI is presented as able to build out a full paper structure from a user’s story inputs. The emphasis is clear: these drafts are for shaping and testing how the argument reads, not for immediate submission.

The core of the manuscript, the discussion and conclusions, comes next and is framed as the most memorable part of the paper. AI tools are recommended for brainstorming a strong take-home message and for iterating on discussion and conclusion language. PaperPal is singled out as particularly useful for manuscript section templates and structured writing support, including brainstorming, rewriting, and generating key sections. The transcript stresses a sequencing rule: don’t move on to the remaining “annoying” sections (abstract, methodology, acknowledgements, keywords, lay summaries) until the discussion and conclusion are solid, because without a clear narrative the rest becomes frustrating to write.

For the literature review and/or introduction, the workflow leans on tools that help identify field consensus and synthesize background from both external sources and the researcher’s own references. SciSpace is recommended for consensus-building, while NotebookLM is suggested for interrogating a researcher’s reference library and generating background framing aligned with the paper’s discussion and conclusions. Other tools, such as Elicit and AnswerThis, are mentioned as additional options.

Finally, the manuscript enters quality control and revision. Thesisify is recommended for section-by-section feedback, including whether claims are backed by data and where weaknesses or gaps appear. PaperPal’s plagiarism check and submission check are positioned as practical pre-submission safeguards that help catch blind spots. Despite heavy AI assistance, the transcript ends with a non-negotiable step: manual review by the author and, ideally, by supervisors and collaborators, with the author accountable for every word and for the final decision on how to weigh feedback. The result is a loop of revising sections as needed once the story is submittable, all before peer review delivers the brutal final test.

Cornell Notes

The transcript lays out an AI-assisted academic writing workflow that runs from data interrogation to final submission, but it treats one element as non-negotiable: the paper must have a compelling, logical story anchored in the discussion and conclusions. Researchers use AI tools to question raw data (e.g., Julius AI) while prioritizing privacy through options like DataLine (device-stored, no cloud) and university AI sandboxes. Story-building comes next using large language models such as ChatGPT and Claude, plus draft-generators like Manis, Genspark, and Gatsby AI for testing structure and phrasing. The process then focuses on discussion and take-home messaging (with tools like PaperPal), followed by literature review support (SciSpace, NotebookLM). Quality control uses Thesisify and PaperPal checks, but a manual read and supervisor/collaborator review remain essential before submission.

Why does the workflow insist on building the “story” before filling in the rest of the manuscript?

How does the transcript address data privacy when using AI for research writing?

What’s the difference between using ChatGPT/Claude for story brainstorming versus using tools that generate full drafts?

Which parts of a peer-reviewed paper receive the most emphasis for AI assistance?

How do Thesisify and PaperPal fit into the revision and submission stage?

What does “manual review” mean in this workflow?

Review Questions

- If discussion and conclusions aren’t compelling yet, what should a researcher do next according to the workflow’s sequencing rule?

- What privacy measures does the transcript recommend when using AI tools with research data?

- How do Thesisify’s feedback and PaperPal’s checks complement each other before journal submission?

Key Points

1. Start with data interrogation using AI-assisted questioning, but keep privacy safeguards in mind (device-stored tools, institutional policies, and AI sandboxes).

2. Build the paper’s narrative by turning figures, diagrams, and captions into a clear reason–finding–outcome story before drafting everything else.

3. Treat discussion and conclusions as the paper’s “take-home” core; don’t move on to minor sections until those are strong.

4. Use large language models for story brainstorming and draft generators (such as Manis, Genspark, and Gatsby AI) to test structure, then revise manually rather than submitting the drafts immediately.

5. Strengthen the literature review and/or introduction by synthesizing field consensus (SciSpace) and interrogating your own references (NotebookLM).

6. Run quality control with tools like Thesisify for section-level weaknesses and PaperPal for plagiarism and submission checks.

7. Finish with a mandatory manual review by the author and, ideally, supervisors and collaborators, with the primary author accountable for the final wording.