Training a Unitree G1 to Walk w/ Reinforcement Learning

Based on sentdex's video on YouTube. If you like this content, support the original creators by watching, liking and subscribing to their content.

Sim-to-real reliability improves when the actuator control law is consistent between simulation and hardware; switching from MJLab implicit PD to an explicit Python/Torch PD controller was a turning point.

Briefing

A workable path from simulation to a real Unitree G1 humanoid hinges less on fancy training tricks and more on making the simulator “match” the robot’s control loop—down to actuator control details and what the policy has (and hasn’t) experienced. After years of sim-to-real attempts, the project reaches a turning point by switching from MJLab’s default implicit PD control to an explicit PD controller implemented in Python/Torch, then validating the policy through a strict sim-to-sim pipeline before risking the real robot.

The core sim-to-real problem is distribution shift: neural policies trained in simulation can fail catastrophically when real-world physics—gravity, friction, contact dynamics—doesn’t line up. The practical response is to reduce the gap by ensuring the robot is “simulable” enough that sim-to-sim performance predicts sim-to-real behavior. The workflow starts with MJLab (chosen because others have already demonstrated sim-to-real on Unitree G1 using it), then trains a locomotion policy in a velocity-control setup. Motion imitation is mentioned as an alternative, but the focus is on velocity-based control to build toward a general-purpose gait that can steer and handle varied terrain.

Training configuration details emphasize scaling and hardware constraints: tens of thousands of parallel simulated environments (e.g., 50,000) to speed learning on GPUs like an RTX 4090, with training times ranging from about an hour for a shorter run to longer multi-hour or day-long sessions depending on terrain complexity and rollout settings. The policy is first tested in MJLab, where it shows balance but also reveals issues like jitter and “shuffle” behavior—symptoms the author links to downstream wear on joints.

The decisive step is sim-to-sim validation using the same MJLab/Isaac Lab pipeline and then the same control code path used for the live robot. A key failure mode appears when the robot falls: in training, episodes terminate before the torso reaches a “lying on the ground” state, meaning the neural network never sees that out-of-distribution condition. On the real robot, that can trigger unstable behavior. The mitigation is operational rather than learned: detect a fall/out-of-distribution condition and switch the robot into a “full damp” mode so it doesn’t lose control. This makes real-world testing safer while still allowing the policy to be evaluated on walking and uneven terrain.

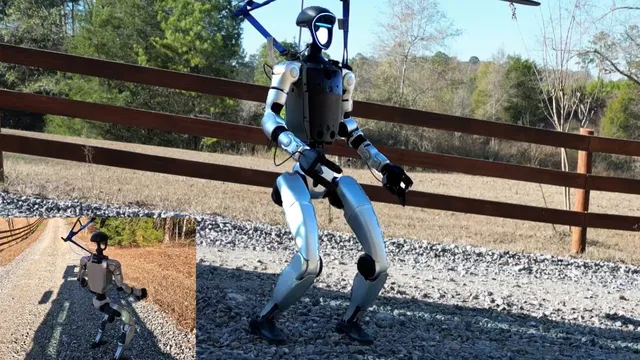

Once sim-to-sim looks credible, the policy runs on the Unitree G1 in a mobile gantry setup—ratchet-strapped to tractor pallet forks—so the robot can be tested on slopes, leaf-covered ground, and gravel without free-fall risk. The author uses a Dell GB10 compute unit (low power, small form factor) and controls the robot via keyboard/GUI, with an emergency stop strategy that hard-shuts down a proprietary board.

The field tests succeed in the sense that the robot can walk, balance, and recover in challenging conditions, but hardware fragility becomes the limiting factor. The robot’s arm repeatedly detaches, forcing repairs and later upgrades to Inspire five-finger hands. Even after mechanical setbacks, the locomotion policy continues to function, reinforcing the idea that the lower-body gait is the foundation—while arms can be refined later via freezing/smoothing strategies once stability is achieved.

Beyond the immediate demo, the project frames the next milestone as practical manipulation: getting the G1 to squat, pick up items (like kitchen objects or toys), and stand—likely requiring a locomotion policy plus a separate arm/gripper policy, coordinated by a higher-level task flow. The broader takeaway is that sim-to-real becomes tractable when control interfaces and failure handling are made consistent, and when sim-to-sim is treated as a gatekeeper rather than a formality.

Cornell Notes

The project’s breakthrough for sim-to-real on a Unitree G1 comes from tightening the control-loop match between simulation and hardware. The author finds that MJLab’s default implicit PD control (which relies on privileged/engine data and future backfilling) blocks reliable sim-to-real, so they switch to an explicit PD controller implemented in Python/Torch so the same controller runs in both sim-to-sim and sim-to-real. They also address a major out-of-distribution failure: the policy never sees “lying on the ground” states during training because episodes terminate earlier, so real falls can destabilize behavior; a fall detector triggers “full damp” instead. After sim-to-sim validation, the policy runs on the real robot in a mobile gantry setup and shows stable walking on slopes and debris, though hardware issues (arm detachments) drive repairs and hand upgrades. The next target is manipulation tasks like picking up objects from the floor using separate locomotion and gripper/arm policies.

Why does sim-to-real fail so often for deep RL locomotion policies, and what practical lever reduces that risk here?

What role does sim-to-sim play beyond “sanity checking,” and why is it treated as a gate before touching the real robot?

How is the “falling” out-of-distribution problem handled during real-world testing?

What specific controller mismatch did the author identify as a likely blocker for sim-to-real?

Why does the velocity-trained policy show “shuffle” behavior, and what observation mismatch is suspected?

What hardware and operational constraints shaped the real-world experiments?

Review Questions

- What changes were made to the PD controller to improve sim-to-real reliability, and why does the author think implicit PD was problematic?

- How does episode termination during training create an out-of-distribution condition during real falls, and what safety mechanism addresses it?

- What observation mismatch (related to velocity) does the author suspect is driving shuffle/jitter behavior, and what mitigation is proposed?

Key Points

- 1

Sim-to-real reliability improves when the actuator control law is consistent between simulation and hardware; switching from MJLab implicit PD to an explicit Python/Torch PD controller was a turning point.

- 2

Sim-to-sim validation is treated as a hard gate: policies are only deployed to the real Unitree G1 after matching pipeline/control-code behavior in simulation.

- 3

Training termination conditions matter: because the policy never sees “lying on the ground,” real falls can destabilize behavior unless a safety fallback is used.

- 4

A practical safety strategy is to detect fall/out-of-distribution states and switch the robot to “full damp” rather than letting the learned policy run uncontrolled.

- 5

Velocity-based locomotion can develop shuffle/jitter when the real robot lacks the same observation signals (notably linear velocity) used during training.

- 6

Real-world testing used a mobile gantry (tractor pallet forks) plus a hard emergency stop to reduce risk while evaluating policies on slopes and debris.

- 7

Hardware limitations (arm detachments) can become the bottleneck; locomotion can still remain functional while manipulation and arm stability are addressed later.